- Overview

- NL2Code Data Release

- Training (finetune.py)

- Inference (generate.py)

- Checkpoint Merge & Export

- Evaluation

- Useful Resources

- Retrain CodeUp on rombodawg/MegaCodeTraining112k data. (Running)

- Report comprehensive code generation performance on a variety of programming languages.

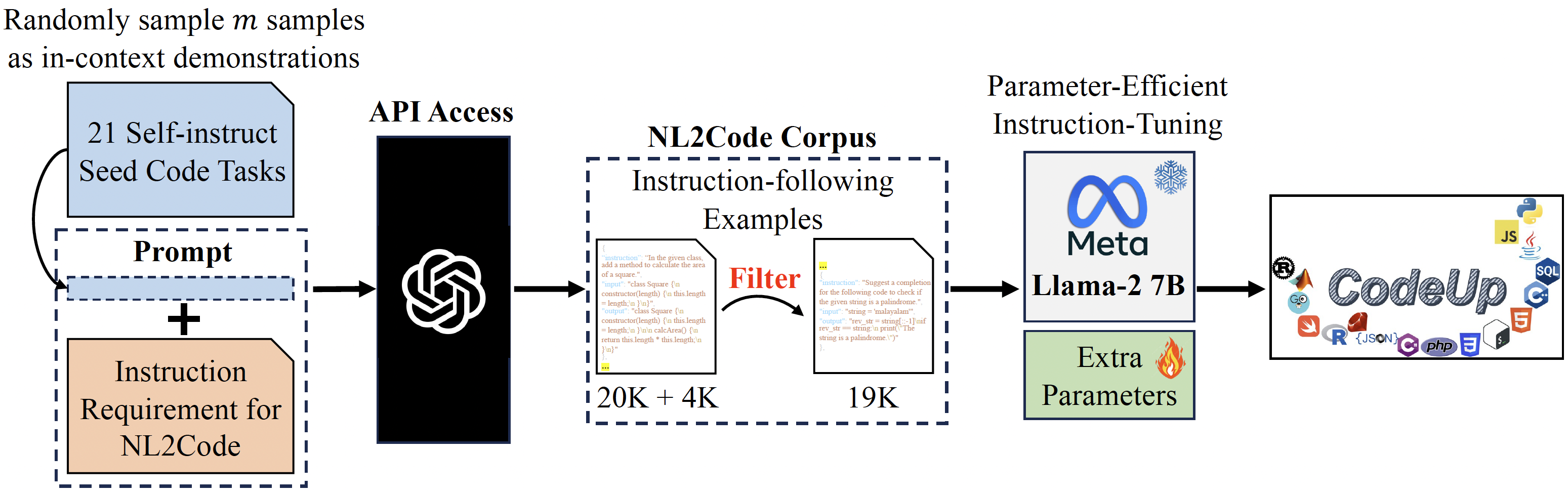

In recent years, large language models (LLMs) have shown exceptional capabilities in a wide range of applications, owing to their emergent abilities. To align them with human preferences, instruction tuning and reinforcement learning from human feedback (RLHF) have been proposed for chat-based LLMs (e.g., ChatGPT, GPT-4). However, these LLMs (except for Codex) primarily focus on the general domain and are not specifically designed for the code domain. Although Codex provides an alternative, it is a closed-source model developed by OpenAI. Hence, it is imperative to develop open-source instruction-following LLMs for the code domain. However, the large number of parameters in LLMs ($\ge$7B) and the size of their training datasets demand a vast amount of computational resources, which significantly impedes training and inference on consumer hardware.

To address these challenges, we adopt the recent foundation model Llama 2, construct high-quality instruction-following data for code generation tasks, and propose an instruction-following multilingual code generation Llama 2 model. Meanwhile, to fit an academic budget and consumer hardware (e.g., a single RTX 3090), we build on Alpaca-LoRA and equip CodeUp with parameter-efficient fine-tuning (PEFT) methods (e.g., LoRA), which enable efficient adaptation of pre-trained language models (PLMs, also known as foundation models) to various downstream applications without fine-tuning all of the model's parameters. The overall training recipe is as follows.

In summary, the repo contains:

- The 19K high-quality instruction-following data used for fine-tuning the code generation model.

- The code for selecting high-quality instruction data from Code Alpaca.

- The code for efficiently fine-tuning the model on a single RTX 3090.

- The code for running a Gradio interface for model inference.

- The code for running the model locally on a CPU device.

Recently, considerable attention has been paid to using much larger and more powerful LLMs (e.g., ChatGPT, GPT-4) to self-generate instruction-following data through careful prompt design. However, many approaches primarily focus on the general domain and lack code-specific considerations. To this end, Code Alpaca follows the Self-Instruct paper [3] and the Stanford Alpaca repo, with some code-related modifications, to construct 20K instruction-following examples (data/code_alpaca_20k.json) for code generation tasks. This JSON file follows the alpaca_data.json format: it is a list of dictionaries, and each dictionary contains the following fields:

- `instruction`: `str`, describes the task the model should perform. Each of the 20K instructions is unique.

- `input`: `str`, optional context or input for the task. For example, when the instruction is "Amend the following SQL query to select distinct elements", the input is the SQL query. Around 40% of the examples have an input.

- `output`: `str`, the answer to the instruction as generated by `text-davinci-003`.
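As a minimal sketch of working with this format, the snippet below parses a small inline sample and checks that every record carries the three string fields. The sample records and the `validate_records` helper are illustrative assumptions, not part of the repository; in practice the list would be loaded from data/code_alpaca_20k.json.

```python
import json

REQUIRED_FIELDS = ("instruction", "input", "output")


def validate_records(records):
    """Return the indices of records that lack a field or hold a non-string value."""
    bad = []
    for i, rec in enumerate(records):
        if not all(isinstance(rec.get(key), str) for key in REQUIRED_FIELDS):
            bad.append(i)
    return bad


# Illustrative sample in the same shape as the dataset (contents are made up).
sample = json.loads("""
[
  {"instruction": "Amend the following SQL query to select distinct elements",
   "input": "SELECT * FROM employees;",
   "output": "SELECT DISTINCT * FROM employees;"},
  {"instruction": "Write a function that adds two numbers.",
   "input": "",
   "output": "def add(a, b):\\n    return a + b"}
]
""")

invalid = validate_records(sample)
with_input = sum(1 for rec in sample if rec["input"])
print(f"{len(sample)} records, {len(invalid)} invalid, {with_input} with a non-empty input")
```

An empty `input` string marks an example without extra context, which is why the count above tests truthiness rather than key presence.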

However, after carefully checking the LLM-self-generated data, we observe three critical problems that may hinder LLMs' instruction learning due to ambiguous and irrelevant noise. That is:

1. When `instruction` doesn't specify the programming language (PL) of implementation, the `output` appears with diverse options, e.g., Python, C++, and JavaScript.

2. It is ambiguous to identify which programming language `output` is implemented in.

3. Both `instruction` and `output` are irrelevant to the code-specific domain.
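One way to picture a check against these three problems is sketched below. It is not the repository's preprocess.py: `toy_detect_language` is a hypothetical keyword-based stand-in for a real language detector, `named_language` and `keep_example` are illustrative helpers, and the rule that an explicitly named language must match the detected one is an assumption.

```python
import re

KNOWN_LANGUAGES = ("Python", "JavaScript", "C++")  # tiny illustrative subset


def toy_detect_language(code):
    """Hypothetical keyword-based stand-in for a real language detector."""
    if re.search(r"^\s*(def |import |from \w+ import |print\()", code, re.MULTILINE):
        return "Python"
    if re.search(r"\bfunction\s|console\.log|\bconst\s|\blet\s", code):
        return "JavaScript"
    if re.search(r"#include\s*<", code):
        return "C++"
    return None  # language unclear, or the text is not code at all


def named_language(instruction):
    """Return the first known language named in the instruction, or None."""
    for lang in KNOWN_LANGUAGES:
        pattern = rf"(?<![\w+#]){re.escape(lang)}(?![\w+#])"
        if re.search(pattern, instruction, re.IGNORECASE):
            return lang
    return None


def keep_example(example, detect=toy_detect_language, default_lang="Python"):
    """Decide whether an instruction/output pair survives the filter."""
    detected = detect(example["output"])
    if detected is None:
        return False  # problems 2 and 3: unidentifiable language or non-code output
    # Problem 1: when no language is named, fall back to the default language.
    expected = named_language(example["instruction"]) or default_lang
    return detected == expected


examples = [
    {"instruction": "Write a function to reverse a string.",
     "output": "def reverse(s):\n    return s[::-1]"},
    {"instruction": "Write a function to reverse a string.",
     "output": "function reverse(s) { return s.split('').reverse().join(''); }"},
    {"instruction": "Write a JavaScript function to reverse a string.",
     "output": "function reverse(s) { return s.split('').reverse().join(''); }"},
    {"instruction": "What is the capital of France?",
     "output": "Paris is the capital of France."},
]
kept = [ex for ex in examples if keep_example(ex)]
print(f"kept {len(kept)} of {len(examples)} examples")
```

Passing the detector as a parameter keeps the decision logic separate from whichever detection package is actually used.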

Hence, we filter out the ambiguous and irrelevant data to obtain high-quality instruction data. Specifically, to solve 1), we set Python as the default PL of implementation and use the Guesslang package to detect the PL of the source code in output. If Python is detected, the prompt is retained; otherwise, it is filtered out. For 2) and 3), we delete the prompts. In total, about 5K low-quality instruction examples are filtered out. To supplement the high-quality instruction data, we further integrate the data/new_codealpaca.json data (about 4.5K) under the same filter rules. To achieve this, please run the following command:

cd data

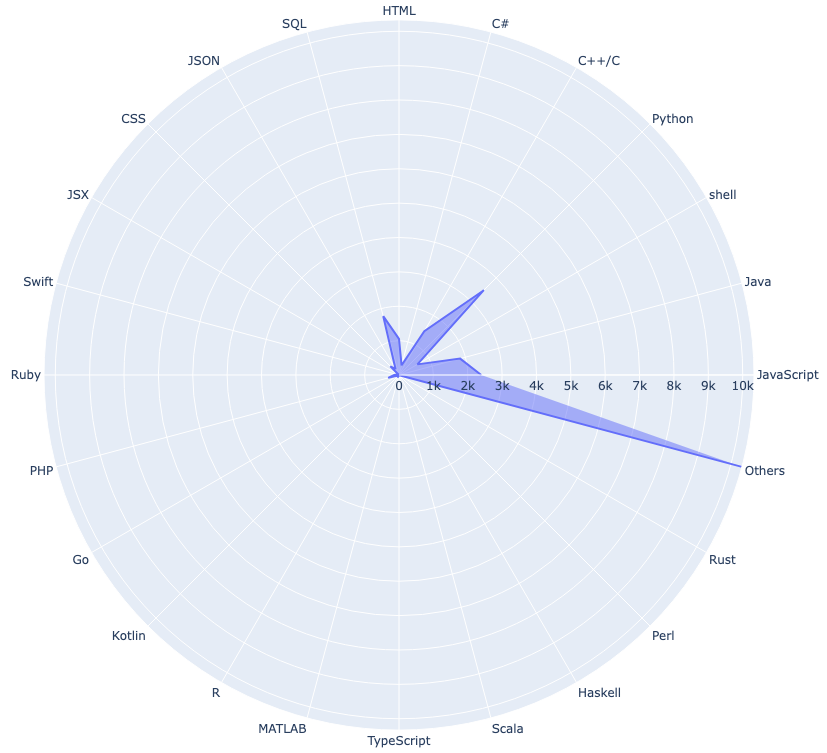

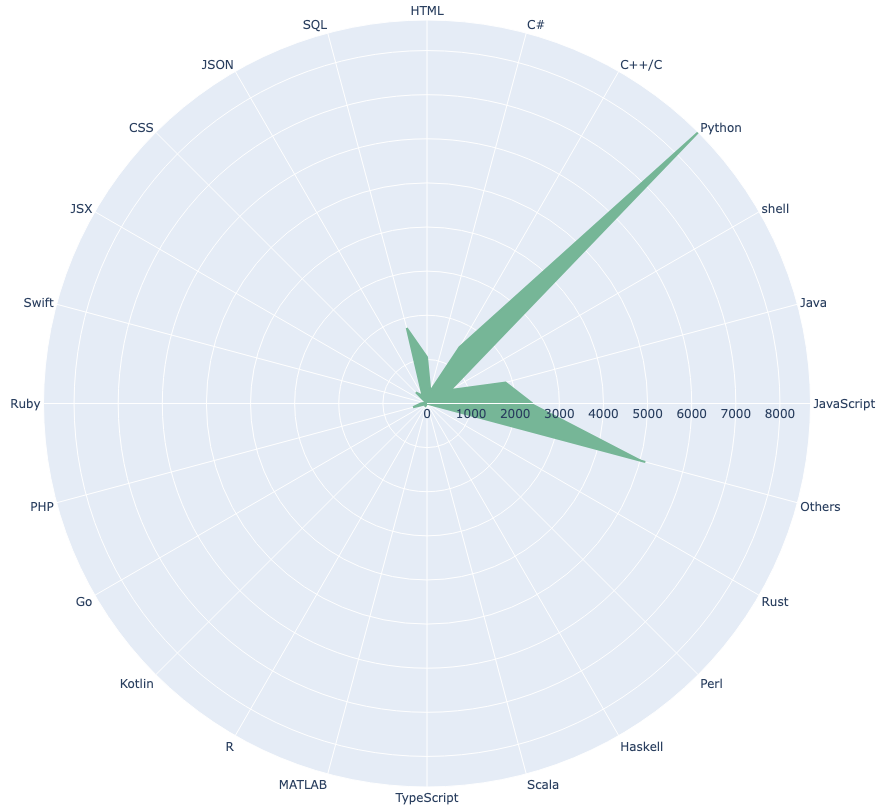

python preprocess.pyThis way, we gain the 19K high-quality instruction data of code generation. The following is the instruction number distribution of each PL with Radar visualization before and after filtering.

| Raw Data (20K + 4K) | Filtered Data (19K) |

|---|---|

|

|

{'JavaScript': 2393, 'Java': 1842, 'shell': 618, 'Python': 8693, 'C++/C': 1472, 'C#': 292, 'HTML': 1051, 'SQL': 1772, 'JSON': 206, 'CSS': 356, 'JSX': 2, 'Swift': 63, 'Ruby': 139, 'PHP': 326, 'Go': 34, 'Kotlin': 4, 'R': 89, 'MATLAB': 13, 'TypeScript': 7, 'Scala': 11, 'Haskell': 23, 'Perl': 16, 'Rust': 11}Examples in codeup_19k.json:

[

{

"instruction": "Write a function in HTML for creating a table of n rows and m columns.",

"input": "",

"output": "<table>\n <tbody>\n <% for (let i = 0; i < n; i++) { %>\n <tr>\n <% for (let j = 0; j < m; j++) { %>\n <td>Cell Content</td>\n <% } %>\n </tr>\n <% } %>\n </tbody>\n</table>"

},

...

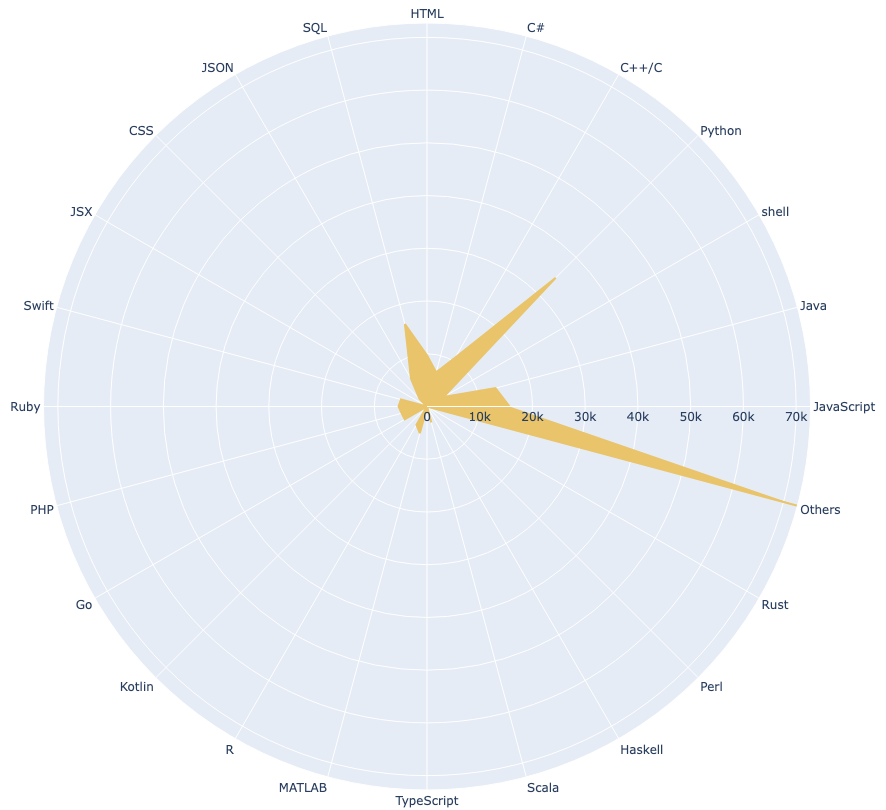

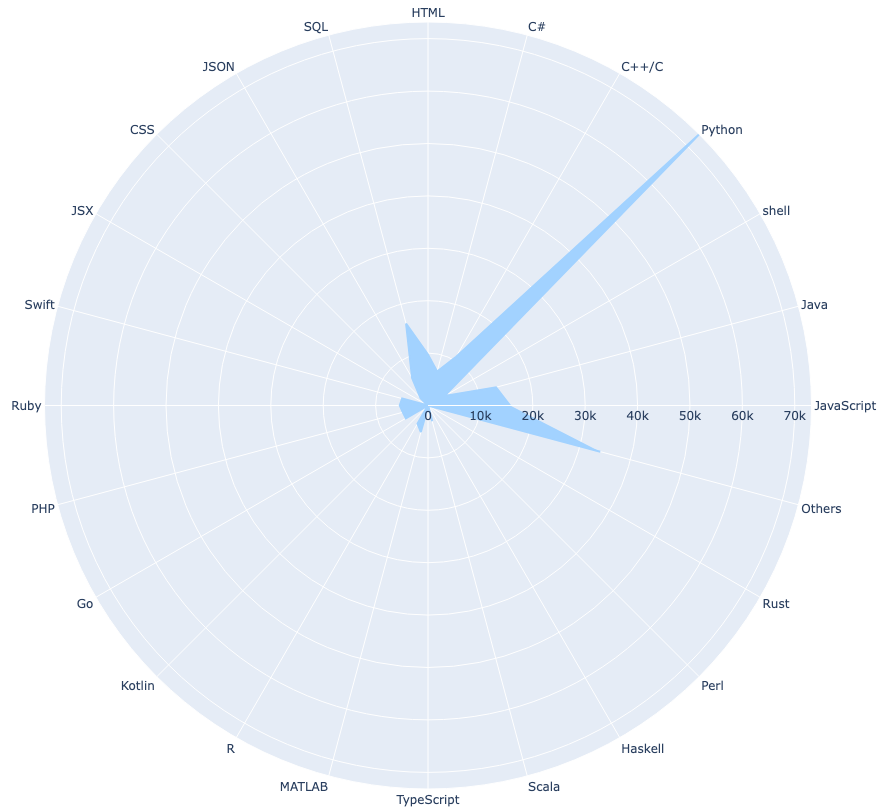

]As seen above, the instruction number of some PLs is still limited. Hence, we curate the 190K high-quality instruction data derived from rombodawg/MegaCodeTraining112k which is more complex and diverse. The following is the instruction number distribution of each PL with Radar visualization before and after filtering.

| MegaCodeTraining112k + Raw Data (200K + 24K) | Filtered Data (190K) |

|---|---|

|

|

{'JavaScript': 15676, 'Java': 13444, 'shell': 3612, 'Python': 73194, 'C++/C': 10744, 'C#': 6721, 'HTML': 9715, 'SQL': 16173, 'JSON': 6073, 'CSS': 2006, 'JSX': 9, 'Swift': 5111, 'Ruby': 5390, 'PHP': 4971, 'Go': 4879, 'Kotlin': 65, 'R': 4000, 'MATLAB': 5248, 'TypeScript': 198, 'Scala': 3130, 'Haskell': 101, 'Perl': 73, 'Rust': 129}Examples in codeup_190k.json:

[

{

"instruction": "Using a pseudo-random number generator, generate a 3 by 3 grid of non-repeating integers between 0 and 9, with each integer having an equal probability of being selected. Additionally, ensure that the sum of all integers in each row, column, and diagonal of the grid is not equal to any other row, column, or diagonal. Finally, output the resulting grid as a formatted table.",

"input": "",

"output": "Sure, here's the code to generate the grid:\n```python\nimport random\ndef generate_grid():\n grid = [[None] * 3 for _ in range(3)]\n rows = [set(range(10)) for _ in range(3)]\n cols = [set(range(10)) for _ in range(3)]\n diags = [set(range(10)) for _ in range(2)]\n \n for i in range(3):\n for j in range(3):\n valid_nums = rows[i].intersection(cols[j]).intersection(diags[0 if i == j else 1])\n num = random.choice(list(valid_nums))\n grid[i][j] = num\n rows[i].remove(num)\n cols[j].remove(num)\n diags[0 if i == j else 1].remove(num)\n \n return grid\n```\nTo output the resulting grid as a formatted table, you can use the following code:\n```python\ndef print_grid(grid):\n for row in grid:\n print(\"|\".join(str(num) for num in row))\n```\nYou can then call both functions to generate and print the grid:\n```python\ngrid = generate_grid()\nprint_grid(grid)\n```\nThis will output a 3x3 grid of non-repeating integers between 0 and 9, with each integer having an equal probability of being selected, and with each row, column, and diagonal having a unique sum."

},

...

]

Furthermore, following previous work, we use the prompt template templates/alpaca.json for fine-tuning the model. However, during inference (e.g., for the web demo), we use the user instruction with an empty input field (second option).

- for examples with a non-empty input field:

Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:

{instruction}

### Input:

{input}

### Response:

- for examples with an empty input field:

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{instruction}

### Response:
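The two templates above can be applied with a small helper, sketched here under the assumption that a blank line separates the template sections (the exact whitespace in templates/alpaca.json may differ). `build_prompt` and the constant names are hypothetical.

```python
PROMPT_INPUT = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)
PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. Write a response that "
    "appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def build_prompt(instruction, input_text="", output=""):
    """Pick the template by whether an input is present, then append the output."""
    if input_text:
        prompt = PROMPT_INPUT.format(instruction=instruction, input=input_text)
    else:
        prompt = PROMPT_NO_INPUT.format(instruction=instruction)
    return prompt + output


# Training-style prompt (output appended) and inference-style prompt (no output).
train_prompt = build_prompt(
    "Amend the following SQL query to select distinct elements",
    "SELECT * FROM employees;",
    "SELECT DISTINCT * FROM employees;",
)
infer_prompt = build_prompt("Write a function that adds two numbers.")
print(train_prompt)
print(infer_prompt)
```

At inference time the output is left empty, so the prompt ends right after the response marker and the model continues from there.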

# first option

full_prompt = template["prompt_input"].format(instruction=instruction, input=input) + data_point["output"]

# second option

full_prompt = template["prompt_no_input"].format(instruction=instruction) + data_point["output"]

To access the Llama 2 model, please follow the Download Guide; the differences between the two versions of LLaMA can be found in the Model Card.

To reproduce our fine-tuning runs for CodeUp, first, install the dependencies.

pip install -r requirements.txt

The finetune.py file contains a straightforward application of PEFT to the Llama 2 model, as well as some code related to prompt construction and tokenization.
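LoRA trains only small low-rank matrices added to selected projection layers. The sketch below gives a rough count of how many parameters that is, assuming Llama 2 7B has a hidden size of 4096 and 32 transformer layers with square attention projections, and using the `lora_r` and `lora_target_modules` values that appear in this section's commands. `lora_trainable_params` is an illustrative helper, not code from finetune.py.

```python
def lora_trainable_params(r, n_target_modules, hidden_size=4096, n_layers=32):
    """Count LoRA parameters: each adapted d x d matrix gains A (r x d) and B (d x r)."""
    per_module = r * (hidden_size + hidden_size)
    return n_layers * n_target_modules * per_module


# r=8 on [q_proj, v_proj] versus r=16 on [q_proj, k_proj, v_proj, o_proj]
small = lora_trainable_params(r=8, n_target_modules=2)
large = lora_trainable_params(r=16, n_target_modules=4)
print(f"{small:,} trainable parameters")  # 4,194,304
print(f"{large:,} trainable parameters")  # 16,777,216
```

Either count is a small fraction of a percent of the roughly 7 billion base parameters, which is what makes training on a single consumer GPU plausible.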

python finetune.py \
    --base_model 'meta-llama/Llama-2-7b-hf' \
    --data_path 'data/codeup_19k.json' \
    --output_dir './codeup-peft-llama-2/7b' \
    --batch_size 128 \
    --micro_batch_size 4 \
    --num_epochs 1 \
    --learning_rate 1e-4 \
    --cutoff_len 512 \
    --val_set_size 2000 \
    --lora_r 8 \
    --lora_alpha 16 \
    --lora_dropout 0.05 \
    --lora_target_modules '[q_proj,v_proj]' \
    --train_on_inputs \
    --group_by_length

Note that the number of gradient accumulation steps equals batch_size // micro_batch_size.
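For example, with `--batch_size 128` and `--micro_batch_size 4`, each optimizer step accumulates gradients over 32 micro-batches. A small sketch of that arithmetic, with `gradient_accumulation_steps` as an illustrative helper and single-GPU training assumed:

```python
def gradient_accumulation_steps(batch_size, micro_batch_size):
    """Number of micro-batches whose gradients are summed before one optimizer step."""
    if micro_batch_size <= 0 or batch_size < micro_batch_size:
        raise ValueError("expected 0 < micro_batch_size <= batch_size")
    return batch_size // micro_batch_size


steps = gradient_accumulation_steps(batch_size=128, micro_batch_size=4)
print(steps)  # 32
```

A smaller micro-batch lowers peak GPU memory at the cost of more forward and backward passes per optimizer step, while the effective batch size stays the same.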

However, the latest CodeUp-7B model (codeup-peft-llama-2/7b) was fine-tuned on a single NVIDIA GeForce RTX 3090 (24GB memory) on July 28 for 11 hours with the following command:

python finetune.py \

--base_model='meta-llama/Llama-2-7b-hf' \

--data_path='data/codeup_19k.json' \

--num_epochs=10 \

--cutoff_len=512 \

--group_by_length \

--output_dir='./codeup-peft-llama-2/7b' \

--lora_target_modules='[q_proj,k_proj,v_proj,o_proj]' \

--lora_r=16 \

--micro_batch_size=16or

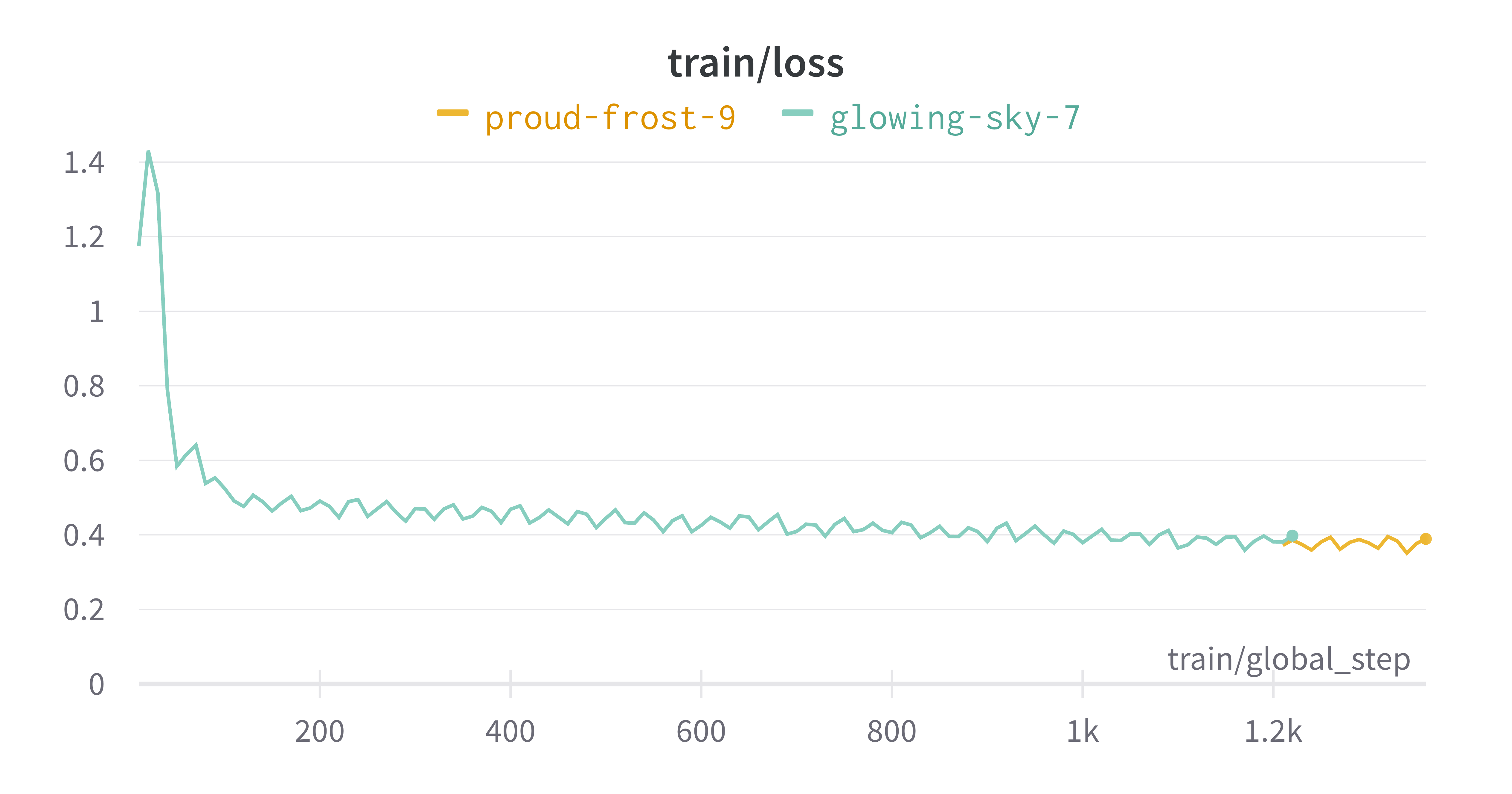

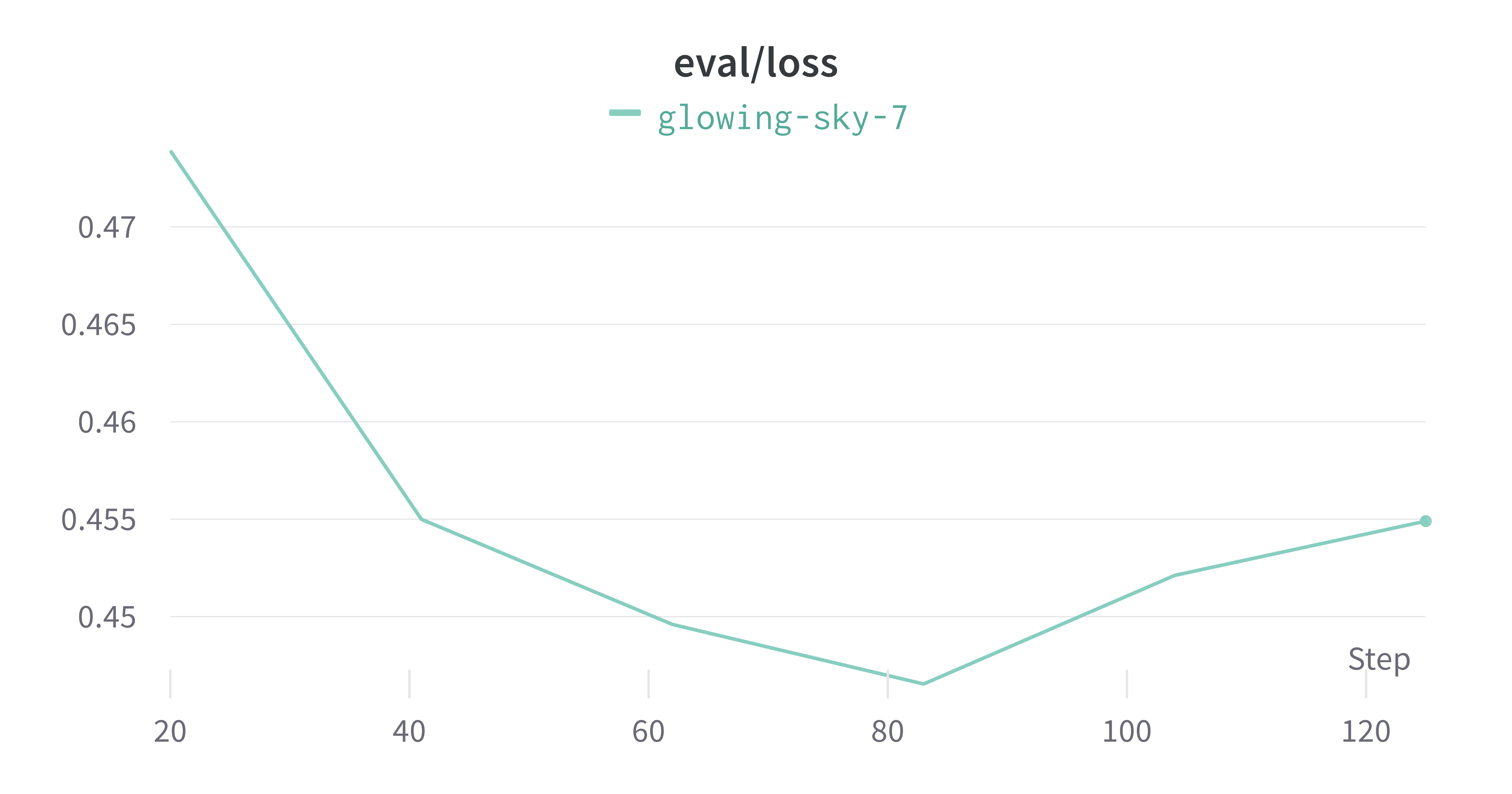

bash run_codeup_llama-2.sh # run_codeup_llama.sh for LLaMA V1| train/loss | eval/loss |

|---|---|

|

|

Note that you may encounter the following OSError:

raise EnvironmentError(

OSError: meta-llama/Llama-2-13b-chat-hf is not a local folder and is not a valid model identifier listed on 'https://huggingface.co/models'

If this is a private repository, make sure to pass a token having permission to this repo with `use_auth_token` or log in with `huggingface-cli login` and pass `use_auth_token=True`.

You can resolve this exception as follows.

Step 1:

git config --global credential.helper store

huggingface-cli login

Step 2:

Then, you can see the following prompt in your terminal:

$ huggingface-cli login

_| _| _| _| _|_|_| _|_|_| _|_|_| _| _| _|_|_| _|_|_|_| _|_| _|_|_| _|_|_|_|

_| _| _| _| _| _| _| _|_| _| _| _| _| _| _| _|

_|_|_|_| _| _| _| _|_| _| _|_| _| _| _| _| _| _|_| _|_|_| _|_|_|_| _| _|_|_|

_| _| _| _| _| _| _| _| _| _| _|_| _| _| _| _| _| _| _|

_| _| _|_| _|_|_| _|_|_| _|_|_| _| _| _|_|_| _| _| _| _|_|_| _|_|_|_|

To login, `huggingface_hub` requires a token generated from https://huggingface.co/settings/tokens .

Token:

Step 3:

Open https://huggingface.co/settings/tokens, then copy an existing User Access Token or create a new one, and paste it at the prompt. Note that, as a prerequisite, you should already have been granted access to Meta AI's Llama 2 download.

Token has not been saved to git credential helper.

Your token has been saved to /home/john/.cache/huggingface/token

Login successful

After logging in successfully, please rerun the above fine-tuning command. If you encounter another error:

AttributeError: /home/xxx/lib/python3.8/site-packages/bitsandbytes/libbitsandbytes_cpu.so: undefined symbol: cget_col_row_stats

Please run the following commands to solve it.

$ nvidia-smi # get CUDA Version of your system

$ cd /home/xxx/lib/python3.8/site-packages/bitsandbytes

$ cp libbitsandbytes_cudaxxx.so libbitsandbytes_cpu.so # replace `xxx` with your CUDA Version

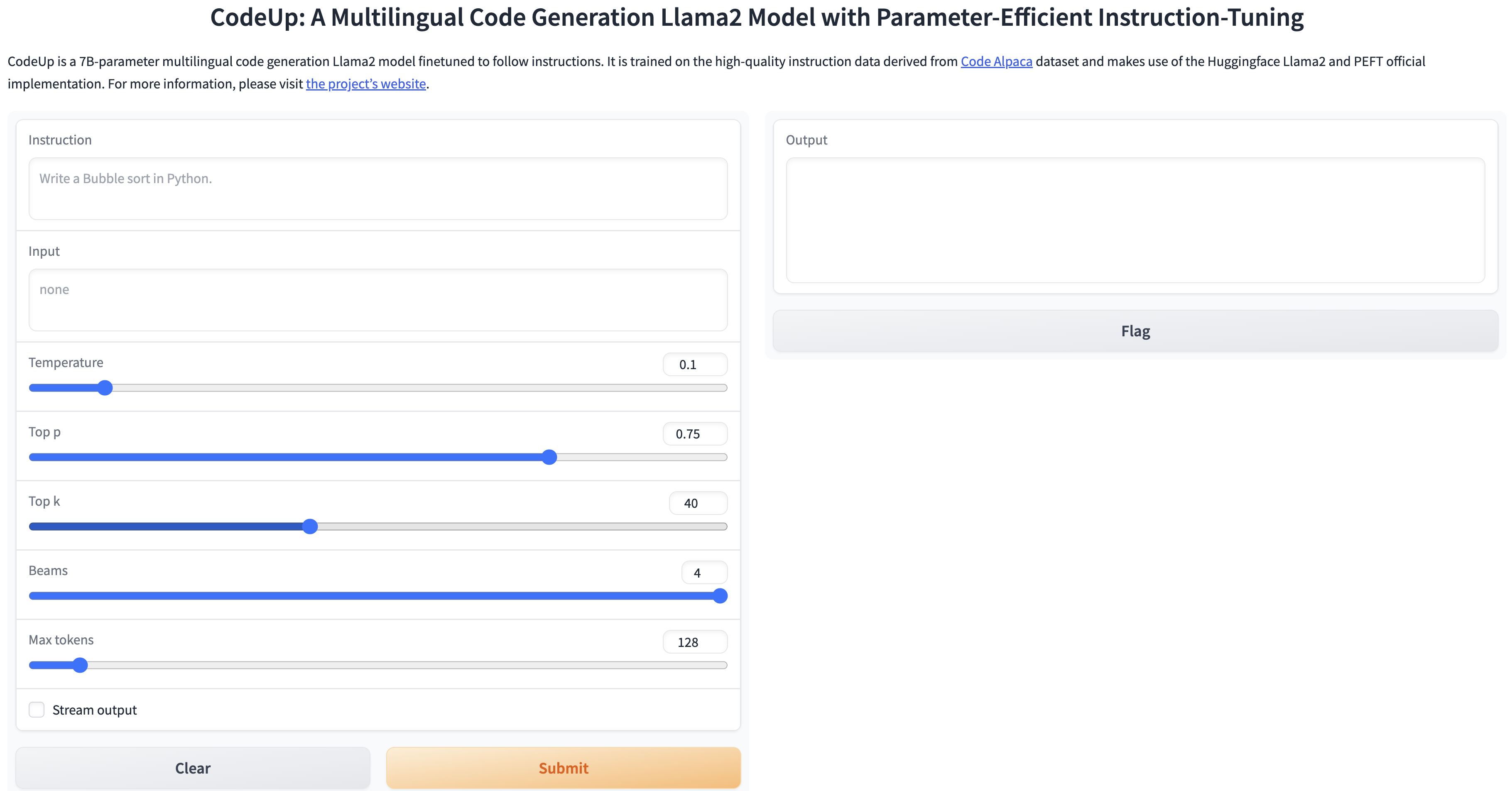

The generate.py file reads the foundation model (i.e., Llama 2 7B) from the Hugging Face model hub and the LoRA weights from codeup-peft-llama-2/7b, and runs a Gradio interface for inference on a specified input. Users should treat this as example code for using the model and modify it as needed.

python generate.py \
    --load_8bit \
    --base_model 'meta-llama/Llama-2-7b-hf' \
    --lora_weights 'codeup-peft-llama-2/7b'

Note that if you encounter ImportError: cannot import name 'NotRequired' from 'typing_extensions', you can resolve it as follows:

pip uninstall typing_extensions # upgrade 3.7.x to 4.7.x

pip install typing_extensions

The export_checkpoint.py script merges the LoRA weights back into the base model and exports the result in Hugging Face format or as PyTorch state_dicts. This helps users who want to run inference with projects such as llama.cpp or alpaca.cpp, which can run LLMs locally on a CPU. After that, you can upload your model to the Hugging Face Hub with git.
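Conceptually, merging a LoRA adapter adds the scaled low-rank product to each adapted weight matrix, W' = W + (lora_alpha / r) * B A, following the LoRA paper. The pure-Python sketch below illustrates this on a tiny 2x2 example; `matmul` and `merge_lora` are hypothetical helpers and do not reproduce the export script, which operates on the model's tensors.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_a, lora_b, lora_alpha, r):
    """Return W + (lora_alpha / r) * (B @ A) without modifying the inputs."""
    scale = lora_alpha / r
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


weight = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weight W (2 x 2)
lora_a = [[0.5, 0.0]]              # A has shape (r x d_in) with r = 1
lora_b = [[1.0], [2.0]]            # B has shape (d_out x r)
merged = merge_lora(weight, lora_a, lora_b, lora_alpha=2, r=1)
print(merged)  # [[2.0, 0.0], [2.0, 1.0]]
```

After merging, the adapter matrices are no longer needed at inference time, which is why the merged checkpoint can be consumed by tools that know nothing about LoRA.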

python export_checkpoint.py \
    --base_model='meta-llama/Llama-2-7b-hf' \
    --lora_weights='codeup-peft-llama-2/7b' \
    --lora_target_modules='[q_proj,k_proj,v_proj,o_proj]' \
    --export_dir='export_checkpoint/7b' \
    --checkpoint_type='hf' # set to 'pytorch' to save as PyTorch state_dicts

Note that if you encounter the following error when uploading large files with git, please make sure to use Git LFS. Refer to "Uploading files larger than 5GB to model hub" and "git: 'lfs' is not a git command unclear".

error: RPC failed; HTTP 408 curl 22 The requested URL returned error: 408

fatal: the remote end hung up unexpectedly

Writing objects: 100% (54/54), 9.66 GiB | 7.72 MiB/s, done.

Total 54 (delta 0), reused 0 (delta 0)

fatal: the remote end hung up unexpectedly

Everything up-to-date

sudo apt-get install git-lfs

git lfs install

huggingface-cli lfs-enable-largefiles .

git lfs track "*.png"

git lfs track "*.jpg"

git add .gitattributes

git add .

git commit -m "codeup-llama-2-7b-hf"

git push

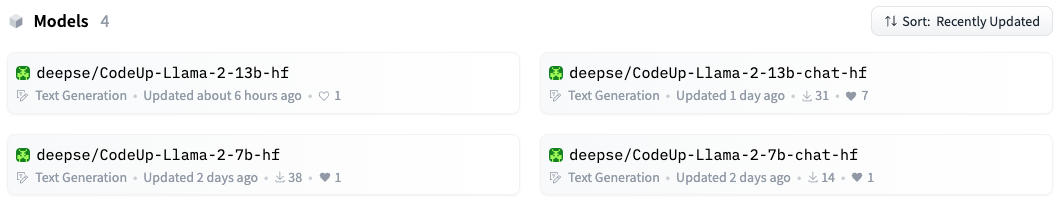

So far, we have contributed CodeUp-Llama-2-7b-hf, CodeUp-Llama-2-7b-chat-hf, CodeUp-Llama-2-13b-hf, and CodeUp-Llama-2-13b-chat-hf to the Hugging Face Hub, using Llama-2-7b, Llama-2-7b-chat, Llama-2-13b, and Llama-2-13b-chat as the respective foundation models. We use the Llama-2-xx-chat models, which have been trained with instruction tuning (over 100K) and RLHF (over 1M), to further enhance instruction understanding, given the limited size and diversity of our codeup_19k.json.

In summary, the individual LoRA weights can be found in codeup-peft-llama-2/7b, codeup-peft-llama-2/7b-chat, codeup-peft-llama-2/13b, and codeup-peft-llama-2/13b-chat, while the merged CodeUp weights (Llama 2 + LoRA weights) have been uploaded to the Hugging Face Hub. Note that if you follow the steps in Inference (generate.py), inference can be run on a single RTX 3090 24GB. Otherwise, when you use the merged weights from the Hugging Face Hub, you need the GPU memory normally required by Llama 2.
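A back-of-the-envelope estimate shows why the numeric precision matters here. The sketch below counts only the memory for the weights, assuming a nominal 7 billion parameters; activations, the KV cache, and framework overhead are ignored, so real usage is higher. `weight_memory_gib` is an illustrative helper.

```python
def weight_memory_gib(n_params, bytes_per_param):
    """Memory needed to hold the weights alone, in GiB."""
    return n_params * bytes_per_param / 1024**3


N_PARAMS_7B = 7_000_000_000  # nominal parameter count (assumption)

for label, nbytes in (("fp32", 4), ("fp16", 2), ("int8", 1)):
    print(f"{label}: {weight_memory_gib(N_PARAMS_7B, nbytes):.1f} GiB")
```

Under these assumptions, 8-bit loading (as with `--load_8bit` in the generate.py command) needs roughly a quarter of the full-precision weight memory, and full-precision weights alone would not fit in 24GB.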

We use the open-source framework Code Generation LM Evaluation Harness, developed by the BigCode team, to evaluate CodeUp's performance.

git clone https://github.com/bigcode-project/bigcode-evaluation-harness.git

cd bigcode-evaluation-harness

pip install -e .

Also make sure you have `git-lfs` installed (see the guide above) and are logged in to the Hub:

huggingface-cli login

You can use this evaluation framework to generate text solutions to code benchmarks with any autoregressive model available on the Hugging Face Hub, to evaluate (and execute) the solutions, or to do both. While it is better to use GPUs for generation, evaluation only requires CPUs, so it may be beneficial to separate these two steps (i.e., --generation_only or --load_generations_path). By default, both generation and evaluation are performed.

For more details on how to evaluate on the various tasks (i.e., benchmark), please refer to the documentation in bigcode-evaluation-harness/docs/README.md.

Below is an example of using CodeUp to generate and evaluate on the multiple-py task (i.e., benchmark), one of the multiple-* tasks in which the HumanEval benchmark is translated into 18 programming languages.

accelerate launch main.py \
    --model deepse/CodeUp-Llama-2-7b-hf \
    --tasks multiple-py \
    --max_length_generation 650 \
    --temperature 0.8 \
    --do_sample True \
    --n_samples 200 \
    --batch_size 200 \
    --allow_code_execution \
    --save_generations

- `--model` can be any autoregressive model available on the Hugging Face Hub, but we recommend using code generation models trained specifically on code, such as SantaCoder, InCoder, and CodeGen.

- `--tasks` denotes a variety of benchmarks as follows:

['codexglue_code_to_text-go', 'codexglue_code_to_text-java', 'codexglue_code_to_text-javascript', 'codexglue_code_to_text-php', 'codexglue_code_to_text-python', 'codexglue_code_to_text-python-left', 'codexglue_code_to_text-ruby', 'codexglue_text_to_text-da_en', 'codexglue_text_to_text-lv_en', 'codexglue_text_to_text-no_en', 'codexglue_text_to_text-zh_en', 'conala', 'concode', 'ds1000-all-completion', 'ds1000-all-insertion', 'ds1000-matplotlib-completion', 'ds1000-matplotlib-insertion', 'ds1000-numpy-completion', 'ds1000-numpy-insertion', 'ds1000-pandas-completion', 'ds1000-pandas-insertion', 'ds1000-pytorch-completion', 'ds1000-pytorch-insertion', 'ds1000-scipy-completion', 'ds1000-scipy-insertion', 'ds1000-sklearn-completion', 'ds1000-sklearn-insertion', 'ds1000-tensorflow-completion', 'ds1000-tensorflow-insertion', 'humaneval', 'instruct-humaneval', 'instruct-humaneval-nocontext', 'mbpp', 'multiple-cpp', 'multiple-cs', 'multiple-d', 'multiple-go', 'multiple-java', 'multiple-jl', 'multiple-js', 'multiple-lua', 'multiple-php', 'multiple-pl', 'multiple-py', 'multiple-r', 'multiple-rb', 'multiple-rkt', 'multiple-rs', 'multiple-scala', 'multiple-sh', 'multiple-swift', 'multiple-ts', 'pal-gsm8k-greedy', 'pal-gsm8k-majority_voting', 'pal-gsmhard-greedy', 'pal-gsmhard-majority_voting']

- `--limit` represents the number of problems to solve; if it is not provided, all problems in the benchmark are selected.

- `--allow_code_execution` is for executing the generated code: it is off by default; read the displayed warning before enabling execution.

- Some models with custom code on the HF Hub, like SantaCoder, require calling `--trust_remote_code`; for private models, add `--use_auth_token`.

- `--save_generations` saves the post-processed generations in a JSON file at `--save_generations_path` (by default `generations.json`). You can also save references by calling `--save_references`.

- `--max_length_generation` is the maximum token length of generation, including the input token length. The default is 512, but for some tasks like GSM8K and GSM-Hard, the complete prompt with 8-shot examples (as used in PAL) takes up `~1500` tokens; hence the value should be greater than that, and the recommended value of `--max_length_generation` is `2048` for these tasks.

- For APPS tasks, you can use `--n_samples=1` for strict and average accuracies (from the original APPS paper) and `n_samples>1` for `pass@k` metrics.

Note that some tasks (i.e., benchmarks) don't require code execution (i.e., don't specify --allow_code_execution) because they are text generation tasks or lack unit tests, such as codexglue_code_to_text-<LANGUAGE>, codexglue_code_to_text-python-left, conala, and concode, which use BLEU evaluation. In addition, we generate one candidate solution for each problem in these tasks, so use --n_samples=1 and --batch_size=1. (Note that batch_size should always be less than or equal to n_samples.)
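For reference, `pass@k` is commonly computed with the unbiased estimator from the Codex paper: with n samples per problem, of which c pass the unit tests, pass@k = 1 - C(n - c, k) / C(n, k). A minimal sketch using a numerically stable product form follows; `pass_at_k` is an illustrative helper, and the harness computes the metric itself.

```python
def pass_at_k(n, c, k):
    """Unbiased pass@k estimate for one problem: 1 - C(n - c, k) / C(n, k)."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("expected 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    fail_prob = 1.0
    # C(n - c, k) / C(n, k) equals the product of (1 - k / i) for i in n-c+1 .. n
    for i in range(n - c + 1, n + 1):
        fail_prob *= 1.0 - k / i
    return 1.0 - fail_prob


# With 200 samples per problem, 20 of which pass the tests:
for k in (1, 10, 100):
    print(f"pass@{k} = {pass_at_k(200, 20, k):.4f}")
```

The benchmark score is the mean of this per-problem estimate over all problems, which is why `n_samples` must be at least as large as the largest k reported.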

- LLaMA, inference code for LLaMA models

- Llama 2, open foundation and fine-tuned chat models

- Stanford Alpaca, an instruction-following LLaMA model

- Alpaca-Lora, instruct-tune LLaMA on consumer hardware

- FastChat, an open platform for training, serving, and evaluating large language models. Release repo for Vicuna and Chatbot Arena.

- GPT Code UI, an open source implementation of OpenAI's ChatGPT Code interpreter

- PEFT, state-of-the-art parameter-efficient fine-tuning (PEFT) methods

- Codex, an evaluation harness for the HumanEval problem solving dataset

- Code Alpaca, an instruction-following LLaMA model trained on code generation instructions

- WizardLM, an instruction-following LLM using Evol-Instruct

- Self-Instruct, aligning pretrained language models with instruction data generated by themselves.

- StackLLaMA, a hands-on guide to train LLaMA with RLHF

- StarCoder, a language model (LM) trained on source code and natural language text.

- CodeGeeX, a multilingual code generation model

- CodeGen, an open large language model for code with multi-turn program synthesis

- InCoder, a generative model for code infilling and synthesis

- CodeT5+, a standard Transformer framework for code understanding and generation

- CodeBERT, a pre-trained language model for programming and natural languages

- llama.cpp, a native client for running LLaMA models on the CPU

- alpaca.cpp, a native client for running Alpaca models on the CPU

- Alpaca-LoRA-Serve, a ChatGPT-style interface for Alpaca models

- AlpacaDataCleaned, a project to improve the quality of the Alpaca dataset

- GPT-4 Alpaca Data, a project to port synthetic data creation to GPT-4

- Code Alpaca Data, a project for code generation

- CodeXGLUE, a machine learning benchmark dataset for code understanding and generation

- HumanEval, APPS, HumanEval+, MBPP, and DS-1000

- https://huggingface.co/decapoda-research/llama-7b-hf

- https://huggingface.co/tloen/alpaca-lora-7b

- https://huggingface.co/meta-llama/Llama-2-7b-hf

- https://huggingface.co/chansung/gpt4-alpaca-lora-7b

- A Survey of Large Language Models

- Codex: Evaluating Large Language Models Trained on Code

- LLaMA: Open and Efficient Foundation Language Models

- Llama 2: Open Foundation and Fine-Tuned Chat Models

- Self-Instruct: Aligning Language Model with Self Generated Instructions

- LoRA: Low-Rank Adaptation of Large Language Models

- InstructGPT: Training language models to follow instructions with human feedback

- Emergent Abilities of Large Language Models

- StarCoder: may the source be with you!

- WizardCoder: Empowering Code Large Language Models with Evol-Instruct

- CodeGeeX: A Pre-Trained Model for Code Generation with Multilingual Evaluations on HumanEval-X

- CodeT5+: Open Code Large Language Models for Code Understanding and Generation

- CodeGen: An Open Large Language Model for Code with Multi-Turn Program Synthesis

- InCoder: A Generative Model for Code Infilling and Synthesis

- CodeBERT: A Pre-Trained Model for Programming and Natural Languages

- CodeXGLUE: A Machine Learning Benchmark Dataset for Code Understanding and Generation

If you use the data or code in this repo, please cite the repo.

@misc{codeup,

author = {Juyong Jiang and Sunghun Kim},

title = {CodeUp: A Multilingual Code Generation Llama2 Model with Parameter-Efficient Instruction-Tuning},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/juyongjiang/CodeUp}},

}

Naturally, you should also cite the original LLaMA V1 [1] and V2 [2] papers, the Self-Instruct paper [3], the LoRA paper [4], the Stanford Alpaca repo, the Alpaca-LoRA repo, the Code Alpaca repo, and PEFT.