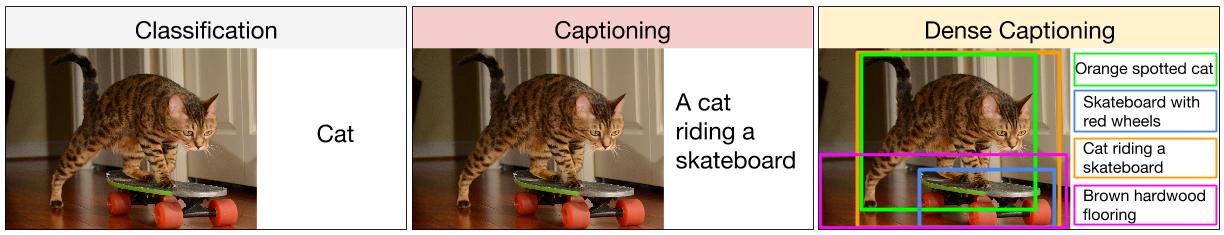

Image captioning is a new technology that combines LSTM text generation with the computer vision capabilities of a convolutional neural network (CNN). I first saw this technology in Andrej Karpathy's dissertation. [Cite:karpathy2016connecting] The figure shows images from his work.

Andrej Karpathy's Dissertation

In this part, we will use an LSTM and a CNN to create a basic image captioning system. We will use transfer learning to build on these existing projects:

We use Inception to extract features from the images. GloVe is a set of Natural Language Processing (NLP) vectors for common words. The accompanying figure gives a high-level overview of captioning.
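To make the GloVe side more concrete, here is a minimal sketch of reading word vectors from the plain-text GloVe layout, where each line holds a word followed by its space-separated vector components. The function name `parse_glove` and the sample values are hypothetical and only illustrate the idea; they are not taken from the original project code, and a real run would read one of the files in the `glove.6B` folder instead of the in-memory sample.

```python
import io


def parse_glove(lines):
    """Parse GloVe-style text lines into a {word: [float, ...]} dictionary.

    `lines` can be any iterable of strings, such as an open text file.
    This helper is a hypothetical illustration, not the project's own loader.
    """
    embeddings = {}
    for line in lines:
        parts = line.rstrip().split(" ")
        if len(parts) < 2:
            continue  # skip blank or malformed lines
        word, values = parts[0], parts[1:]
        embeddings[word] = [float(v) for v in values]
    return embeddings


# Tiny made-up sample in the GloVe text layout (not real GloVe values).
sample = io.StringIO("dog 0.1 0.2 0.3\ncat 0.4 0.5 0.6\n")
vectors = parse_glove(sample)
print(len(vectors), "words loaded; vector length:", len(vectors["cat"]))
```

With a real embeddings file, you would pass an open file handle to the same function in place of the sample.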

We begin by importing the needed libraries.

- To install the required libraries, run:

pip install -r requirements.txt

You will need to download the following data and place it in a folder for this example. Point the root_captioning string at the folder that you are using for the caption generation. This folder should have the following sub-folders.

- data - Create this directory to hold saved models.

- glove.6B - GloVe embeddings.

- Flicker8k_Dataset - Flickr dataset.

- Flicker8k_Text

Note that the original Flickr datasets are no longer available, but you can download them from a location specified by this article.
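Before going further, it can help to confirm that the folder layout described above is in place. The following is a minimal sketch, assuming `root_captioning` points at your working folder; the path `./captions` and the helper `missing_subfolders` are hypothetical placeholders, so substitute your own location.

```python
from pathlib import Path

# Sub-folder names taken from the list above.
EXPECTED_SUBFOLDERS = ["data", "glove.6B", "Flicker8k_Dataset", "Flicker8k_Text"]


def missing_subfolders(root):
    """Return the expected sub-folder names that do not exist under `root`."""
    root = Path(root)
    return [name for name in EXPECTED_SUBFOLDERS if not (root / name).is_dir()]


# Hypothetical location; point this at the folder you are actually using.
root_captioning = "./captions"

missing = missing_subfolders(root_captioning)
if missing:
    print("Missing sub-folders:", ", ".join(missing))
else:
    print("All expected sub-folders are present.")
```

The check only looks for the directories themselves; it does not verify that the downloaded files inside them are complete.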