Evolutionary Neural Architecture Search on Transformers for RUL Prediction

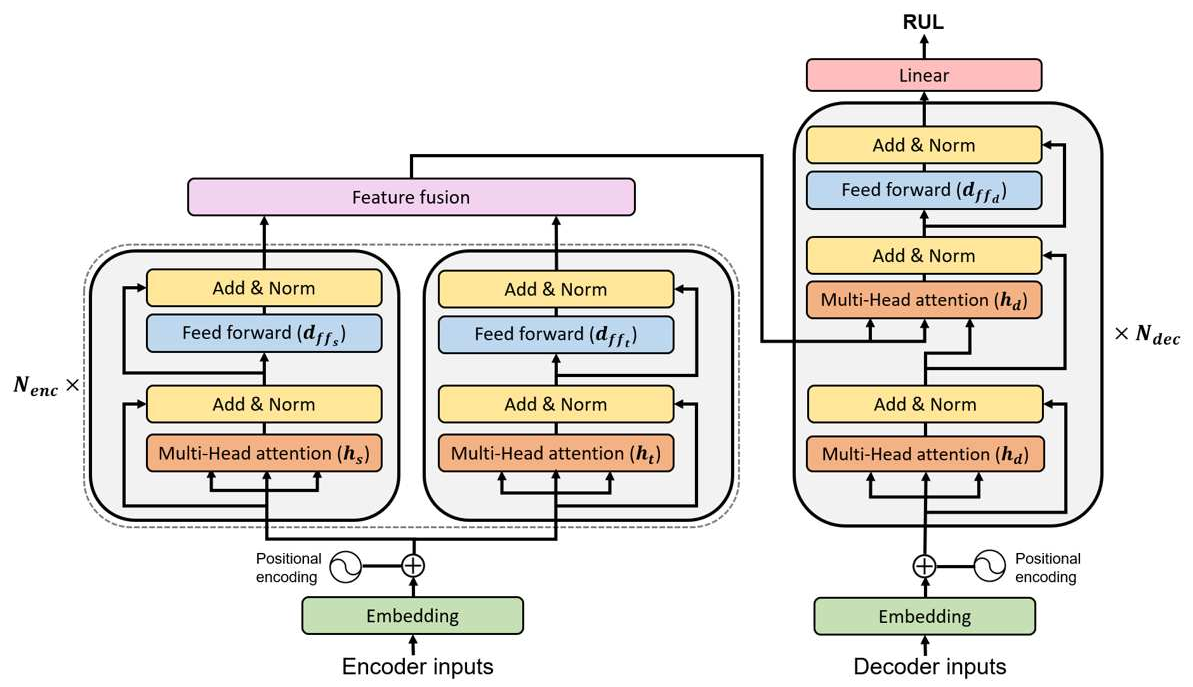

This work introduces a custom genetic algorithm (GA) based neural architecture search (NAS) technique that automatically finds optimal Transformer architectures for remaining useful life (RUL) prediction. The GA searches quickly and efficiently. It evaluates candidate architectures with a performance predictor that is updated at every generation, which reduces the number of networks that must be fully trained. The backbone architecture is a Transformer for RUL prediction, and the performance predictor is an NGBoost model.
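
The loop below is a minimal sketch of the general idea behind a surrogate-assisted GA. It is not the repository's implementation. Several assumptions apply. `train_and_validate` is a hypothetical toy function that stands in for full network training. A k-nearest-neighbour average replaces NGBoost as the surrogate. The operators are truncation selection, one-point crossover and point mutation, chosen only for illustration. The population is ranked by predicted RMSE, and in each generation only `n_train_per_gen` unseen genomes are fully trained and added to the archive the surrogate learns from.

```python
import random


def train_and_validate(genome):
    """Hypothetical stand-in for fully training the Transformer decoded from
    `genome` and returning its validation RMSE (a cheap toy function here)."""
    return 10.0 + sum((g - 2) ** 2 for g in genome)


def surrogate_predict(archive, genome, k=3):
    """Toy surrogate used in place of NGBoost: mean RMSE of the k trained
    genomes closest to `genome` in L1 distance."""
    nearest = sorted(
        (sum(abs(a - b) for a, b in zip(g, genome)), rmse) for g, rmse in archive
    )[:k]
    return sum(rmse for _, rmse in nearest) / len(nearest)


def surrogate_ga(n_genes=4, n_values=5, pop_size=50, generations=5,
                 n_init=10, n_train_per_gen=2, seed=0):
    rng = random.Random(seed)

    def random_genome():
        return tuple(rng.randrange(n_values) for _ in range(n_genes))

    # Initial archive of fully trained networks (random here; LHS in the repo).
    archive = [(g, train_and_validate(g)) for g in (random_genome() for _ in range(n_init))]
    population = [random_genome() for _ in range(pop_size)]

    for _ in range(generations):
        # Rank by predicted RMSE: this step needs no training at all.
        population.sort(key=lambda g: surrogate_predict(archive, g))
        parents = population[: pop_size // 2]
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_genes)
            child = list(a[:cut] + b[cut:])  # one-point crossover
            child[rng.randrange(n_genes)] = rng.randrange(n_values)  # point mutation
            children.append(tuple(child))
        population = parents + children

        # Fully train only the most promising unseen genomes; adding them to the
        # archive is what updates the surrogate for the next generation.
        trained = {g for g, _ in archive}
        ranked = sorted(dict.fromkeys(population), key=lambda g: surrogate_predict(archive, g))
        for g in [g for g in ranked if g not in trained][:n_train_per_gen]:
            archive.append((g, train_and_validate(g)))

    best = min(archive, key=lambda item: item[1])
    return best, len(archive)


best, n_trained = surrogate_ga()
print(best, n_trained)
```

The expensive call runs at most `n_init + generations * n_train_per_gen` times, regardless of `pop_size`. This is why a large population, such as `--pop 1000`, can remain affordable when a predictor does the ranking.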

This work introduces a custom genetic algorithm (GA) based neural architecture search (NAS) technique that automatically finds the optimal architectures of Transformers for RUL predictions. Our GA provides a fast and efficient search, finding high-quality solutions based on performance predictor that is updated at every generation, thus reducing the needed network training. Note that the backbone architecture is the Transformer for RUL predictions and the preformance predictor is the NGBoost model

The proposed algorithm explores a combinatorial parameter space that defines the architecture of the Transformer model.
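
One common way to encode such a space for a GA is a fixed-length integer genome. Each gene corresponds to one architectural parameter and indexes a list of allowed values. The parameter names and ranges below are hypothetical and only illustrate the encoding. The size of the space is the product of the number of choices per parameter.

```python
import math

# Hypothetical search space: names and ranges are illustrative only,
# not the ones used by the repository.
SEARCH_SPACE = {
    "n_layers": [1, 2, 3, 4],
    "n_heads": [1, 2, 4, 8],
    "d_model": [32, 64, 128],
    "d_ff": [64, 128, 256, 512],
    "dropout": [0.0, 0.1, 0.2],
}


def decode(genome):
    """Map an integer genome (one choice index per parameter) to a config dict."""
    if len(genome) != len(SEARCH_SPACE):
        raise ValueError("genome length must match the number of parameters")
    return {name: choices[i] for (name, choices), i in zip(SEARCH_SPACE.items(), genome)}


def space_size():
    """Number of distinct architectures: the product of the choice counts."""
    return math.prod(len(choices) for choices in SEARCH_SPACE.values())


print(decode((1, 2, 2, 3, 1)))
# {'n_layers': 2, 'n_heads': 4, 'd_model': 128, 'd_ff': 512, 'dropout': 0.1}
print(space_size())  # 576
```

In this toy space, every `d_model` value is divisible by every `n_heads` value. Multi-head attention layers such as PyTorch's `nn.MultiheadAttention` require this. With real ranges, a GA would need to repair or reject genomes that violate the constraint.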

The dependencies are listed in requirements.txt and can be installed with:

pip install -r requirements.txt

The repository provides the following scripts:

- data_process_update_valid.py: processes the multivariate time series data to prepare the inputs for the Transformer.

- initialization_LHS.py: fully trains the networks selected by Latin hypercube sampling (LHS) and collects their validation RMSE, which is then used to train NGBoost.

- enas_transformer_cma_retraining.py: runs the evolutionary search to find the solutions.

- topk_test.py: calculates the test RMSE of each solution.
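
initialization_LHS.py selects its initial networks with Latin hypercube sampling (LHS). The pure-Python sketch below shows how LHS spreads `n_samples` points so that every parameter's range is covered evenly. Mapping a coordinate in [0, 1) to a discrete choice index by flooring is an assumption made here, not something taken from the repository. A library implementation is available as SciPy's `scipy.stats.qmc.LatinHypercube`.

```python
import random


def latin_hypercube(n_samples, n_dims, rng):
    """Return n_samples points in [0, 1)^n_dims such that, in every dimension,
    each of the n_samples equal-width strata contains exactly one point."""
    columns = []
    for _ in range(n_dims):
        # One uniform draw inside each stratum [k/n, (k+1)/n) ...
        col = [(k + rng.random()) / n_samples for k in range(n_samples)]
        # ... then shuffle so that strata are paired randomly across dimensions.
        rng.shuffle(col)
        columns.append(col)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


def lhs_genomes(n_samples, n_choices, seed=0):
    """Discretise LHS points into integer genomes; n_choices[d] is the number
    of options for parameter d (hypothetical mapping by flooring)."""
    rng = random.Random(seed)
    points = latin_hypercube(n_samples, len(n_choices), rng)
    # min() guards against float rounding pushing u * c up to c.
    return [tuple(min(int(u * c), c - 1) for u, c in zip(p, n_choices)) for p in points]


genomes = lhs_genomes(100, [4, 4, 3, 4, 3])
print(genomes[:3])
```

Unlike plain random sampling, LHS guarantees that each option of a 4-choice parameter appears exactly 25 times in 100 samples. This gives the predictor training data that covers the whole range of each parameter.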

Data preparation
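
The data preparation step turns each engine's multivariate sensor sequence into fixed-length input windows for the Transformer. This sketch assumes that `-w` is the window length and `-s` the stride, since the flags are not documented here. It also assumes each window is labelled with the RUL at its last cycle. `sliding_windows` is a hypothetical helper, not a function from data_process_update_valid.py.

```python
def sliding_windows(series, rul, window, stride):
    """Cut one engine's time series into overlapping windows.

    series: list of per-cycle feature vectors (length T)
    rul:    list of RUL labels, one per cycle (length T)
    Returns (windows, targets); each target is the RUL at the window's last cycle.
    """
    if len(series) != len(rul):
        raise ValueError("series and rul must have the same length")
    windows, targets = [], []
    for start in range(0, len(series) - window + 1, stride):
        end = start + window
        windows.append(series[start:end])
        targets.append(rul[end - 1])
    return windows, targets


# Toy engine with 50 cycles, 2 sensors and a linear RUL label (for illustration).
series = [[float(t), float(2 * t)] for t in range(50)]
rul = [49 - t for t in range(50)]
windows, targets = sliding_windows(series, rul, window=40, stride=1)
print(len(windows), targets[0], targets[-1])  # 11 10 0
```

With 50 cycles, a window of 40 and a stride of 1, this yields 50 - 40 + 1 = 11 windows. Engines shorter than the window length produce no windows and would need padding or exclusion.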

python3 data_process_update_valid.py --subdata 001 -w 40 -s 1 --vs 20

Predictor initialization

python3 initialization_LHS.py --subdata 001 -w 40 -t 0 -ep 100 -n_samples 100 -pt 10

Evolutionary NAS

python3 enas_transformer_cma_retraining.py --subdata 001 -w 40 --pop 1000 --gen 10 -t 0 -ep 100

Test results

python3 topk_test.py --subdata 001 -w 40 -t 0 -ep_init 100 -ep_train 100 --pop 1000 --gen 10 --model "NGB" -topk 10 -sp 100 -n_samples 100 --sc "ga_retrain"

The performance of the discovered solutions in terms of test RMSE and s-score:

| Metric | FD001 | FD002 | FD003 | FD004 | Sum |
|---|---|---|---|---|---|
| Test RMSE | 11.50 | 16.14 | 11.35 | 20.00 | 58.99 |
| s-score | 202 | 1131 | 227 | 2299 | 3858 |
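
The table reports two metrics that are commonly used on the C-MAPSS sub-datasets FD001 to FD004. The first is the RMSE of the predicted RUL. The second is the asymmetric scoring function from the PHM08 challenge, which penalizes late predictions (predicted RUL larger than the true RUL) more heavily than early ones. The following is a minimal sketch of both metrics. It assumes the standard definition with d = predicted RUL - true RUL and the usual constants 13 and 10; it is not the code in topk_test.py.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error between true and predicted RUL."""
    return math.sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def s_score(y_true, y_pred):
    """PHM08 scoring function (lower is better).

    d < 0 (early prediction): exp(-d / 13) - 1
    d >= 0 (late prediction): exp(d / 10) - 1
    """
    total = 0.0
    for t, p in zip(y_true, y_pred):
        d = p - t
        total += (math.exp(-d / 13) - 1) if d < 0 else (math.exp(d / 10) - 1)
    return total


print(rmse([10, 20], [13, 16]))  # sqrt(12.5)
print(s_score([10], [20]) > s_score([20], [10]))  # True: late costs more than early
```

Because the s-score sums exponential terms over all test engines, a few badly late predictions can dominate it. This helps explain why the harder sub-datasets (FD002, FD004) show much larger s-scores than their RMSE gap alone would suggest.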

H. Mo and G. Iacca, Evolutionary neural architecture search on transformers for remaining useful life prediction, Materials and Manufacturing Processes, 2023, https://doi.org/10.1080/10426914.2023.2199499.

BibTeX entry:

@article{mo2023evolutionary,
  title={Evolutionary neural architecture search on transformers for RUL prediction},
  author={Mo, Hyunho and Iacca, Giovanni},
  journal={Materials and Manufacturing Processes},
  pages={1--18},
  year={2023},
  publisher={Taylor \& Francis}
}