DE⫶TR: End-to-End Object Detection with Transformers

PyTorch training code and pretrained models for DETR (DEtection TRansformer). We replace the full complex hand-crafted object detection pipeline with a Transformer, and match Faster R-CNN with a ResNet-50, obtaining 42 AP on COCO using half the computation power (FLOPs) and the same number of parameters. Inference in 50 lines of PyTorch.

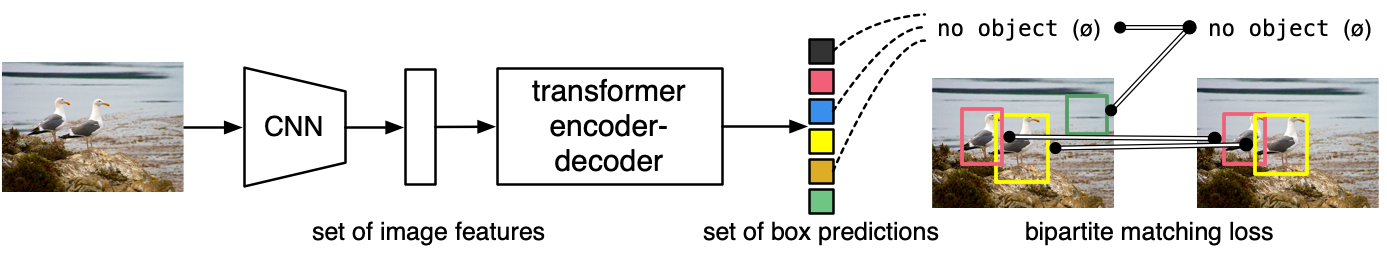

What it is. Unlike traditional computer vision techniques, DETR approaches object detection as a direct set prediction problem. It consists of a set-based global loss, which forces unique predictions via bipartite matching, and a Transformer encoder-decoder architecture. Given a fixed small set of learned object queries, DETR reasons about the relations of the objects and the global image context to directly output the final set of predictions in parallel. Due to this parallel nature, DETR is very fast and efficient.
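To make the bipartite matching idea concrete, here is a minimal sketch in plain Python. It is not DETR's implementation: the cost values are made up, the name `match_predictions` is hypothetical, and it brute-forces every assignment, whereas a practical matcher would use a polynomial-time solver such as the Hungarian algorithm.

```python
from itertools import permutations


def match_predictions(cost):
    """Return the one-to-one prediction/target assignment with minimal total cost.

    cost[p][t] is the (made-up) cost of explaining target t with prediction p.
    Assumes there are at least as many predictions as targets.
    """
    num_preds, num_targets = len(cost), len(cost[0])
    best_total, best_choice = float("inf"), None
    # Each candidate picks a distinct prediction for every target,
    # so no prediction can be matched to two objects.
    for choice in permutations(range(num_preds), num_targets):
        total = sum(cost[p][t] for t, p in enumerate(choice))
        if total < best_total:
            best_total, best_choice = total, choice
    # Map target index -> matched prediction index.
    return dict(enumerate(best_choice)), best_total


# 3 predictions (rows) vs. 2 ground-truth objects (columns); values are illustrative.
cost = [
    [0.9, 0.2],
    [0.1, 0.8],
    [0.5, 0.6],
]
assignment, total = match_predictions(cost)
print(assignment)  # target index -> prediction index
```

In this toy case prediction 2 is left unmatched. In a set-prediction loss, leftover predictions like this one are typically the ones trained to predict "no object".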

About the code. We believe that object detection should not be more difficult than classification, and should not require complex libraries for training and inference. DETR is very simple to implement and experiment with, and we provide a standalone Colab Notebook showing how to do inference with DETR in only a few lines of PyTorch code. Training code follows this idea - it is not a library, but simply a main.py importing model and criterion definitions with standard training loops.

Additionally, we provide a Detectron2 wrapper in the d2/ folder. See the readme there for more information.

For details see End-to-End Object Detection with Transformers by Nicolas Carion, Francisco Massa, Gabriel Synnaeve, Nicolas Usunier, Alexander Kirillov, and Sergey Zagoruyko.

See our blog post to learn more about end-to-end object detection with transformers.

Model Zoo

We provide baseline DETR and DETR-DC5 models, and plan to include more in the future. AP is computed on COCO 2017 val5k, and inference time is measured over the first 100 val5k COCO images, with a TorchScript transformer.

| | name | backbone | schedule | inf_time | box AP | url | size |
|---|---|---|---|---|---|---|---|
| 0 | DETR | R50 | 500 | 0.036 | 42.0 | model / logs | 159Mb |
| 1 | DETR-DC5 | R50 | 500 | 0.083 | 43.3 | model / logs | 159Mb |
| 2 | DETR | R101 | 500 | 0.050 | 43.5 | model / logs | 232Mb |
| 3 | DETR-DC5 | R101 | 500 | 0.097 | 44.9 | model / logs | 232Mb |

COCO val5k evaluation results can be found in this gist.

The models are also available via torch hub. To load DETR R50 with pretrained weights, simply do:

model = torch.hub.load('facebookresearch/detr:main', 'detr_resnet50', pretrained=True)

COCO panoptic val5k models:

| | name | backbone | box AP | segm AP | PQ | url | size |
|---|---|---|---|---|---|---|---|
| 0 | DETR | R50 | 38.8 | 31.1 | 43.4 | download | 165Mb |
| 1 | DETR-DC5 | R50 | 40.2 | 31.9 | 44.6 | download | 165Mb |
| 2 | DETR | R101 | 40.1 | 33 | 45.1 | download | 237Mb |

Check out our panoptic colab to see how to use and visualize DETR's panoptic segmentation predictions.

Notebooks

We provide a few Colab notebooks to help you get a grasp of DETR:

- DETR's hands-on Colab Notebook: Shows how to load a model from hub, generate predictions, then visualize the attention of the model (similar to the figures in the paper).

- Standalone Colab Notebook: In this notebook, we demonstrate how to implement a simplified version of DETR from the ground up in 50 lines of Python, then visualize the predictions. It is a good starting point if you want to gain a better understanding of the architecture and poke around before diving into the codebase.

- Panoptic Colab Notebook: Demonstrates how to use DETR for panoptic segmentation and plot the predictions.

Usage - Object detection

There are no extra compiled components in DETR and package dependencies are minimal, so the code is very simple to use. We provide instructions on how to install dependencies via conda. First, clone the repository locally:

git clone https://github.com/facebookresearch/detr.git

Then, install PyTorch 1.5+ and torchvision 0.6+:

conda install -c pytorch pytorch torchvision

Install pycocotools (for evaluation on COCO) and scipy (for training):

conda install cython scipy

pip install -U 'git+https://github.com/cocodataset/cocoapi.git#subdirectory=PythonAPI'

That's it. You should now be able to train and evaluate detection models.

(Optional) To work with panoptic segmentation, install panopticapi:

pip install git+https://github.com/cocodataset/panopticapi.git

Data preparation

Download and extract COCO 2017 train and val images with annotations from http://cocodataset.org. We expect the directory structure to be the following:

path/to/coco/
  annotations/  # annotation json files
  train2017/    # train images
  val2017/      # val images
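Before launching a long training run, it can help to confirm that the layout above is in place. The following is a small sketch using only the standard library; the helper name `missing_coco_dirs` is hypothetical and not part of the DETR code.

```python
from pathlib import Path

# Sub-directories named in the expected layout above.
EXPECTED_SUBDIRS = ("annotations", "train2017", "val2017")


def missing_coco_dirs(coco_root):
    """Return the expected sub-directories that are absent under coco_root."""
    root = Path(coco_root)
    return [name for name in EXPECTED_SUBDIRS if not (root / name).is_dir()]


# "path/to/coco" is the placeholder path used in this README.
missing = missing_coco_dirs("path/to/coco")
print("missing:", missing or "nothing")
```

An empty list means all three directories were found. The check does not verify that the annotation files or images themselves are complete.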

Training

To train baseline DETR on a single node with 8 GPUs for 300 epochs, run:

python -m torch.distributed.launch --nproc_per_node=8 --use_env main.py --coco_path /path/to/coco

A single epoch takes 28 minutes, so 300-epoch training takes around 6 days on a single machine with 8 V100 cards. To ease reproduction of our results, we provide results and training logs for a 150-epoch schedule (3 days on a single machine), achieving 39.5/60.3 AP/AP50.
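The day counts follow directly from the per-epoch time. As a quick sanity check, assuming the 28-minute figure holds for every epoch (the function name is illustrative):

```python
MINUTES_PER_EPOCH = 28  # figure quoted above for a machine with 8 V100 cards


def training_days(epochs, minutes_per_epoch=MINUTES_PER_EPOCH):
    """Convert an epoch count into wall-clock days."""
    return epochs * minutes_per_epoch / (60 * 24)


for epochs in (150, 300):
    print(f"{epochs} epochs: about {training_days(epochs):.1f} days")
# 150 epochs: about 2.9 days
# 300 epochs: about 5.8 days
```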

We train DETR with AdamW, setting the learning rate to 1e-4 in the transformer and 1e-5 in the backbone. Horizontal flips, scales and crops are used for augmentation. Images are rescaled to have min size 800 and max size 1333. The transformer is trained with a dropout of 0.1, and the whole model is trained with gradient clipping of 0.1.
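To illustrate the rescaling rule, here is a sketch that assumes the common convention: scale the shorter side to 800, unless that would push the longer side past 1333, in which case scale the longer side to 1333 instead. The function name and the rounding behaviour are assumptions and may differ from the actual transform code.

```python
def rescaled_size(width, height, min_size=800, max_size=1333):
    """Return (new_width, new_height), preserving the aspect ratio."""
    # First try to bring the shorter side to min_size.
    scale = min_size / min(width, height)
    # If the longer side would overshoot, cap it at max_size instead.
    if max(width, height) * scale > max_size:
        scale = max_size / max(width, height)
    return round(width * scale), round(height * scale)


print(rescaled_size(640, 480))   # (1067, 800): shorter side reaches 800
print(rescaled_size(1000, 300))  # (1333, 400): longer side capped at 1333
```

Under this rule, very elongated images end up with a shorter side below 800, because the 1333 cap takes priority.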

Evaluation

To evaluate DETR R50 on COCO val5k with a single GPU run:

python main.py --batch_size 2 --no_aux_loss --eval --resume https://dl.fbaipublicfiles.com/detr/detr-r50-e632da11.pth --coco_path /path/to/coco

We provide results for all DETR detection models in this gist. Note that numbers vary depending on batch size (number of images) per GPU. Non-DC5 models were trained with batch size 2, and DC5 with 1, so DC5 models show a significant drop in AP if evaluated with more than 1 image per GPU.

Multinode training

Distributed training is available via Slurm and submitit:

pip install submitit

Train baseline DETR-6-6 model on 4 nodes for 300 epochs:

python run_with_submitit.py --timeout 3000 --coco_path /path/to/coco

Usage - Segmentation

We show that it is relatively straightforward to extend DETR to predict segmentation masks. We mainly demonstrate strong panoptic segmentation results.

Data preparation

For panoptic segmentation, you need the panoptic annotations in addition to the COCO dataset (see above for the COCO dataset). Download and extract the annotations. We expect the directory structure to be the following:

path/to/coco_panoptic/
  annotations/         # annotation json files
  panoptic_train2017/  # train panoptic annotations
  panoptic_val2017/    # val panoptic annotations

Training

We recommend training segmentation in two stages: first train DETR to detect all the boxes, and then train the segmentation head. For panoptic segmentation, DETR must learn to detect boxes for both stuff and things classes. You can train it on a single node with 8 GPUs for 300 epochs with:

python -m torch.distributed.launch --nproc_per_node=8 --use_env main.py --coco_path /path/to/coco --coco_panoptic_path /path/to/coco_panoptic --dataset_file coco_panoptic --output_dir /output/path/box_model

For instance segmentation, you can simply train a normal box model (or use a pre-trained one we provide).

Once you have a box model checkpoint, you need to freeze it and train the segmentation head in isolation. For panoptic segmentation, you can train on a single node with 8 GPUs for 25 epochs:

python -m torch.distributed.launch --nproc_per_node=8 --use_env main.py --masks --epochs 25 --lr_drop 15 --coco_path /path/to/coco --coco_panoptic_path /path/to/coco_panoptic --dataset_file coco_panoptic --frozen_weights /output/path/box_model/checkpoint.pth --output_dir /output/path/segm_model

For instance segmentation only, simply remove the dataset_file and coco_panoptic_path arguments from the above command line.
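The difference between the two variants can be sketched as a small helper that assembles the second-stage command. It only uses the flags and placeholder paths shown above; the function name `segmentation_head_command` is hypothetical and not part of the repository.

```python
def segmentation_head_command(panoptic=True):
    """Build the argument list for the segmentation-head training stage."""
    cmd = [
        "python", "-m", "torch.distributed.launch",
        "--nproc_per_node=8", "--use_env", "main.py",
        "--masks", "--epochs", "25", "--lr_drop", "15",
        "--coco_path", "/path/to/coco",
    ]
    if panoptic:
        # Only the panoptic variant needs these two arguments.
        cmd += [
            "--coco_panoptic_path", "/path/to/coco_panoptic",
            "--dataset_file", "coco_panoptic",
        ]
    cmd += [
        "--frozen_weights", "/output/path/box_model/checkpoint.pth",
        "--output_dir", "/output/path/segm_model",
    ]
    return cmd


# Instance segmentation: the panoptic-specific arguments are dropped.
print(" ".join(segmentation_head_command(panoptic=False)))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` once the placeholder paths are replaced with real ones.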

License

DETR is released under the Apache 2.0 license. Please see the LICENSE file for more information.

Contributing

We actively welcome your pull requests! Please see CONTRIBUTING.md and CODE_OF_CONDUCT.md for more info.