An incredibly fast implementation of Whisper optimized for Apple Silicon.

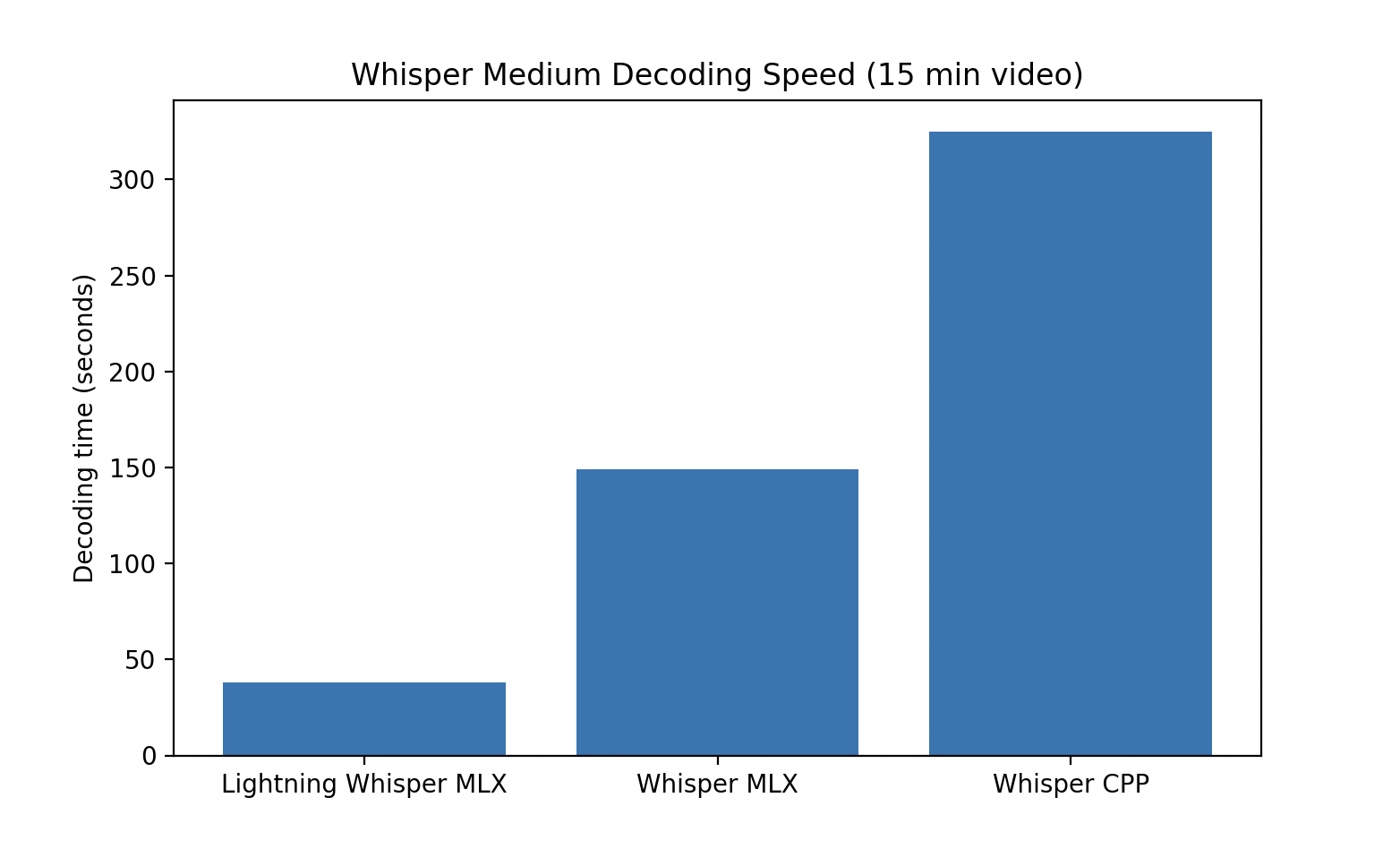

10x faster than Whisper CPP, 4x faster than the current MLX Whisper implementation.

- Batched Decoding -> Higher Throughput

- Distilled Models -> Faster Decoding (fewer layers)

- Quantized Models -> Faster Memory Movement

- Coming Soon: Speculative Decoding -> Faster Decoding with Assistant Model

Install lightning whisper mlx using pip:

pip install lightning-whisper-mlx

Models

["tiny", "small", "distil-small.en", "base", "medium", "distil-medium.en", "large", "large-v2", "distil-large-v2", "large-v3", "distil-large-v3"]

Quantization

[None, "4bit", "8bit"]
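The two lists above are the accepted values for the model and quantization options. As a minimal sketch, a small helper can reject a misspelled name before a model is loaded. `check_options`, `SUPPORTED_MODELS`, and `SUPPORTED_QUANT` are hypothetical names introduced for illustration and are not part of the library; the values are copied from the lists above.

```python
# Hypothetical helper: not part of lightning-whisper-mlx.
# The accepted values are copied from the lists in this README.
SUPPORTED_MODELS = [
    "tiny", "small", "distil-small.en", "base", "medium", "distil-medium.en",
    "large", "large-v2", "distil-large-v2", "large-v3", "distil-large-v3",
]
SUPPORTED_QUANT = [None, "4bit", "8bit"]


def check_options(model, quant=None):
    """Return (model, quant) unchanged, or raise ValueError if either is unknown."""
    if model not in SUPPORTED_MODELS:
        raise ValueError(f"unknown model {model!r}; expected one of {SUPPORTED_MODELS}")
    if quant not in SUPPORTED_QUANT:
        raise ValueError(f"unknown quant {quant!r}; expected one of {SUPPORTED_QUANT}")
    return model, quant
```

Calling `check_options("distil-medium.en", "4bit")` before constructing the model would surface a typo as a clear error message.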

from lightning_whisper_mlx import LightningWhisperMLX

whisper = LightningWhisperMLX(model="distil-medium.en", batch_size=12, quant=None)

text = whisper.transcribe(audio_path="/audio.mp3")['text']

print(text)

- The default batch_size is 12. Higher values improve throughput, but you might run into memory issues. As a rule of thumb, the right value depends on the size of the model: use a higher batch size with the smaller models and a lower batch size with the larger ones. Also keep your unified memory in mind!
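The batch-size heuristic above can be sketched as a small lookup that scales the default of 12 up for smaller models and down for larger ones. `suggest_batch_size` and the scaling factors are hypothetical, illustrative starting points rather than measured values or part of the library; tune them against the unified memory available on your machine.

```python
DEFAULT_BATCH_SIZE = 12  # default stated in this README

# Hypothetical scaling factors relative to the default; not benchmarked.
_SCALE_BY_FAMILY = {
    "tiny": 2.0,
    "base": 2.0,
    "small": 1.5,
    "medium": 1.0,
    "large": 0.5,
}


def suggest_batch_size(model, default=DEFAULT_BATCH_SIZE):
    """Suggest a starting batch size: larger for small models, smaller for large ones."""
    name = model
    if name.startswith("distil-"):
        name = name[len("distil-"):]
    # Drop suffixes such as ".en" or "-v3" to get the size family, e.g. "large".
    family = name.split(".")[0].split("-")[0]
    scale = _SCALE_BY_FAMILY.get(family, 1.0)  # unknown names keep the default
    return max(1, int(default * scale))
```

The result could then be passed as `batch_size` when constructing `LightningWhisperMLX`, lowering it further if memory issues appear.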