This is a tool to derive formal semantic representations of natural language sentences given CCG derivation trees and semantic templates.

If you can use Docker, this Docker image is highly recommended.

docker pull masashiy/ccg2lambda

The installation guide below and our Dockerfile are no longer maintained.

To run most of this software, you need Python 3 and the nltk 3, lxml, simplejson and yaml Python libraries. I recommend using a Python virtual environment and installing the packages in it with pip:

sudo apt-get install python3-dev

sudo apt-get install python-virtualenv

sudo apt-get install libxml2-dev libxslt1-dev

git clone https://github.com/mynlp/ccg2lambda.git

cd ccg2lambda

virtualenv --no-site-packages --distribute -p /usr/bin/python3 py3

source py3/bin/activate

pip install lxml simplejson pyyaml -I nltk==3.0.5

You also need to install WordNet:

python -c "import nltk; nltk.download('wordnet')"

To ensure that all software is working as expected, you can run the tests:

python scripts/run_tests.py

All tests should pass, except for a few expected failures.

You also need to install the Coq Proof Assistant, which we use for automated reasoning. On Ubuntu, you can install it with:

sudo apt-get install coq

Then, compile the Coq library that contains the axioms:

coqc coqlib.v

Our system assigns semantics to CCG structures. At the moment, we support C&C for English and Jigg for Japanese.

Installing C&C parser (for English)

You can download and install the C&C syntactic parser by running the following script from the ccg2lambda directory:

./en/install_candc.sh

If that fails, you may succeed by following these alternative instructions, in which case you need to manually create a file en/candc_location.txt with the path to the C&C parser:

echo "/path/to/candc-1.00/" > en/candc_location.txt

Installing Jigg parser (for Japanese)

Simply do:

./ja/download_dependencies.sh

The command above will download Jigg and its models, and create the file ja/jigg_location.txt, where the path to Jigg is specified. That is all.
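Once the steps above are done, a short script can sanity-check the installation. This is a sketch, not part of ccg2lambda, and it rests on assumptions: it is run from the ccg2lambda directory, compiling coqlib.v leaves coqlib.vo next to it (the usual coqc behaviour), and the parser installation steps have created en/candc_location.txt and ja/jigg_location.txt. It only checks that these files exist, not that the paths written in them are valid. The names check_environment and wordnet_available are hypothetical.

```python
import importlib.util
import os
import shutil

# Python packages from the installation guide ("pyyaml" is imported as "yaml").
REQUIRED_MODULES = ["nltk", "lxml", "simplejson", "yaml"]

# Files written by the parser installation steps, relative to the ccg2lambda root.
LOCATION_FILES = ["en/candc_location.txt", "ja/jigg_location.txt"]


def wordnet_available():
    """True if NLTK is importable and the WordNet data has been downloaded."""
    try:
        import nltk
        nltk.data.find("corpora/wordnet")
        return True
    except (ImportError, LookupError):
        return False


def check_environment(root="."):
    """Hypothetical helper: map each requirement to True (found) or False."""
    status = {}
    for module in REQUIRED_MODULES:
        status[module] = importlib.util.find_spec(module) is not None
    status["wordnet"] = wordnet_available()
    # coqc must be on the PATH; "coqc coqlib.v" is expected to leave coqlib.vo.
    status["coqc"] = shutil.which("coqc") is not None
    status["coqlib.vo"] = os.path.isfile(os.path.join(root, "coqlib.vo"))
    for relative_path in LOCATION_FILES:
        status[relative_path] = os.path.isfile(os.path.join(root, relative_path))
    return status


if __name__ == "__main__":
    missing = [name for name, ok in check_environment().items() if not ok]
    print("missing:", ", ".join(missing) if missing else "nothing")
```

Checking for modules with importlib.util.find_spec avoids importing them, so a broken package is reported as present; running python scripts/run_tests.py remains the real test.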

Let's assume that we have a file sentences.txt with one sentence per line, and that we want to semantically parse those sentences. Here is the content of my file:

All women ordered coffee or tea.

Some woman did not order coffee.

Some woman ordered tea.

We want to obtain symbolic semantic representations such as:

forall x. (woman(x) -> exists y. ((tea(y) \/ coffee(y)) /\ order(x, y)))

exists x. (woman(x) /\ -exists y. (coffee(y) /\ order(x, y)))

exists x. (woman(x) /\ exists y. (tea(y) /\ order(x, y)))

First we need to obtain the CCG derivations (parse trees) of the sentences in the text file using C&C and convert its XML format into Jigg's XML format:

cat sentences.txt | sed -f en/tokenizer.sed > sentences.tok

/path/to/candc-1.00/bin/candc --models /path/to/candc-1.00/models --candc-printer xml --input sentences.tok > sentences.candc.xml

python en/candc2transccg.py sentences.candc.xml > sentences.xml

Then, we are ready to obtain the semantic representations by using semantic templates and the CCG derivations obtained above:

python scripts/semparse.py sentences.xml en/semantic_templates_en_emnlp2015.yaml sentences.sem.xml

The semantic representations are in the sentences.sem.xml file, where a new XML node <semantics> has been added with one child <span> node per node of the CCG structure. Each span has the logical representation obtained up to that span, and the root span has the logical representation of the whole sentence. Here is an excerpt of the <semantics> node of the last sentence:

<semantics status="success" root="s2_sp0">

<span id="s2_sp0" child="s2_sp1 s2_sp9" sem="exists x.(_woman(x) & TrueP & exists z4.(_tea(z4) & TrueP & _order(x,z4)))"/>

<span id="s2_sp1" child="s2_sp2 s2_sp5" sem="exists x.(_woman(x) & TrueP & exists z4.(_tea(z4) & TrueP & _order(x,z4)))"/>

<span id="s2_sp2" child="s2_sp3 s2_sp4" sem="\F2 F3.exists x.(_woman(x) & F2(x) & F3(x))"/>

<span id="s2_sp3" sem="\F1 F2 F3.exists x.(F1(x) & F2(x) & F3(x))"/>

<span id="s2_sp4" sem="\x._woman(x)" type="_woman : Entity -> Prop"/>

<span id="s2_sp5" child="s2_sp6 s2_sp7" sem="\Q2.Q2(\w.TrueP,\x.exists z4.(_tea(z4) & TrueP & _order(x,z4)))"/>

<span id="s2_sp6" sem="\Q1 Q2.Q2(\w.TrueP,\x.Q1(\w.TrueP,\y._order(x,y)))" type="_order : Entity -> Entity -> Prop"/>

<span id="s2_sp7" child="s2_sp8" sem="\F1 F2.exists x.(_tea(x) & F1(x) & F2(x))"/>

<span id="s2_sp8" sem="\x._tea(x)" type="_tea : Entity -> Prop"/>

<span id="s2_sp9" sem="\X.X"/>

</semantics>

The sem attribute contains the logical formulas, and the type attribute contains the types of the predicates (types only appear at the leaves).
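The semantics node can also be read programmatically. The sketch below uses only Python's standard xml.etree.ElementTree on a trimmed copy of the excerpt above. In a real XML file the & in the formulas must be written as the entity `&amp;`, so the copy does that. The helper names root_formula, predicate_types and root_formulas are hypothetical, and treating any status other than "success" as a failed parse is an assumption.

```python
import xml.etree.ElementTree as ET

# Trimmed copy of the excerpt above ("&" escaped as "&amp;", as XML requires).
SEMANTICS_XML = r"""<semantics status="success" root="s2_sp0">
  <span id="s2_sp0" child="s2_sp1 s2_sp9" sem="exists x.(_woman(x) &amp; TrueP &amp; exists z4.(_tea(z4) &amp; TrueP &amp; _order(x,z4)))"/>
  <span id="s2_sp4" sem="\x._woman(x)" type="_woman : Entity -> Prop"/>
  <span id="s2_sp6" sem="\Q1 Q2.Q2(\w.TrueP,\x.Q1(\w.TrueP,\y._order(x,y)))" type="_order : Entity -> Entity -> Prop"/>
  <span id="s2_sp8" sem="\x._tea(x)" type="_tea : Entity -> Prop"/>
  <span id="s2_sp9" sem="\X.X"/>
</semantics>"""


def root_formula(semantics):
    """Return the formula of the span named by the root attribute, or None."""
    if semantics.get("status") != "success":  # assumed to signal a failed parse
        return None
    root_id = semantics.get("root")
    for span in semantics.iter("span"):
        if span.get("id") == root_id:
            return span.get("sem")
    return None


def predicate_types(semantics):
    """Collect the type attributes in document order (they only occur at leaves)."""
    return [span.get("type") for span in semantics.iter("span")
            if span.get("type") is not None]


def root_formulas(path):
    """One root formula per semantics node in a file such as sentences.sem.xml."""
    return [root_formula(node) for node in ET.parse(path).iter("semantics")]


semantics = ET.fromstring(SEMANTICS_XML)
print(root_formula(semantics))
```

Because ElementTree decodes `&amp;` when parsing, the printed formula shows a plain &, as in the excerpt.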

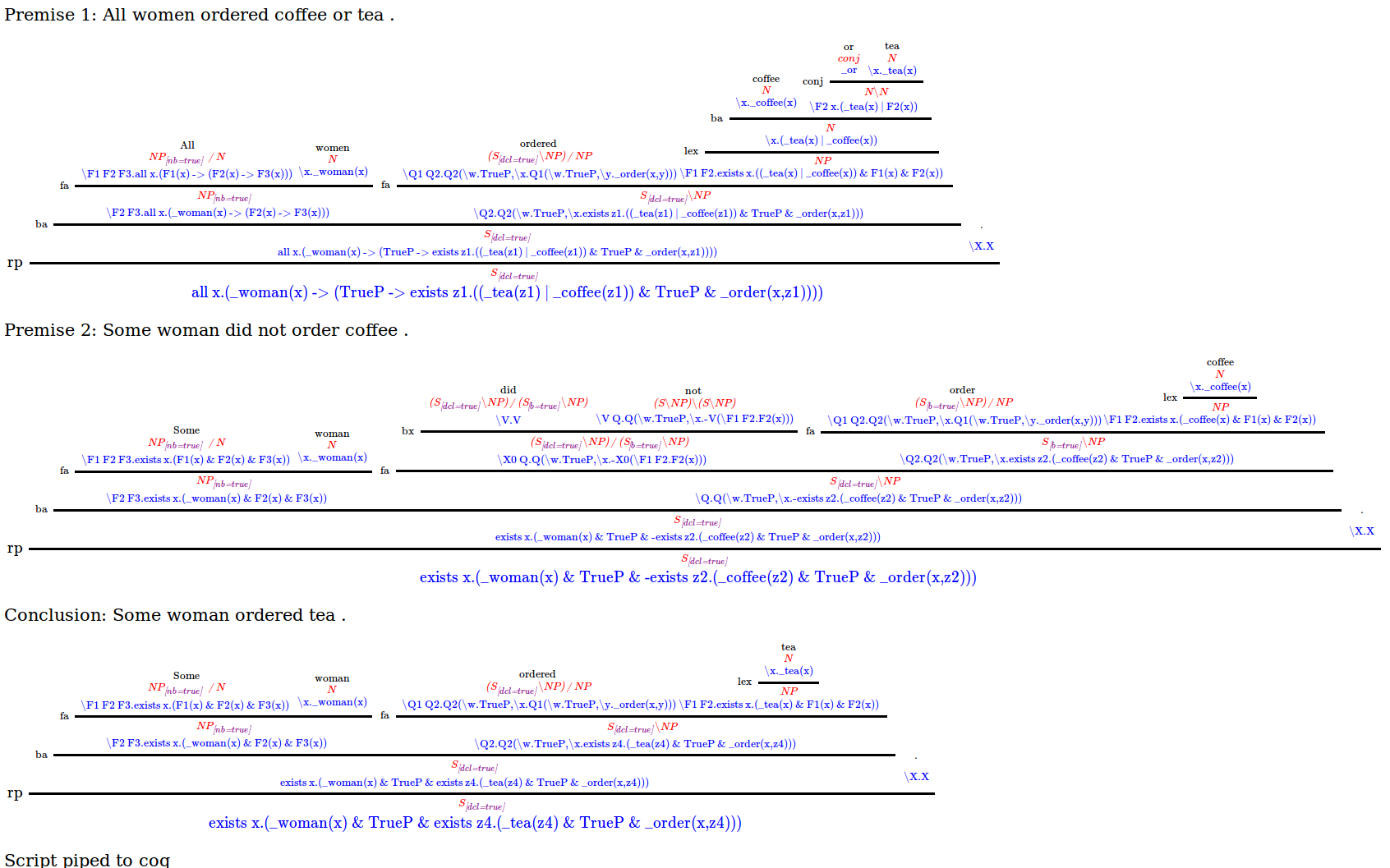

We believe that the semantic representations above can be used for several NLP tasks. We have been using them so far for recognizing textual entailment. For this purpose, we assume that all sentences in the file are premises, except the last one, which is the conclusion.

To build a theorem out of those logical representations, pipe it to a theorem prover (Coq) and judge the entailment relation, run the following command:

python scripts/prove.py sentences.sem.xml --graph_out graphdebug.html

That command will output yes (entailment: the conclusion can be proved from the premises), no (contradiction: the negated conclusion can be proved), or unknown (otherwise).
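The premise/conclusion convention and the three possible answers can be summarised in a few lines of Python. This only illustrates the decision described above and is not taken from prove.py: split_problem and judge are hypothetical names, the two booleans stand for whether Coq proved the conclusion and the negated conclusion from the premises, and answering yes when both succeed (e.g. with inconsistent premises) is our own choice.

```python
from typing import List, Tuple


def split_problem(sentences: List[str]) -> Tuple[List[str], str]:
    """All sentences are premises except the last one, which is the conclusion."""
    if not sentences:
        raise ValueError("the file must contain at least one sentence")
    return sentences[:-1], sentences[-1]


def judge(conclusion_proved: bool, negation_proved: bool) -> str:
    """Map the two proof attempts to an answer (checking order is an assumption)."""
    if conclusion_proved:
        return "yes"      # entailment
    if negation_proved:
        return "no"       # contradiction
    return "unknown"      # neither the conclusion nor its negation was proved


SENTENCES = [
    "All women ordered coffee or tea.",
    "Some woman did not order coffee.",
    "Some woman ordered tea.",
]
premises, conclusion = split_problem(SENTENCES)
```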

If the parsing process and theorem proving succeed, graphdebug.html will contain a graphical representation of the CCG trees, augmented with logical formulas at every node below the syntactic category. The script that pipes the theorem to Coq is also displayed at the bottom. If semantic parsing or the prover fails, graphdebug.html may contain plain debugging information (e.g. Python error messages). Here is the graphdebug.html of the example above:

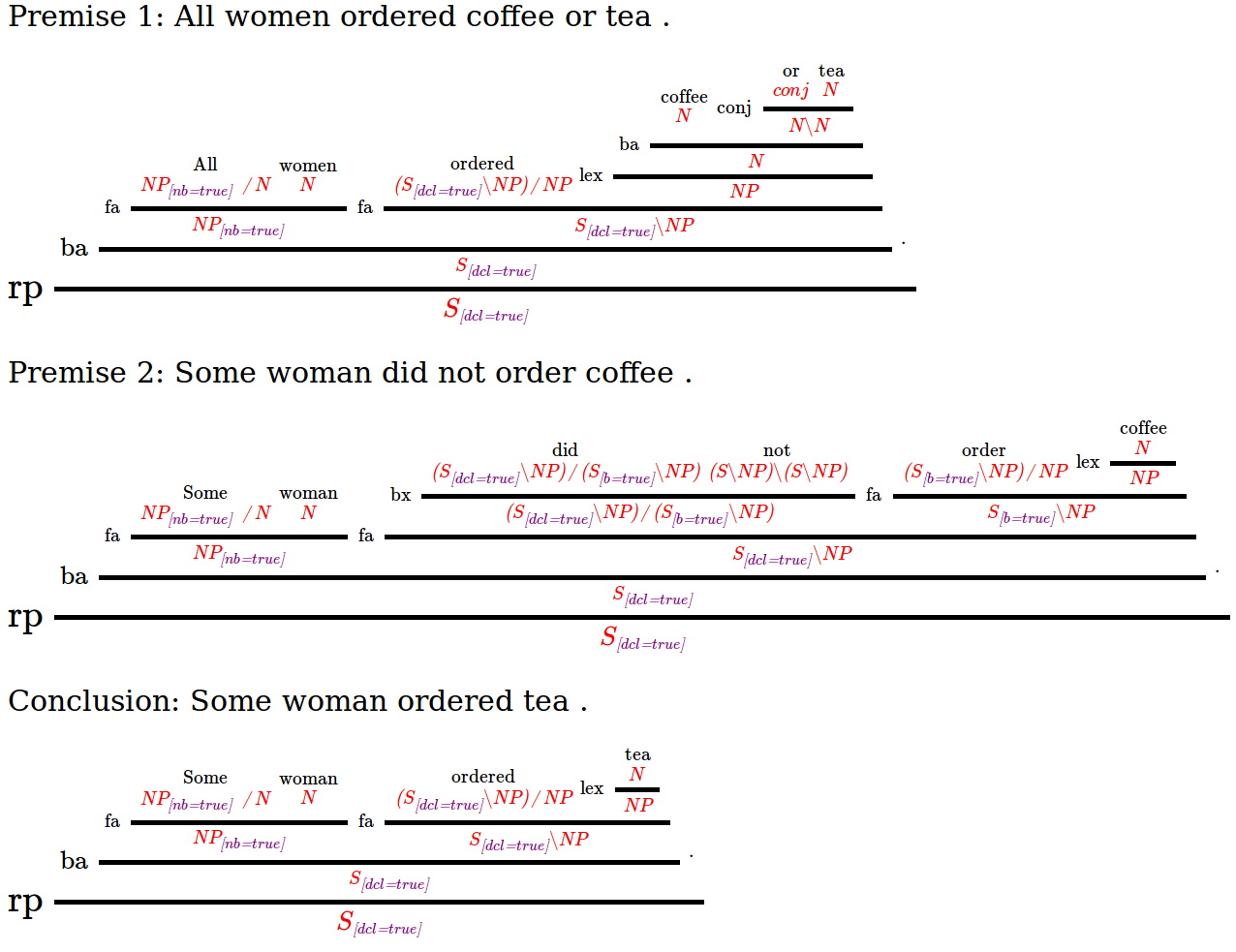

It is also possible to visualize CCG trees, either before or after augmenting them with semantic representations. For example, to visualize the CCG trees only (without semantic representations):

python scripts/visualize.py sentences.xml > sentences.html

Then open the file sentences.html with your favourite web browser. You should be able to see something like this:

If you wish to reproduce our reported results, please follow the instructions below:

- Experiments on FraCaS at EMNLP 2015

- Experiments on JSeM at EMNLP 2016

- Experiments on SICK at EACL 2017

- Experiments on STS for SICK and MSRvid at EMNLP 2017

- Experiments on SICK at NAACL-HLT 2018

You can find one of our semantic templates in en/semantic_templates_en.yaml. Here are some notes:

- Each YAML block is a rule. If the rule matches the attributes of a CCG node, then the semantic template specified by "semantics" is applied. It is possible to write an arbitrary set of field names and their values. For example, you could specify the "category" of the CCG node and the surface form "surf" of a word (in case the CCG node is a leaf). Only the "category" field and the "semantics" field are compulsory.

- If you underspecify the characteristics of a CCG node, the semantic rule will match more general CCG nodes. It is also possible to underspecify the features of syntactic categories.

- If more than one rule applies, only the last one in the file will be applied.

- Any CCG node can be matched by the semantic rules.

- If the rule matches a CCG leaf node, then the base form (or the surface form, if the base form is not present) will be passed as the first argument to the lambda expression of the semantic template (specified by the "semantics" field in the YAML rule).

- If the rule matches a CCG node with only one child, then the semantic expression of the child will be passed as the first argument to the semantic template assigned to this CCG node.

- If the rule matches a CCG node with two children, then the semantic expressions of the left and right children will be passed as the first and second arguments to the semantic template of this CCG node, respectively.

- Rules that are intended to match inner nodes of the CCG derivation need to specify either:

  - A "rule" attribute, specifying which combination operation was applied.

  - A "child" attribute, specifying at least one attribute of one child (see next point).

- You can specify in a semantic rule the characteristics of the children of the current CCG node. To that end, simply prefix the attribute name with "child0_" or "child1_" for the left or right child, respectively. E.g. if you want to specify the syntactic category of the left child, name that attribute "child0_category" in the semantic rule. You can still underspecify the attributes of the children. If the CCG node only has one child, then it is considered a left child (and its attributes are specified using "child0_" as a prefix).
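To make the matching and argument-passing rules above concrete, here is a toy model in Python. It is a hypothetical sketch, not ccg2lambda's implementation: rules and CCG nodes are plain dicts, each "semantics" template is a Python function standing in for a lambda term (no beta-reduction is performed), feature underspecification is simplified to "a category written without brackets matches any features", and reading the inner-node point as "rules without a rule or child field only match leaves" is our interpretation.

```python
import re

# Hypothetical, simplified model of the semantic rules; not the real code.
CHILD_PREFIXES = ("child0_", "child1_")


def strip_features(category):
    """Drop bracketed features, e.g. 'S[dcl]' -> 'S' (assumed feature syntax)."""
    return re.sub(r"\[[^\]]*\]", "", category)


def node_value(node, field):
    """Read a field; 'child0_'/'child1_' address the left/right child."""
    for index, prefix in enumerate(CHILD_PREFIXES):
        if field.startswith(prefix):
            children = node.get("children", [])
            if index >= len(children):
                return None
            return children[index].get(field[len(prefix):])
    return node.get(field)


def value_matches(field, rule_value, value):
    if value is None:
        return False
    if field.endswith("category") and "[" not in rule_value:
        # Underspecified category: ignore the node's features.
        return strip_features(value) == rule_value
    return rule_value == value


def rule_matches(rule, node):
    is_inner = bool(node.get("children"))
    targets_inner = "rule" in rule or any(f.startswith(CHILD_PREFIXES) for f in rule)
    # Our reading: only rules with "rule" or a child field apply to inner nodes.
    if is_inner and not targets_inner:
        return False
    return all(value_matches(f, v, node_value(node, f))
               for f, v in rule.items() if f != "semantics")


def select_rule(rules, node):
    """If more than one rule matches, the last one in the list wins."""
    selected = None
    for rule in rules:
        if rule_matches(rule, node):
            selected = rule
    return selected


def apply_rule(rule, node, child_sems=()):
    template = rule["semantics"]
    if not node.get("children"):
        # Leaf: pass the base form, or the surface form if no base is present.
        return template(node.get("base", node.get("surf")))
    # One child: a single argument; two children: (left, right).
    return template(*child_sems)


# Toy rules with hypothetical templates.
RULES = [
    {"category": "N", "semantics": lambda base: "\\x._%s(x)" % base},
    {"category": "S", "child0_category": "NP",
     "semantics": lambda left, right: "%s(%s)" % (right, left)},
]

leaf = {"category": "N", "surf": "women", "base": "woman"}
print(apply_rule(select_rule(RULES, leaf), leaf))  # \x._woman(x)
```

A real system would build lambda terms and beta-reduce them after each application; strings keep the sketch focused on which rule is chosen and which arguments it receives.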

If you use this software or the semantic templates for your work, please consider citing it.

- Pascual Martínez-Gómez, Koji Mineshima, Yusuke Miyao, Daisuke Bekki. ccg2lambda: A Compositional Semantics System. Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics - System Demonstrations, pages 85–90, Berlin, Germany, August 7-12, 2016.

@InProceedings{pascual-EtAl:2016:ACL-2016-System-Demonstrations,

author = {Mart\'{i}nez-G\'{o}mez, Pascual and Mineshima, Koji and Miyao, Yusuke and Bekki, Daisuke},

title = {ccg2lambda: A Compositional Semantics System},

booktitle = {Proceedings of ACL 2016 System Demonstrations},

month = {August},

year = {2016},

address = {Berlin, Germany},

publisher = {Association for Computational Linguistics},

pages = {85--90},

url = {https://aclweb.org/anthology/P/P16/P16-4015.pdf}

}

- Hitomi Yanaka, Koji Mineshima, Pascual Martínez-Gómez and Daisuke Bekki. Acquisition of Phrase Correspondences using Natural Deduction Proofs. Proceedings of the 16th Annual Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, New Orleans, Louisiana, 1-6 June 2018.

@InProceedings{yanaka-EtAl:2018:NAACL-HLT,

author = {Yanaka, Hitomi and Mineshima, Koji and Mart\'{i}nez-G\'{o}mez, Pascual and Bekki, Daisuke},

title = {Acquisition of Phrase Correspondences using Natural Deduction Proofs},

booktitle = {Proceedings of the 16th Annual Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies},

month = {June},

year = {2018},

address = {New Orleans, Louisiana},

publisher = {Association for Computational Linguistics},

}

- Hitomi Yanaka, Koji Mineshima, Pascual Martínez-Gómez and Daisuke Bekki. Determining Semantic Textual Similarity using Natural Deduction Proofs. Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, Copenhagen, Denmark, 7-11 September 2017.

@InProceedings{yanaka-EtAl:2017:EMNLP,

author = {Yanaka, Hitomi and Mineshima, Koji and Mart\'{i}nez-G\'{o}mez, Pascual and Bekki, Daisuke},

title = {Determining Semantic Textual Similarity using Natural Deduction Proofs},

booktitle = {Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing},

month = {September},

year = {2017},

address = {Copenhagen, Denmark},

publisher = {Association for Computational Linguistics},

}

- Pascual Martínez-Gómez, Koji Mineshima, Yusuke Miyao, Daisuke Bekki. On-demand Injection of Lexical Knowledge for Recognising Textual Entailment. Proceedings of the 15th Conference of the European Chapter of the Association for Computational Linguistics, pages 710–720, Valencia, Spain, 3-7 April 2017.

@InProceedings{martinezgomez-EtAl:2017:EACLlong,

author = {Mart\'{i}nez-G\'{o}mez, Pascual and Mineshima, Koji and Miyao, Yusuke and Bekki, Daisuke},

title = {On-demand Injection of Lexical Knowledge for Recognising Textual Entailment},

booktitle = {Proceedings of the 15th Conference of the European Chapter of the Association for Computational Linguistics: Volume 1, Long Papers},

month = {April},

year = {2017},

address = {Valencia, Spain},

publisher = {Association for Computational Linguistics},

pages = {710--720},

url = {http://www.aclweb.org/anthology/E17-1067}

}

- Koji Mineshima, Pascual Martínez-Gómez, Yusuke Miyao, Daisuke Bekki. Higher-order logical inference with compositional semantics. Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, pages 2055–2061, Lisbon, Portugal, 17-21 September 2015.

@InProceedings{mineshima-EtAl:2015:EMNLP,

author = {Mineshima, Koji and Mart\'{i}nez-G\'{o}mez, Pascual and Miyao, Yusuke and Bekki, Daisuke},

title = {Higher-order logical inference with compositional semantics},

booktitle = {Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing},

month = {September},

year = {2015},

address = {Lisbon, Portugal},

publisher = {Association for Computational Linguistics},

pages = {2055--2061},

url = {http://aclweb.org/anthology/D15-1244}

}

- Koji Mineshima, Ribeka Tanaka, Pascual Martínez-Gómez, Yusuke Miyao, Daisuke Bekki. Building compositional semantics and higher-order inference system for a wide-coverage Japanese CCG parser. Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, pages 2236–2242, Austin, Texas, 1-5 November 2016.

@InProceedings{D16-1242,

author = "Mineshima, Koji

and Tanaka, Ribeka

and Mart{\'i}nez-G{\'o}mez, Pascual

and Miyao, Yusuke

and Bekki, Daisuke",

title = "Building compositional semantics and higher-order inference system for a wide-coverage {J}apanese {CCG} parser",

booktitle = "Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing",

year = "2016",

publisher = "Association for Computational Linguistics",

pages = "2236--2242",

location = "Austin, Texas",

url = "http://aclweb.org/anthology/D16-1242"

}