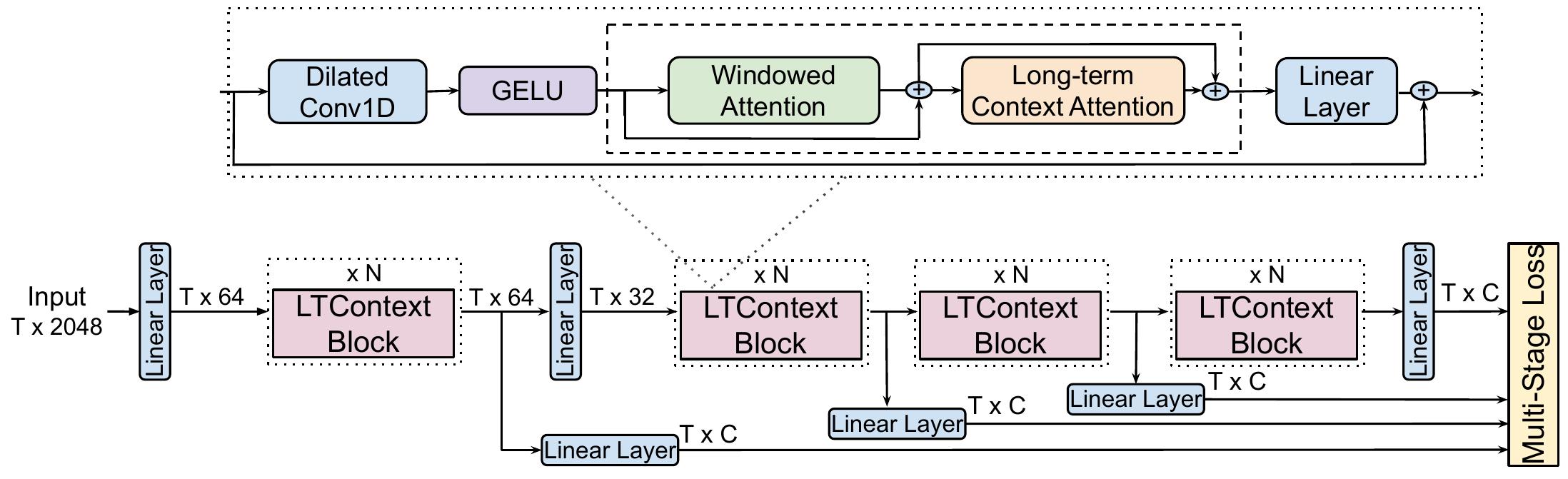

LTContext is an approach for temporal action segmentation. It uses sparse attention to capture the long-term context of a video and windowed attention to model local information in neighboring frames.

Here is an overview of the architecture:

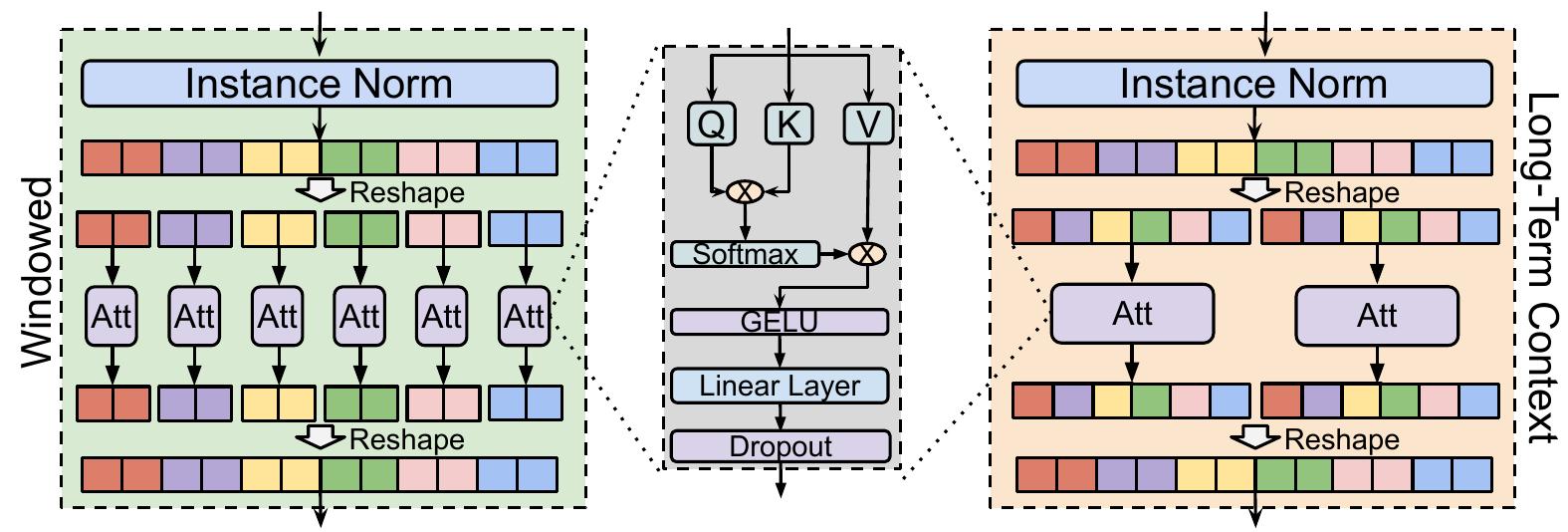

The attention mechanism consists of Windowed Attention and Long-term Context Attention.
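
To make the two attention patterns concrete, here is a minimal sketch of which frames each frame could attend to under a simplified reading of the description above. It is not the implementation in this repository: the function names, the non-overlapping windows, and the fixed stride are assumptions made for illustration only.

```python
def windowed_neighbors(num_frames, window):
    """For each frame, list the frames in the same non-overlapping window.

    Assumption: windows are contiguous blocks of `window` frames.
    """
    neighbors = []
    for t in range(num_frames):
        start = (t // window) * window
        end = min(start + window, num_frames)
        neighbors.append(list(range(start, end)))
    return neighbors


def long_term_neighbors(num_frames, stride):
    """For each frame, list the frames that share its offset modulo `stride`.

    Assumption: sparse long-term attention is modeled as strided sampling.
    """
    return [list(range(t % stride, num_frames, stride)) for t in range(num_frames)]


if __name__ == "__main__":
    T = 8  # hypothetical number of frames
    print(windowed_neighbors(T, window=4)[5])   # local context of frame 5
    print(long_term_neighbors(T, stride=4)[5])  # sparse long-range context of frame 5
```

In this sketch the windowed pattern covers dense local neighborhoods, while the strided pattern reaches across the whole sequence at a fraction of the cost of full attention.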

If you use this code or our model, please cite our paper:

    @inproceedings{ltc2023bahrami,
      author    = {Emad Bahrami and Gianpiero Francesca and Juergen Gall},
      title     = {How Much Temporal Long-Term Context is Needed for Action Segmentation?},
      booktitle = {IEEE International Conference on Computer Vision (ICCV)},
      year      = {2023}
    }

To create the conda environment run the following command:

    conda env create --name ltc --file environment.yml
    source activate ltc

The features and annotations of the Breakfast dataset can be downloaded from link 1 or link 2.

Follow the instructions at Assembly101-Download-Scripts to download the .lmdb TSM features.

The annotations for action segmentation can be downloaded from assembly101-annotations.

After downloading the annotations, put coarse-annotations inside the data/assembly101 folder.

We noticed that loading from NumPy can be faster. You can convert the .lmdb features to NumPy and use the LTContext_Numpy.yaml config.

Here is an example of the command to train the model.

    python run_net.py \
      --cfg configs/Breakfast/LTContext.yaml \
      DATA.PATH_TO_DATA_DIR [path_to_your_dataset] \
      OUTPUT_DIR [path_to_logging_dir]

For more options look at ltc/config/defaults.py.

The value of DATA.PATH_TO_DATA_DIR for assembly101 should be the path to the folder containing the TSM features.
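
The trailing `KEY VALUE` pairs in the training command override entries of the YAML config. The sketch below illustrates that general idea on a plain nested dictionary. It is not the repository's parsing code, which may differ: `apply_overrides`, the default values, and the `/data/breakfast` path are hypothetical.

```python
import ast


def apply_overrides(config, overrides):
    """Apply a flat [KEY, VALUE, KEY, VALUE, ...] list to a nested dict."""
    if len(overrides) % 2 != 0:
        raise ValueError("overrides must come in KEY VALUE pairs")
    for key, raw in zip(overrides[0::2], overrides[1::2]):
        node = config
        *parents, leaf = key.split(".")
        for name in parents:
            node = node[name]  # raises KeyError if the section is missing
        if leaf not in node:
            raise KeyError(f"unknown config key: {key}")
        try:
            value = ast.literal_eval(raw)  # e.g. "False" -> False, "8" -> 8
        except (ValueError, SyntaxError):
            value = raw  # plain strings such as paths are kept as-is
        node[leaf] = value
    return config


if __name__ == "__main__":
    # Hypothetical defaults, standing in for the values in the YAML config.
    cfg = {
        "DATA": {"PATH_TO_DATA_DIR": ""},
        "TRAIN": {"ENABLE": True},
        "OUTPUT_DIR": ".",
    }
    apply_overrides(
        cfg,
        ["DATA.PATH_TO_DATA_DIR", "/data/breakfast", "TRAIN.ENABLE", "False"],
    )
    print(cfg)
```

Rejecting unknown keys, as this sketch does, catches typos in option names before a long training run starts.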

To evaluate a pretrained model, use the following command.

    python run_net.py \
      --cfg configs/Breakfast/LTContext.yaml \
      DATA.PATH_TO_DATA_DIR [path_to_your_dataset] \
      TRAIN.ENABLE False \
      TEST.ENABLE True \
      TEST.DATASET 'breakfast' \
      TEST.CHECKPOINT_PATH [path_to_trained_model] \
      TEST.SAVE_RESULT_PATH [path_to_save_result]

Check the model card to download the pretrained models.

The structure of the code is inspired by SlowFast. The MultiHeadAttention is based on xFormers. We thank the authors of these codebases.

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.