An attempt at improving an existing website.

Using wget, I extracted a copy of the site as it was running on 2020-08-24. The command I used was as follows:

    wget \
      --recursive \
      --page-requisites \
      --adjust-extension \
      --span-hosts \
      --convert-links \
      --restrict-file-names=windows \
      --domains nzxr.dev,googleusercontent.com \
      https://nzxr.dev

This extract doesn't include the webfonts and extraneous CSS served from fonts.google.com, because those responses are user-agent specific.

I ran a local Python web server to get an idea of the footprint of the assets required to run the site off one host. I also disabled JavaScript to get a sense of the site's reliability.
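For reference, a minimal sketch of such a local server using only the Python standard library. The helper name `make_server`, the default port `8000`, and the directory name used in the usage note are assumptions for illustration, not the exact setup I ran.

```python
import functools
import http.server


def make_server(directory, port=8000):
    """Build a static file server for `directory`, bound to localhost only."""
    # SimpleHTTPRequestHandler accepts a `directory` keyword (Python 3.7+),
    # so functools.partial pins it without changing the working directory.
    handler = functools.partial(
        http.server.SimpleHTTPRequestHandler, directory=directory
    )
    return http.server.ThreadingHTTPServer(("127.0.0.1", port), handler)
```

Calling `make_server("nzxr.dev").serve_forever()` would then serve the extract at `http://127.0.0.1:8000/`, assuming wget wrote the pages into a directory named after the host.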

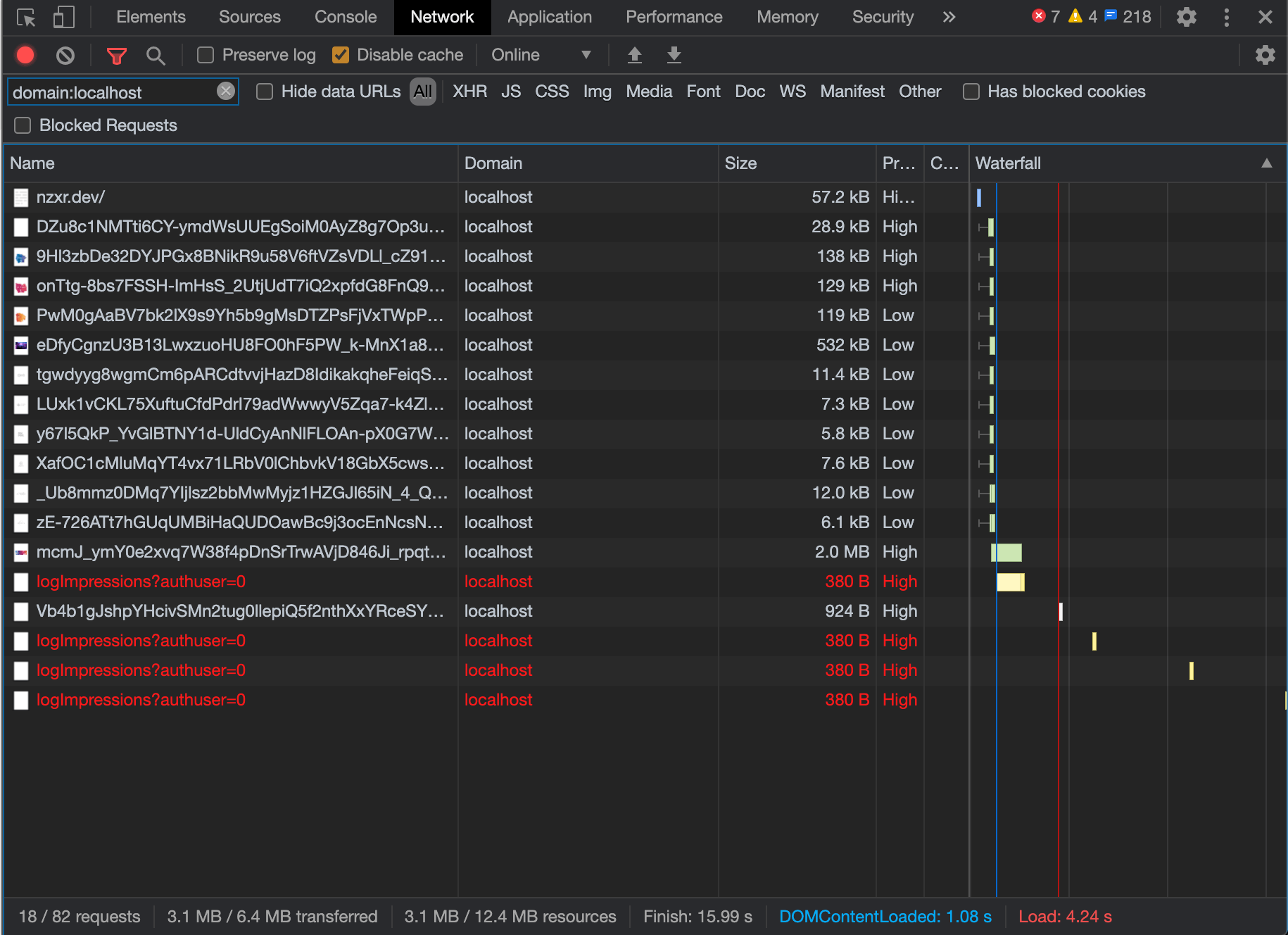

Filtering by domain: localhost, I got the following result:

That's quite a lot for a one-pager, without JavaScript.

To make sure I didn't make any mistakes in the extract, here are two screenshots of the live site vs. my extract:

There are some massive uncompressed images at play here. These will be the first target for reducing the overall weight of the site.

For example, the masthead image alone is 2 MB.
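One way to find the heaviest assets is to walk the extract and sort files by size. This is a rough sketch; the function name `largest_files` and the `limit` parameter are hypothetical, and it reports on-disk sizes only, which may differ from what is transferred over the wire.

```python
import os


def largest_files(root, limit=10):
    """Return up to `limit` (size_in_bytes, path) pairs under `root`, largest first."""
    sizes = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            sizes.append((os.path.getsize(path), path))
    # Tuples sort by size first, so reverse order puts the heaviest files on top.
    sizes.sort(reverse=True)
    return sizes[:limit]
```

Running it against the directory that holds the extract and printing each pair should surface images like the masthead straight away.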

The HTML is a mess of JavaScript and Google proprietary code. A site builder is at play here; could it be Google Sites?

It takes 5 domains to serve the HTML, inline CSS, and images, and that excludes the additional domains required for Google Fonts. Serving these supporting assets over a single warm, open connection would cut response times significantly.
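A domain count like this can be cross-checked by collecting the hosts referenced in the page markup. The sketch below is an assumption-laden illustration: `HostCollector` and `hosts_in_html` are hypothetical names, and it only inspects `src` and `href` attributes, so hosts referenced from CSS or scripts are missed. Because `--convert-links` rewrites downloaded links to relative paths, it should be fed the original live HTML rather than the extract.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class HostCollector(HTMLParser):
    """Collect the hostnames found in src/href attributes."""

    def __init__(self):
        super().__init__()
        self.hosts = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("src", "href") and value:
                # Relative URLs have no hostname and are skipped.
                host = urlparse(value).hostname
                if host:
                    self.hosts.add(host)


def hosts_in_html(markup):
    """Return the set of distinct hosts referenced by `markup`."""
    collector = HostCollector()
    collector.feed(markup)
    return collector.hosts
```

The size of the returned set gives the number of distinct hosts the markup pulls from directly.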

The number of third-party requests is insane. The YouTube video embeds are the worst offender, but the amount of analytics and ad data is creepy too.

If I have enough time, I'll see what can be done to drain the swamp.