!!! Note: this forked project runs only one slave container, hadoop-slave1.

git clone https://github.com/neoremind/hadoop-cluster-docker

docker network create --driver=bridge hadoop

cd hadoop-cluster-docker && docker build -t xuzh/hadoop:1.0 .

You can check that the image was built by running:

$ docker images
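If you would rather script this check than read the listing by eye, something like the following might work. It is only a sketch: `has_image` is a hypothetical helper name, and it assumes you feed it one `repository:tag` per line, which `docker images --format '{{.Repository}}:{{.Tag}}'` produces.

```shell
#!/bin/sh
# has_image REPO:TAG
# Reads "repository:tag" lines on stdin and succeeds if REPO:TAG is among them.
# (Hypothetical helper, not part of this project.)
has_image() {
    # -F: fixed string, -x: whole line must match, -q: no output
    grep -Fxq -- "$1"
}

# Usage (requires Docker):
#   docker images --format '{{.Repository}}:{{.Tag}}' | has_image xuzh/hadoop:1.0 \
#       && echo "image is built"
```

Matching the whole line keeps a tag such as `xuzh/hadoop:1.0.1` from being mistaken for `xuzh/hadoop:1.0`.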

cd hadoop-cluster-docker

./start-container.sh

Output:

start hadoop-master container...

start hadoop-slave1 container...

root@hadoop-master:~#

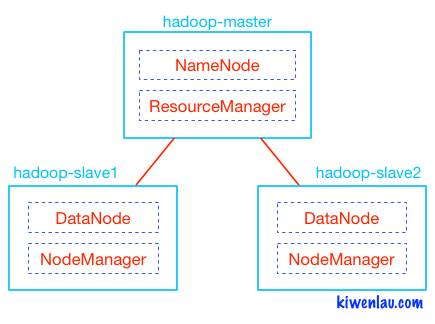

- starts 2 containers: 1 master and 1 slave

- you will land in the /root directory of the hadoop-master container

You can check that both containers are running with:

$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

e3f5ad6ebac0 xuzh/hadoop:1.0 "sh -c 'service ssh s" 7 hours ago Up 7 hours 0.0.0.0:8042->8042/tcp, 0.0.0.0:8090-8091->8090-8091/tcp, 0.0.0.0:8190-8191->8190-8191/tcp hadoop-slave1

c5c094753715 xuzh/hadoop:1.0 "sh -c 'service ssh s" 7 hours ago Up 7 hours 0.0.0.0:8088->8088/tcp, 0.0.0.0:50070->50070/tcp hadoop-master
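The same check can be automated by looking for the two container names shown in the NAMES column above. A minimal sketch, assuming the names are passed in one per line (as `docker ps --format '{{.Names}}'` prints them); `containers_up` is a hypothetical helper name.

```shell
#!/bin/sh
# containers_up NAME...
# Reads container names (one per line) on stdin and succeeds only if every
# NAME given as an argument is listed. (Hypothetical helper.)
containers_up() {
    names=$(cat)
    for want in "$@"; do
        if ! printf '%s\n' "$names" | grep -Fxq -- "$want"; then
            echo "not running: $want" >&2
            return 1
        fi
    done
    return 0
}

# Usage (requires Docker):
#   docker ps --format '{{.Names}}' | containers_up hadoop-master hadoop-slave1
```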

./start-hadoop.sh

./start-historyserver.sh

Add the following entries to /etc/hosts on the host machine:

127.0.0.1 hadoop-master

127.0.0.1 hadoop-slave1
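If you set this up more than once, appending by hand tends to leave duplicate lines. The sketch below adds an entry only when the host name is not already mapped. `add_host_entry` is a hypothetical helper name, and writing to the real /etc/hosts usually requires root.

```shell
#!/bin/sh
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless a non-comment line already maps NAME.
# (Hypothetical helper, not part of this project.)
add_host_entry() {
    file=$1
    ip=$2
    name=$3
    # Look for NAME as a whole field after some whitespace, ignoring comments.
    if grep -Eq "^[^#]*[[:space:]]$name([[:space:]]|\$)" "$file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage (as root):
#   add_host_entry /etc/hosts 127.0.0.1 hadoop-master
#   add_host_entry /etc/hosts 127.0.0.1 hadoop-slave1
```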

http://hadoop-master:8088

If the page opens in your browser, the setup is complete.
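The page may take a little while to respond after the start scripts return. As a rough sketch, you could poll the URL from the command line instead of refreshing the browser. `wait_for_url` is a hypothetical helper name, the retry count and the 2-second delay are arbitrary choices, and `curl` is assumed to be installed on the host.

```shell
#!/bin/sh
# wait_for_url URL [TRIES]
# Polls URL until curl gets a non-error HTTP response, trying up to TRIES
# times (default 30) and sleeping 2 seconds after each failed attempt.
# (Hypothetical helper.)
wait_for_url() {
    url=$1
    tries=${2:-30}
    i=0
    while [ "$i" -lt "$tries" ]; do
        # -s: silent, -f: treat HTTP errors (>= 400) as failure, -o: discard body
        if curl -sf -o /dev/null "$url"; then
            return 0
        fi
        i=$((i + 1))
        sleep 2
    done
    return 1
}

# Usage:
#   wait_for_url http://hadoop-master:8088 && echo "web UI is reachable"
```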

docker stop <CONTAINER_ID>

or

docker rm -f <CONTAINER_ID>
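Docker also accepts container names in place of IDs, so both containers can be removed without looking up their IDs first. A small sketch using the names from the `docker ps` listing above; `remove_cluster` and the `DOCKER` override are hypothetical conveniences (setting `DOCKER=echo` gives a dry run).

```shell
#!/bin/sh
# Command used to talk to Docker; override with DOCKER=echo for a dry run.
DOCKER=${DOCKER:-docker}

# remove_cluster
# Force-removes the two containers started by start-container.sh.
# (Hypothetical helper; container names taken from the docker ps output.)
remove_cluster() {
    for name in hadoop-master hadoop-slave1; do
        "$DOCKER" rm -f "$name"
    done
}

# Usage (requires Docker):
#   remove_cluster
```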