This codebase contains the implementation of Communication-Aware DNN Pruning (INFOCOM 2023).

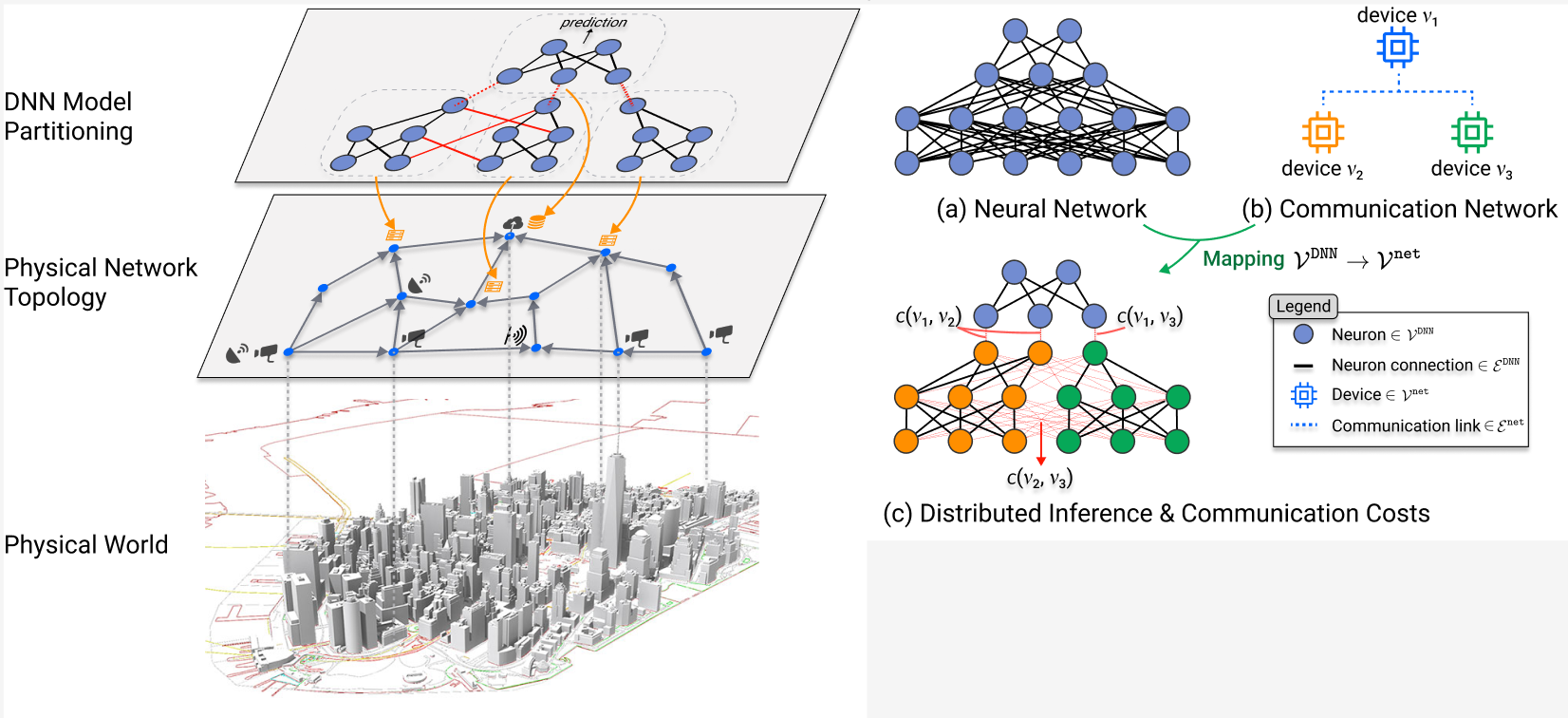

We propose Communication-aware Pruning (CaP), a novel distributed inference framework for distributing DNN computations across a physical network. Departing from conventional pruning methods, CaP takes the physical network topology into consideration and produces DNNs that are communication-aware, designed for both accurate and fast execution over such a distributed deployment. Our experiments on CIFAR-10 and CIFAR-100, two deep learning benchmark datasets, show that CaP beats state-of-the-art competitors by up to 4% in accuracy. In experiments over real-world scenarios, it simultaneously reduces total execution time by 27%--68% at a negligible performance decrease (less than 1%).

Please install either Python 3.9.x or 3.10.x and create a virtual environment using the requirements.txt file.

We provide a sample bash script to run our method at 0.75 sparsity ratio on CIFAR-10.
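For intuition about what a 0.75 sparsity ratio means, the following is a minimal sketch of plain magnitude pruning on a flat list of weights. It is not the CaP algorithm (which also accounts for network topology) and is not taken from this repository; the function name `magnitude_prune` and the sample weights are made up for illustration.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude entries so that a `sparsity`
    fraction of the weights becomes zero. Generic magnitude pruning,
    used here only as a stand-in to illustrate the sparsity ratio."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Hypothetical weights; 0.75 sparsity keeps only the 2 largest of 8.
weights = [0.9, -0.05, 0.3, -0.7, 0.01, 0.2, -0.15, 0.6]
pruned = magnitude_prune(weights, 0.75)
achieved = sum(1 for w in pruned if w == 0.0) / len(pruned)
print(pruned, achieved)
```

At 0.75 sparsity, three quarters of the weights are zeroed and only the remaining quarter has to be stored, computed, or transmitted.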

To run CaP:

source env.sh

run-cifar10-resnet18.sh

Model inference over a network can be emulated by starting multiple threads on a local or remote machine. For Windows users, it is assumed that WSL or another bash emulator is installed. The procedure for making a run is as follows:

- Train and prune the model

- Save the model in the assets/models folder

- Create the network setup files (see config/resnet_4_network as example):

i. config-leaf.json -- indicates how to reach (via IP and port) the leaf nodes of the network for input transmission

ii. ip-map.json -- indicates the server (IP and port) that each node monitors for incoming connections

iii. network-graph.json -- defines the network graph topology

- Update local_network/start_servers.sh and local_network/start_server_helper.bat (Windows) or local_network/start_servers_linux.sh (Linux) with the following:

i. file paths to network setup files

ii. the python environment activation

iii. terminal/bash emulator (e.g. gnome-terminal, terminator, etc.).

iv. model name

- Set up the servers (from directory ./CaP):

# Windows (with wsl)

bash local_network/start_servers.sh

# Linux

bash local_network/start_servers_linux.sh

- Activate python environment

- Send inputs (WARNING: only works for CIFAR-10 inputs):

# Windows

python -m source.utils.send_leaf_split_model [path to config-leaf.json]

# Linux

python -m source.utils.send_leaf_split_model [path to config-leaf.json]

Outputs will appear in the logs/[dir log out] folder specified in the start servers script. Post-processing and visualization tools are found in sandbox/plot_timing.ipynb.
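The emulation idea used in this procedure (several threads on one machine, each acting as a network node that listens on its own port) can be illustrated with a minimal sketch. This is not the repository's server code: the helper names (`make_listener`, `node`, `send`, `run_chain`) and the toy stage functions are hypothetical, and the stages stand in for partitions of a split model.

```python
import json
import socket
import threading

HOST = "127.0.0.1"


def make_listener():
    """Bind to a free local port and listen before any thread starts."""
    srv = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    srv.bind((HOST, 0))  # port 0 lets the OS pick a free port
    srv.listen(1)
    return srv


def recv_all(conn):
    """Read until the sender closes its side of the connection."""
    chunks = []
    while True:
        data = conn.recv(4096)
        if not data:
            break
        chunks.append(data)
    return b"".join(chunks)


def send(addr, values):
    """Send a JSON-encoded list to the node listening at `addr`."""
    with socket.create_connection(addr) as s:
        s.sendall(json.dumps(values).encode())


def node(srv, work, next_addr, results):
    """Accept one message, apply this node's stage, then forward or store."""
    conn, _ = srv.accept()
    with conn:
        values = json.loads(recv_all(conn).decode())
    srv.close()
    out = [work(v) for v in values]
    if next_addr is None:
        results.append(out)  # last node in the chain keeps the output
    else:
        send(next_addr, out)


def run_chain(stages, inputs):
    """Run each stage in its own thread and push `inputs` through the chain."""
    listeners = [make_listener() for _ in stages]
    addrs = [srv.getsockname() for srv in listeners]
    results, threads = [], []
    for i, (srv, work) in enumerate(zip(listeners, stages)):
        next_addr = addrs[i + 1] if i + 1 < len(stages) else None
        t = threading.Thread(
            target=node, args=(srv, work, next_addr, results), daemon=True
        )
        t.start()
        threads.append(t)
    send(addrs[0], inputs)  # plays the role of sending inputs to a leaf node
    for t in threads:
        t.join(timeout=10)
    return results[0]


# Two toy stages standing in for two partitions of a split model.
output = run_chain([lambda x: x + 1, lambda x: x * 2], [1, 2, 3])
print(output)
```

All listeners are bound before any thread connects, which avoids a startup race; in the actual procedure the server start scripts and ip-map.json play that role.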

Example Colosseum run procedure (TODO: generalize, add detail, and verify that it works):

- connect to VPN via cisco

- make reservation

- wait until srn nodes are spun up

- manually configure colosseum/nodes.txt

- wait until srn nodes are running

- open bash session in CaP/colosseum repo

- move repo to srn nodes, start rf, and collect ip addresses: bash ./setup.sh nodes_test.txt cifar10-resnet18-kernel-npv2-pr0.75-lcm0.001

- start servers for running split model: bash ./start_servers_colosseum.sh "./nodes_test.txt" "./ip-map.json" "./network-graph.json" "cifar10-resnet18-kernel-npv2-pr0.75-lcm0.001.pt"

- send inputs to leaf nodes:

open windows terminal in CaP repo

conda activate cap_nb

source env.sh

python -m send_leaf_split_model colosseum/config-leaf.json

OR

gnome-terminal -- bash -c "sshpass -p ChangeMe ssh genesys-115 'cd /root/CaP && source env.sh && source ../cap-310/bin/activate && python3 -m send_leaf_split_model colosseum/config-leaf.json; bash '" &

@article{jian2023cap,

title={Communication-Aware DNN Pruning},

author={Jian, Tong and Roy, Debashri and Salehi, Batool and Soltani, Nasim and Chowdhury, Kaushik and Ioannidis, Stratis},

journal={INFOCOM},

year={2023}

}