A Kaggle competition was held at CentraleSupélec between teams of students; the goal was to build the algorithm that reaches the highest accuracy (measured with the Dice coefficient) when segmenting images taken from small UAVs.

We had to implement and solve a remote sensing problem using recent deep learning techniques. In particular, the main objective of this challenge was to segment images acquired by a small UAV (sUAV) over the Houston, Texas area. These images were acquired to assess the damage to residential and public properties after Hurricane Harvey. In total, there are 25 categories of segments (e.g. roof, trees, pools).

The task was to design and implement a deep learning model that automatically segments such images. The model could be trained on the training images, which come with pixel-wise annotations. The trained model was then used to predict segmentations for the test images, and the predictions were submitted to the platform. Submissions were ranked on the leaderboard by macro F1 score.

The following classes are present in the dataset:

- Background

- Property Roof

- Secondary Structure

- Swimming Pool

- Vehicle

- Grass

- Trees / Shrubs

- Solar Panels

- Chimney

- Street Light

- Window

- Satellite Antenna

- Garbage Bins

- Trampoline

- Road/Highway

- Under Construction / In Progress Status

- Power Lines & Cables

- Water Tank / Oil Tank

- Parking Area - Commercial

- Sports Complex / Arena

- Industrial Site

- Dense Vegetation / Forest

- Water Body

- Flooded

- Boat

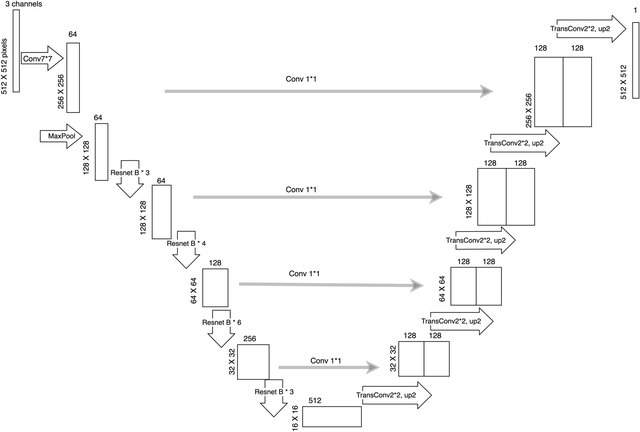

The model was based on the U-Net architecture.

The model achieved 71% accuracy on a training dataset of ~250 images, without using any pre-trained ResNet network inside the U-Net.

The "accuracy" was computed according to the Sørensen-Dice coefficient.
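
For reference, the Sørensen-Dice coefficient of a class is twice the overlap between the predicted and annotated pixels, divided by the total number of pixels in both masks. For a single class this is the same quantity as the F1 score, so averaging it over classes gives a macro F1. The snippet below is a minimal NumPy sketch of that computation, not the competition's scoring code: the function names `dice_per_class` and `macro_dice`, the integer label encoding, and the choice to skip classes absent from both masks are all assumptions made for illustration.

```python
import numpy as np


def dice_per_class(y_true, y_pred, num_classes=25):
    """Return the Dice coefficient of each class for two integer label maps.

    Hypothetical helper: assumes each pixel holds a class index in
    [0, num_classes). Classes absent from both masks are left as NaN.
    """
    y_true = np.asarray(y_true).ravel()
    y_pred = np.asarray(y_pred).ravel()
    scores = np.full(num_classes, np.nan)
    for c in range(num_classes):
        true_c = y_true == c
        pred_c = y_pred == c
        denom = true_c.sum() + pred_c.sum()
        if denom > 0:
            # Dice = 2 * |A ∩ B| / (|A| + |B|)
            scores[c] = 2.0 * np.logical_and(true_c, pred_c).sum() / denom
    return scores


def macro_dice(y_true, y_pred, num_classes=25):
    """Average the per-class Dice scores, ignoring classes absent from both masks."""
    return float(np.nanmean(dice_per_class(y_true, y_pred, num_classes)))


# Toy 2x3 label maps with three classes (0, 1, 2)
truth = np.array([[0, 0, 1], [1, 2, 2]])
pred = np.array([[0, 1, 1], [1, 2, 0]])
scores = dice_per_class(truth, pred, num_classes=3)
print(scores, macro_dice(truth, pred, num_classes=3))
```

With 25 classes and small objects such as chimneys or street lights, a macro average weights rare classes as heavily as common ones like grass or road, so the way absent classes are handled can noticeably shift the final score.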