[Embedded in AREkit-0.20.0 and later versions]

UPD December 7th, 2019: this attention model has become a part of the AREkit framework (original, interactive). Please use that framework for an embedded implementation.

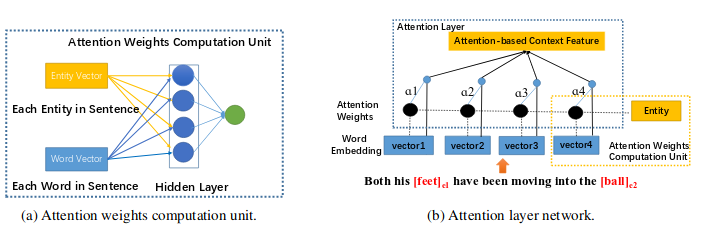

This project is an unofficial implementation of MLP attention, a multilayer perceptron attention network proposed by Yatian Shen and Xuanjing Huang for the Relation Extraction task [paper].
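To make the idea concrete, here is a minimal NumPy sketch of a generic MLP (additive) attention layer that scores each term of a context against an entity vector. It is not the code of this repository, and the exact formulation in the paper may differ. The function name `mlp_attention`, the parameter names, and all dimensions are assumptions made for illustration.

```python
import numpy as np


def mlp_attention(terms, entity, w_hidden, b_hidden, w_out):
    """Generic MLP attention sketch (hypothetical helper, not the repo API).

    terms:    (n, d)   term vectors of one context
    entity:   (d_e,)   vector of the entity the terms are scored against
    w_hidden: (d + d_e, h), b_hidden: (h,), w_out: (h,)
    Returns attention weights of shape (n,) and the weighted sum of terms (d,).
    """
    n = terms.shape[0]
    # Pair every term with the same entity vector.
    pairs = np.concatenate([terms, np.tile(entity, (n, 1))], axis=1)
    # One hidden layer with tanh, then a linear projection to a scalar score.
    hidden = np.tanh(pairs @ w_hidden + b_hidden)
    scores = hidden @ w_out
    # Numerically stable softmax over the terms of the context.
    exp_scores = np.exp(scores - scores.max())
    weights = exp_scores / exp_scores.sum()
    return weights, weights @ terms


# Toy usage with random parameters (sizes are arbitrary).
rng = np.random.default_rng(0)
terms = rng.normal(size=(5, 4))
entity = rng.normal(size=4)
weights, context = mlp_attention(
    terms,
    entity,
    w_hidden=rng.normal(size=(8, 6)),
    b_hidden=np.zeros(6),
    w_out=rng.normal(size=6),
)
```

The weights sum to one over the terms of the context, so `context` is a convex combination of the term vectors.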

Vector representation of words and entities includes:

- Term embedding;

- Part-Of-Speech (POS) embedding;

- Distance embedding;
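The three components above are typically combined by concatenation. The following is a minimal NumPy sketch under stated assumptions: the table sizes, the helper name `embed_terms`, and the use of a single distance feature (distance to one entity, clipped to a fixed window) are illustrative choices and are not taken from this repository.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
VOCAB_SIZE, TERM_DIM = 100, 8
POS_TAGS, POS_DIM = 12, 3
MAX_DIST, DIST_DIM = 10, 2

# Randomly initialised lookup tables standing in for trained embeddings.
term_table = rng.normal(size=(VOCAB_SIZE, TERM_DIM))
pos_table = rng.normal(size=(POS_TAGS, POS_DIM))
# One row per clipped distance in [-MAX_DIST, MAX_DIST].
dist_table = rng.normal(size=(2 * MAX_DIST + 1, DIST_DIM))


def embed_terms(term_ids, pos_ids, entity_position):
    """Return one vector per term: [term ; POS ; distance-to-entity]."""
    term_ids = np.asarray(term_ids)
    pos_ids = np.asarray(pos_ids)
    positions = np.arange(len(term_ids))
    # Signed distance to the entity, clipped and shifted to a valid row index.
    dist_ids = np.clip(positions - entity_position, -MAX_DIST, MAX_DIST) + MAX_DIST
    return np.concatenate(
        [term_table[term_ids], pos_table[pos_ids], dist_table[dist_ids]],
        axis=1,
    )


# Four terms, with the entity at index 2.
vectors = embed_terms([5, 17, 42, 3], [1, 4, 2, 1], entity_position=2)
```

Each row of `vectors` has `TERM_DIM + POS_DIM + DIST_DIM` components; the row at the entity position uses the zero-distance embedding.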

See also the following related repositories: NLDB-2020 paper/code; WIMS-2020 paper/code.

This version has been embedded in AREkit-[0.20.3] and has become a part of the following papers:

- Attention-Based Neural Networks for Sentiment Attitude Extraction using Distant Supervision [ACM-DOI] / [presentation]
    - Rusnachenko Nicolay, Loukachevitch Natalia
    - WIMS-2020

- Studying Attention Models in Sentiment Attitude Extraction Task [Springer] / [arXiv:2006.11605] / [presentation]
    - Rusnachenko Nicolay, Loukachevitch Natalia
    - NLDB-2020

- Attention-Based Convolutional Neural Network for Semantic Relation Extraction [paper]
    - Yatian Shen and Xuanjing Huang
    - COLING 2016