Crawlie is a simple Elixir library for writing decently-performing crawlers with minimum effort.

See the crawlie_example project.

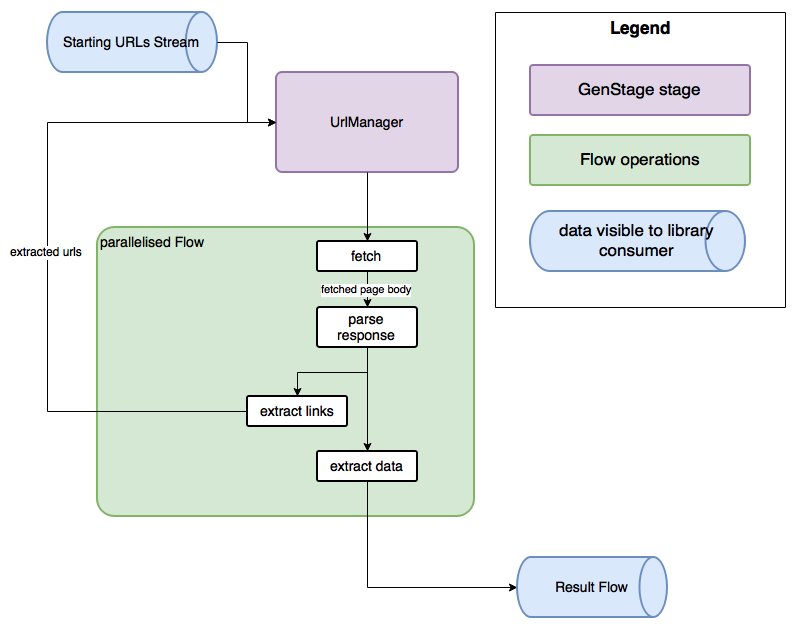

Crawlie uses Elixir's GenStage to parallelise the work. Most of the logic is handled by `Crawlie.Stage.UrlManager`, which consumes the url collection passed by the user, receives the urls extracted by the subsequent processing, makes sure no url is processed more than once, and keeps the "discovered urls" collection as small as possible by traversing the url tree in a roughly depth-first manner.
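The bookkeeping described above (deduplication plus roughly depth-first ordering) can be sketched with a "seen" set and a stack. This is only an illustration under assumed names: `FrontierSketch` and its functions are hypothetical and are not Crawlie's actual `UrlManager` implementation.

```elixir
defmodule FrontierSketch do
  # Hypothetical illustration; not Crawlie's actual UrlManager.
  defstruct stack: [], seen: MapSet.new()

  # Seed the frontier with the user-supplied urls.
  def new(urls), do: add(%__MODULE__{}, urls)

  # Push only urls that were never seen before onto the top of the stack.
  def add(%__MODULE__{} = frontier, urls) do
    Enum.reduce(urls, frontier, fn url, acc ->
      if MapSet.member?(acc.seen, url) do
        acc
      else
        %{acc | stack: [url | acc.stack], seen: MapSet.put(acc.seen, url)}
      end
    end)
  end

  # Pop the most recently added url; LIFO order gives a depth-first traversal.
  def next(%__MODULE__{stack: []}), do: :empty

  def next(%__MODULE__{stack: [url | rest]} = frontier) do
    {url, %{frontier | stack: rest}}
  end
end
```

Because newly discovered urls are popped before older ones, the crawl finishes a branch before widening, which tends to keep the pending collection small.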

The urls are requested from the `Crawlie.Stage.UrlManager` by a GenStage Flow, which fetches the urls in parallel using HTTPoison and parses the responses using user-provided callbacks. Discovered urls get sent back to the `UrlManager`.
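The shape of that fetch-and-parse step can be sketched using only the standard library. Note the assumptions: `Task.async_stream/3` stands in for the Flow, a plain `fetch` function stands in for HTTPoison, and the callback names `parse/1`, `extract_urls/1` and `extract_data/1` are hypothetical rather than Crawlie's real callback signatures.

```elixir
defmodule PipelineSketch do
  # Hypothetical illustration; Crawlie itself uses a GenStage Flow and HTTPoison.

  # `fetch` is a function taking a url and returning a body.
  # `parser` is a module exporting parse/1, extract_urls/1 and extract_data/1.
  def process(urls, fetch, parser) do
    urls
    |> Task.async_stream(
      fn url ->
        parsed = parser.parse(fetch.(url))
        {parser.extract_data(parsed), parser.extract_urls(parsed)}
      end,
      max_concurrency: 4
    )
    # Collect the extracted data and the discovered urls separately;
    # in a real crawler the urls would go back to the url manager.
    |> Enum.reduce({[], []}, fn {:ok, {data, found}}, {all_data, all_urls} ->
      {all_data ++ data, all_urls ++ found}
    end)
  end
end

defmodule WordCountParser do
  # Example user-provided callbacks: count words, treat "http..." tokens as links.
  def parse(body), do: String.split(body)
  def extract_urls(words), do: Enum.filter(words, &String.starts_with?(&1, "http"))
  def extract_data(words), do: [length(words)]
end
```

A call such as `PipelineSketch.process(urls, fetch, WordCountParser)` returns a `{data, discovered_urls}` tuple, mirroring how results flow out while new urls flow back.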

Here's a rough diagram:

If you're interested in the crawling statistics or want to track the progress in real time, see Crawlie.crawl_and_track_stats/3. It starts a Stats GenServer in Crawlie's supervision tree, which accumulates the statistics for the crawling session.
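To illustrate the general idea of a GenServer that accumulates statistics during a crawl, here is a minimal sketch. The module `StatsSketch`, its functions, and the shape of the stats map are all assumptions made for illustration; they do not describe Crawlie's actual Stats server or its API.

```elixir
defmodule StatsSketch do
  # Hypothetical illustration; not Crawlie's actual Stats server.
  use GenServer

  def start_link(opts \\ []), do: GenServer.start_link(__MODULE__, :ok, opts)

  # Record one fetched page together with its HTTP status code.
  def record(server, status), do: GenServer.cast(server, {:record, status})

  # Read a snapshot of the statistics, possibly while the crawl is still running.
  def get_stats(server), do: GenServer.call(server, :get_stats)

  @impl true
  def init(:ok), do: {:ok, %{fetched: 0, by_status: %{}}}

  @impl true
  def handle_cast({:record, status}, stats) do
    by_status = Map.update(stats.by_status, status, 1, &(&1 + 1))
    {:noreply, %{stats | fetched: stats.fetched + 1, by_status: by_status}}
  end

  @impl true
  def handle_call(:get_stats, _from, stats), do: {:reply, stats, stats}
end
```

Since the state lives in its own process, any other process can ask for a snapshot at any time, which is what makes real-time progress tracking possible.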

See the docs for supported options.

The package can be installed as:

- Add `crawlie` to your list of dependencies in `mix.exs`:

  ```elixir
  def deps do
    [{:crawlie, "~> 1.0.0"}]
  end
  ```

- Ensure `crawlie` is started before your application:

  ```elixir
  def application do
    [applications: [:crawlie]]
  end
  ```