A collection of AWESOME things about HUGE AI models.

There is a trend, led by big companies, of training large-scale deep learning models (in terms of parameters, dataset size, and FLOPs). These models achieve state-of-the-art (SoTA) performance at a high price, relying on many training tricks and on distributed training systems. Keeping an eye on this trend informs us of the current boundaries of AI models. [Intro in Chinese]

To support open-source LLM development, we highlight the open-source LLMs here:

LLaMA-65B, GLM-130B, BLOOM-176B, OPT-175B, T5-11B, UL2-20B, RWKV-14B, Cerebras-GPT-13B, Dolly-12B.

- A Dive into Vision-Language Models

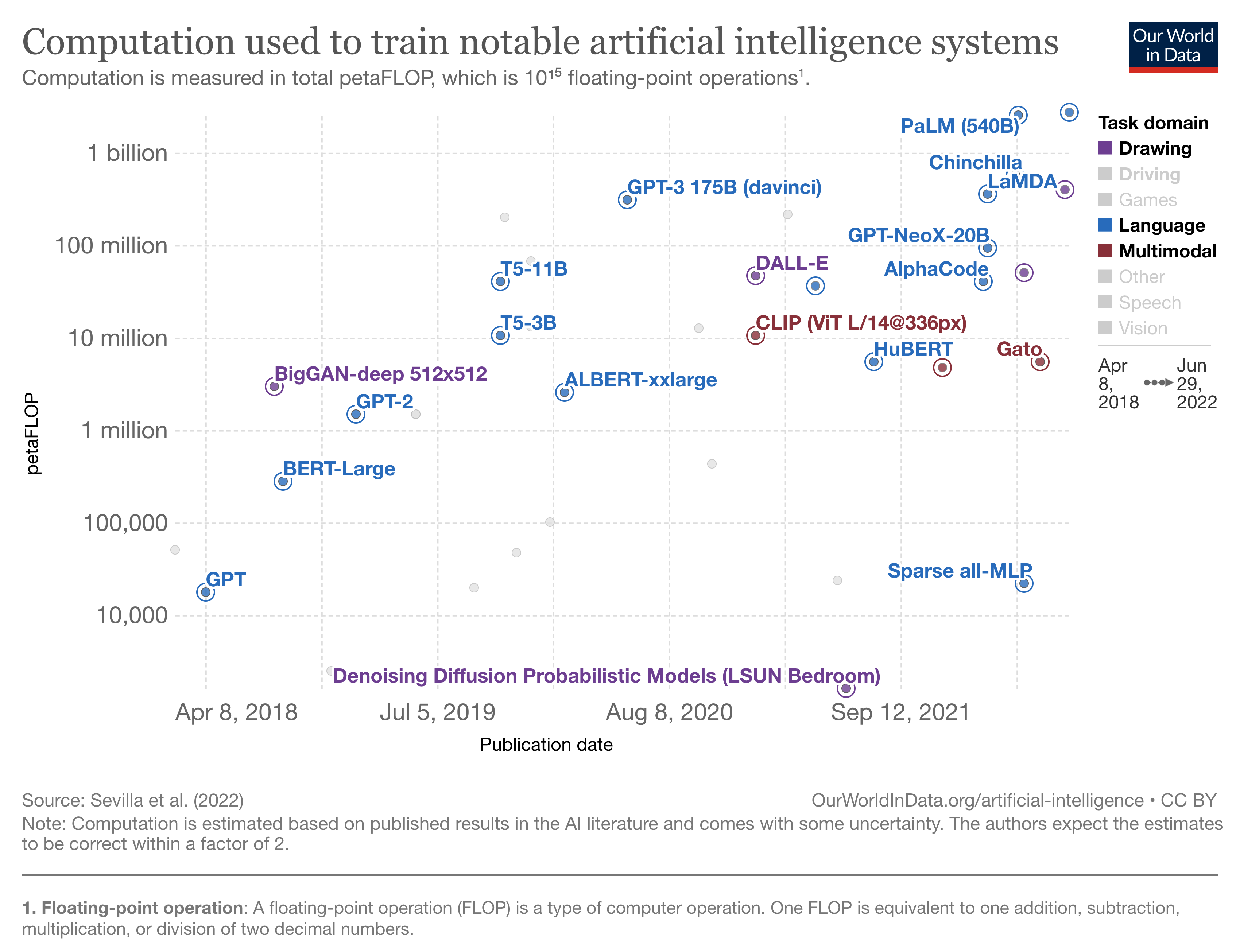

- Compute Trends Across Three Eras of Machine Learning [chart]

- A Roadmap to Big Model

- On the Opportunities and Risks of Foundation Models

- Pre-Trained Models: Past, Present and Future

-

StableLM [Stability AI] Apr. 2023 [open]

Field: Language Params: 3B, 7B, 15B, 30B, 65B, 175B Training Data: The Pile (1.5T tokens)

-

Dolly 2.0 [Databricks] Apr. 2023 [open]

Field: Language Params: 12B Training Data: databricks-dolly-15k

-

Cerebras-GPT [Cerebras] Mar. 2023 [open]

Cerebras-GPT: Open Compute-Optimal Language Models Trained on the Cerebras Wafer-Scale Cluster [Preprint]

Field: Language Params: 13B Training Data: (371B tokens)

-

GPT-4 [OpenAI] Mar. 2023 [close]

GPT-4 Technical Report [Preprint]

Field: Language-Vision

-

LLaMA [Meta] Feb. 2023 [open]

LLaMA: Open and Efficient Foundation Language Models [Preprint]

Field: Language Params: 65B Training Data: 4TB (1.4T tokens) Training Cost: 1,022,362 A100 GPU hours (2048 80G-A100 x 21 days) Training Power Consumption: 449 MWh

-

RWKV-4-14B [Personal] Dec. 2022 [open]

Field: Language Params: 14B Training Data: (332B tokens)

-

AnthropicLM [Anthropic] Dec. 2022 [close]

Constitutional AI: Harmlessness from AI Feedback

Field: Language Params: 52B

-

BLOOM [BigScience] Nov. 2022 [open]

BLOOM: A 176B-Parameter Open-Access Multilingual Language Model [Preprint]

Field: Language Params: 176B Training Data: 174GB (336B tokens) Training Cost: 1M A100 GPU hours = 384 80G-A100 x 4 months Training Power Consumption: 475 MWh Training Framework: Megatron + DeepSpeed

-

Galactica [Meta] Nov. 2022 [open]

A scientific language model trained on over 48 million scientific texts [Preprint]

Field: Language Params: 125M, 1.3B, 6.7B, 30B, 120B

-

Pythia [EleutherAI] Oct. 2022 [open]

Field: Language Params: 12B

-

GLM-130B [BAAI] Oct. 2022 [open]

GLM-130B: An Open Bilingual Pre-trained Model [ICLR'23]

Field: Language Params: 130B Training Data: (400B tokens) Training Cost: 516,096 A100 hours = 768 40G-A100 x 28 days Training Framework: Megatron + DeepSpeed

-

UL2 [Google] May 2022 [open]

Unifying Language Learning Paradigms [Preprint]

Field: Language Params: 20B Training Data: 800GB (1T tokens) Architecture: En-De Training Framework: Jax + T5x

-

OPT [Meta] May 2022 [open]

OPT: Open Pre-trained Transformer Language Models [Preprint]

Field: Language Params: 175B Training Data: 800GB (180B tokens) Training Cost: 809,472 A100 hours = 992 80G-A100 x 34 days Training Power Consumption: 356 MWh Architecture: De Training Framework: Megatron + Fairscale

-

PaLM [Google] Apr. 2022 [close]

PaLM: Scaling Language Modeling with Pathways [Preprint]

Field: Language Params: 540B Training Data: 3TB (780B tokens) Training Cost: $10M (16,809,984 TPUv4core-hours, 64 days) Training petaFLOPs: 2.5B Architecture: De Training Framework: Jax + T5x

-

GPT-NeoX [EleutherAI] Apr. 2022 [open]

GPT-NeoX-20B: An Open-Source Autoregressive Language Model [Preprint]

Field: Language Params: 20B Training Data: 525GiB Training petaFLOPs: 93B Architecture: De Training Framework: Megatron + DeepSpeed

-

InstructGPT [OpenAI] Mar. 2022 [close]

Training language models to follow instructions with human feedback [Preprint]

Field: Language Params: 175B

-

Chinchilla [DeepMind] Mar. 2022 [close]

Training Compute-Optimal Large Language Models [Preprint]

Field: Language Params: 70B Training Data: 5.2TB (500B tokens) Training petaFLOPs: 580M Architecture: De

-

EVA 2.0 [BAAI] Mar. 2022 [open]

EVA2.0: Investigating Open-Domain Chinese Dialogue Systems with Large-Scale Pre-Training [Preprint]

Field: Language (Dialogue) Params: 2.8B Training Data: 180G (1.4B samples, Chinese)

-

AlphaCode [DeepMind] Mar. 2022 [close]

Competition-Level Code Generation with AlphaCode [Preprint]

Field: Code Generation Params: 41B Training Data: (967B tokens) Architecture: De

-

ST-MoE [Google] Feb. 2022 [close]

ST-MoE: Designing Stable and Transferable Sparse Expert Models [Preprint]

Field: Language Params: 296B Architecture: En-De, MoE

-

LaMDA [Google] Jan. 2022 [close]

LaMDA: Language Models for Dialog Applications [Preprint]

Field: Language (Dialogue) Params: 137B Training Data: (1.56T words) Training petaFLOPs: 360M Architecture: De

-

ERNIE-ViLG [Baidu] Dec. 2021 [close]

ERNIE-ViLG: Unified Generative Pre-training for Bidirectional Vision-Language Generation [Preprint]

Field: Image Generation (text to image) Params: 10B Training Data: (145M text-image pairs) Architecture: Transformer, dVAE + De

-

GLaM [Google] Dec. 2021 [close]

GLaM: Efficient Scaling of Language Models with Mixture-of-Experts [Preprint]

Field: Language Params: 1.2T Architecture: De, MoE

-

Gopher [DeepMind] Dec. 2021 [close]

Scaling Language Models: Methods, Analysis & Insights from Training Gopher [Preprint]

Field: Language Params: 280B Training Data: 1.3TB (300B tokens) Training petaFLOPs: 630M Architecture: De

-

Yuan 1.0 [Inspur] Oct. 2021 [close]

Yuan 1.0: Large-Scale Pre-trained Language Model in Zero-Shot and Few-Shot Learning [Preprint]

Field: Language Params: 245B Training Data: 5TB (180B tokens, Chinese) Training petaFLOPs: 410M Architecture: De, MoE

-

MT-NLG [Microsoft, Nvidia] Oct. 2021 [close]

Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, A Large-Scale Generative Language Model [Preprint]

Field: Language Params: 530B Training Data: (339B tokens) Training petaFLOPs: 1.4B Architecture: De

-

Plato-XL [Baidu] Sept. 2021 [close]

PLATO-XL: Exploring the Large-scale Pre-training of Dialogue Generation [Preprint]

Field: Language (Dialogue) Params: 11B Training Data: (1.2B samples)

-

Jurassic-1 [AI21 Labs] Aug. 2021 [close]

Jurassic-1: Technical Details and Evaluation [Preprint]

Field: Language Params: 178B Training petaFLOPs: 370M Architecture: De

-

Codex [OpenAI] July 2021 [close]

Evaluating Large Language Models Trained on Code [Preprint]

Field: Code Generation Params: 12B Training Data: 159GB Architecture: De

-

ERNIE 3.0 [Baidu] July 2021 [close]

ERNIE 3.0: Large-scale Knowledge Enhanced Pre-training for Language Understanding and Generation [Preprint]

Field: Language Params: 10B Training Data: 4TB (375B tokens, with knowledge graph) Architecture: En Objective: MLM

-

CPM-2 [BAAI] June 2021 [open]

CPM-2: Large-scale Cost-effective Pre-trained Language Models [Preprint]

Field: Language Params: 198B Training Data: 2.6TB (Chinese 2.3TB, English 300GB) Architecture: En-De Objective: MLM

-

HyperCLOVA [Naver] May 2021 [close]

What Changes Can Large-scale Language Models Bring? Intensive Study on HyperCLOVA: Billions-scale Korean Generative Pretrained Transformers [Preprint]

Field: Language Params: 82B Training Data: (562B tokens, Korean) Training petaFLOPs: 63B Architecture: De

-

ByT5 [Google] May 2021 [open]

ByT5: Towards a token-free future with pre-trained byte-to-byte models [TACL'22]

Field: Language Params: 13B Training Data: (101 languages) Architecture: En-De

-

PanGu-α [Huawei] Apr. 2021 [close]

PanGu-α: Large-scale Autoregressive Pretrained Chinese Language Models with Auto-parallel Computation [Preprint]

Field: Language Params: 200B Training Data: 1.1TB (Chinese) Training petaFLOPs: 58M Architecture: De

-

mT5 [Google] Mar. 2021 [open]

mT5: A massively multilingual pre-trained text-to-text transformer [Preprint]

Field: Language Params: 13B Training Data: (101 languages) Architecture: En-De

-

WuDao-WenHui [BAAI] Mar. 2021 [open]

Field: Language Params: 2.9B Training Data: 303GB (Chinese)

-

GLM [BAAI] Mar. 2021 [open]

GLM: General Language Model Pretraining with Autoregressive Blank Infilling [Preprint]

Field: Language Params: 10B Architecture: De

-

Switch Transformer [Google] Jan. 2021 [open]

Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity [Preprint]

Field: Language Params: 1.6T Training Data: 750GB Training petaFLOPs: 82M Architecture: En-De, MoE Objective: MLM

-

CPM [BAAI] Dec. 2020 [open]

CPM: A Large-scale Generative Chinese Pre-trained Language Model [Preprint]

Field: Language Params: 2.6B Training Data: 100G (Chinese) Training petaFLOPs: 1.8M Architecture: De Objective: LTR

-

GPT-3 [OpenAI] May 2020 [close]

Language Models are Few-Shot Learners [NeurIPS'20]

Field: Language Params: 175B Training Data: 45TB (680B tokens) Training Time: 95 A100 GPU years (835,584 A100 GPU hours, 355 V100 GPU years) Training Cost: $4.6M Training petaFLOPs: 310M Architecture: De Objective: LTR

-

Blender [Meta] Apr. 2020 [close]

Recipes for building an open-domain chatbot [Preprint]

Field: Language (Dialogue) Params: 9.4B

-

T-NLG [Microsoft] Feb. 2020 [close]

Field: Language Params: 17B Training petaFLOPs: 16M Architecture: De Objective: LTR

-

Meena [Google] Jan. 2020 [close]

Towards a Human-like Open-Domain Chatbot [Preprint]

Field: Language (Dialogue) Params: 2.6B Training Data: 341GB (40B words) Training petaFLOPs: 110M

-

DialoGPT [Microsoft] Nov. 2019 [open]

DialoGPT: Large-Scale Generative Pre-training for Conversational Response Generation [ACL'20]

Field: Language (Dialogue) Params: 762M Training Data: (147M conversations) Architecture: De

-

T5 [Google] Oct. 2019 [open]

Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer [JMLR'20]

Field: Language Params: 11B Training Data: 800GB Training Cost: $1.5M Training petaFLOPs: 41M Architecture: En-De Objective: MLM

-

Megatron-LM [Nvidia] Sept. 2019 [open]

Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism [Preprint]

Field: Language Params: 8.3B Training Data: 174 GB Training petaFLOPs: 9.1M Architecture: De Objective: LTR Training Framework: Megatron

-

Megatron-BERT [Nvidia] Sept. 2019 [open]

Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism [Preprint]

Field: Language Params: 3.9B Training Data: 174 GB Training petaFLOPs: 57M Architecture: En Objective: MLM Training Framework: Megatron

-

RoBERTa [Meta] July 2019 [open]

RoBERTa: A Robustly Optimized BERT Pretraining Approach [Preprint]

Field: Language Params: 354M Training Data: 160GB Training Time: 1024 V100 GPU days Architecture: En Objective: MLM

-

XLNet [Google] June 2019 [open]

XLNet: Generalized Autoregressive Pretraining for Language Understanding [NeurIPS'19]

Field: Language Params: 340M Training Data: 113GB (33B words) Training Time: 1280 TPUv3 days Training Cost: $245k Architecture: En Objective: PLM

-

GPT-2 [OpenAI] Feb. 2019 [open]

Language Models are Unsupervised Multitask Learners [Preprint]

Field: Language Params: 1.5B Training Data: 40GB (8M web pages) Training Cost: $43k Training petaFLOPs: 1.5M Architecture: De Objective: LTR

-

BERT [Google] Oct. 2018 [open]

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding [NAACL'19]

Field: Language Params: 330M Training Data: 16GB (3.3B words) Training Time: 64 TPUv2 days (280 V100 GPU days) Training Cost: $7k Training petaFLOPs: 290k Architecture: En Objective: MLM, NSP

-

GPT [OpenAI] June 2018 [open]

Improving Language Understanding by Generative Pre-Training [Preprint]

Field: Language Params: 117M Training Data: 1GB (7k books) Training petaFLOPs: 18k Architecture: De Objective: LTR

-

MAE->WSP-2B [Meta] Mar. 2023 [close]

The effectiveness of MAE pre-pretraining for billion-scale pretraining

Field: Vision Params: 6.5B Training Data: (3B images) Architecture: Transformer Objective: MAE, Weakly-Supervised

-

OpenCLIP G/14 [LAION] Mar. 2023 [open]

Field: Vision-Language Params: 2.5B Training Data: (2B images)

-

ViT-22B [Google] Feb. 2023 [close]

Scaling Vision Transformers to 22 Billion Parameters

Field: Vision Params: 22B Training Data: (4B images) Architecture: Transformer Objective: Supervised

-

InternImage-G [Shanghai AI Lab] Nov. 2022 [open]

InternImage: Exploring Large-Scale Vision Foundation Models with Deformable Convolutions [CVPR'23 Highlight]

Field: Vision Params: 3B Architecture: CNN Core Operator: Deformable Convolution v3

-

Stable Diffusion [Stability AI] Aug. 2022 [open]

Field: Image Generation (text to image) Params: 890M Training Data: (5B images) Architecture: Transformer, Diffusion

-

Imagen [Google] May 2022

Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding [Preprint]

Field: Image Generation (text to image) Text Encoder: T5 Image Decoder: Diffusion, Upsampler

-

Flamingo [DeepMind] Apr. 2022 [close]

Flamingo: a Visual Language Model for Few-Shot Learning [Preprint]

Field: Vision-Language Params: 80B

-

DALL·E 2 [OpenAI] Apr. 2022

Hierarchical Text-Conditional Image Generation with CLIP Latents [Preprint]

Field: Image Generation (text to image) Text Encoder: GPT2 (CLIP) Image Encoder: ViT (CLIP) Image Decoder: Diffusion, Upsampler

-

BaGuaLu [BAAI, Alibaba] Apr. 2022

BaGuaLu: targeting brain scale pretrained models with over 37 million cores [PPoPP'22]

Field: Vision-Language Params: 174T Architecture: M6

-

SEER [Meta] Feb. 2022 [open]

Vision Models Are More Robust And Fair When Pretrained On Uncurated Images Without Supervision [Preprint]

Field: Vision Params: 10B Training Data: (1B images) Architecture: Convolution Objective: SwAV

-

ERNIE-ViLG [Baidu] Dec. 2021

ERNIE-ViLG: Unified Generative Pre-training for Bidirectional Vision-Language Generation [Preprint]

Field: Image Generation (text to image) Params: 10B Training Data: (145M text-image pairs) Architecture: Transformer, dVAE + De

-

NUWA [Microsoft] Nov. 2021 [open]

NÜWA: Visual Synthesis Pre-training for Neural visUal World creAtion [Preprint]

Field: Vision-Language Generation: Image, Video Params: 870M

-

SwinV2-G [Microsoft] Nov. 2021 [open]

Swin Transformer V2: Scaling Up Capacity and Resolution [CVPR'22]

Field: Vision Params: 3B Training Data: (70M images) Architecture: Transformer Objective: Supervised

-

Zidongtaichu [CASIA] Sept. 2021 [close]

Field: Image, Video, Language, Speech Params: 100B

-

ViT-G/14 [Google] June 2021

Scaling Vision Transformers [Preprint]

Field: Vision Params: 1.8B Training Data: (300M images) Training petaFLOPs: 3.4M Architecture: Transformer Objective: Supervised

-

CoAtNet [Google] June 2021 [open]

CoAtNet: Marrying Convolution and Attention for All Data Sizes [NeurIPS'21]

Field: Vision Params: 2.4B Training Data: (300M images) Architecture: Transformer, Convolution Objective: Supervised

-

V-MoE [Google] June 2021

Scaling Vision with Sparse Mixture of Experts [NeurIPS'21]

Field: Vision Params: 15B Training Data: (300M images) Training Time: 16.8k TPUv3 days Training petaFLOPs: 33.9M Architecture: Transformer, MoE Objective: Supervised

-

CogView [BAAI, Alibaba] May 2021

CogView: Mastering Text-to-Image Generation via Transformers [NeurIPS'21]

Field: Vision-Language Params: 4B Training Data: (30M text-image pairs) Training petaFLOPs: 27M Image Encoder: VAE Text Encoder & Image Decoder: GPT2

-

M6 [Alibaba] Mar. 2021

M6: A Chinese Multimodal Pretrainer [Preprint]

Field: Vision-Language Params: 10T Training Data: 300G Texts + 2TB Images Training petaFLOPs: 5.5M Fusion: Single-stream Objective: MLM, IC

-

DALL·E [OpenAI] Feb. 2021

Zero-Shot Text-to-Image Generation [ICML'21]

Field: Image Generation (text to image) Params: 12B Training Data: (250M text-image pairs) Training petaFLOPs: 47M Image Encoder: dVAE Text Encoder & Image Decoder: GPT2

-

CLIP [OpenAI] Jan. 2021

Learning Transferable Visual Models From Natural Language Supervision [ICML'21]

Field: Vision-Language Training Data: 400M text-image pairs Training petaFLOPs: 11M Image Encoder: ViT Text Encoder: GPT-2 Fusion: Dual Encoder Objective: CMCL

-

ViT-H/14 [Google] Oct. 2020 [open]

An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale [ICLR'21]

Field: Vision Params: 632M Training Data: (300M images) Training petaFLOPs: 13M Architecture: Transformer Objective: Supervised

-

iGPT-XL [OpenAI] June 2020 [open]

Generative Pretraining From Pixels [ICML'20]

Field: Image Generation Params: 6.8B Training Data: (1M images) Training petaFLOPs: 33M Architecture: Transformer, De

-

BigGAN-deep [DeepMind] Sept. 2018 [open]

Large Scale GAN Training for High Fidelity Natural Image Synthesis [ICLR'19]

Field: Image Generation Params: 158M Training Data: (300M images) Training petaFLOPs: 3M Architecture: Convolution, GAN Resolution: 512x512

-

PaLM-E [Google] Mar. 2023 [close]

PaLM-E: An Embodied Multimodal Language Model [Preprint]

Field: Reinforcement Learning Params: 562B (540B LLM + 22B Vi)

-

Gato [DeepMind] May 2022 [close]

A Generalist Agent [Preprint]

Field: Reinforcement Learning Params: 1.2B Training Data: (604 Tasks) Objective: Supervised

-

USM [Google] Mar. 2023 [close]

Google USM: Scaling Automatic Speech Recognition Beyond 100 Languages [Preprint]

Field: Speech Params: 2B Training Data: 12,000,000 hours

-

Whisper [OpenAI] Sept. 2022 [close]

Robust Speech Recognition via Large-Scale Weak Supervision [Preprint]

Field: Speech Params: 1.55B Training Data: 680,000 hours Objective: Weakly Supervised

-

HuBERT [Meta] June 2021 [open]

HuBERT: Self-Supervised Speech Representation Learning by Masked Prediction of Hidden Units [Preprint]

Field: Speech Params: 1B Training Data: 60,000 hours Objective: MLM

-

wav2vec 2.0 [Meta] Oct. 2020 [open]

wav2vec 2.0: A Framework for Self-Supervised Learning of Speech Representations [NeurIPS'20]

Field: Speech Params: 317M Training Data: 50,000 hours Training petaFLOPs: 430M Objective: MLM

-

DeepSpeech 2 [Baidu] Dec. 2015 [open]

Deep Speech 2: End-to-End Speech Recognition in English and Mandarin [ICML'16]

```yaml
Field: Speech
Params: 300M
Training Data: 21,340 hours
```

-

AlphaFold 2 [DeepMind] July 2021 [open]

Highly accurate protein structure prediction with AlphaFold [Nature]

Field: Biology Params: 21B Training petaFLOPs: 100k

Deep Learning frameworks supporting distributed training are marked with *.

- Accelerate [Huggingface] Oct. 2020 [open]

- Hivemind Aug. 2020 [open]

Towards Crowdsourced Training of Large Neural Networks using Decentralized Mixture-of-Experts [Preprint]

- FairScale [Meta] July 2020 [open]

- DeepSpeed [Microsoft] Oct. 2019 [open]

ZeRO: Memory Optimizations Toward Training Trillion Parameter Models [SC'20]

- Megatron [Nvidia] Sept. 2019 [open]

Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism [Preprint]

- PyTorch* [Meta] Sept. 2016 [open]

PyTorch: An Imperative Style, High-Performance Deep Learning Library [NeurIPS'19]

- T5x [Google] Mar. 2022 [open]

Scaling Up Models and Data with t5x and seqio [Preprint]

- Alpa [Google] Jan. 2022 [open]

Alpa: Automating Inter- and Intra-Operator Parallelism for Distributed Deep Learning [OSDI'22]

- Pathways [Google] Mar. 2021 [close]

Pathways: Asynchronous Distributed Dataflow for ML [Preprint]

- Colossal-AI [HPC-AI TECH] Nov. 2021 [open]

Colossal-AI: A Unified Deep Learning System For Large-Scale Parallel Training [Preprint]

- GShard [Google] June 2020

GShard: Scaling Giant Models with Conditional Computation and Automatic Sharding [Preprint]

- Jax* [Google] Oct. 2019 [open]

- Mesh Tensorflow [Google] Nov. 2018 [open]

- Horovod [Uber] Feb. 2018 [open]

Horovod: fast and easy distributed deep learning in TensorFlow [Preprint]

- Tensorflow* [Google] Nov. 2015 [open]

TensorFlow: A system for large-scale machine learning [OSDI'16]

- OneFlow* [OneFlow] July 2020 [open]

OneFlow: Redesign the Distributed Deep Learning Framework from Scratch [Preprint]

- MindSpore* [Huawei] Mar. 2020 [open]

- PaddlePaddle* [Baidu] Nov. 2018 [open]

End-to-end Adaptive Distributed Training on PaddlePaddle [Preprint]

- Ray [Berkeley] Dec. 2017 [open]

Ray: A Distributed Framework for Emerging AI Applications [OSDI'18]

- Petals [BigScience] Dec. 2022 [open]

- FlexGen [Stanford, Berkeley, CMU, etc.] May 2022 [open]

- FasterTransformer [NVIDIA] Apr. 2021 [open]

- MegEngine [MegEngine] Mar. 2020

- DeepSpeed-Inference [Microsoft] Oct. 2019 [open]

- MediaPipe [Google] July 2019 [open]

- TensorRT [Nvidia] June 2019 [open]

- MNN [Alibaba] May 2019 [open]

- OpenVINO [Intel] Oct. 2019 [open]

- ONNX [Linux Foundation] Sept. 2017 [open]

- ncnn [Tencent] July 2017 [open]

-

HET [Tencent] Dec. 2021

HET: Scaling out Huge Embedding Model Training via Cache-enabled Distributed Framework [VLDB'22]

-

Persia [Kuaishou] Nov. 2021

Persia: An Open, Hybrid System Scaling Deep Learning-based Recommenders up to 100 Trillion Parameters [Preprint]

Embeddings Params: 100T

-

ZionEX [Meta] Apr. 2021

Software-Hardware Co-design for Fast and Scalable Training of Deep Learning Recommendation Models [ISCA'21]

Embeddings Params: 10T

-

ScaleFreeCTR [Huawei] Apr. 2021

ScaleFreeCTR: MixCache-based Distributed Training System for CTR Models with Huge Embedding Table [SIGIR'21]

-

Kraken [Kuaishou] Nov. 2020

Kraken: Memory-Efficient Continual Learning for Large-Scale Real-Time Recommendations [SC'20]

-

TensorNet [Qihoo360] Sept. 2020 [open]

-

HierPS [Baidu] Mar. 2020

Distributed Hierarchical GPU Parameter Server for Massive Scale Deep Learning Ads Systems [MLSys'20]

-

AIBox [Baidu] Oct. 2019

AIBox: CTR Prediction Model Training on a Single Node [CIKM'20]

Embeddings Params: 0.1T

-

XDL [Alibaba] Aug. 2019

XDL: an industrial deep learning framework for high-dimensional sparse data [DLP-KDD'21]

Embeddings Params: 0.01T

- Company tags: the name of the related company. Other institutes may also have been involved in the work.

- Params: number of parameters of the largest model

- Training data size, training cost, and training petaFLOPs are estimates and carry some uncertainty.

- Training cost

- TPUv2 hour: $4.5

- TPUv3 hour: $8

- V100 GPU hour: $0.55 (2022)

- A100 GPU hour: $1.10 (2022)

- Architecture

- En: Encoder-based Language Model

- De: Decoder-based Language Model

- En-De: Encoder-Decoder-based Language Model

- The above three architectures are built on Transformers.

- MoE: Mixture of Experts

- Objective (See explanation in section 6–8 of this paper)

- MLM: Masked Language Modeling

- LTR: Left-To-Right Language Modeling

- NSP: Next Sentence Prediction

- PLM: Permuted Language Modeling

- IC: Image Captioning

- VLM: Vision Language Matching

- CMCL: Cross-Modal Contrastive Learning

- FLOPs: number of FLOating-Point operations [explanation]

- 1 petaFLOPs = 1e15 FLOPs
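The cost and compute conventions in the notes above can be checked with a little arithmetic. The following is a minimal Python sketch, not part of the original list: the function names (`device_hours`, `training_cost_usd`, `petaflops_to_flops`) are hypothetical helpers, the hourly prices are copied from the "Training cost" note, and the assumption that devices run 24 hours a day for the whole period is ours.

```python
# Hourly prices copied from the "Training cost" note (USD per device-hour).
HOURLY_PRICE_USD = {
    "TPUv2": 4.5,
    "TPUv3": 8.0,
    "V100": 0.55,  # 2022 price
    "A100": 1.10,  # 2022 price
}

FLOPS_PER_PETAFLOP = 1e15  # 1 petaFLOPs = 1e15 FLOPs


def device_hours(num_devices: int, days: float) -> float:
    """Device-hours, assuming every device runs 24 h/day for the whole period."""
    return num_devices * days * 24


def training_cost_usd(hours: float, device: str) -> float:
    """Rough cost estimate: device-hours times the listed hourly price."""
    return hours * HOURLY_PRICE_USD[device]


def petaflops_to_flops(petaflops: float) -> float:
    """Convert a 'Training petaFLOPs' entry into raw FLOPs."""
    return petaflops * FLOPS_PER_PETAFLOP


if __name__ == "__main__":
    # GLM-130B entry: 768 A100s for 28 days.
    print("GLM-130B A100 hours:", device_hours(768, 28))
    # OPT entry: 992 A100s for 34 days, priced at the listed A100 rate.
    opt_hours = device_hours(992, 34)
    print("OPT A100 hours:", opt_hours)
    print("OPT estimated cost (USD):", round(training_cost_usd(opt_hours, "A100"), 2))
    # GPT-3 entry: 310M petaFLOPs.
    print(f"GPT-3 training FLOPs: {petaflops_to_flops(310e6):.2e}")
```

The first two calls reproduce the GPU-hour figures quoted in the GLM-130B and OPT entries (516,096 and 809,472 A100 hours). The dollar figure is only as reliable as the listed hourly prices, which vary by provider and year.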