EPro-PnP: Generalized End-to-End Probabilistic Perspective-n-Points for Monocular Object Pose Estimation

In CVPR 2022 (Oral, Best Student Paper). [paper][video]

Hansheng Chen*1,2, Pichao Wang†2, Fan Wang2, Wei Tian†1, Lu Xiong1, Hao Li2

1Tongji University, 2Alibaba Group

*Part of work done during an internship at Alibaba Group.

†Corresponding Authors: Pichao Wang, Wei Tian.

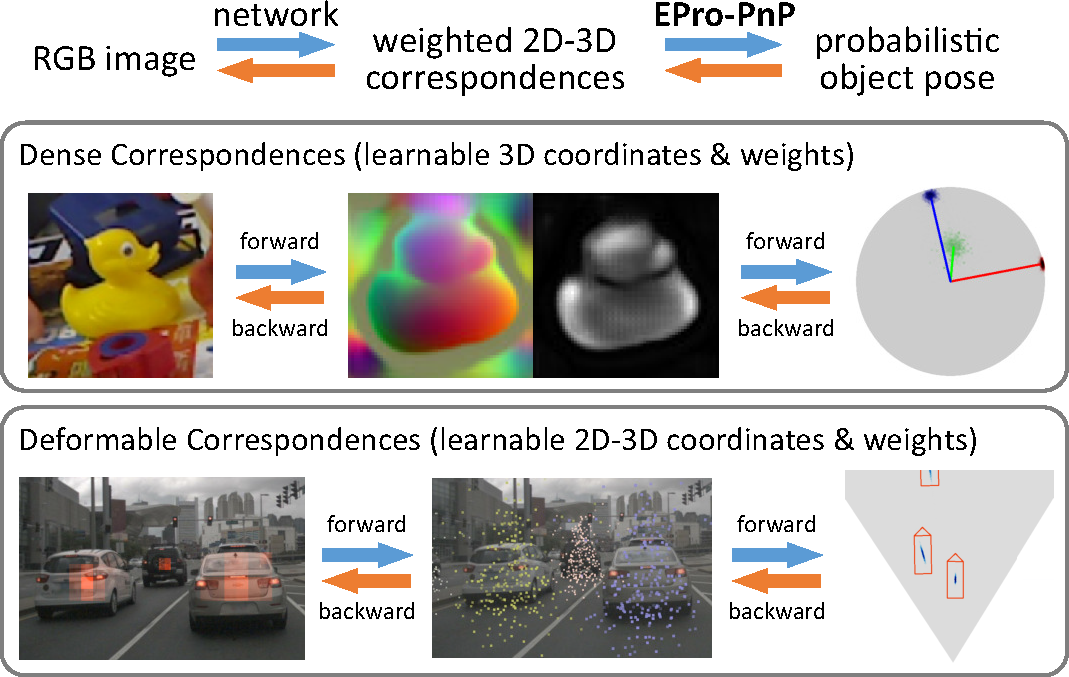

EPro-PnP is a probabilistic Perspective-n-Points (PnP) layer for end-to-end 6DoF pose estimation networks. Broadly speaking, it is a continuous counterpart of the widely used categorical Softmax layer, and it is theoretically generalizable to other learning models with nested optimization.

Given the layer input: an $N$-point correspondence set $X = \left\{x^\text{3D}_i, x^\text{2D}_i, w^\text{2D}_i \,\middle|\, i = 1 \cdots N\right\}$ consisting of 3D object coordinates $x^\text{3D}_i \in \mathbb{R}^3$, 2D image coordinates $x^\text{2D}_i \in \mathbb{R}^2$, and 2D weights $w^\text{2D}_i \in \mathbb{R}^2_+$, a conventional PnP solver searches for an optimal pose $y^\ast$ (rigid transformation in SE(3)) that minimizes the weighted reprojection error. Previous work tries to backpropagate through the PnP operation, yet $y^\ast$ is inherently non-differentiable due to the inner $\mathrm{arg\,min}$ operation. This leads to convergence issues if all the components in $X$ must be learned by the network.
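To make the objective of a conventional PnP solver concrete, the following is a minimal NumPy sketch of the weighted reprojection error for a single candidate pose. It assumes a pinhole camera; the function name `reprojection_cost`, the intrinsic matrix `K`, and the pose parametrization as a rotation matrix `R` plus a translation `t` are illustrative choices, not the repository's API.

```python
import numpy as np

def reprojection_cost(R, t, K, x3d, x2d, w2d):
    """Half the sum of squared, weighted reprojection residuals (hypothetical helper)."""
    cam = x3d @ R.T + t                  # (N, 3) object points in the camera frame
    proj = cam @ K.T                     # apply the (assumed) pinhole intrinsics
    uv = proj[:, :2] / proj[:, 2:3]      # perspective division -> (N, 2) pixel coordinates
    residual = w2d * (uv - x2d)          # element-wise weighting of the 2D error
    return 0.5 * np.sum(residual ** 2)

# Toy data: 8 random object points observed without noise under a known pose.
rng = np.random.default_rng(0)
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
R_true = np.eye(3)
t_true = np.array([0.1, -0.2, 5.0])
x3d = rng.uniform(-1.0, 1.0, size=(8, 3))
cam_true = (x3d @ R_true.T + t_true) @ K.T
x2d = cam_true[:, :2] / cam_true[:, 2:3]
w2d = np.ones((8, 2))

cost_at_truth = reprojection_cost(R_true, t_true, K, x3d, x2d, w2d)
cost_shifted = reprojection_cost(R_true, t_true + np.array([0.05, 0.0, 0.0]),
                                 K, x3d, x2d, w2d)
```

A PnP solver would search over poses for the minimizer of this cost. Here the cost vanishes at the true pose and grows once the translation is perturbed.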

In contrast, our probabilistic PnP layer outputs a posterior distribution of pose, whose probability density can be derived for proper backpropagation. The distribution is approximated via Monte Carlo sampling. With EPro-PnP, the correspondence set $X$ can be learned from scratch altogether by minimizing the KL divergence between the predicted and target pose distributions.
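The idea of a Monte Carlo KL loss can be illustrated on a one-dimensional toy problem. The sketch below assumes that the pose density is proportional to `exp(-cost(y))` and that the target distribution is concentrated on a single ground-truth value, so the loss reduces to the cost at the ground truth plus the log of the normalization integral. It uses plain importance sampling with a fixed Gaussian proposal, which is a simplification and not necessarily the sampling scheme used in the actual layer; all names (`mc_kl_loss`, `cost_fn`, the proposal parameters) are hypothetical.

```python
import numpy as np

def log_mean_exp(a):
    """Numerically stable log(mean(exp(a)))."""
    m = np.max(a)
    return m + np.log(np.mean(np.exp(a - m)))

def mc_kl_loss(cost_fn, y_gt, proposal_mean, proposal_std,
               num_samples=20000, seed=0):
    """Toy loss: cost(y_gt) + log of a Monte Carlo estimate of the integral of exp(-cost)."""
    rng = np.random.default_rng(seed)
    y = rng.normal(proposal_mean, proposal_std, size=num_samples)
    # Log-density of the Gaussian proposal at each sample.
    log_q = (-0.5 * ((y - proposal_mean) / proposal_std) ** 2
             - np.log(proposal_std * np.sqrt(2.0 * np.pi)))
    # Importance-sampling estimate of log of the normalization integral.
    log_norm = log_mean_exp(-cost_fn(y) - log_q)
    return cost_fn(y_gt) + log_norm

# Quadratic toy cost, so the normalization integral is known in closed form.
mu, sigma = 1.0, 0.5
cost_fn = lambda y: 0.5 * ((y - mu) / sigma) ** 2

loss_near = mc_kl_loss(cost_fn, y_gt=1.2, proposal_mean=1.0, proposal_std=1.0)
loss_far = mc_kl_loss(cost_fn, y_gt=3.0, proposal_mean=1.0, proposal_std=1.0)
exact_near = cost_fn(1.2) + np.log(sigma * np.sqrt(2.0 * np.pi))
```

The structure mirrors the cross-entropy loss of a Softmax layer, where the negative logit of the true class is added to the log-sum-exp over all classes. Here the sum over classes is replaced by an integral over a continuous variable, estimated from samples.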

Models used in the original paper:

- EPro-PnP-6DoF for 6DoF pose estimation
- EPro-PnP-Det for 3D object detection

New models:

- EPro-PnP-Det v2 for 3D object detection

At the time of submission (Aug 30, 2022), EPro-PnP-Det v2 ranks 1st among all camera-based single-frame object detection models on the official nuScenes benchmark (test split, without extra data). Code (and maybe a technical report or paper) will be released.

| Method | Backbone | NDS | mAP | mATE | mASE | mAOE | mAVE | mAAE | Schedule |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| EPro-PnP-Det v2 | R101 | 0.490 | 0.423 | 0.547 | 0.236 | 0.302 | 1.071 | 0.123 | 12 ep |
| PETR | Swin-B | 0.483 | 0.445 | 0.627 | 0.249 | 0.449 | 0.927 | 0.141 | 24 ep |
| BEVDet-Base | Swin-B | 0.482 | 0.422 | 0.529 | 0.236 | 0.395 | 0.979 | 0.152 | 20 ep |
| PolarFormer | R101 | 0.470 | 0.415 | 0.657 | 0.263 | 0.405 | 0.911 | 0.139 | 24 ep |
| BEVFormer-S | R101 | 0.462 | 0.409 | 0.650 | 0.261 | 0.439 | 0.925 | 0.147 | 24 ep |
| PETR | R101 | 0.455 | 0.391 | 0.647 | 0.251 | 0.433 | 0.933 | 0.143 | 24 ep |
| EPro-PnP-Det v1 | R101 | 0.453 | 0.373 | 0.605 | 0.243 | 0.359 | 1.067 | 0.124 | 12 ep |
| PGD | R101 | 0.448 | 0.386 | 0.626 | 0.245 | 0.451 | 1.509 | 0.127 | 24+24 ep |
| FCOS3D | R101 | 0.428 | 0.358 | 0.690 | 0.249 | 0.452 | 1.434 | 0.124 | - |
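For readers unfamiliar with the NDS column: the nuScenes detection score combines mAP with the five true-positive error metrics, each clipped to at most 1. The short sketch below recomputes it from the reported numbers; the function name `nds` is illustrative, and small deviations from the table are expected because the inputs are already rounded to three decimals.

```python
def nds(m_ap, tp_errors):
    """nuScenes detection score from mAP and the five TP errors (mATE, mASE, mAOE, mAVE, mAAE)."""
    # Each error is converted to a score in [0, 1]; errors above 1 contribute 0.
    tp_scores = [1.0 - min(1.0, err) for err in tp_errors]
    return (5.0 * m_ap + sum(tp_scores)) / 10.0

# Values copied from the EPro-PnP-Det v2 and PETR (Swin-B) rows.
score_v2 = nds(0.423, [0.547, 0.236, 0.302, 1.071, 0.123])
score_petr = nds(0.445, [0.627, 0.249, 0.449, 0.927, 0.141])
```

Because mAVE is above 1 for EPro-PnP-Det v2, its velocity term contributes nothing to the score, while mAP carries half of the total weight.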

We provide a demo on the usage of the EPro-PnP layer.

If you find this project useful in your research, please consider citing:

    @inproceedings{epropnp,
        author = {Hansheng Chen and Pichao Wang and Fan Wang and Wei Tian and Lu Xiong and Hao Li},
        title = {EPro-PnP: Generalized End-to-End Probabilistic Perspective-n-Points for Monocular Object Pose Estimation},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = {2022}
    }