In this project, you will be provided with a real-world dataset, extracted from Kaggle, on San Francisco crime incidents, and you will provide statistical analyses of the data using Apache Spark Structured Streaming. You will draw on the skills and knowledge you've learned in this course to create a Kafka server to produce data, and ingest data through Spark Structured Streaming.

./start.sh

/usr/bin/zookeeper-server-start ./config/zookeeper.properties

/usr/bin/kafka-server-start ./config/server.properties

python kafka_server.py

kafka-topics --list --zookeeper localhost:2181

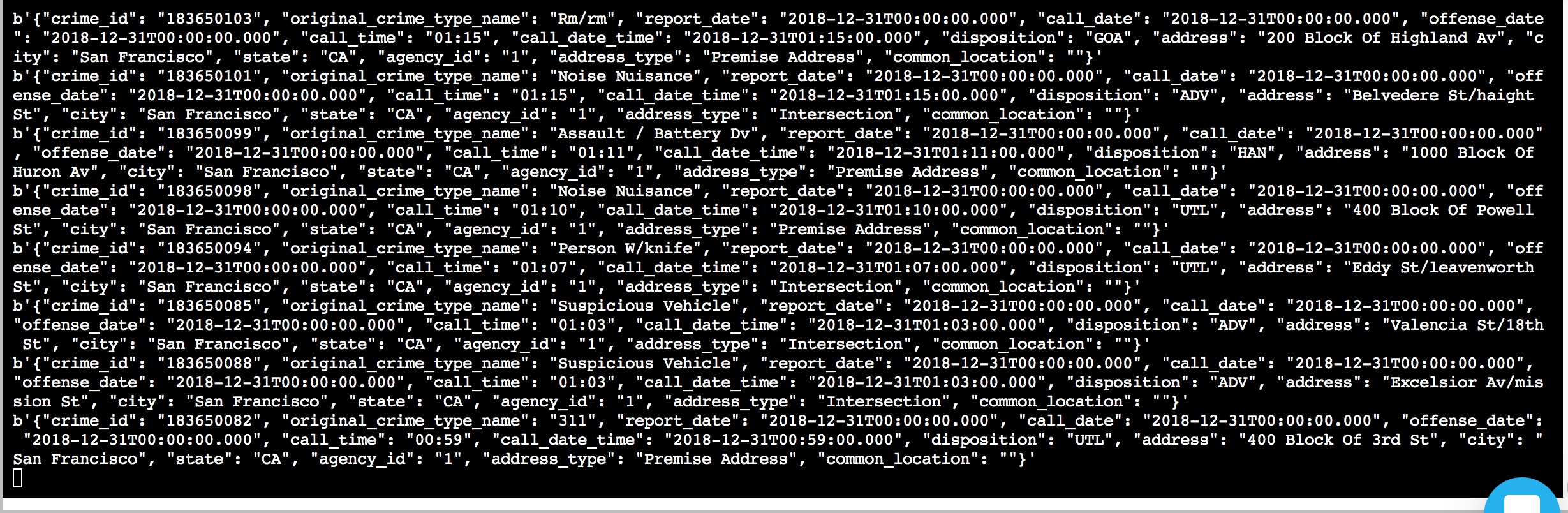

5. Run consumer_server.py to consume the messages and check that they are correct, or run the Kafka console consumer in a terminal with the following command:

kafka-console-consumer --bootstrap-server localhost:9092 --topic com.udacity.sfcrime.ly --from-beginning

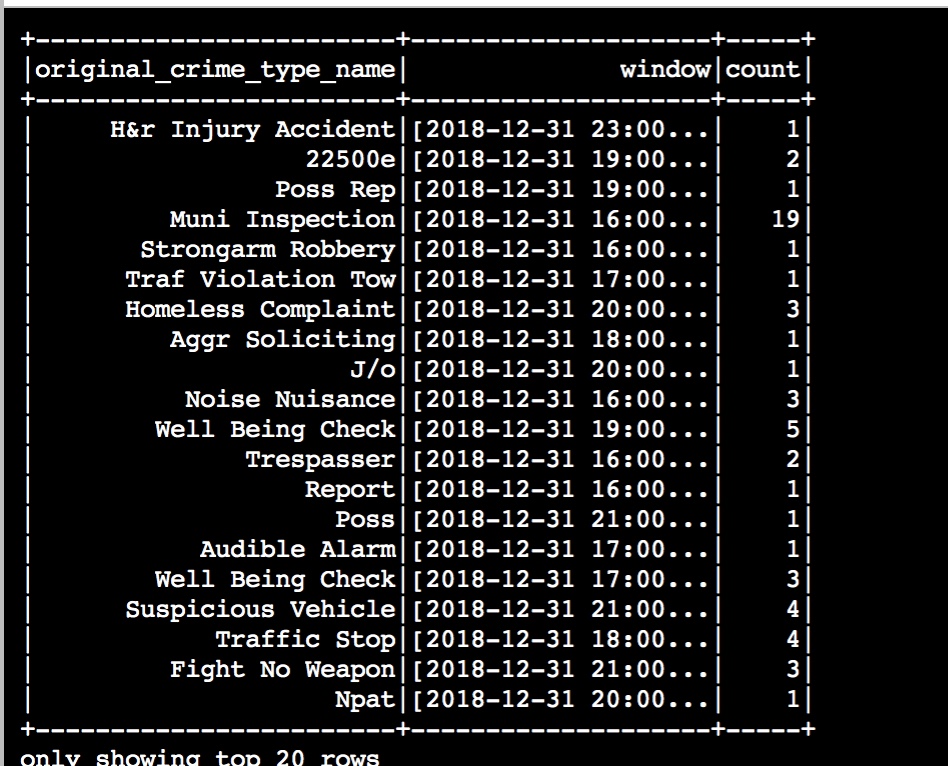

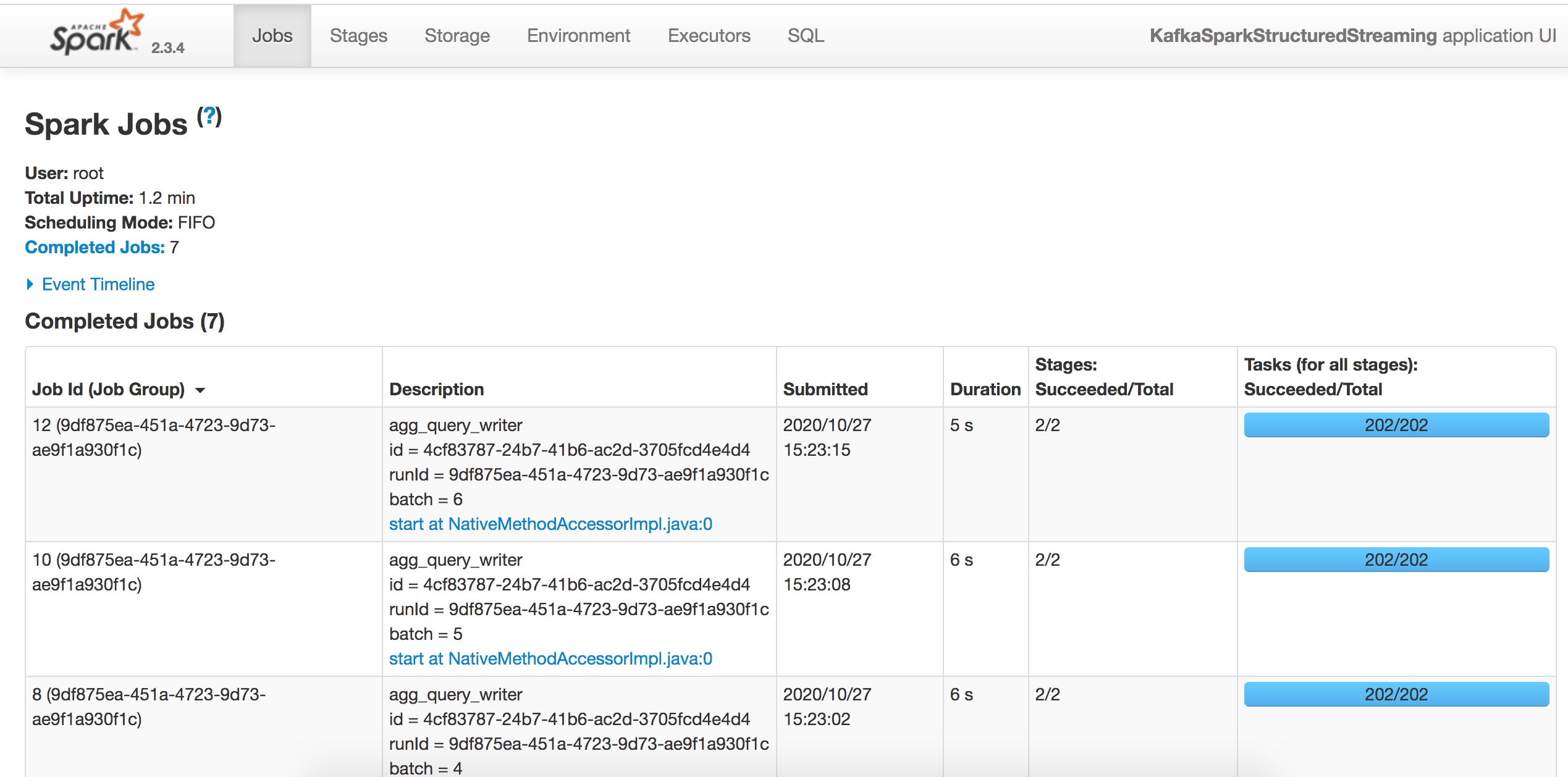

spark-submit --packages org.apache.spark:spark-sql-kafka-0-10_2.11:2.3.4 --master local[*] data_stream.py

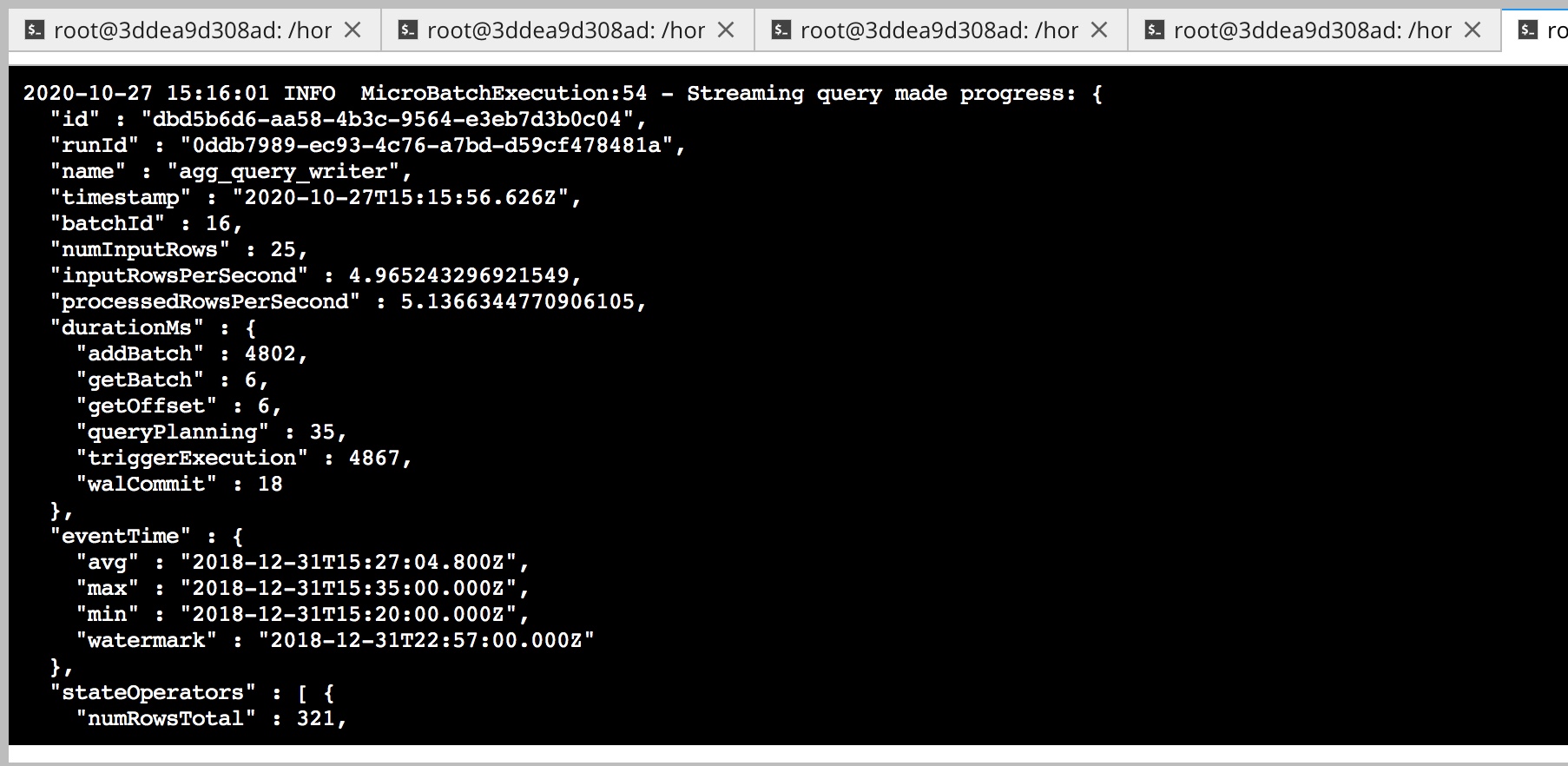

How did changing values on the SparkSession property parameters affect the throughput and latency of the data?

I changed the two parameters below. Increasing either or both of them increases the throughput as well as the latency of the data.

maxRatePerPartition (spark.streaming.kafka.maxRatePerPartition) is used to set the maximum rate, in messages per second, at which data is read from each Kafka partition.

maxOffsetsPerTrigger is used to limit the number of records to fetch per trigger.
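
As a rough sketch of where these two settings live in PySpark: maxOffsetsPerTrigger is an option on the Kafka source, while spark.streaming.kafka.maxRatePerPartition is a session-level configuration key. The helper names, the app name and the value 200 are illustrative assumptions, not taken from data_stream.py; the broker address and topic are the ones used in the commands above.

```python
def kafka_source_options(bootstrap_servers, topic, max_offsets_per_trigger):
    """Build the option map for spark.readStream.format("kafka")."""
    return {
        "kafka.bootstrap.servers": bootstrap_servers,
        "subscribe": topic,
        "startingOffsets": "earliest",
        # Caps the total number of offsets read per trigger (micro-batch).
        "maxOffsetsPerTrigger": str(max_offsets_per_trigger),
    }


def build_stream(spark, options):
    """Apply the options to a streaming reader (needs pyspark and a running broker)."""
    return spark.readStream.format("kafka").options(**options).load()


# 200 is an arbitrary example value, not a recommendation.
options = kafka_source_options("localhost:9092", "com.udacity.sfcrime.ly", 200)
print(options)

# With pyspark installed, the session-level key could be set like this:
# from pyspark.sql import SparkSession
# spark = (SparkSession.builder
#          .master("local[*]")
#          .appName("sf-crime-sketch")  # hypothetical app name
#          .config("spark.streaming.kafka.maxRatePerPartition", 200)
#          .getOrCreate())
# df = build_stream(spark, options)
```

Keeping the options in a plain dictionary makes it easy to rerun the job with different values and compare the results.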

What were the 2-3 most efficient SparkSession property key/value pairs? Through testing multiple variations on values, how can you tell these were the most optimal?

The most effective properties were maxOffsetsPerTrigger and maxRatePerPartition.

In my tests, maxRatePerPartition should not exceed maxOffsetsPerTrigger. The best rate was achieved with a fairly large maxOffsetsPerTrigger, around 500, and a maxRatePerPartition of 500.
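
One way to compare settings is to read the progress reports that Structured Streaming emits for each micro-batch (available in PySpark as query.recentProgress, a list of dictionaries) and average their processedRowsPerSecond field. The sketch below works on plain dictionaries so it runs without Spark; the setting labels and all numbers are invented purely for illustration and are not measurements from this project.

```python
def mean_processed_rate(progress_reports):
    """Average processedRowsPerSecond over batches that actually read rows."""
    rates = [
        p["processedRowsPerSecond"]
        for p in progress_reports
        if p.get("numInputRows", 0) > 0 and "processedRowsPerSecond" in p
    ]
    return sum(rates) / len(rates) if rates else 0.0


# Hypothetical progress reports for two runs with different settings.
runs = {
    "setting_a": [
        {"numInputRows": 100, "processedRowsPerSecond": 50.0},
        {"numInputRows": 100, "processedRowsPerSecond": 70.0},
    ],
    "setting_b": [
        {"numInputRows": 200, "processedRowsPerSecond": 120.0},
        {"numInputRows": 200, "processedRowsPerSecond": 80.0},
        {"numInputRows": 0},  # empty batch, ignored
    ],
}

averages = {name: mean_processed_rate(reports) for name, reports in runs.items()}
best = max(averages, key=averages.get)
print(averages, best)
```

In a real run, the list for each setting would come from query.recentProgress after the stream has processed several batches.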