This is the official repository for our paper: Is Bigger and Deeper Always Better? Probing LLaMA Across Scales and Layers

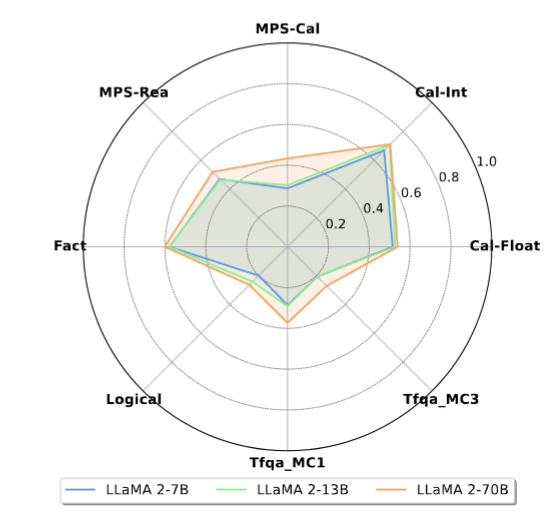

This project presents an in-depth analysis of Large Language Models (LLMs), focusing on LLaMA, a prominent open-source foundational model in natural language processing. Instead of assessing LLaMA through its generative output, we design multiple-choice tasks to probe its intrinsic understanding in high-order tasks such as reasoning and computation. We examine the model horizontally, comparing different sizes, and vertically, assessing different layers.
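To make the multiple-choice setup concrete, here is a minimal sketch of how one answer could be selected from per-option token log-probabilities. It is an illustration only: the function names `pick_option` and `accuracy`, the length normalization, and the toy numbers are assumptions, not the scoring used by the scripts in this repository.

```python
from typing import List, Sequence


def pick_option(option_logprobs: Sequence[Sequence[float]]) -> int:
    """Return the index of the option with the highest mean token log-probability.

    Averaging over tokens is an assumed normalization so that longer
    options are not penalized just for having more tokens.
    """
    scores = [sum(lp) / len(lp) for lp in option_logprobs]
    return max(range(len(scores)), key=scores.__getitem__)


def accuracy(all_option_logprobs: Sequence[Sequence[Sequence[float]]],
             gold: List[int]) -> float:
    """Fraction of questions where the picked option matches the gold index."""
    correct = sum(pick_option(opts) == g
                  for opts, g in zip(all_option_logprobs, gold))
    return correct / len(gold)


# Toy data (hypothetical): two questions with three options each.
example = [
    [[-0.2, -0.4], [-1.5, -2.0], [-3.0]],  # option 0 has the best mean
    [[-2.0], [-0.5, -0.7, -0.6], [-1.0]],  # option 1 has the best mean
]
print(accuracy(example, gold=[0, 2]))
```

In a real run the log-probabilities would come from the model being probed; here they are hard-coded so the selection logic can be read in isolation.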

We probe the LLaMA models in six high-order tasks:

- Calculation

- Math problem solving (MPS)

- Logical reasoning

- Truthfulness

- Factual knowledge detection

- Cross-lingual reasoning

We unveil several key and uncommon findings based on the designed probing tasks:

-

Horizontally, enlarging model sizes almost could not automatically impart additional knowledge or computational prowess. Instead, it can enhance reasoning abilities, especially in math problem solving, and helps reduce hallucinations, but only beyond certain size thresholds;

-

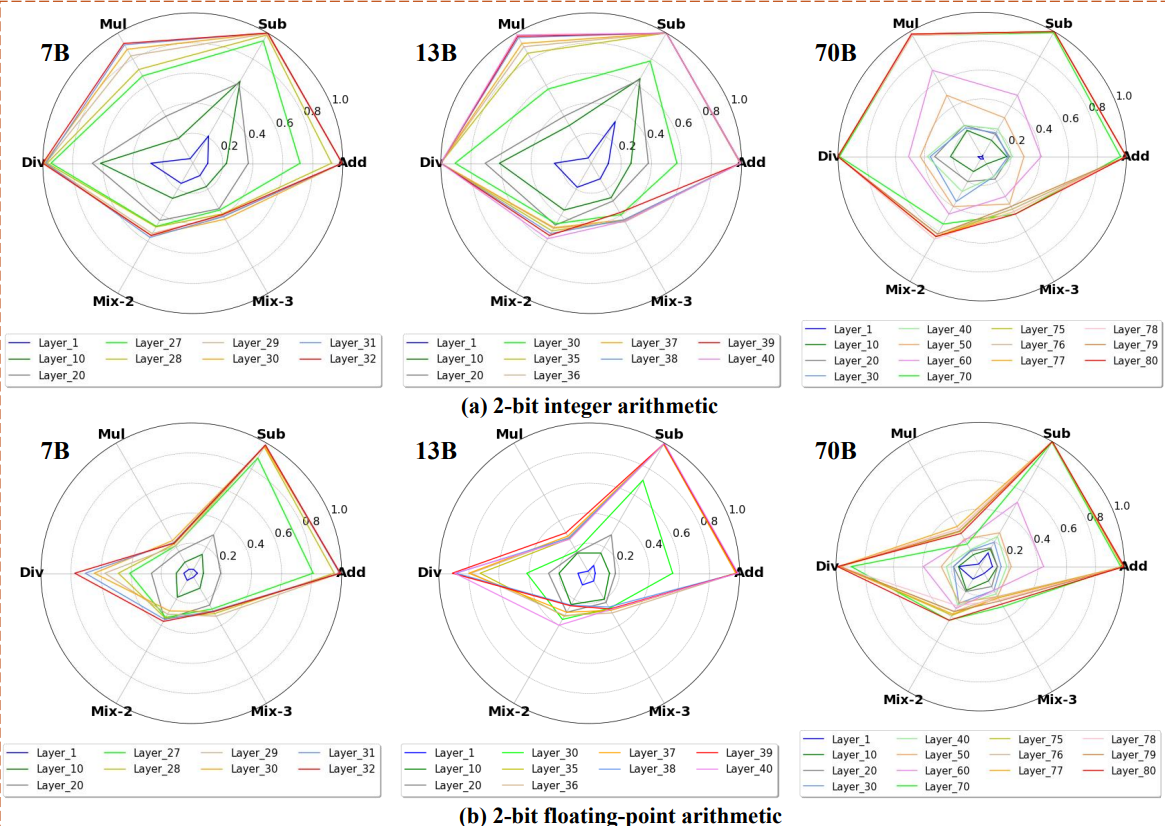

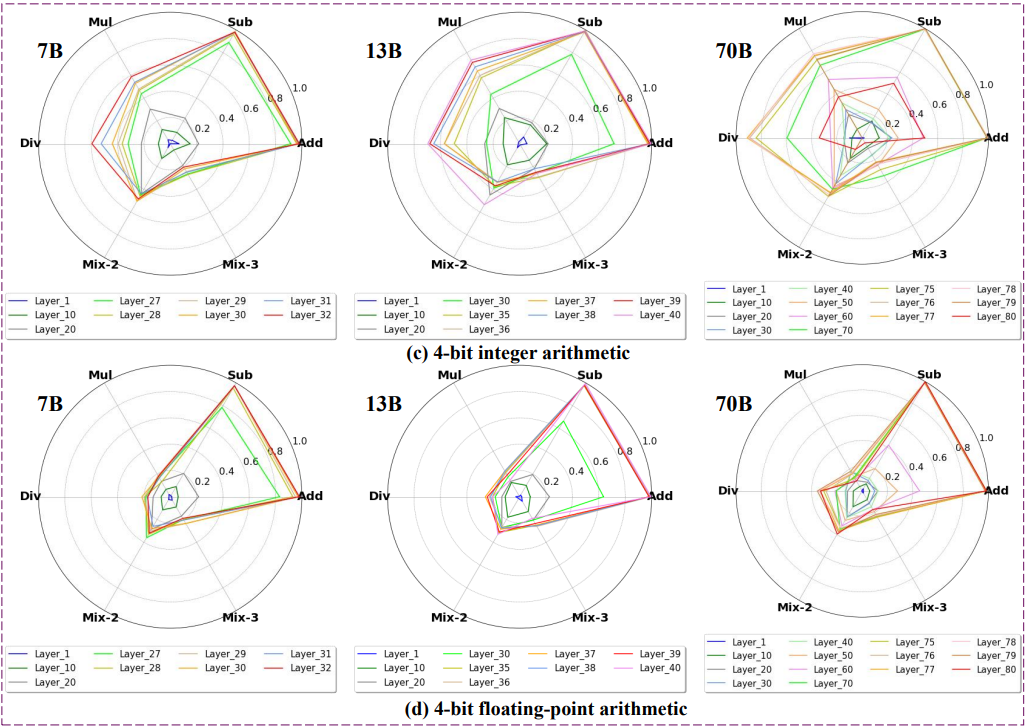

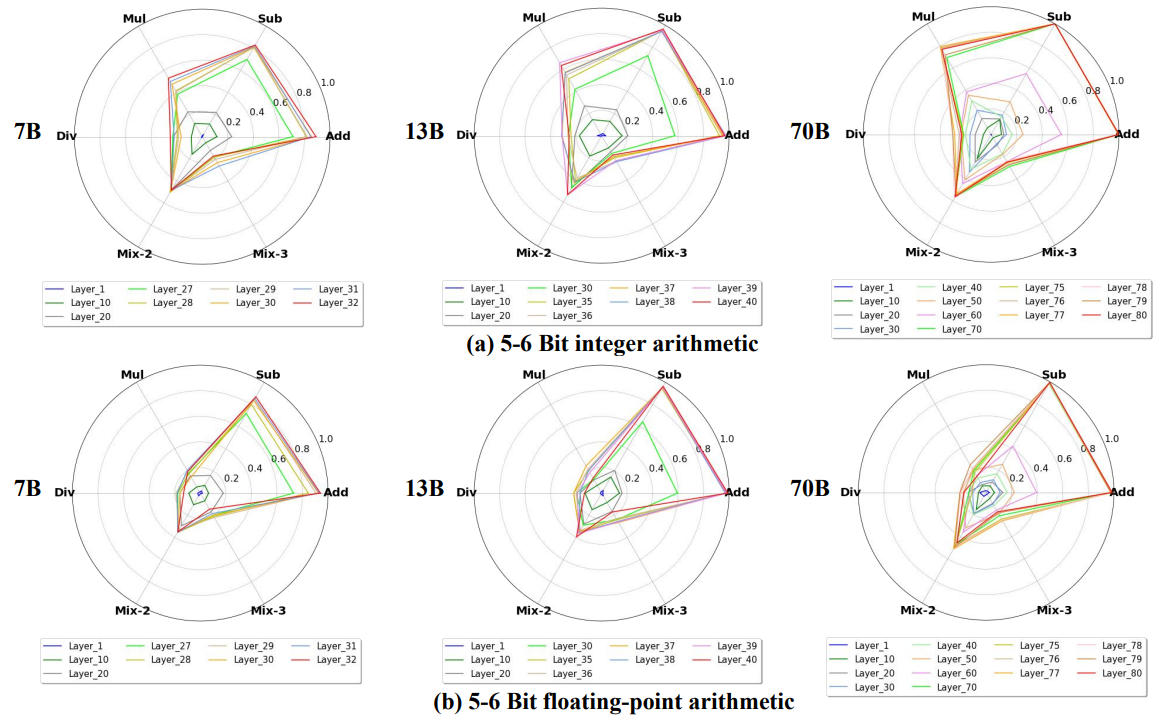

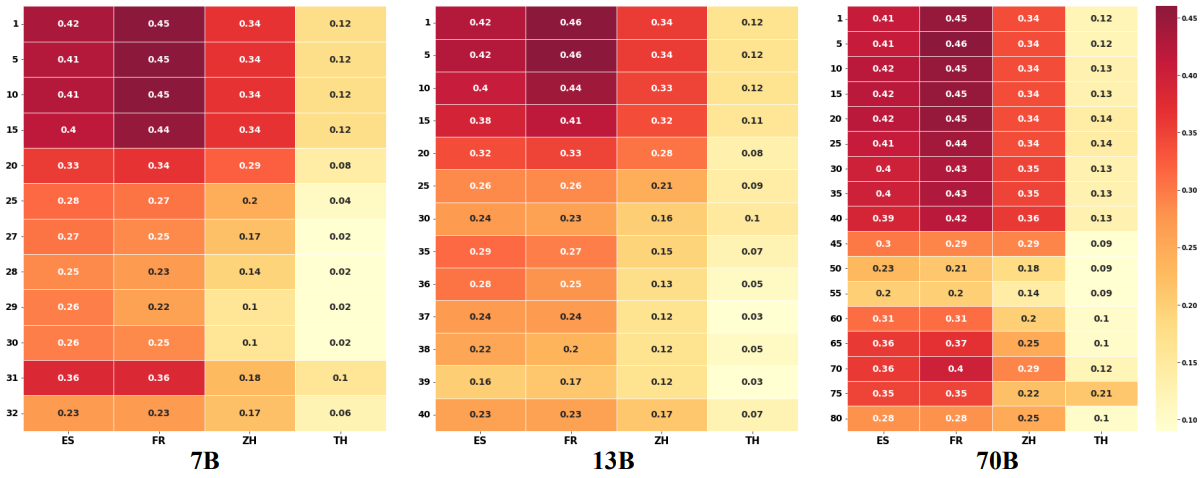

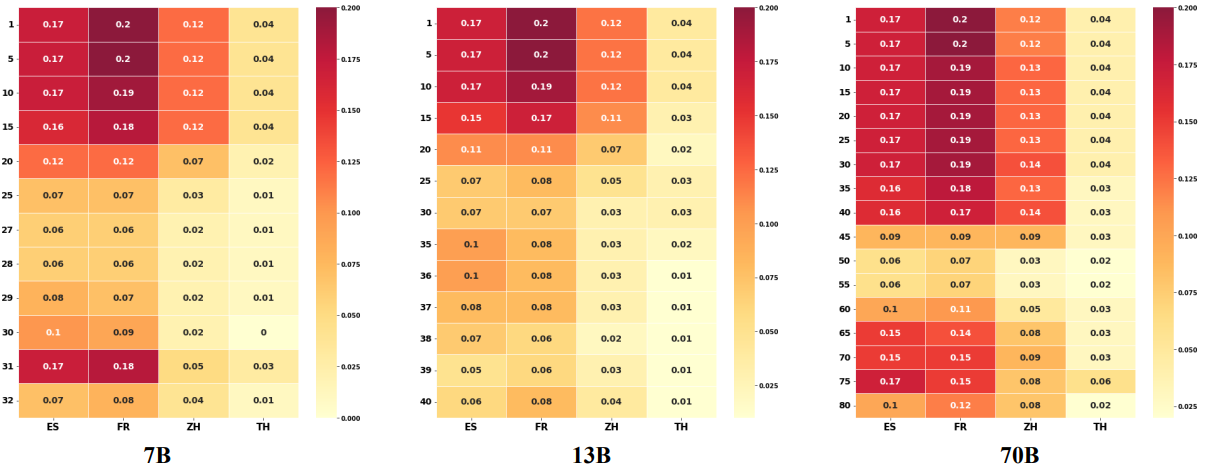

In vertical analysis, the lower layers of LLaMA lack substantial arithmetic and factual knowledge, showcasing logical thinking, multilingual and recognitive abilities, with top layers housing most computational power and real-world knowledge.

We hope these findings offer new observations on LLaMA's capabilities and insights into the current state of LLMs.
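The vertical (layer-wise) comparison comes down to computing one accuracy per probed layer and seeing where it peaks. The sketch below shows that bookkeeping under stated assumptions: `layerwise_accuracy` is a hypothetical helper, and the predictions are made-up toy values, not results from the paper.

```python
from typing import List, Sequence


def layerwise_accuracy(preds_by_layer: Sequence[Sequence[int]],
                       gold: Sequence[int]) -> List[float]:
    """Compute one accuracy value per layer.

    preds_by_layer[i][j] is the option index predicted from layer i
    for question j; gold[j] is the correct option index.
    """
    return [
        sum(p == g for p, g in zip(preds, gold)) / len(gold)
        for preds in preds_by_layer
    ]


# Toy data (hypothetical): three probed layers, four questions.
gold = [1, 0, 2, 1]
preds_by_layer = [
    [0, 0, 1, 3],  # a lower layer
    [1, 0, 1, 3],  # a middle layer
    [1, 0, 2, 1],  # the top layer
]

acc = layerwise_accuracy(preds_by_layer, gold)
best_layer = max(range(len(acc)), key=acc.__getitem__)
print(acc, best_layer)
```

Repeating the same computation for each model size gives the horizontal comparison.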

- Results

- Overall Probing

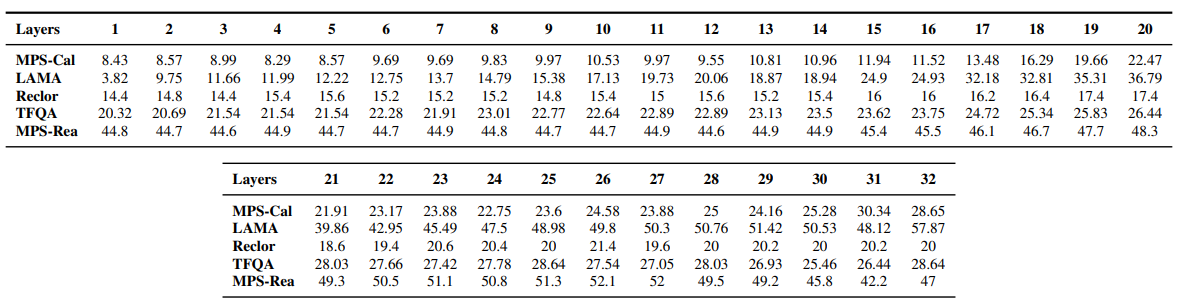

- 7B Probing

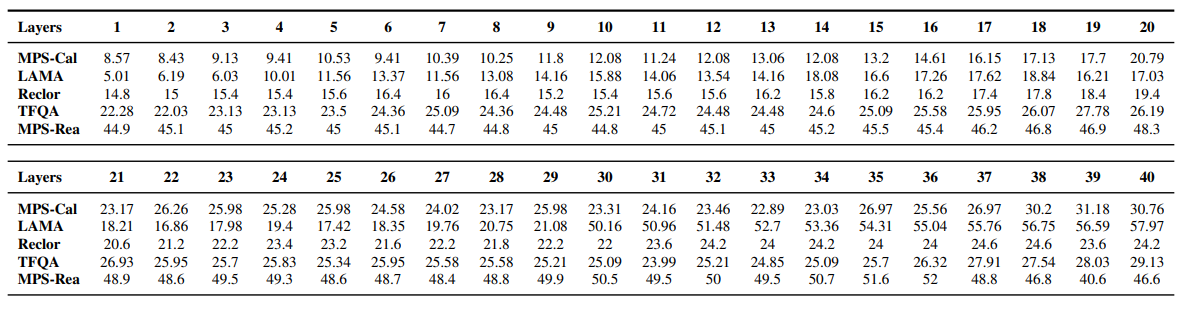

- 13B Probing

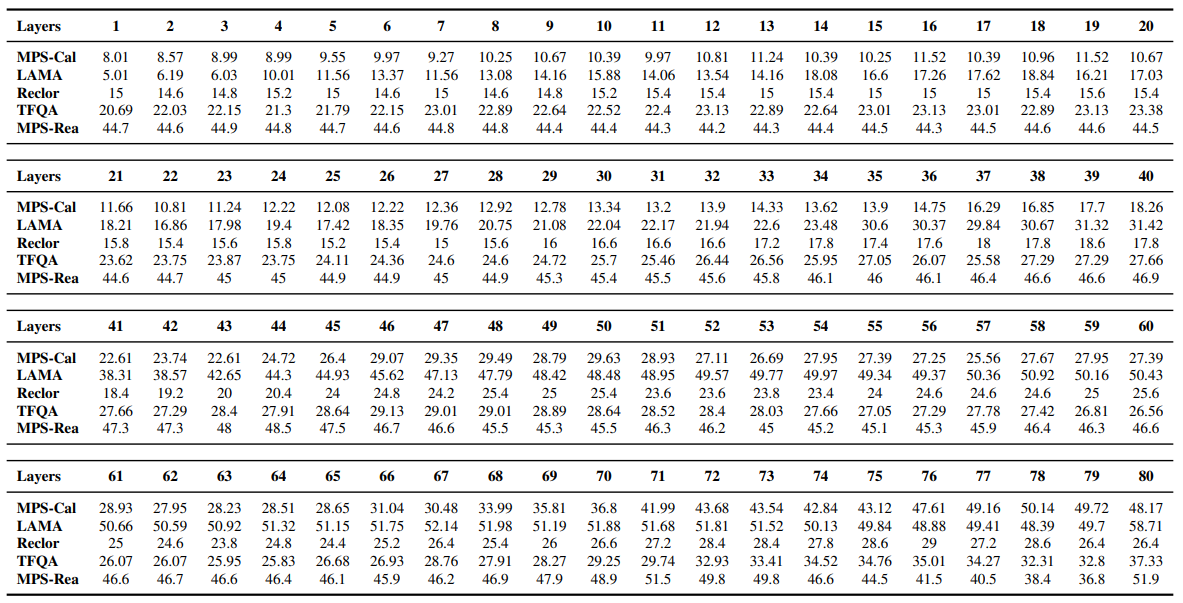

- 70B Probing

- Calculation Probing

- Cross-lingual Probing

- Reproduce

- Citation

pip install -r requirements.txt

For each subtask (MPS-REA, MPS-CAL, XMPS-REA, and XMPS-CAL), use the corresponding data for probing.

bash test_math.sh

We use a subset from LAMA as a testbed for our probing task.

bash test_factural.sh

We use the TruthfulQA MC1 and MC3 tasks as a testbed for our probing task.

bash test_tfqa.sh
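For readers unfamiliar with the two metrics, the sketch below follows the definitions commonly used for TruthfulQA multiple-choice evaluation: MC1 checks whether the best true answer outscores every false answer, and MC3 is the fraction of true answers that outscore every false answer. Whether `test_tfqa.sh` computes them exactly this way is an assumption; the function names and scores here are illustrative.

```python
from typing import Sequence


def mc1(scores_true: Sequence[float], scores_false: Sequence[float],
        best_index: int = 0) -> float:
    """1.0 if the designated best true answer beats all false answers, else 0.0.

    best_index marks which entry of scores_true is the reference best
    answer (assumed here to be the first one).
    """
    return 1.0 if scores_true[best_index] > max(scores_false) else 0.0


def mc3(scores_true: Sequence[float], scores_false: Sequence[float]) -> float:
    """Fraction of true answers whose score beats every false answer."""
    max_false = max(scores_false)
    return sum(s > max_false for s in scores_true) / len(scores_true)


# Toy log-likelihood scores (hypothetical) for one question.
scores_true = [-1.0, -3.0]
scores_false = [-2.0, -4.0]
print(mc1(scores_true, scores_false), mc3(scores_true, scores_false))
```

Averaging these per-question values over the dataset gives the reported MC1 and MC3 numbers under this reading.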

We use the ReClor eval set as a testbed for our probing task.

bash test_logical.sh

@article{chen2023beyond,
  title={Is Bigger and Deeper Always Better? Probing LLaMA Across Scales and Layers},
  author={Chen, Nuo and Wu, Ning and Liang, Shining and Gong, Ming and Shou, Linjun and Zhang, Dongmei and Li, Jia},
  journal={arXiv preprint arXiv:2312.04333},
  year={2023}
}