If our project is helpful for your research, please consider citing:

@inproceedings{nguyen2024nope,
title={{NOPE: Novel Object Pose Estimation from a Single Image}},
author={Nguyen, Van Nguyen and Groueix, Thibault and Ponimatkin, Georgy and Hu, Yinlin and Marlet, Renaud and Salzmann, Mathieu and Lepetit, Vincent},
booktitle={{Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition}},
year=2024
}

You can also give the repository a star ⭐ if the code is useful to you.

If you like this project, check out related works from our group:

- GigaPose: Fast and Robust Novel Object Pose Estimation via One Correspondence (CVPR 2024)

- CNOS: A Strong Baseline for CAD-based Novel Object Segmentation (ICCVW 2023)

- Templates for 3D Object Pose Estimation Revisited: Generalization to New Objects and Robustness to Occlusions (CVPR 2022)

- PIZZA: A Powerful Image-only Zero-Shot Zero-CAD Approach to 6DoF Tracking (3DV 2022)

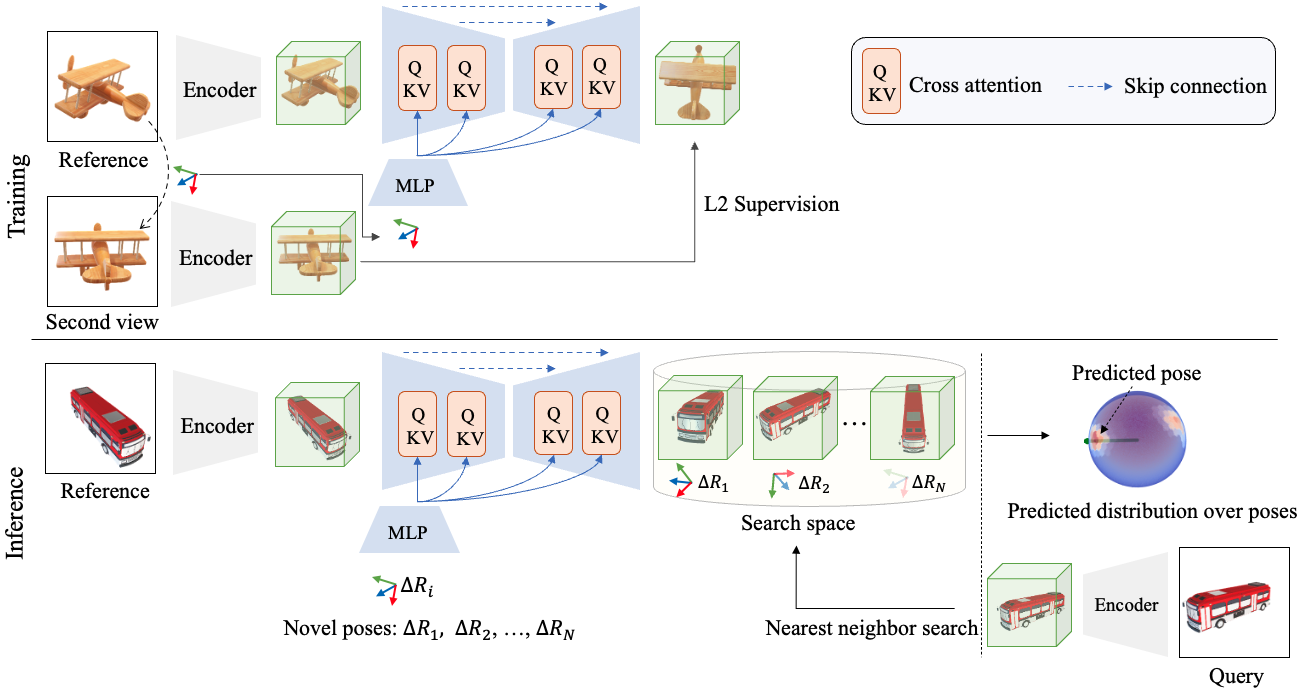

Abstract: The practicality of 3D object pose estimation remains limited for many applications due to the need for prior knowledge of a 3D model and a training period for new objects. To address this limitation, we propose an approach that takes a single image of a new object as input and predicts the relative pose of this object in new images without prior knowledge of the object’s 3D model and without requiring training time for new objects and categories. We achieve this by training a model to directly predict discriminative embeddings for viewpoints surrounding the object. This prediction is done using a simple U-Net architecture with attention and conditioned on the desired pose, which yields extremely fast inference. We compare our approach to state-of-the-art methods and show it outperforms them both in terms of accuracy and robustness.
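The abstract says the model predicts embeddings for viewpoints surrounding the object. One simple way such embeddings could be turned into a relative pose is nearest-neighbour retrieval: embed the query image, compare it with each predicted viewpoint embedding by cosine similarity, and return the best-scoring viewpoint. The sketch below assumes that retrieval scheme; `retrieve_relative_pose`, the `(azimuth, elevation)` pose format and the toy vectors are hypothetical illustrations, not the NOPE code.

```python
import math
from typing import List, Sequence, Tuple, TypeVar

Pose = TypeVar("Pose")  # any pose representation, e.g. (azimuth, elevation)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("zero-length embedding")
    return dot / (norm_a * norm_b)


def retrieve_relative_pose(
    query: Sequence[float],
    template_embeddings: Sequence[Sequence[float]],
    candidate_poses: Sequence[Pose],
) -> Tuple[Pose, List[float]]:
    """Hypothetical helper: return the candidate pose whose predicted
    embedding is most similar to the query-image embedding, plus all scores."""
    if len(template_embeddings) != len(candidate_poses):
        raise ValueError("need exactly one embedding per candidate pose")
    if not candidate_poses:
        raise ValueError("no candidate poses given")
    scores = [cosine_similarity(query, t) for t in template_embeddings]
    best = max(range(len(scores)), key=scores.__getitem__)
    return candidate_poses[best], scores


# Toy usage with made-up 3-D embeddings for three candidate viewpoints.
pose, scores = retrieve_relative_pose(
    [0.6, 0.8, 0.0],
    [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.7, 0.7, 0.0]],
    [(0, 0), (90, 0), (45, 0)],
)
print(pose, scores)
```

Because cosine similarity ignores vector length, only the direction of each embedding matters, which is the usual choice for template-style retrieval.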

Create and activate the conda environment:

conda env create -f environment.yml

conda activate nope

By default, all datasets and experiment outputs are saved under $ROOT_DIR, as defined in the user config.

We provide both pre-rendered datasets and scripts to render the datasets from scratch:

Option 1: Download the pre-rendered datasets from our Hugging Face Hub:

# Download all the datasets:

python -m src.scripts.download_preprocessed_shapenet

Option 2: Render the datasets from scratch:

# Download ShapeNet models:

python -m src.scripts.download_shapenet

# Generate poses:

python -m src.scripts.generate_poses_shapenet

# Render images and templates:

python -m src.scripts.render_images_shapenet

python -m src.scripts.render_template_seen_shapenet

python -m src.scripts.render_template_unseen_shapenet
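The render-from-scratch commands above must run in order (download models, generate poses, then render images and templates). A small driver can chain them and stop at the first failure. This is a sketch, assuming it is run from the repository root inside the `nope` environment; `run_option2` is a hypothetical helper, and only the module names come from this README.

```python
import subprocess
import sys
from typing import List

# Module names copied from the render-from-scratch commands, in the listed order.
OPTION2_STEPS = [
    "src.scripts.download_shapenet",
    "src.scripts.generate_poses_shapenet",
    "src.scripts.render_images_shapenet",
    "src.scripts.render_template_seen_shapenet",
    "src.scripts.render_template_unseen_shapenet",
]


def run_option2(dry_run: bool = False) -> List[List[str]]:
    """Hypothetical helper: run each step as `python -m <module>`.

    With dry_run=True the commands are only printed, not executed.
    check=True makes subprocess.run raise CalledProcessError on the
    first non-zero exit, so later steps never run on a failed earlier one.
    """
    commands = [[sys.executable, "-m", module] for module in OPTION2_STEPS]
    for cmd in commands:
        print("$", " ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)
    return commands
```

Call `run_option2(dry_run=True)` first to review the commands, then `run_option2()` to execute them. Using `sys.executable` ensures every step runs with the same interpreter as the driver.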

Here is the structure of $ROOT_DIR after downloading:

├── $ROOT_DIR
    ├── datasets/
        ├── shapenet/
            ├── test/
            ├── templates/
            ├── models/ # only for option 2
    ├── pretrained/
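To confirm that downloading or rendering finished, you can check that the expected folders exist under $ROOT_DIR. The sketch below is a hypothetical helper, not part of the repository; the expected sub-paths in `EXPECTED_SUBDIRS` are an assumption read off the tree above and should be adjusted to your user config.

```python
from pathlib import Path
from typing import List, Sequence

# Assumed layout, read off the directory tree above; adjust if your
# user config places things elsewhere.
EXPECTED_SUBDIRS = ("datasets/shapenet", "pretrained")


def missing_subdirs(
    root_dir: str, expected: Sequence[str] = EXPECTED_SUBDIRS
) -> List[str]:
    """Hypothetical helper: return the expected sub-directories that are
    not present (as directories) under root_dir."""
    root = Path(root_dir).expanduser()
    return [rel for rel in expected if not (root / rel).is_dir()]
```

For example, `print(missing_subdirs("/path/to/ROOT_DIR"))` prints an empty list when every expected folder is present.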

To test on ShapeNet:

python test_shapeNet.py

To train on ShapeNet:

python train_shapeNet.py