🐦 Follow me on X • 🤗 Hugging Face • 💻 Blog • 📙 Hands-on GNN

Simplify LLM evaluation using a convenient Colab notebook.

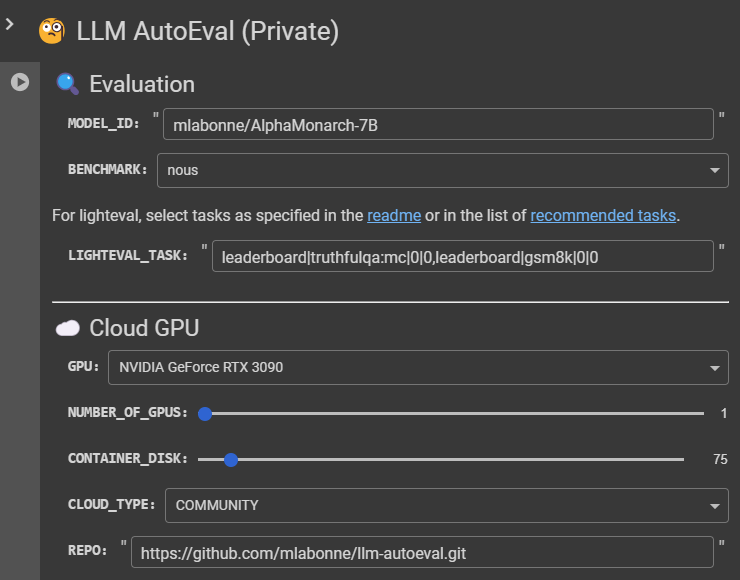

LLM AutoEval simplifies the process of evaluating LLMs using a convenient Colab notebook. You just need to specify the name of your model, a benchmark, a GPU, and press run!

- Automated setup and execution using RunPod.

- Customizable evaluation parameters for tailored benchmarking.

- Summary generation and upload to GitHub Gist for easy sharing and reference.
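As an illustration of the last point, here is a minimal sketch of how a summary could be uploaded as a Gist through GitHub's REST API. It is not the code LLM AutoEval runs: `build_gist_request` and its parameters are hypothetical names, and the function only builds the request so you can inspect it before sending anything.

```python
import json
import urllib.request


def build_gist_request(token, filename, content, description="", public=True):
    """Build (but do not send) a POST request that creates a GitHub Gist.

    Hypothetical helper for illustration; `token` is a GitHub token
    that has access to the "gist" scope.
    """
    payload = {
        "description": description,
        "public": public,  # False creates a secret gist
        "files": {filename: {"content": content}},
    }
    return urllib.request.Request(
        "https://api.github.com/gists",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Keeping the build step separate from the network call makes the payload easy to check first.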

Note: This project is in the early stages and primarily designed for personal use. Use it carefully and feel free to contribute.

- MODEL_ID: Enter the model id from Hugging Face.

- BENCHMARK:

    - nous: List of tasks: AGIEval, GPT4ALL, TruthfulQA, and Bigbench (popularized by Teknium and NousResearch). This is recommended.

    - lighteval: This is a new library from Hugging Face. It allows you to specify your tasks as shown in the readme. Check the list of recommended tasks to see what you can use (e.g., HELM, PIQA, GSM8K, MATH, etc.)

    - openllm: List of tasks: ARC, HellaSwag, MMLU, Winogrande, GSM8K, and TruthfulQA (like the Open LLM Leaderboard). It uses the vllm implementation to enhance speed (note that the results will not be identical to those obtained without using vllm). "mmlu" is currently missing because of a problem with vllm.

- LIGHTEVAL_TASK: You can select one or several tasks as specified in the readme or in the list of recommended tasks.

- GPU: Select the GPU you want for evaluation (see prices here). I recommend using beefy GPUs (RTX 3090 or higher), especially for the Open LLM benchmark suite.

- Number of GPUs: How many GPUs to attach to the pod (using several GPUs is more cost-efficient than one bigger GPU if you need more VRAM).

- CONTAINER_DISK: Size of the disk in GB.

- CLOUD_TYPE: RunPod offers a community cloud (cheaper) and a secure cloud (more reliable).

- REPO: If you made a fork of this repo, you can specify its URL here (the image only runs runpod.sh).

- TRUST_REMOTE_CODE: Models like Phi require this flag to run them.

- PRIVATE_GIST: (W.I.P.) Make the Gist with the results private (true) or public (false).

- DEBUG: The pod will not be destroyed at the end of the run (not recommended).
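To make the parameters above concrete, here is a rough sketch that gathers them in one place and checks them before a pod is started. It is not taken from the notebook: the `EvalConfig` class, its default values, and the `community`/`secure` labels are assumptions made for illustration, and REPO is left out for brevity. Only the benchmark names and task lists come from the descriptions above.

```python
from dataclasses import dataclass

# Task lists as described above; lighteval tasks come from LIGHTEVAL_TASK.
BENCHMARK_TASKS = {
    "nous": ["AGIEval", "GPT4ALL", "TruthfulQA", "Bigbench"],
    "lighteval": [],
    "openllm": ["ARC", "HellaSwag", "MMLU", "Winogrande", "GSM8K", "TruthfulQA"],
}
CLOUD_TYPES = {"community", "secure"}  # hypothetical labels


@dataclass
class EvalConfig:
    """Hypothetical container for the notebook parameters."""
    model_id: str
    benchmark: str = "nous"
    lighteval_task: str = ""
    gpu: str = "RTX 3090"        # hypothetical label, not a RunPod identifier
    number_of_gpus: int = 1
    container_disk: int = 100    # GB, hypothetical default
    cloud_type: str = "community"
    trust_remote_code: bool = False
    private_gist: bool = False
    debug: bool = False

    def validate(self):
        """Raise ValueError on the first inconsistent setting."""
        if not self.model_id:
            raise ValueError("MODEL_ID must not be empty")
        if self.benchmark not in BENCHMARK_TASKS:
            raise ValueError(f"Unknown BENCHMARK: {self.benchmark!r}")
        if self.benchmark == "lighteval" and not self.lighteval_task:
            raise ValueError("LIGHTEVAL_TASK is required for lighteval")
        if self.number_of_gpus < 1 or self.container_disk < 1:
            raise ValueError("GPU count and disk size must be positive")
        if self.cloud_type not in CLOUD_TYPES:
            raise ValueError(f"Unknown CLOUD_TYPE: {self.cloud_type!r}")
        return self
```

Validating up front is cheap compared with paying for a pod that fails a few minutes into the run.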

Tokens use Colab's Secrets tab. Create two secrets called "runpod" and "github" and add the corresponding tokens you can find as follows:

- RUNPOD_TOKEN: Please consider using my referral link if you don't have an account yet. You can create your token here under "API keys" (read & write permission). You'll also need to transfer some money there to start a pod.

- GITHUB_TOKEN: You can create your token here (read & write, can be restricted to "gist" only).

- HF_TOKEN: Optional. You can find your Hugging Face token here if you have an account.
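For reference, secrets stored in Colab's Secrets tab can be read with `google.colab.userdata`. The sketch below wraps that call in a hypothetical `get_secret` helper that falls back to an environment variable when it runs outside Colab. The secret names "runpod" and "github" come from the text above, while "hf" is only an assumed name for the optional Hugging Face token.

```python
import os


def get_secret(name, required=True):
    """Read a secret from Colab's Secrets tab, else from the environment.

    Hypothetical helper for illustration, not part of the notebook.
    """
    try:
        # Only importable inside Colab; raises if the secret is missing
        # or the notebook has not been granted access to it.
        from google.colab import userdata
        value = userdata.get(name)
    except Exception:
        value = os.environ.get(name)
    if not value and required:
        raise KeyError(f"Secret {name!r} not found")
    return value or None


# Example usage (needs the secrets to exist):
# runpod_token = get_secret("runpod")
# github_token = get_secret("github")
# hf_token = get_secret("hf", required=False)  # "hf" is an assumed name
```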

For the nous benchmark suite, you can compare your results with:

- YALL - Yet Another LLM Leaderboard, my leaderboard made with the gists produced by LLM AutoEval.

- Models like OpenHermes-2.5-Mistral-7B, Nous-Hermes-2-SOLAR-10.7B, or Nous-Hermes-2-Yi-34B.

- Teknium's evaluations, which he stores in his LLM-Benchmark-Logs.

For lighteval, you can compare your results on a case-by-case basis, depending on the tasks you have selected.

For the openllm benchmark suite, you can compare your results with those listed on the Open LLM Leaderboard.

I use the summaries produced by LLM AutoEval to create YALL - Yet Another LLM Leaderboard, which also plots the results.

Let me know if you're interested in creating your own leaderboard with your gists in one click. This can be easily converted into a small notebook to create this space.

- "Error: File does not exist": This task didn't produce the JSON file that is parsed for the summary. Activate debug mode and rerun the evaluation to inspect the issue in the logs.

- "700 Killed" Error: The hardware is not powerful enough for the evaluation. This happens when you try to run the Open LLM benchmark suite on an RTX 3070 for example.

- Outdated CUDA Drivers: That's unlucky. You'll need to start a new pod in this case.

- "triu_tril_cuda_template" not implemented for 'BFloat16': Switch the image as explained in this issue.
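If you keep the pod logs (for example with debug mode on), the list above can be turned into a small lookup. This is only a sketch: `KNOWN_ISSUES` and `diagnose` are hypothetical names, and the patterns are the message fragments quoted above, not an exhaustive or official list.

```python
# Message fragments from the list above, mapped to the suggested fix.
KNOWN_ISSUES = {
    "File does not exist": (
        "The task produced no JSON file; rerun in debug mode and check the logs."
    ),
    "Killed": (
        "The hardware is not powerful enough; pick a bigger GPU or more GPUs."
    ),
    "triu_tril_cuda_template": (
        "BFloat16 is not supported by this image; switch the image."
    ),
}


def diagnose(log_text):
    """Return the hints whose pattern appears in the given log text."""
    return [hint for pattern, hint in KNOWN_ISSUES.items() if pattern in log_text]
```

For instance, `diagnose("700 Killed")` returns the hardware hint, and a log with none of these fragments returns an empty list.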

Special thanks to burtenshaw for integrating lighteval, EleutherAI for the lm-evaluation-harness, dmahan93 for his fork that adds agieval to the lm-evaluation-harness, Hugging Face for the lighteval library, NousResearch and Teknium for the Nous benchmark suite, and vllm for the additional inference speed.