The Platform Services' Project Registry is a single entry point for the Platform Service intake process. It is where teams can submit requests for provisioning namespaces in OpenShift 4 (OCP4) clusters, as well as perform other tasks such as:

- Update project contact details and other metadata;

- Request that their project namespace set be created on additional clusters;

- Request other resources be provisioned such as KeyCloak realms or Artifactory pull-through repositories.

This repo contains all the components for the Platform Service Registry as well as all the OpenShift 4.x manifests to build and deploy said project. You can find more about each component here:

Find anything relevant to the project as a whole in the repo root, such as the OCP4 manifests, the docker compose file, and the development configuration. Each of the three main components of the project is listed below and has its own build and deployment documentation:

The registry is a typical web app with an API backed by persistent storage. This provides an interface for humans and automations alike, and persists the data in a reliable way. Beyond this, the registry uses NATS to send messages to "smart robots" that perform tasks outside of its scope.

These smart robots include:

| Name | Repo | Description |
|---|---|---|
| Namespace Provisioning | here | This automation implements a GitOps approach to provisioning a namespace set with quotas and various service accounts on our clusters. |

There are lots of moving parts in the registry even though it's a relatively simple web app. Each section below will walk you through the parameters and commands needed to deploy.

ProTip 🤓

All components should have the same label that can be used to remove them. This is useful in dev and test so that multiple deployments can be tested from a clean working namespace:

```console
oc delete all,nsp,en,pvc,sa,secret,role,rolebinding \
  -l "app=platsrv-registry"
```

Assuming you are building both the Patroni and Backup images in an OCP tools namespace, you will need some RBAC to pull said images from tools to your other namespaces for deployment. Read, then run, the RBAC manifest located here. It will grant enough access for the image puller to do its job.

```console
oc apply -f openshift/rbac.yaml
```

As of OCP4, Network Service Policy (NSP) is required to allow all the components (Web, API, DB, and SSO) to communicate. In each of the Web, API and DB deployment manifests there will be a label to uniquely identify each component by role; for the Patroni database there will be a unique characteristic to identify any pod that is part of the cluster. These are used in the NSP to enable communication.

In the tools namespace run this NSP to allow the build to pull resources from the internet:

```console
oc process -f openshift/templates/nsp-tools.yaml \
  -p NAMESPACE=$(oc project --short) | \
  oc apply -f -
```

Then, in each of the other namespaces run the application specific NSP. It will allow each component to talk to one another as necessary. To accommodate the different configuration of NATS in dev and test vs prod some parameters are required by the OCP template:

```console
oc process -f openshift/templates/nsp.yaml \
  -p NAMESPACE=$(oc project --short) \
  -p NATS_NAMESPACE=$(oc project --short) \
  -p NATS_APO_IDENTIFIER="app=nats" | \
  oc apply -f -
```

| Name | Description |
|---|---|
| NAMESPACE | The namespace where the NSP is being deployed. |
| NATS_NAMESPACE | The namespace where NATS exists. |
| NATS_APO_IDENTIFIER | The unique identifier used for NATS. |
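Since the NATS parameters differ between dev/test and prod, it may help to assemble the `-p` arguments in one place. The following is a minimal sketch, not part of this repo: `nsp_params` is a hypothetical helper, and it assumes that in dev and test NATS lives in the same namespace as the registry. The name of the shared NATS namespace in prod is not given in this document, so it has to be passed in explicitly.

```shell
#!/usr/bin/env bash
# Sketch only: nsp_params is a hypothetical helper, not something shipped in this repo.
# It prints the -p arguments expected by openshift/templates/nsp.yaml.
#   $1  NAMESPACE            namespace where the NSP is deployed (required)
#   $2  NATS_NAMESPACE       defaults to $1 (assumed dev/test layout)
#   $3  NATS_APO_IDENTIFIER  defaults to app=nats, as in the command above
nsp_params() {
  local namespace="${1:-}"
  local nats_namespace="${2:-$namespace}"
  local nats_identifier="${3:-app=nats}"

  if [ -z "$namespace" ]; then
    echo "usage: nsp_params NAMESPACE [NATS_NAMESPACE] [NATS_APO_IDENTIFIER]" >&2
    return 1
  fi

  # Emit everything on one line so the caller can rely on word splitting.
  printf '%s %s %s\n' \
    "-p NAMESPACE=$namespace" \
    "-p NATS_NAMESPACE=$nats_namespace" \
    "-p NATS_APO_IDENTIFIER=$nats_identifier"
}
```

With the function loaded, a dev or test deployment could run `oc process -f openshift/templates/nsp.yaml $(nsp_params "$(oc project --short)") | oc apply -f -`, while prod would pass the shared NATS namespace as the second argument. The unquoted substitution is deliberate so that each `-p` pair becomes its own argument; it only works while the values contain no spaces.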

The build and deploy documents for PostgreSQL (Patroni) are located in the db directory of this project.

The community-supported backup container is used to back up the database. Set up the backup container using the Helm chart:

```console
helm repo add bcgov https://bcgov.github.io/helm-charts
helm install db-backup bcgov/backup-storage -f ./openshift/backup/deploy-values.yaml
```

The build and deploy documents for the API are located in the api directory of this project.

The build and deploy documents for the Web are located in the web directory of this project.

The API needs to connect to NATS to message the "smart robots". In production a shared NATS exists; in dev and test there is no such thing, so fire up a standalone NATS instance to accept messages from the API.

Deploy a standalone, single-Pod instance of NATS with the DeploymentConfig provided. Provide its service to the API when deployed so it can find and use this service.

```console
oc process -f openshift/templates/nats.yaml
```

Additional features and fixes can be found in the backlog; find it under issues in this repo.

If you find issues with this application suite please create an issue describing the problem in detail.

Contributions are welcome. Please ensure they relate to an issue. See our Code of Conduct that is included with this repo for important details on contributing to Government of British Columbia projects.

We suggest running this in a Docker container; you can download Docker here.

This application has a function that invites GitHub users to a specified GitHub organization. To make it work locally, you will need to create your own test GitHub organization and GitHub App.

Step 1

Create one or two GitHub organizations here, or use any existing organization to which you have full access. (Note: the API can invite a user into one or more GitHub orgs. For testing and development purposes, we suggest creating at least two GitHub orgs to make sure this function works properly.)

Step 2

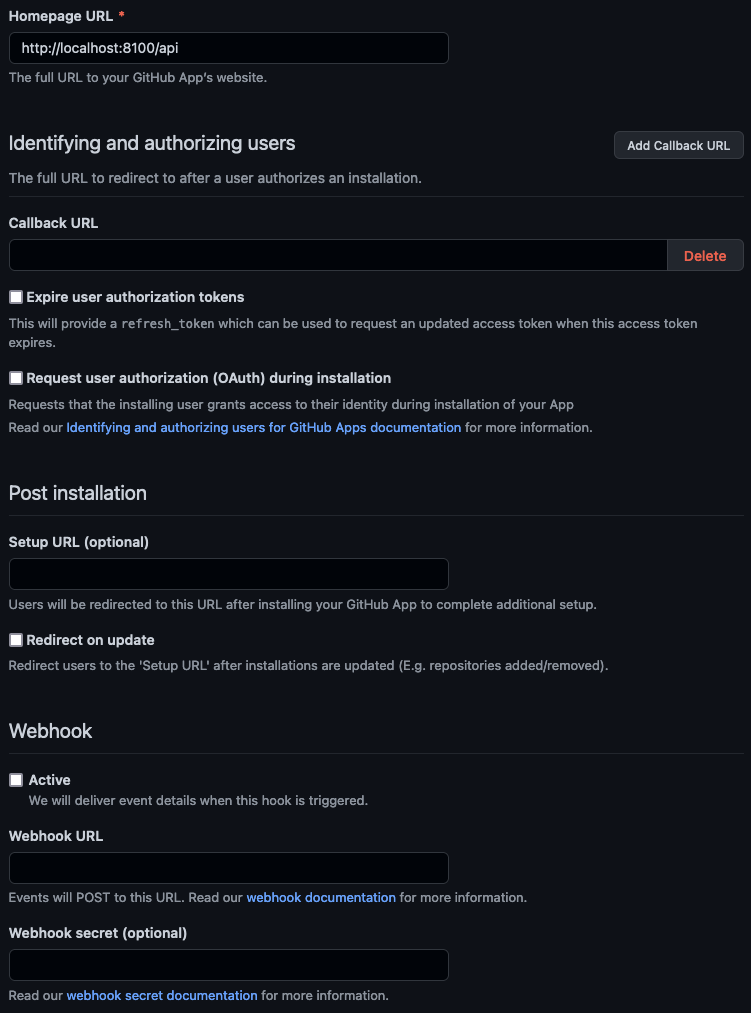

- Under organization Settings => Developer settings => GitHub Apps, create a new GitHub App (https://github.com/organizations/[REPLACE_WITH_YOUR_ORG_NAME]/settings/apps).
- Give your app a meaningful name and description.
- The Homepage URL can be anything; we recommend `http://localhost:8100/api`.
- Make sure the Expire user authorization tokens, Request user authorization (OAuth) during installation, and Webhook: Active boxes are unchecked.

Step 3

- Create and save a client secret; this will be needed as an environment variable.

- Create and save the GitHub App private key (.pem file); this will be needed to deploy the server.

- Click Install App in the sidebar, find the test organization created earlier, and click Install to install the application to the organization.

Step 4

- In `api/src/config`, run `cp config.json.example config.json` to copy `config.json.example` and create `config.json`. Update `orgs` and `primaryOrg` to the name of the organization that you have your GitHub App installed on.
- Copy the private key that you just downloaded from the GitHub App to `api/src/config` and rename it to `github-private-key.pem`.

Step 5

- Copy `.env.example` to create `.env`.
- You can find the `Client ID` and `App ID` on the GitHub App page; copy those values to `GITHUB_CLIENT_ID` and `GITHUB_APP_ID`.
- Copy the client secret that you saved in Step 3 to `GITHUB_CLIENT_SECRET`.
- Fill `GITHUB_ORGANIZATION` with the name of the organization that you have your GitHub App installed on.
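Before starting the containers, it can be useful to confirm that the four GitHub variables from Step 5 actually made it into the `.env` file. The sketch below is an assumption-laden helper rather than part of the project: `check_github_env` is a hypothetical name, the path to `.env` is passed in because this document does not say which directory holds it, and the check assumes plain `NAME=value` lines without `export` prefixes.

```shell
#!/usr/bin/env bash
# Sketch only: check_github_env is a hypothetical helper, not part of this repo.
# It reports which of the GitHub variables from Step 5 are missing or empty.
#   $1  path to the .env file (defaults to ./.env, which is an assumption)
check_github_env() {
  local env_file="${1:-.env}"
  local missing=0
  local name

  if [ ! -f "$env_file" ]; then
    echo "missing file: $env_file" >&2
    return 1
  fi

  for name in GITHUB_CLIENT_ID GITHUB_APP_ID GITHUB_CLIENT_SECRET GITHUB_ORGANIZATION; do
    # A variable counts as set only when the line reads NAME=<at least one character>.
    if ! grep -Eq "^${name}=.+" "$env_file"; then
      echo "not set: $name" >&2
      missing=1
    fi
  done

  return "$missing"
}
```

Calling `check_github_env path/to/.env` returns a non-zero status and names each unset variable on stderr, so it can gate a start-up script. It does not validate the values themselves; a wrong client secret will still pass.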

Last Step

- In the application root directory, run `mkdir pg_data`.
- In the `/api` directory, run `npm install`, then `npm run build`.
- In the `/web` directory, run `npm install`.

Now you are ready to go back to the application root directory and run `docker-compose up -d`.

After the Docker containers finish starting, you can visit your local build at: http://localhost:8101/public-landing
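The containers may take a little while to become reachable after `docker-compose up -d` returns. One way to wait for them, sketched here under the assumption that a simple retry loop is enough, is a small helper that reruns a probe command until it succeeds. `wait_until` is a hypothetical name and is not provided by this project.

```shell
#!/usr/bin/env bash
# Sketch only: wait_until is a hypothetical helper, not part of this repo.
# It reruns a command until it succeeds or the attempts are used up.
#   $1    maximum number of attempts
#   $2    seconds to sleep between attempts
#   $3..  the probe command and its arguments
wait_until() {
  local attempts="$1"
  local delay="$2"
  shift 2

  local i=1
  while [ "$i" -le "$attempts" ]; do
    # Probe output is discarded; only the exit status matters.
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    # Sleep only when another attempt is still to come.
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    i=$((i + 1))
  done

  return 1
}
```

For example, `wait_until 30 2 curl -fsS http://localhost:8101/public-landing` would poll the landing page for up to about a minute, assuming `curl` is installed. The attempt count and delay are arbitrary illustrative values.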

- Create an empty pg_data folder for the database: `mkdir pg_data`
- Install and build the api dependencies and application: `cd api && npm install && npm run build && cd ..`
- Install the web dependencies: `cd web && npm install && cd ..`
- Build the docker images: `docker-compose build`
- Start Platform Services Registry: `docker-compose up -d`

See the included LICENSE file.