A collection of strong multimodal models for building the best multimodal agents

- 07/04/2024: OmAgent is now open-sourced. 🌟 Dive into our multi-modal agent framework for complex video understanding. Read more in our paper.

- 06/09/2024: OmChat has been released. 🎉 Discover the capabilities of our multimodal language models, featuring robust video understanding and support for context lengths of up to 512k. More details in the technical report.

- 03/12/2024: OmDet is now open-sourced. 🚀 Experience our fast and accurate Open Vocabulary Detection (OVD) model, achieving 100 FPS. Learn more in our paper.

Here are the various projects we've worked on at OmLab:

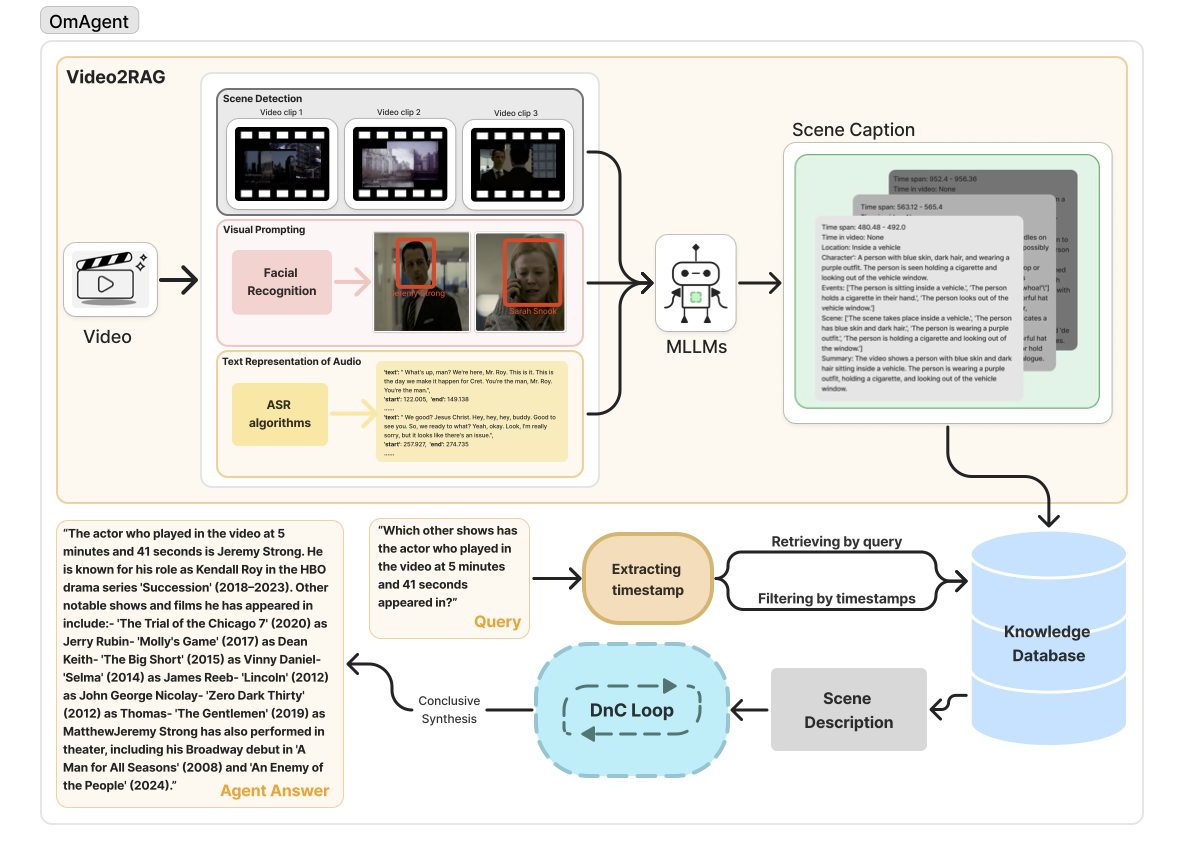

⭐️ OmAgent

Multi-modal Agent Framework for Complex Video Understanding with Task Divide-and-Conquer

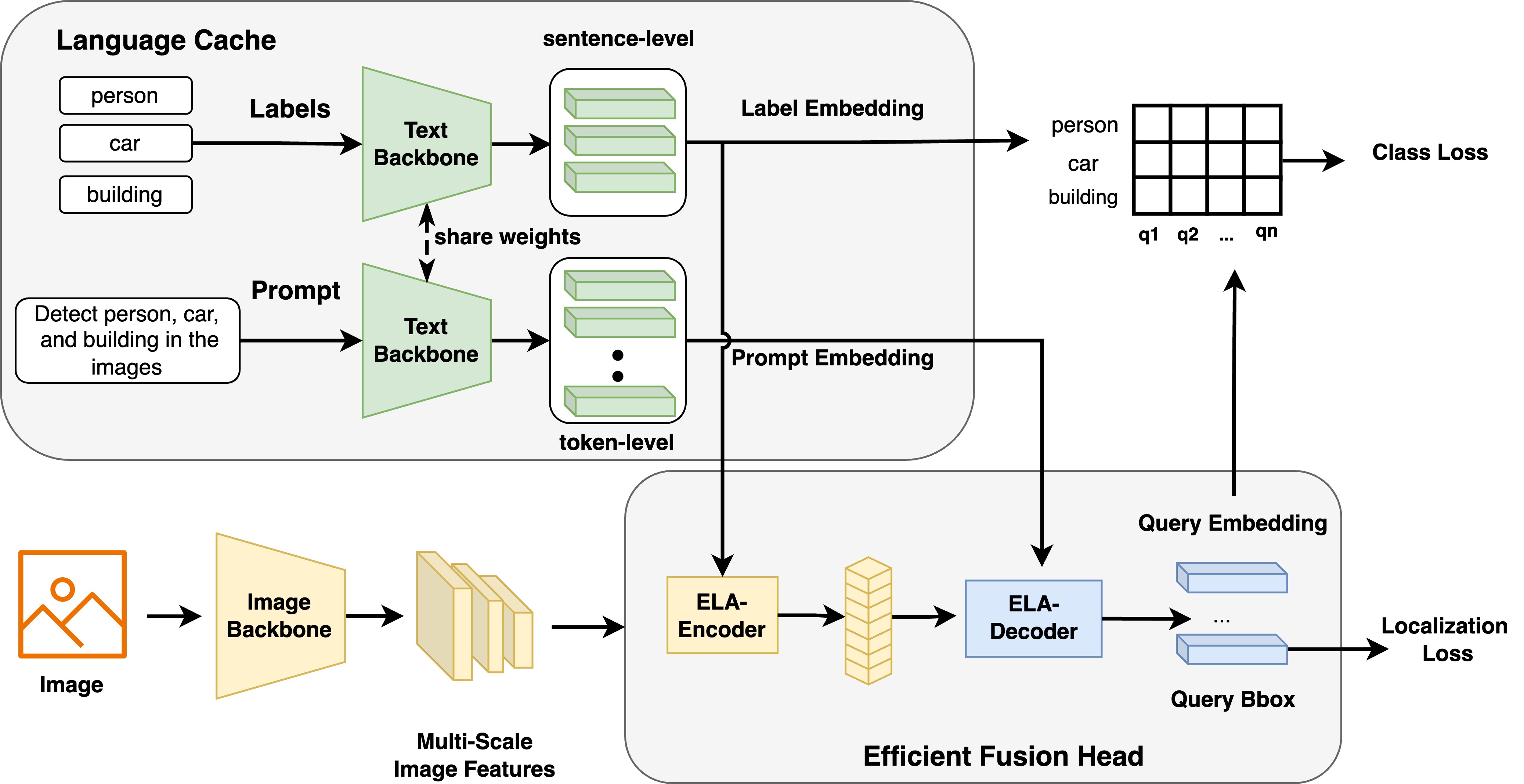

⭐️ OmDet

Fast and accurate open-vocabulary end-to-end object detection

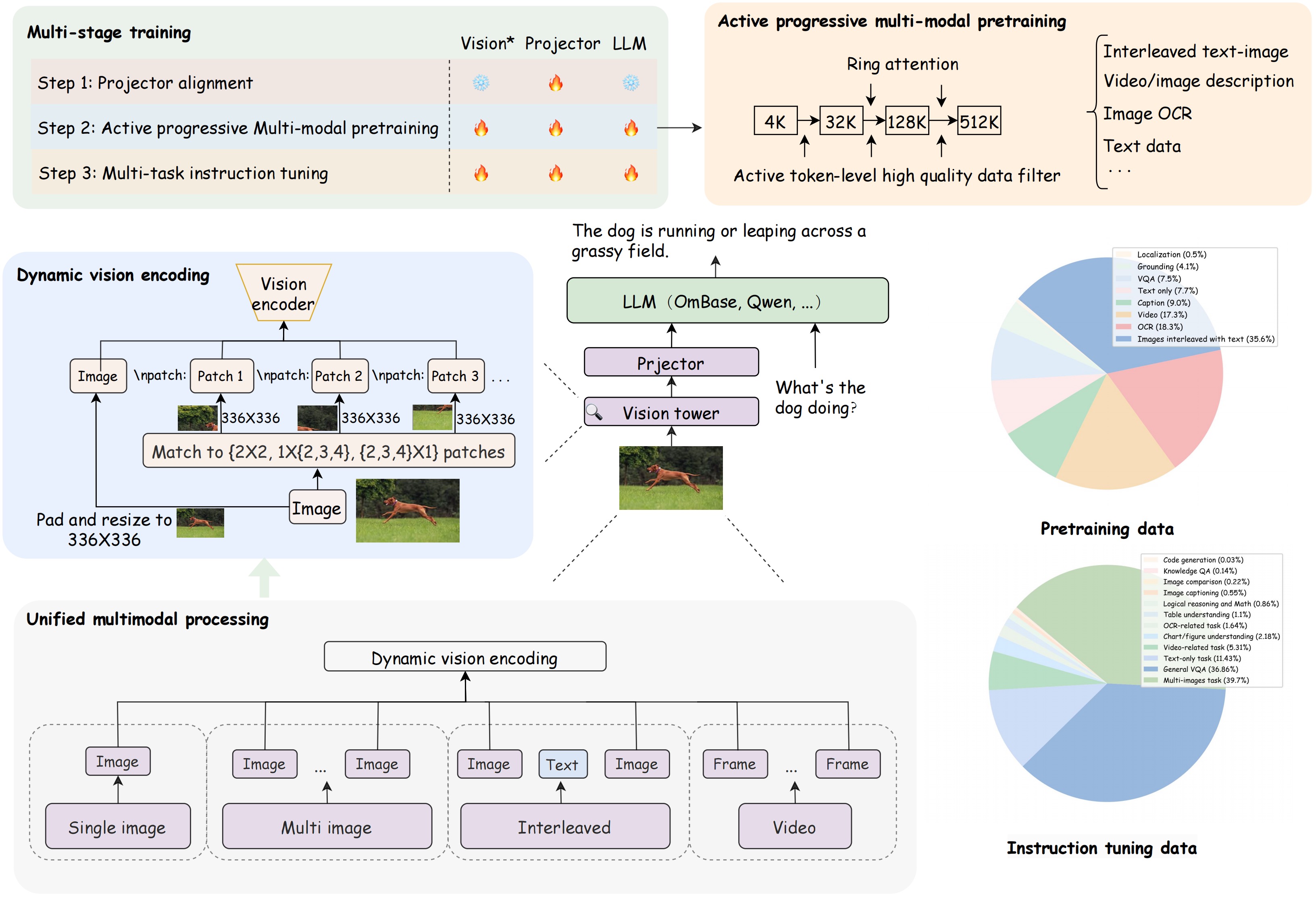

⭐️ OmChat

Multimodal Language Models with Strong Long Context and Video Understanding

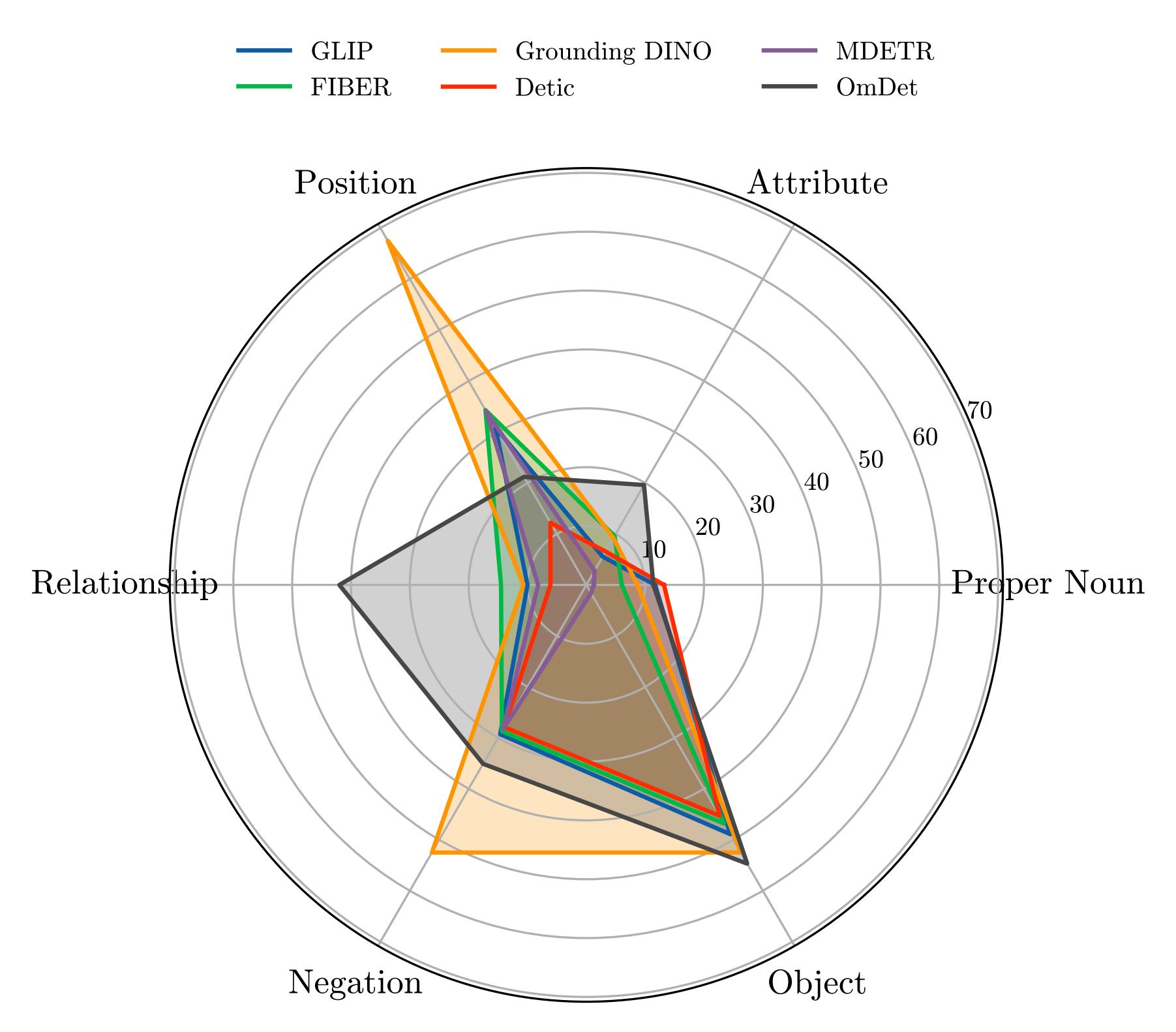

⭐️ OVDEval

A Comprehensive Evaluation Benchmark for Open-Vocabulary Detection

Here are the research papers published by OmLab:

🏷️ How to Evaluate the Generalization of Detection? A Benchmark for Comprehensive Open-Vocabulary Detection

Published in: AAAI, 2024

Published in: IET Computer Vision, 2024

Published in: arXiv, 2024

🏷️ OmAgent: A Multi-modal Agent Framework for Complex Video Understanding with Task Divide-and-Conquer

Published in: arXiv, 2024

🏷️ OmChat: A Recipe to Train Multimodal Language Models with Strong Long Context and Video Understanding

Published in: arXiv, 2024

Published in: NAACL, 2021

For more information, feel free to reach out to us at tianchez@hzlh.com.

Thank you for visiting OmModel's repository. We hope you find our projects and papers insightful and useful!