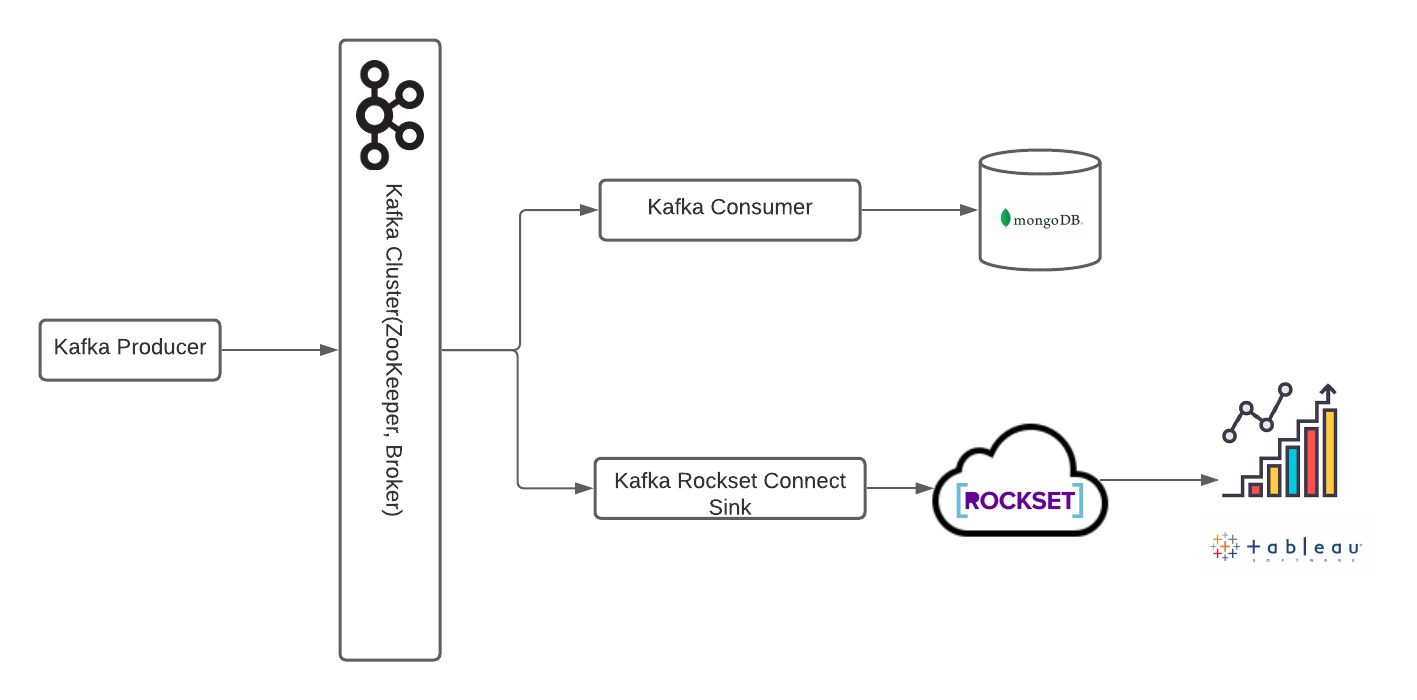

The data pipeline provides near real-time analysis of sales for a fictional company. The project has the following components:

- `kafkaProducer.py` generates dummy sales data and streams it to the Kafka topic `sales`. Each record carries three sales attributes: State, Category, and Platform.

- `kafkaConsumer.py` consumes the stream that the producer publishes to the topic `sales` and writes the records to the `salesRecords` collection in MongoDB.

- The connector integrates Kafka with the Rockset cluster.

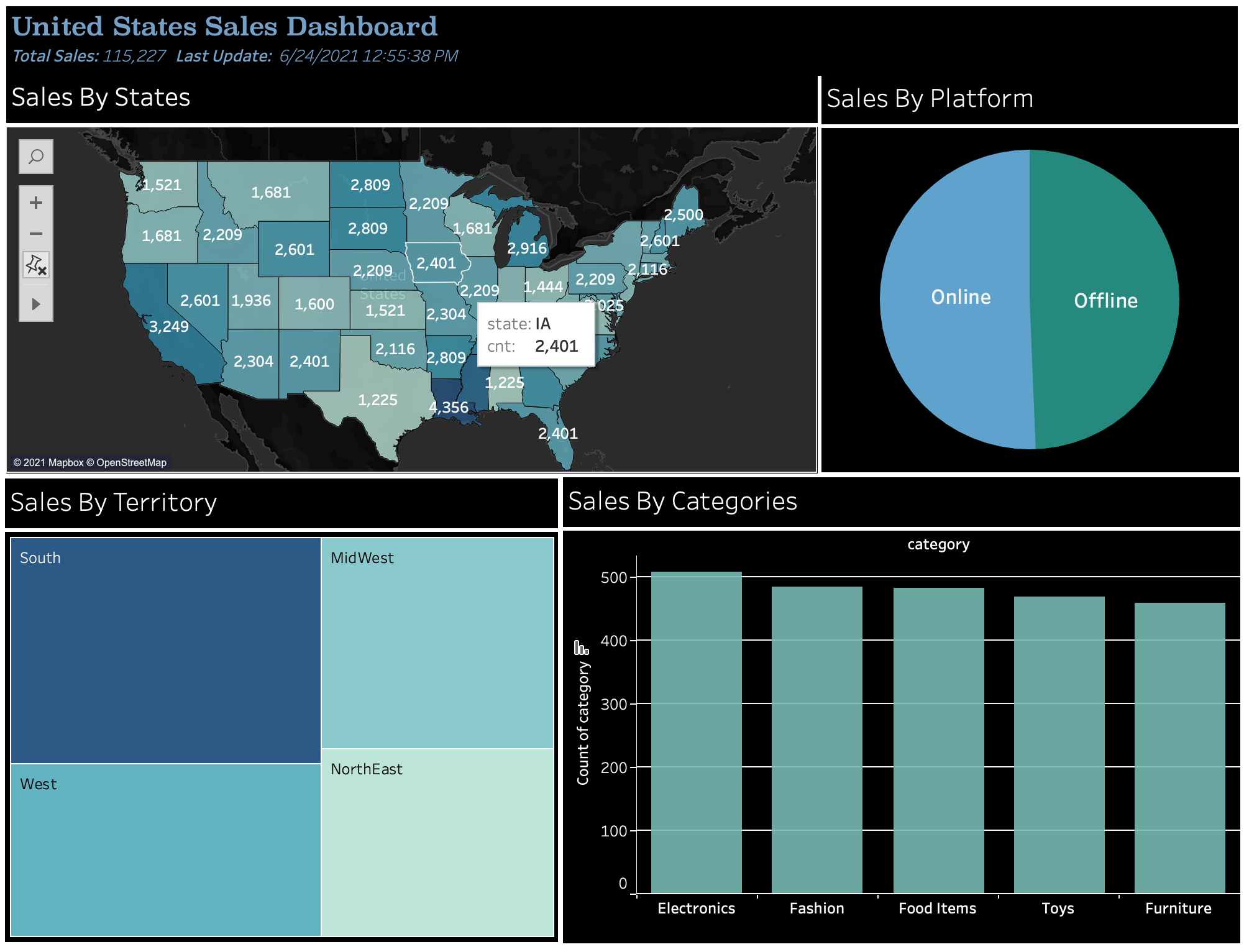

- Custom SQL queries the data in the Rockset cluster, and the results are visualized. The `Sales_Dashboard` dashboard contains the custom SQL and the visualizations.

The dashboard is published on Tableau Public and can be accessed by following this link
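
The producer and consumer steps described above could look roughly like the sketch below. It is not the code in `kafkaProducer.py` or `kafkaConsumer.py`; it only illustrates the flow under some assumptions: the `kafka-python` and `pymongo` packages, a broker at `localhost:9092`, a MongoDB instance at `mongodb://localhost:27017`, a database named `salesDB`, and the sample attribute values are all hypothetical. Only the topic `sales`, the collection `salesRecords`, and the three attribute names come from this README.

```python
import json
import random

# Hypothetical sample values: the README names the attributes, not their values.
STATES = ["California", "Texas", "New York"]
CATEGORIES = ["Electronics", "Clothing", "Grocery"]
PLATFORMS = ["Web", "Mobile", "In-store"]


def make_record(rng=random):
    """Build one dummy sales record with the three attributes."""
    return {
        "State": rng.choice(STATES),
        "Category": rng.choice(CATEGORIES),
        "Platform": rng.choice(PLATFORMS),
    }


def serialize(record):
    """Encode a record as UTF-8 JSON bytes for Kafka."""
    return json.dumps(record).encode("utf-8")


def deserialize(payload):
    """Decode UTF-8 JSON bytes from Kafka back into a dict."""
    return json.loads(payload.decode("utf-8"))


def produce(n, bootstrap_servers="localhost:9092", topic="sales"):
    """Send n dummy records to the topic (broker address is an assumption)."""
    from kafka import KafkaProducer  # kafka-python, imported lazily

    producer = KafkaProducer(
        bootstrap_servers=bootstrap_servers,
        value_serializer=serialize,
    )
    for _ in range(n):
        producer.send(topic, make_record())
    producer.flush()  # block until buffered records are sent
    producer.close()


def consume(
    bootstrap_servers="localhost:9092",
    topic="sales",
    mongo_uri="mongodb://localhost:27017",  # assumed URI
    database="salesDB",  # assumed database name
):
    """Read records from the topic and insert each one into salesRecords."""
    from kafka import KafkaConsumer  # kafka-python, imported lazily
    from pymongo import MongoClient

    collection = MongoClient(mongo_uri)[database]["salesRecords"]
    consumer = KafkaConsumer(
        topic,
        bootstrap_servers=bootstrap_servers,
        value_deserializer=deserialize,
        auto_offset_reset="earliest",
    )
    for message in consumer:  # runs until interrupted
        collection.insert_one(message.value)
```

With a broker and MongoDB running, calling `produce(100)` in one process and `consume()` in another would exercise the flow end to end. The Kafka and MongoDB imports are deferred into the functions so that the record helpers can be tried without either service installed.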