Lihe Yang1 · Bingyi Kang2† · Zilong Huang2

Zhen Zhao · Xiaogang Xu · Jiashi Feng2 · Hengshuang Zhao1*

1HKU 2TikTok

†project lead *corresponding author

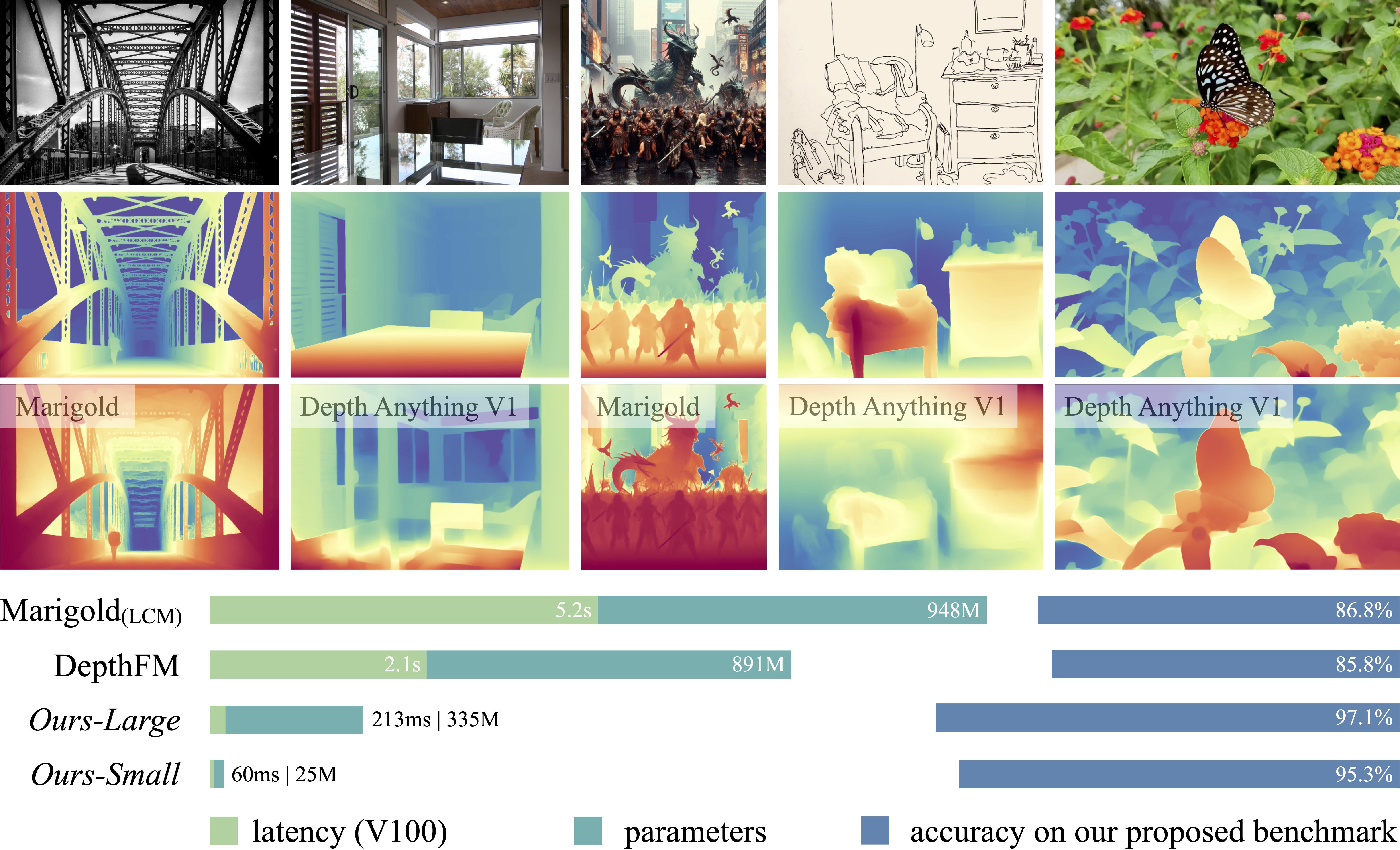

This work presents Depth Anything V2. Compared with V1, this version produces significantly more fine-grained and robust depth predictions. Compared with Stable Diffusion (SD)-based models, it is much more efficient and lightweight.

- 2024-06-14: Paper, project page, code, models, demo, and benchmark are all released.

We provide four models of varying scales for robust relative depth estimation:

| Model | Params | Checkpoint |
|---|---|---|
| Depth-Anything-V2-Small | 24.8M | Download |
| Depth-Anything-V2-Base | 97.5M | Download |
| Depth-Anything-V2-Large | 335.3M | Download |
| Depth-Anything-V2-Giant | 1.3B | Download |
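Each model in the table is selected in code by an encoder name (`vits`, `vitb`, `vitl`, `vitg`). The sketch below collects per-encoder constructor arguments in one place. Only the `vitg` values and the checkpoint filename pattern come from the usage snippet in this README; the `vits`, `vitb`, and `vitl` values and the helper `checkpoint_path` are assumptions that should be checked against the repository before use.

```python
from typing import Any, Dict

# Assumed mapping from encoder name to DPT head settings. Only the 'vitg'
# entry is stated in this README; the other three are assumptions.
MODEL_CONFIGS: Dict[str, Dict[str, Any]] = {
    "vits": {"encoder": "vits", "features": 64, "out_channels": [48, 96, 192, 384]},
    "vitb": {"encoder": "vitb", "features": 128, "out_channels": [96, 192, 384, 768]},
    "vitl": {"encoder": "vitl", "features": 256, "out_channels": [256, 512, 1024, 1024]},
    "vitg": {"encoder": "vitg", "features": 384, "out_channels": [1536, 1536, 1536, 1536]},
}


def checkpoint_path(encoder: str, root: str = "checkpoints") -> str:
    """Hypothetical helper: build the checkpoint filename for an encoder.

    Generalizes the 'depth_anything_v2_vitg.pth' name used in this README.
    """
    if encoder not in MODEL_CONFIGS:
        raise ValueError(
            f"unknown encoder {encoder!r}; expected one of {sorted(MODEL_CONFIGS)}"
        )
    return f"{root}/depth_anything_v2_{encoder}.pth"
```

With such a table, a model could be built as `DepthAnythingV2(**MODEL_CONFIGS[encoder])` and its weights loaded from `checkpoint_path(encoder)`, so switching scale only means changing one string.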

```python
import cv2
import torch

from depth_anything_v2.dpt import DepthAnythingV2

# Take Depth-Anything-V2-Giant as an example
model = DepthAnythingV2(encoder='vitg', features=384, out_channels=[1536, 1536, 1536, 1536])
model.load_state_dict(torch.load('checkpoints/depth_anything_v2_vitg.pth', map_location='cpu'))
model.eval()

raw_img = cv2.imread('your/image/path')  # image loaded by OpenCV (BGR channel order)
depth = model.infer_img(raw_img)  # HxW raw depth map
```

To install:

```bash
git clone https://github.com/DepthAnything/Depth-Anything-V2
cd Depth-Anything-V2
pip install -r requirements.txt
```

To run inference on images:

```bash
python run.py --encoder <vits | vitb | vitl | vitg> --img-path <path> --outdir <outdir> [--input-size <size>] [--pred-only] [--grayscale]
```

Options:

- `--img-path`: You can point it to 1) a directory containing all images of interest, 2) a single image, or 3) a text file listing all image paths.
- `--input-size` (optional): By default, we use an input size of `518` for model inference. You can increase the size for even more fine-grained results.
- `--pred-only` (optional): Only save the predicted depth map, without the raw image.
- `--grayscale` (optional): Save the grayscale depth map, without applying a color palette.
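The three forms accepted by `--img-path` can be pictured as one resolution step that always yields a list of image files. The following is a minimal sketch of that idea, not the code in `run.py`: the function name `resolve_image_paths` and the set of accepted file extensions are assumptions made for illustration.

```python
from pathlib import Path
from typing import List

# Assumed set of image extensions; the real script may accept others.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def resolve_image_paths(img_path: str) -> List[str]:
    """Hypothetical helper: turn an --img-path value into a list of files."""
    p = Path(img_path)
    if p.is_dir():
        # Case 1: a directory. Collect image files recursively, sorted.
        return sorted(
            str(f)
            for f in p.rglob("*")
            if f.is_file() and f.suffix.lower() in IMAGE_SUFFIXES
        )
    if p.suffix.lower() == ".txt":
        # Case 3: a text file with one image path per line (blank lines skipped).
        return [line.strip() for line in p.read_text().splitlines() if line.strip()]
    # Case 2: a single image.
    return [str(p)]
```

Resolving everything to a flat list up front keeps the inference loop identical for all three input forms.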

For example:

```bash
python run.py --encoder vitg --img-path assets/examples --outdir depth_vis
```

If you want to use Depth Anything V2 on videos:

```bash
python run_video.py --encoder vitg --video-path assets/examples_video --outdir video_depth_vis
```

Please note that our larger model has better temporal consistency on videos.
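A raw depth map has to be rescaled before it can be saved as an image, whether as grayscale or with a color palette. The sketch below shows one common way to do this with min-max normalization, assuming the raw depth map is a floating-point HxW NumPy array. The function name `depth_to_uint8` is hypothetical, and the scripts in this repository may normalize differently.

```python
import numpy as np


def depth_to_uint8(depth: np.ndarray) -> np.ndarray:
    """Hypothetical helper: min-max scale a raw depth map to 0..255 (uint8)."""
    depth = depth.astype(np.float32)
    d_min, d_max = float(depth.min()), float(depth.max())
    if d_max - d_min < 1e-8:
        # Constant depth map: avoid division by zero and return all zeros.
        return np.zeros(depth.shape, dtype=np.uint8)
    scaled = (depth - d_min) / (d_max - d_min) * 255.0
    return scaled.astype(np.uint8)
```

The resulting array can be written directly as a grayscale image with `cv2.imwrite`, or colorized first, for example with `cv2.applyColorMap`. Because the scaling is per image, gray levels are not comparable across images or video frames.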

To use our Gradio demo locally:

```bash
python app.py
```

You can also try our online demo.

Note: Compared to V1, we have made a minor modification to the DINOv2-DPT architecture (originating from this issue). In V1, we unintentionally used features from the last four layers of DINOv2 for decoding. In V2, we use intermediate features instead. Although this modification did not improve details or accuracy, we decided to follow this common practice.
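To make the difference concrete, the sketch below compares which transformer block indices feed the decoder under the two schemes. It assumes four taps and evenly spaced intermediate layers; the exact indices used in this repository may differ. Both function names are hypothetical.

```python
from typing import List


def last_layers(depth: int, n: int = 4) -> List[int]:
    """V1-style taps: the final n blocks of a ViT with `depth` blocks."""
    return list(range(depth - n, depth))


def evenly_spaced_layers(depth: int, n: int = 4) -> List[int]:
    """V2-style taps (assumed spacing): n blocks spread evenly over the depth."""
    return [(i + 1) * depth // n - 1 for i in range(n)]


# For a 12-block encoder:
#   last_layers(12)          -> [8, 9, 10, 11]
#   evenly_spaced_layers(12) -> [2, 5, 8, 11]
```

Intermediate taps give the decoder a mix of shallow and deep features, which is the usual practice for DPT-style heads.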

For metric depth estimation, please refer to the metric depth estimation page.

For the DA-2K benchmark, please refer to the DA-2K benchmark page.

If you find this project useful, please consider citing:

```bibtex
@article{depth_anything_v2,
  title={Depth Anything V2},
  author={Yang, Lihe and Kang, Bingyi and Huang, Zilong and Zhao, Zhen and Xu, Xiaogang and Feng, Jiashi and Zhao, Hengshuang},
  journal={arXiv preprint arXiv:},
  year={2024}
}
```