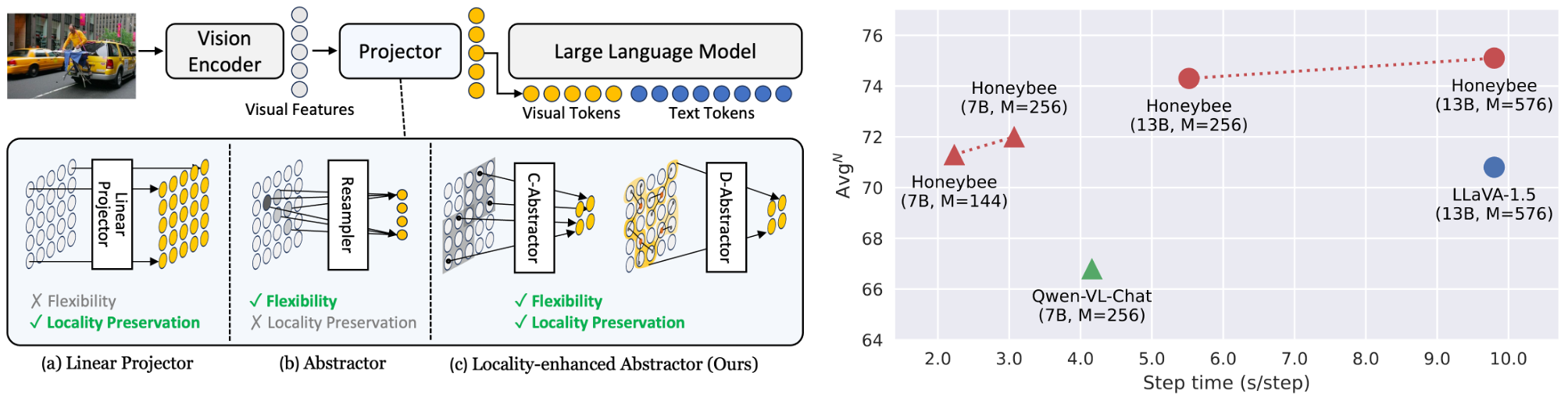

This is an official PyTorch implementation of Honeybee: Locality-enhanced Projector for Multimodal LLM, by Junbum Cha*, Wooyoung Kang*, Jonghwan Mun*, and Byungseok Roh. [paper]

Coming soon:

- Arxiv

- Inference code

- Checkpoints

- Training code

Requirements:

- PyTorch 2.0.1

```bash
pip install -r requirements.txt

# additional requirements for demo
pip install -r requirements_demo.txt
```

We use MMB, MME, SEED-Bench, and LLaVA-Bench (in-the-wild) for model evaluation.

MMB, SEED-I, and LLaVA-w indicate MMB dev split, SEED-Bench images, and LLaVA-Bench (in-the-wild), respectively.

- Comparison with other SoTA methods (Table 6)

| Model | Checkpoint | MMB | MME | SEED-I | LLaVA-w |
|---|---|---|---|---|---|
| Honeybee-C-7B-M144 | download | 70.1 | 1891.3 | 64.5 | 67.1 |
| Honeybee-D-7B-M144 | download | 70.8 | 1835.5 | 63.8 | 66.3 |
| Honeybee-C-13B-M256 | download | 73.2 | 1944.0 | 68.2 | 75.7 |
| Honeybee-D-13B-M256 | download | 73.5 | 1950.0 | 66.6 | 72.9 |

- Pushing the limits of Honeybee (Table 7)

| Model | Checkpoint | MMB | MME | SEED-I | LLaVA-w | ScienceQA |
|---|---|---|---|---|---|---|
| Honeybee-C-7B-M256 | download | 71.0 | 1951.3 | 65.5 | 70.6 | 93.2 |
| Honeybee-C-13B-M576 | download | 73.6 | 1976.5 | 68.6 | 77.5 | 94.4 |

Please follow the official guidelines to prepare benchmark datasets: MMB, MME, SEED-Bench, ScienceQA, and OwlEval. Then, organize the data and checkpoints as follows:

```
data
├── MMBench
│   ├── mmbench_dev_20230712.tsv    # MMBench dev split
│   └── mmbench_test_20230712.tsv   # MMBench test split
│
├── MME
│   ├── OCR                         # Directory for OCR subtask
│   ├── ...
│   └── text_translation
│
├── SEED-Bench
│   ├── SEED-Bench-image            # Directory for image files
│   └── SEED-Bench.json             # Annotation file
│
├── ScienceQA
│   ├── llava_test_QCM-LEPA.json    # Test split annotation file
│   ├── text                        # Directory for meta data
│   │   ├── pid_splits.json
│   │   └── problems.json
│   └── images                      # Directory for image files
│       └── test
│
└── OwlEval
    ├── questions.jsonl             # Question annotations
    └── images                      # Directory for image files

checkpoints
├── 7B-C-Abs-M144
├── 7B-C-Abs-M256
├── 7B-D-Abs-M144
├── 13B-C-Abs-M256
├── 13B-C-Abs-M576
└── 13B-D-Abs-M256
```
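Before launching evaluation, it can help to confirm that the benchmark layout above is in place. The following is a minimal sketch and not part of the repository: `find_missing` and `EXPECTED_PATHS` are hypothetical names, and the path list simply mirrors the `data` tree above. Entries elided with `...` and the checkpoint directories are not checked.

```python
from pathlib import Path

# Paths copied from the data layout above (assumption: run from the repo root).
# Entries shown as "..." in the tree are intentionally left out.
EXPECTED_PATHS = [
    "data/MMBench/mmbench_dev_20230712.tsv",
    "data/MMBench/mmbench_test_20230712.tsv",
    "data/MME/OCR",
    "data/MME/text_translation",
    "data/SEED-Bench/SEED-Bench-image",
    "data/SEED-Bench/SEED-Bench.json",
    "data/ScienceQA/llava_test_QCM-LEPA.json",
    "data/ScienceQA/text/pid_splits.json",
    "data/ScienceQA/text/problems.json",
    "data/ScienceQA/images/test",
    "data/OwlEval/questions.jsonl",
    "data/OwlEval/images",
]


def find_missing(root=".", expected=EXPECTED_PATHS):
    """Return the expected paths that do not exist under root (hypothetical helper)."""
    root = Path(root)
    return [p for p in expected if not (root / p).exists()]


if __name__ == "__main__":
    missing = find_missing()
    if missing:
        print("Missing paths:")
        for p in missing:
            print("  " + p)
    else:
        print("All expected paths found.")
```

Run it from the directory that contains `data`; an empty result means every listed file and directory was found.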

```bash
torchrun --nproc_per_node=auto --standalone eval_tasks.py \
    --ckpt_path checkpoints/7B-C-Abs-M144/last \
    --config \
    configs/tasks/mme.yaml \
    configs/tasks/mmb.yaml \
    configs/tasks/seed.yaml \
    configs/tasks/sqa.yaml
```

We used batch inference to accelerate evaluation. Batch inference does not significantly change average scores, but individual scores may vary slightly (by about ±0.1 to 0.2). Strictly reproducing the official results requires 8 devices (GPUs), because the number of devices influences batch construction and therefore the final scores.

We used the default batch size specified in each task config, except for the largest model (Honeybee-C-13B-M576) where we used B=8 due to memory constraints.
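To illustrate why the device count changes batch construction, here is a small sketch. It assumes samples are sharded across devices in round-robin order, as PyTorch's `DistributedSampler` does without shuffling; whether `eval_tasks.py` shards data this way is an assumption, and `batches_per_rank` is a hypothetical helper.

```python
def batches_per_rank(num_samples, world_size, batch_size):
    """Group sample indices into batches for each rank.

    Assumption: rank r receives indices r, r + world_size, r + 2 * world_size, ...
    (round-robin sharding), and each rank then batches its shard in order.
    """
    result = {}
    for rank in range(world_size):
        shard = list(range(rank, num_samples, world_size))
        result[rank] = [
            shard[i:i + batch_size] for i in range(0, len(shard), batch_size)
        ]
    return result


if __name__ == "__main__":
    # Same 8 samples and batch size, different device counts.
    for world_size in (1, 2):
        print(world_size, batches_per_rank(8, world_size, batch_size=2))
```

With one device, sample 1 shares a batch with sample 0; with two devices, it shares a batch with sample 3. The same sample therefore sees different batch neighbors depending on the device count.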

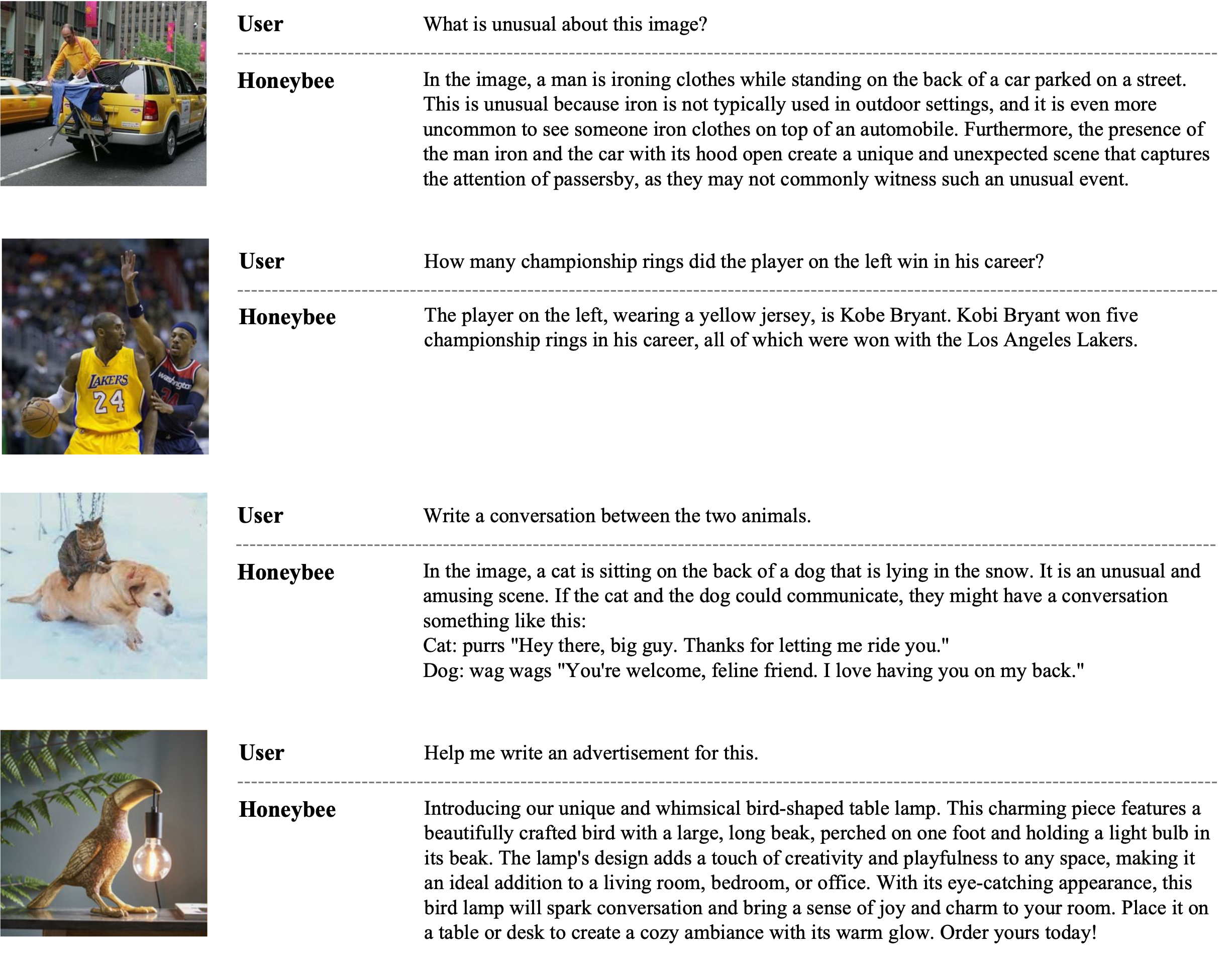

Example inference code is provided in `inference_example.ipynb`.

The example images in ./examples are adopted from mPLUG-Owl.

We also provide a Gradio demo:

```bash
python -m serve.web_server --bf16 --port {PORT} --base-model checkpoints/7B-C-Abs-M144/last
```

```bibtex
@article{cha2023honeybee,
  title={Honeybee: Locality-enhanced Projector for Multimodal LLM},
  author={Junbum Cha and Wooyoung Kang and Jonghwan Mun and Byungseok Roh},
  journal={arXiv preprint arXiv:2312.06742},
  year={2023}
}
```

The source code is licensed under the Apache 2.0 License.

The pretrained weights are licensed under the CC-BY-NC 4.0 License.

Acknowledgement: this project is developed based on mPLUG-Owl, which is also under the Apache 2.0 License.