The official open-source training and inference code for our paper "FAST-VQA: Efficient End-to-end Video Quality Assessment with Fragment Sampling", to appear in ECCV 2022.

Pretrained weights:

Supports:

- Training with Large Dataset: `finetune.py`

- Finetuning into Smaller Datasets: `finetune.py`

- Evaluation: `infer.py`

- Direct API Import: `from fastvqa import deep_end_to_end_vqa`

- Package Installation via `pip install .` in Master Branch.

The Dev_Branch contains several new features that make it more suitable for developing your own deep end-to-end VQA models.

Examples on Live Fragments:

(From LIVE-VQC, 720p, Original Score 38.24)

(From LIVE-VQC, 1080p, Original Score 74.54)

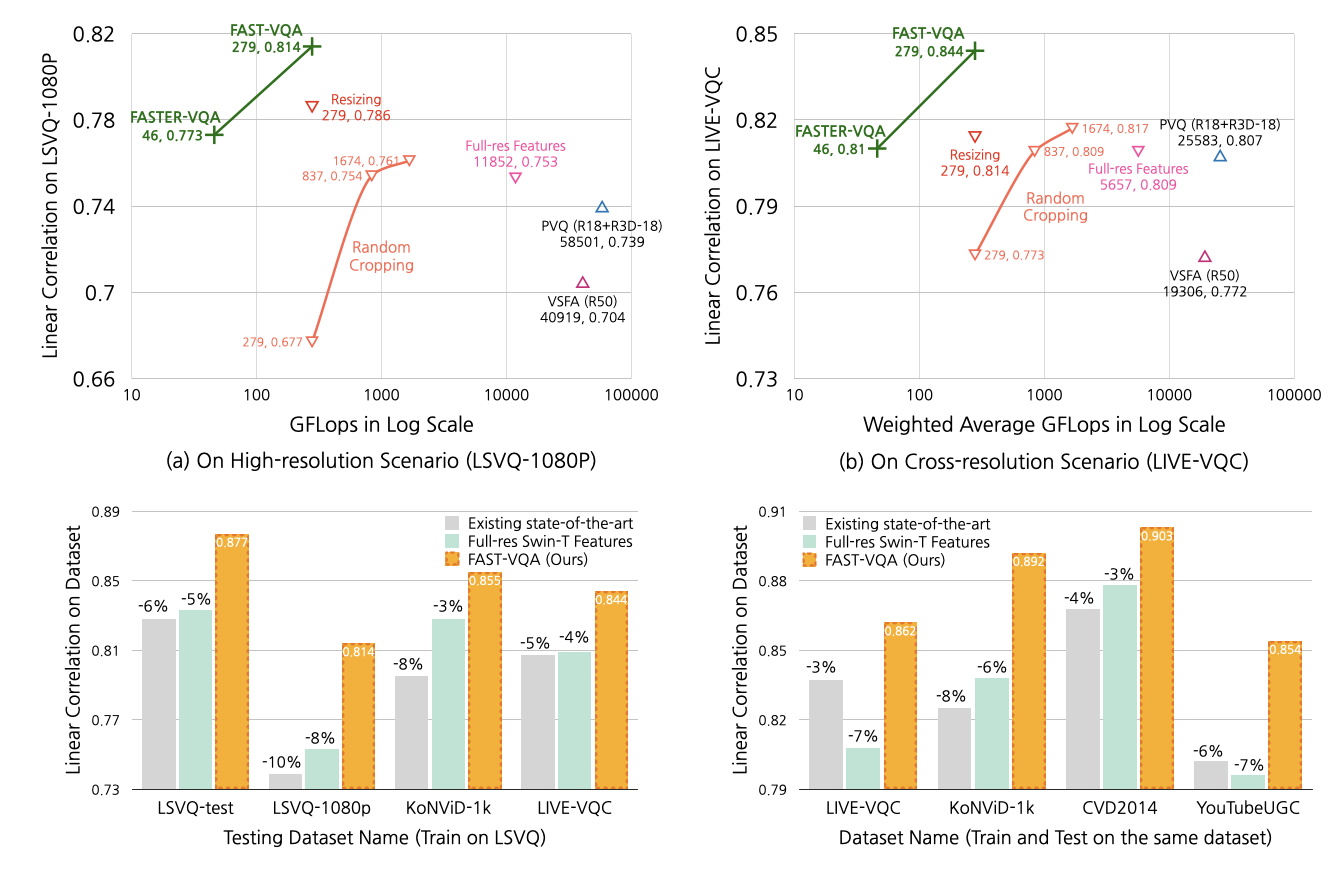

We reach SOTA performance with 210x reduced FLOPs.

We also refresh the SOTA on multiple databases by a very large margin.

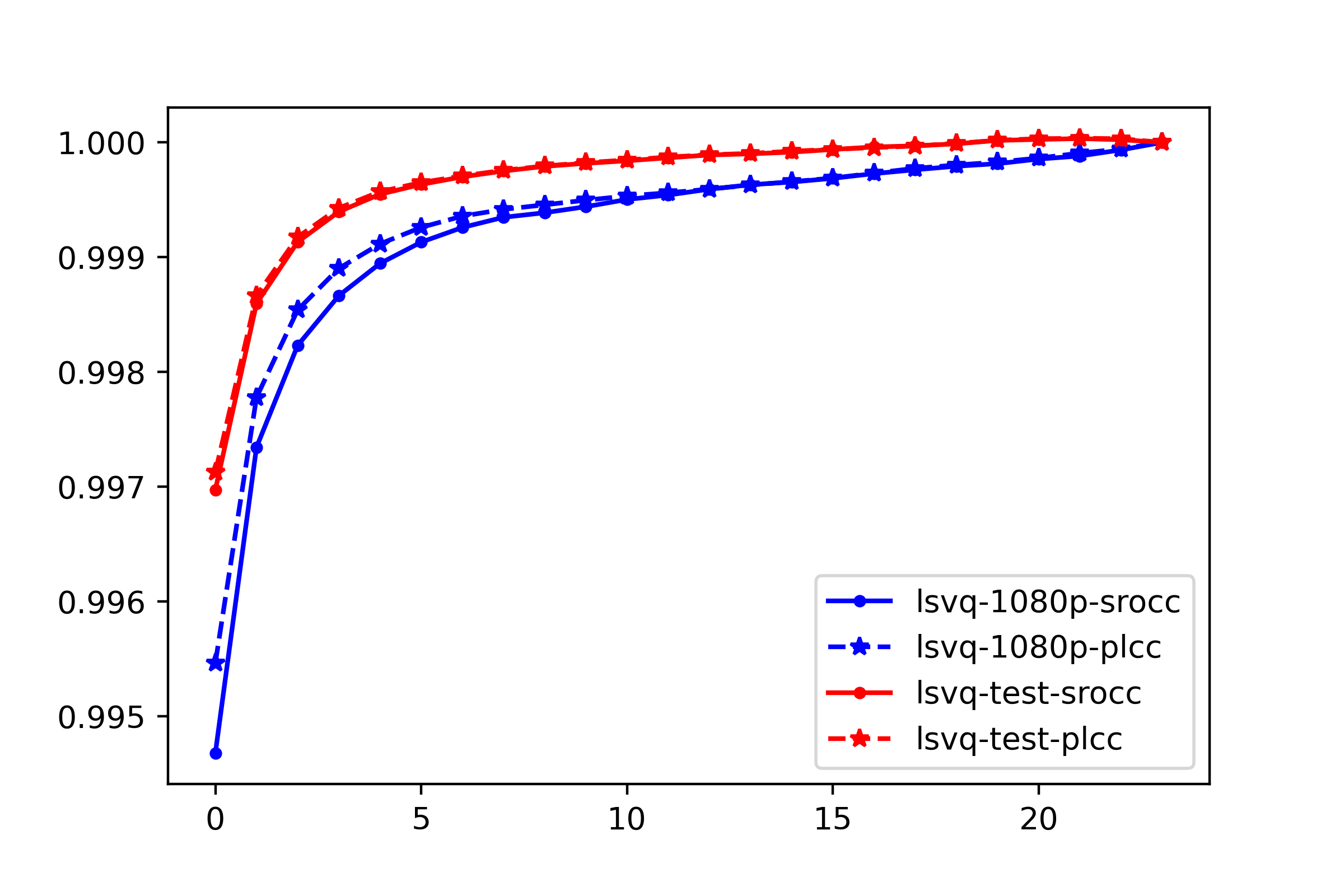

Our sparse and efficient sub-sampling also retains at least 99.5% of the accuracy of extremely dense sampling.
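To make the idea of sparse sub-sampling more concrete, here is a minimal sketch of grid-based patch sampling: the frame is divided into a uniform grid and one small patch is cropped at a random position inside each cell. This is an illustration only, not the code shipped in this repository. The function name `sample_fragment_offsets` and the default grid size (7) and patch size (32) are assumptions chosen for the example.

```python
import random


def sample_fragment_offsets(height, width, grid=7, patch=32, seed=None):
    """Return one (top, left) patch corner per grid cell, in row-major order.

    Hypothetical helper: grid=7 and patch=32 are illustrative defaults,
    not values read from this repository's configuration.
    """
    cell_h, cell_w = height // grid, width // grid
    if cell_h < patch or cell_w < patch:
        raise ValueError("each grid cell must be at least patch x patch pixels")
    rng = random.Random(seed)
    offsets = []
    for row in range(grid):
        for col in range(grid):
            # Pick a random corner so the patch stays inside its own cell.
            top = row * cell_h + rng.randint(0, cell_h - patch)
            left = col * cell_w + rng.randint(0, cell_w - patch)
            offsets.append((top, left))
    return offsets


offsets = sample_fragment_offsets(720, 1280, seed=0)
print(len(offsets), "patches per frame")
```

Stitching the cropped patches back together in grid order would give a small image of `grid * patch` pixels per side, which is far cheaper to process than the full-resolution frame.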

See the quality map demos for examples of local quality maps.

The original method is built with

- python=3.8.8

- torch=1.10.2

- torchvision=0.11.3

while using the decord module to read the original videos (so you do not need to apply any transform to your original .mp4 input).

To get all the requirements, please run

pip install -r requirements.txt

You can run

pip install .

or

python setup.py install

to install the full FAST-VQA with its requirements.

If you would like to visualize the proposed fragments, you can generate the demo visualizations by yourself, via the following script:

python visualize.py -d $DATASET$

You can also visualize the patch-wise local quality maps rendered on fragments, via

python visualize.py -d $DATASET$ -nm

You can install this directory by running

pip install .

Then you can embed these lines into your Python scripts:

import torch

from fastvqa import deep_end_to_end_vqa

# model_type is not defined in this snippet; set it to the FAST-VQA variant you want before running.

dum_video = torch.randn((3,240,720,1080)) # A sample 720p, 240-frame video

vqa = deep_end_to_end_vqa(True, model_type=model_type)

score = vqa(dum_video)

print(score)

This script will automatically download the model weights pretrained on LSVQ.

You can directly benchmark the model with mainstream benchmark VQA datasets.

python inference.py -d $DATASET$

Available datasets are LIVE_VQC, KoNViD, LSVQ, and (experimental) CVD2014 and YouTubeUGC; use 'all' to run inference on all of them.

You might need to download the original Swin-T Weights to initialize the model.

This training will split the dataset into 10 random train/test splits (with random seed 42) and report the best result on the random split of the test dataset.

python train.py -d $DATASET$ --from_ar

Supported datasets are KoNViD-1k, LIVE_VQC, CVD2014, YouTube-UGC.
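The split protocol described above (10 random train/test splits, random seed 42) can be sketched as follows. This is a minimal illustration under stated assumptions, not the repository's own splitting code: the helper name `make_random_splits` and the 80/20 train/test ratio are hypothetical, since the ratio is not stated here.

```python
import random


def make_random_splits(video_ids, num_splits=10, test_fraction=0.2, seed=42):
    """Return a list of (train_ids, test_ids) pairs.

    Assumption: test_fraction=0.2 is an illustrative value only.
    """
    rng = random.Random(seed)  # fixed seed makes the splits reproducible
    n_test = max(1, round(len(video_ids) * test_fraction))
    splits = []
    for _ in range(num_splits):
        shuffled = list(video_ids)
        rng.shuffle(shuffled)
        # The first n_test shuffled items form the test set, the rest the train set.
        splits.append((shuffled[n_test:], shuffled[:n_test]))
    return splits


splits = make_random_splits([f"video_{i:03d}" for i in range(100)])
for index, (train_ids, test_ids) in enumerate(splits):
    print(index, len(train_ids), len(test_ids))
```

Because the generator is seeded once, rerunning the script reproduces the same 10 splits, which keeps results comparable across runs.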

This training will do no split and directly report the best result on the provided validation dataset.

python inference.py -d $TRAINSET$-$VALSET$ --from_ar -lep 0 -ep 30

The supported TRAINSET is LSVQ; VALSET can be LSVQ (LSVQ-test + LSVQ-1080p), KoNViD, or LIVE_VQC.

This training will split the dataset into 10 random train/test splits (with random seed 42) and report the best result on the random split of the test dataset.

python inference.py -d $DATASET$ --from_ar

Supported datasets are KoNViD-1k, LIVE_VQC, CVD2014, YouTube-UGC.

You can add the argument -m fast-m to any of the scripts above (finetune.py, inference.py, visualize.py) to switch from FAST-VQA to FAST-VQA-M.
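If you want to benchmark several datasets in one go, the `-d` and `-m fast-m` arguments described above can be assembled programmatically. The sketch below only builds and prints the command lines; the helper name `build_inference_command` is hypothetical, and the dataset list is simply a subset of the names mentioned in this README.

```python
import shlex

# Dataset names as listed in this README (non-experimental ones).
DATASETS = ["LIVE_VQC", "KoNViD", "LSVQ"]


def build_inference_command(dataset, use_fast_m=False):
    """Build the argument list for one benchmark run (hypothetical helper)."""
    command = ["python", "inference.py", "-d", dataset]
    if use_fast_m:
        # Switch from FAST-VQA to FAST-VQA-M.
        command += ["-m", "fast-m"]
    return command


for dataset in DATASETS:
    print(shlex.join(build_inference_command(dataset, use_fast_m=True)))
```

Each returned list can be passed to `subprocess.run(command, check=True)` from the repository root to actually launch the run.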

Please cite the following paper when using this repo.

@article{wu2022fastquality,

title={FAST-VQA: Efficient End-to-end Video Quality Assessment with Fragment Sampling},

author={Wu, Haoning and Chen, Chaofeng and Hou, Jingwen and Wang, Annan and Sun, Wenxiu and Yan, Qiong and Lin, Weisi},

journal={Proceedings of European Conference of Computer Vision (ECCV)},

year={2022}

}