**Important:** This sample has moved to https://github.com/Azure-Samples/openai-chat-backend-fastapi

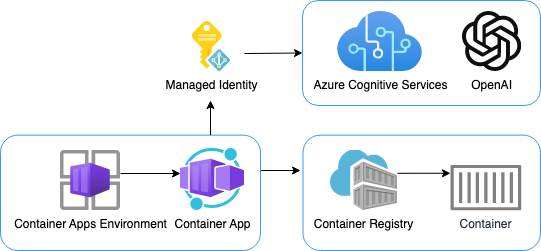

This repository includes a simple Python FastAPI app that streams responses from Azure OpenAI GPT models.

The repository is designed for use with Docker containers, both for local development and deployment, and includes infrastructure files for deployment to Azure Container Apps. 🐳

We recommend first going through the deployment steps before running this app locally, since the local app needs credentials for Azure OpenAI to work properly.

This project has Dev Container support, so it will be set up automatically if you open it in GitHub Codespaces or in local VS Code with the Dev Containers extension.

If you're not using one of those options for opening the project, then you'll need to:

- Create a Python virtual environment and activate it.
- Install the requirements: `python3 -m pip install -r requirements-dev.txt`
- Install the pre-commit hooks: `pre-commit install`

This repo is set up for deployment on Azure Container Apps using the configuration files in the infra folder.

- Sign up for a free Azure account and create an Azure Subscription.

- Request access to Azure OpenAI Service by completing the form at https://aka.ms/oai/access and awaiting approval.

- Install the Azure Developer CLI. (If you open this repository in Codespaces or with the VS Code Dev Containers extension, that part will be done for you.)

- Log in to Azure: `azd auth login`

- Provision and deploy all the resources: `azd up`

  It will prompt you to provide an `azd` environment name (like "chat-app"), select a subscription from your Azure account, and select a location where OpenAI is available (like "francecentral"). Then it will provision the resources in your account and deploy the latest code. If you get an error or timeout with deployment, changing the location can help, as there may be availability constraints for the OpenAI resource.

- When `azd` has finished deploying, you'll see an endpoint URI in the command output. Visit that URI, and you should see the chat app! 🎉

- When you've made any changes to the app code, you can just run: `azd deploy`

You can pair this backend with a frontend of your choice. The frontend needs to be able to read NDJSON from a ReadableStream, and send JSON to the backend with an HTTP POST request. The JSON schema should conform to the Chat App Protocol.
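To illustrate what a frontend has to handle, here is a minimal Python sketch that builds a request body and decodes an NDJSON response stream. It assumes the request carries a `messages` list of `{"role", "content"}` objects and that each streamed line is a JSON object whose `delta` holds a `content` fragment; check the Chat App Protocol itself for the exact schema. The helper names are hypothetical, and the stream is simulated with in-memory byte chunks rather than a real HTTP response.

```python
import json
from typing import Iterable, Iterator


def build_chat_request(history: list[dict[str, str]], user_message: str) -> bytes:
    """Serialize a chat request body (assumed shape: {"messages": [...]})."""
    messages = history + [{"role": "user", "content": user_message}]
    return json.dumps({"messages": messages}).encode("utf-8")


def iter_ndjson(chunks: Iterable[bytes]) -> Iterator[dict]:
    """Yield one parsed JSON object per newline-terminated line.

    Network chunks can split a line anywhere, so partial data is buffered
    until a newline arrives.
    """
    buffer = b""
    for chunk in chunks:
        buffer += chunk
        while b"\n" in buffer:
            line, buffer = buffer.split(b"\n", 1)
            if line.strip():
                yield json.loads(line)
    if buffer.strip():  # trailing object without a final newline
        yield json.loads(buffer)


def collect_reply(chunks: Iterable[bytes]) -> str:
    """Concatenate the (assumed) delta.content fragments into the full reply."""
    parts = []
    for event in iter_ndjson(chunks):
        delta = event.get("delta") or {}
        parts.append(delta.get("content") or "")
    return "".join(parts)


if __name__ == "__main__":
    print(build_chat_request([], "Hello!").decode("utf-8"))
    # Simulated stream: the second object is split across two chunks.
    fake_stream = [
        b'{"delta": {"role": "assistant", "content": "Hi"}}\n{"delta": ',
        b'{"content": " there!"}}\n',
    ]
    print(collect_reply(fake_stream))
```

Buffering until a newline is the key design point: a `ReadableStream` (or any HTTP client) delivers arbitrary byte chunks, not whole lines.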

Here are frontends that are known to work with this backend:

To pair a frontend with this backend, you'll need to:

- Deploy the backend using the steps above. Make sure to note the endpoint URI.

- Open the frontend project.

- Deploy the frontend using the steps in the frontend repo, following their instructions for setting the backend endpoint URI.

- Open this project again.

- Run `azd env set ALLOWED_ORIGINS "https://<your-frontend-url>"`. That URL (or list of URLs) will be specified in the CORS policy for the backend to allow requests from your frontend.

- Run `azd up` to deploy the backend with the new CORS policy.
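Since `ALLOWED_ORIGINS` can hold one URL or a list of URLs, the backend has to turn that value into an allow-list. Below is a minimal sketch of that step, assuming (hypothetically) that multiple origins are comma-separated; check the app's own configuration code for the separator it actually expects. The function names are illustrative. In a FastAPI app, a list like this is the kind of value passed to `CORSMiddleware`'s `allow_origins` parameter.

```python
import os
from typing import Optional


def parse_allowed_origins(raw: Optional[str]) -> list[str]:
    """Split an assumed comma-separated ALLOWED_ORIGINS value into a list.

    Surrounding whitespace and trailing slashes are dropped, because a
    browser's Origin header never ends with "/".
    """
    if not raw:
        return []
    origins = []
    for item in raw.split(","):
        origin = item.strip().rstrip("/")
        if origin:
            origins.append(origin)
    return origins


def is_origin_allowed(origin: str, allowed: list[str]) -> bool:
    """Exact string match of a request's Origin against the allow-list."""
    return origin.rstrip("/") in allowed


if __name__ == "__main__":
    allowed = parse_allowed_origins(os.environ.get("ALLOWED_ORIGINS"))
    print(allowed)
```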

Pricing varies per region and usage, so it isn't possible to predict exact costs for your usage. The majority of the Azure resources used in this infrastructure are on usage-based pricing tiers. However, Azure Container Registry has a fixed cost per registry per day.

You can try the Azure pricing calculator for the resources:

- Azure OpenAI Service: S0 tier, ChatGPT model. Pricing is based on token count.

- Azure Container App: Consumption tier with 0.5 CPU, 1 GiB memory/storage. Pricing is based on resource allocation, and each month allows for a certain amount of free usage.

- Azure Container Registry: Basic tier.

- Log Analytics: Pay-as-you-go tier. Costs are based on data ingested.

To avoid unnecessary costs, delete the resources when you no longer need them by running `azd down`.

Assuming you've run the steps in "Opening the project" and have run `azd up`, you can now run the FastAPI app locally using the uvicorn server:

`python3 -m uvicorn src.app:app --reload`

You may want to save costs by developing against a local LLM server, such as llamafile. Note that a local LLM will generally be slower and not as sophisticated.

Once you've got your local LLM running and serving an OpenAI-compatible endpoint, define LOCAL_OPENAI_ENDPOINT in your .env file.

For example, to point at a local llamafile server running on its default port:

`LOCAL_OPENAI_ENDPOINT="http://localhost:8080/v1"`

If you're running inside a dev container, use this local URL instead:

`LOCAL_OPENAI_ENDPOINT="http://host.docker.internal:8080/v1"`

In addition to the Dockerfile that's used in production, this repo includes a docker-compose.yaml for local development which creates a volume for the app code. That allows you to make changes to the code and see them instantly.

- Install Docker Desktop. If you opened this inside GitHub Codespaces or a Dev Container in VS Code, installation is not needed.

  ⚠️ If you're on an Apple M1/M2, you won't be able to run `docker` commands inside a Dev Container; either use Codespaces or do not open the Dev Container.

- Make sure that the `.env` file exists. The `azd up` deployment step should have created it.

- Add your Azure OpenAI API key to the `.env` file if not already there. You can find your key in the Azure Portal for the OpenAI resource, under the Keys and Endpoint tab.

  `AZURE_OPENAI_KEY="<your-key-here>"`

- Start the services with this command: `docker-compose up --build`

- Click 'http://0.0.0.0:3100' in the terminal, which should open a new tab in the browser. You may need to navigate to 'http://localhost:3100' if that URL doesn't work.
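The `.env` file used for local development holds settings such as `AZURE_OPENAI_KEY` and, optionally, `LOCAL_OPENAI_ENDPOINT`. Here is a minimal sketch of how such a file could be read and used to pick a backend, assuming simple `KEY="value"` lines and assuming that a set `LOCAL_OPENAI_ENDPOINT` takes priority over Azure OpenAI. The function names and the selection rule are hypothetical; the app itself may load settings differently (for example with a library such as python-dotenv).

```python
from pathlib import Path


def parse_env_text(text: str) -> dict[str, str]:
    """Parse simple KEY="value" lines; comments and blank lines are skipped."""
    values: dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        value = value.strip()
        # Drop one pair of matching surrounding quotes, if present.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        values[key.strip()] = value
    return values


def load_env_file(path: str = ".env") -> dict[str, str]:
    """Read and parse the .env file, or return {} if it does not exist."""
    env_path = Path(path)
    if not env_path.exists():
        return {}
    return parse_env_text(env_path.read_text(encoding="utf-8"))


def choose_backend(settings: dict[str, str]) -> dict[str, str]:
    """Hypothetical rule: prefer a local OpenAI-compatible server when configured."""
    local = settings.get("LOCAL_OPENAI_ENDPOINT")
    if local:
        return {"kind": "local", "base_url": local}
    key = settings.get("AZURE_OPENAI_KEY")
    if key:
        return {"kind": "azure", "api_key": key}
    raise ValueError("Set LOCAL_OPENAI_ENDPOINT or AZURE_OPENAI_KEY in .env")


if __name__ == "__main__":
    sample = (
        'AZURE_OPENAI_KEY="<your-key-here>"\n'
        '# LOCAL_OPENAI_ENDPOINT="http://localhost:8080/v1"\n'
    )
    print(choose_backend(parse_env_text(sample)))
```

Uncommenting `LOCAL_OPENAI_ENDPOINT` in the sample would switch the choice to the local server under this assumed rule.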