Lite.AI 🚀🚀🌟 is a simple, low-coupling, and user-friendly C++ library for awesome🔥🔥🔥 AI models, such as YOLOX, YoloV5, YoloV4, DeepLabV3, ArcFace, CosFace, Colorization, SSD, etc. It relies only on OpenCV and commonly used inference engines, namely onnxruntime, ncnn, and mnn. It currently includes mainly CV (Computer Vision 💻) modules, such as object detection, face detection, style transfer, face alignment, face recognition, segmentation, colorization, face attributes analysis, image classification, matting, etc. You can use these awesome models through the lite::cv::Type::Class syntax, such as lite::cv::detection::YoloV5 or lite::cv::face::detect::UltraFace. I do plan to add NLP or ASR modules, but not soon; for now, I am focusing🔍 on Computer Vision 💻. Note that all the models here come from third-party projects, and every model used will be cited. Many thanks to these contributors. Have a good travel ~ 🙃🤪🍀

- 🔥 (20210721) Added YOLOX to Lite.AI! Use it through the lite::cv::detection::YoloX syntax. See demo.

⚠️ (20210716) Lite.AI was renamed from the LiteHub repo! LiteHub will no longer be maintained.


- Working Notes. 👇🏻


Related Lite.AI Projects. 👇🏻

- ❇️ lite.ai (doing✋🏻)

- ❇️ lite.ai-onnxruntime (doing✋🏻)

- ⚠️ lite.ai-mnn (todo)

- ⚠️ lite.ai-ncnn (todo)

- ❇️ lite.ai-release (doing✋🏻)

- ⚠️ lite.ai-python (todo)

- ⚠️ lite.ai-jni (todo)

The code of Lite.AI is released under the MIT License.

- Introduction

- Related Lite.AI Projects

- Dependencies

- Model Zoo

- Build Lite.AI

- Examples for Lite.AI

- Lite.AI API Docs

- Other Docs

- Acknowledgements

- License

- Mac OS.

Install the OpenCV and onnxruntime libraries using Homebrew, or download the prebuilt dependencies from this repo. See third_party and build-docs1 for more details.

brew update

brew install opencv

brew install onnxruntime

- Linux: todo ⚠️

- Windows: todo ⚠️

Inference Engine Plans:

- doing: ❇️ onnxruntime

- todo: ⚠️ NCNN, ⚠️ MNN, ⚠️ OpenMP

| Namespace | Details |
|---|---|
| lite::cv::detection | Object Detection. One-stage and anchor-free detectors, YoloV5, YoloV4, SSD, etc. ✅ |
| lite::cv::classification | Image Classification. DenseNet, ShuffleNet, ResNet, IBNNet, GhostNet, etc. ✅ |
| lite::cv::faceid | Face Recognition. ArcFace, CosFace, CurricularFace, etc. ❇️ |
| lite::cv::face | Face Analysis. detect, align, pose, attr, etc. ❇️ |
| lite::cv::face::detect | Face Detection. UltraFace, RetinaFace, FaceBoxes, PyramidBox, etc. ❇️ |
| lite::cv::face::align | Face Alignment. PFLD (106 landmarks), FaceLandmark1000 (1000 landmarks), PRNet, etc. ❇️ |
| lite::cv::face::pose | Head Pose Estimation. FSANet, etc. ❇️ |
| lite::cv::face::attr | Face Attributes. Emotion, Age, Gender. EmotionFerPlus, VGG16Age, etc. ❇️ |
| lite::cv::segmentation | Object Segmentation. FCN, DeepLabV3, etc. |
| lite::cv::style | Style Transfer. Currently neural style transfer, such as FastStyleTransfer. |
| lite::cv::matting | Image Matting. Object and human matting. |
| lite::cv::colorization | Colorization. Converts grayscale images to color (RGB). |
| lite::cv::resolution | Super Resolution. |

The correspondence between Lite.AI classes and pretrained model files can be found in lite.ai.hub.md. For example, the pretrained model files for lite::cv::detection::YoloV5 and lite::cv::detection::YoloX are listed below.

| Model | Pretrained ONNX Files | Renamed or Converted From (Repo) | Size |
|---|---|---|---|
| lite::cv::detection::YoloV5 | yolov5l.onnx | yolov5 (🔥🔥💥↑) | 188MB |
| lite::cv::detection::YoloV5 | yolov5m.onnx | yolov5 (🔥🔥💥↑) | 85MB |
| lite::cv::detection::YoloV5 | yolov5s.onnx | yolov5 (🔥🔥💥↑) | 29MB |
| lite::cv::detection::YoloV5 | yolov5x.onnx | yolov5 (🔥🔥💥↑) | 351MB |
| lite::cv::detection::YoloX | yolox_x.onnx | YOLOX (🔥🔥!!↑) | 378MB |
| lite::cv::detection::YoloX | yolox_l.onnx | YOLOX (🔥🔥!!↑) | 207MB |
| lite::cv::detection::YoloX | yolox_m.onnx | YOLOX (🔥🔥!!↑) | 97MB |
| lite::cv::detection::YoloX | yolox_s.onnx | YOLOX (🔥🔥!!↑) | 34MB |
| lite::cv::detection::YoloX | yolox_tiny.onnx | YOLOX (🔥🔥!!↑) | 19MB |
| lite::cv::detection::YoloX | yolox_nano.onnx | YOLOX (🔥🔥!!↑) | 3.5MB |

This means you can load any of the yolov5*.onnx or yolox_*.onnx files, depending on your application, through the same Lite.AI classes (YoloV5 and YoloX):

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5x.onnx"); // for server

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5l.onnx");

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5m.onnx");

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5s.onnx"); // for mobile device

auto *yolox = new lite::cv::detection::YoloX("yolox_x.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_l.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_m.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_s.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_tiny.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_nano.onnx"); // 3.5MB only!

Most of the models were converted by Lite.AI, and the others were taken from third-party libraries. Class names here may differ from those in the original repositories, because different repositories implement the same algorithm differently. For example, ArcFace in insightface differs from ArcFace in face.evoLVe.PyTorch: insightface uses Arc-Loss + Softmax, while face.evoLVe.PyTorch uses Arc-Loss + Focal-Loss. Lite.AI uses naming to distinguish models from different sources. Therefore, in Lite.AI, different names for the same algorithm mean that the corresponding models come from different repositories, use different implementations, or were trained on different data, etc. See lite.ai-demos for the usage of each model in Lite.AI. ✅ means passed the test and

(Baidu Drive code: 8gin)

Pretrained models for the MNN and NCNN versions: todo ⚠️

Build the Lite.AI shared lib for macOS from source, or download the prebuilt lib from liblite.ai.dylib|so (TODO: Linux & Windows). Note that Lite.AI uses onnxruntime as the default backend, because onnxruntime supports most ONNX operators. For Linux and Windows, you need to build the shared libs of OpenCV and onnxruntime first and put them into the third_party directory. Please refer to build-docs1 for third_party.

- Clone Lite.AI from source:

git clone --depth=1 -b v0.0.1 https://github.com/DefTruth/lite.ai.git # stable

git clone --depth=1 https://github.com/DefTruth/lite.ai.git # latest

- For users in China, you can try:

git clone --depth=1 -b v0.0.1 https://github.com.cnpmjs.org/DefTruth/lite.ai.git # stable

git clone --depth=1 https://github.com.cnpmjs.org/DefTruth/lite.ai.git # latest

- Build the shared lib:

cd lite.ai

sh ./build.sh

cd ./build/lite.ai/lib && otool -L liblite.ai.0.0.1.dylib

liblite.ai.0.0.1.dylib:

@rpath/liblite.ai.0.0.1.dylib (compatibility version 0.0.1, current version 0.0.1)

@rpath/libopencv_highgui.4.5.dylib (compatibility version 4.5.0, current version 4.5.2)

@rpath/libonnxruntime.1.7.0.dylib (compatibility version 0.0.0, current version 1.7.0)

...

cd ../ && tree .

├── bin

├── include

│ ├── lite

│ │ ├── backend.h

│ │ ├── config.h

│ │ └── lite.h

│ └── ort

└── lib

└── liblite.ai.0.0.1.dylib

- Run the built examples:

cd ./build/lite.ai/bin && ls -lh | grep lite

-rwxr-xr-x 1 root staff 301K Jun 26 23:10 liblite.ai.0.0.1.dylib

...

-rwxr-xr-x 1 root staff 196K Jun 26 23:10 lite_yolov4

-rwxr-xr-x 1 root staff 196K Jun 26 23:10 lite_yolov5

...

./lite_yolov5

LITEORT_DEBUG LogId: ../../../hub/onnx/cv/yolov5s.onnx

=============== Input-Dims ==============

...

detected num_anchors: 25200

generate_bboxes num: 66

Default Version Detected Boxes Num: 5

- Link the lite.ai shared lib. You need to make sure that OpenCV and onnxruntime are linked correctly, for example:

cmake_minimum_required(VERSION 3.17)

project(testlite.ai)

set(CMAKE_CXX_STANDARD 11)

set(CMAKE_BUILD_TYPE debug)

# link opencv.

set(OpenCV_DIR ${CMAKE_SOURCE_DIR}/opencv/lib/cmake/opencv4)

find_package(OpenCV 4 REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# link onnxruntime.

set(ONNXRUNTIME_DIR ${CMAKE_SOURCE_DIR}/onnxruntime/)

set(ONNXRUNTIME_INCLUDE_DIR ${ONNXRUNTIME_DIR}/include)

set(ONNXRUNTIME_LIBRARY_DIR ${ONNXRUNTIME_DIR}/lib)

include_directories(${ONNXRUNTIME_INCLUDE_DIR})

link_directories(${ONNXRUNTIME_LIBRARY_DIR})

# link lite.ai.

set(LITEHUB_DIR ${CMAKE_SOURCE_DIR}/lite.ai)

set(LITEHUB_INCLUDE_DIR ${LITEHUB_DIR}/include)

set(LITEHUB_LIBRARY_DIR ${LITEHUB_DIR}/lib)

include_directories(${LITEHUB_INCLUDE_DIR})

link_directories(${LITEHUB_LIBRARY_DIR})

# add your executable

add_executable(lite_yolov5 test_lite_yolov5.cpp)

target_link_libraries(lite_yolov5 lite.ai onnxruntime ${OpenCV_LIBS})

A minimal example showing how to correctly link the Lite.AI shared lib in your own project can be found at lite.ai-release.

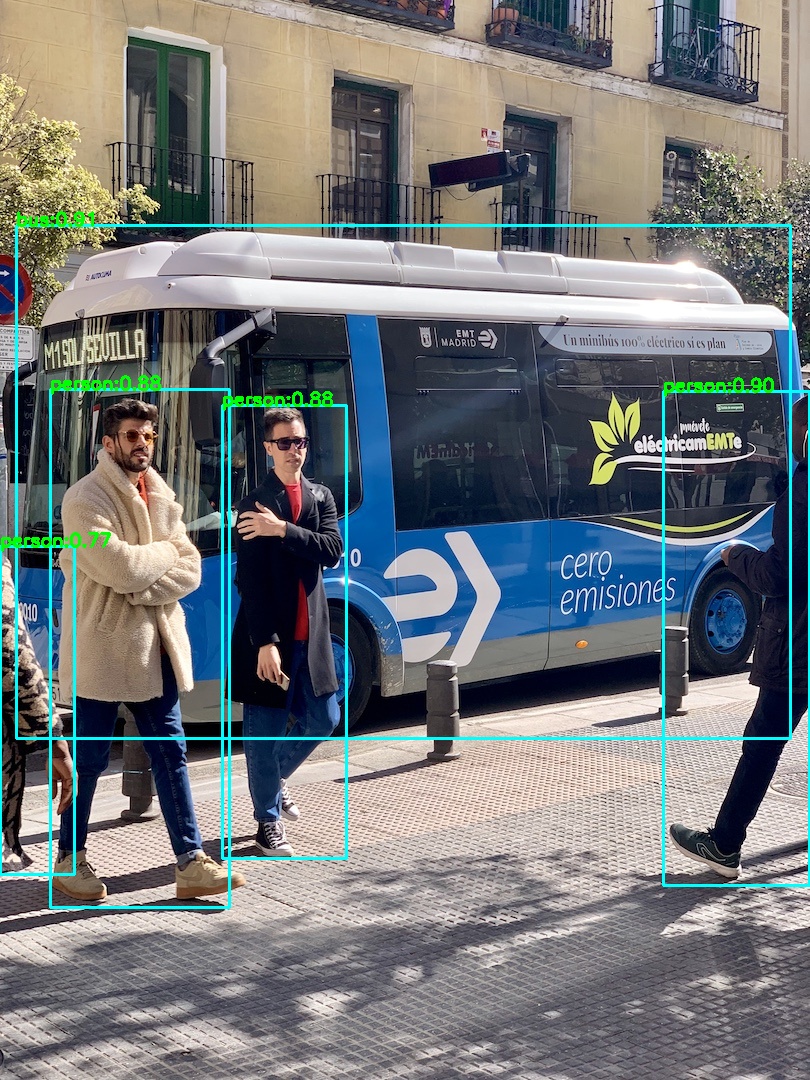

More examples can be found at lite.ai-demos. Note that the default backend for Lite.AI is onnxruntime, because onnxruntime supports most ONNX operators.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/yolov5s.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_yolov5_1.jpg";

std::string save_img_path = "../../../logs/test_lite_yolov5_1.jpg";

auto *yolov5 = new lite::cv::detection::YoloV5(onnx_path);

std::vector<lite::cv::types::Boxf> detected_boxes;

cv::Mat img_bgr = cv::imread(test_img_path);

yolov5->detect(img_bgr, detected_boxes);

lite::cv::utils::draw_boxes_inplace(img_bgr, detected_boxes);

cv::imwrite(save_img_path, img_bgr);

delete yolov5;

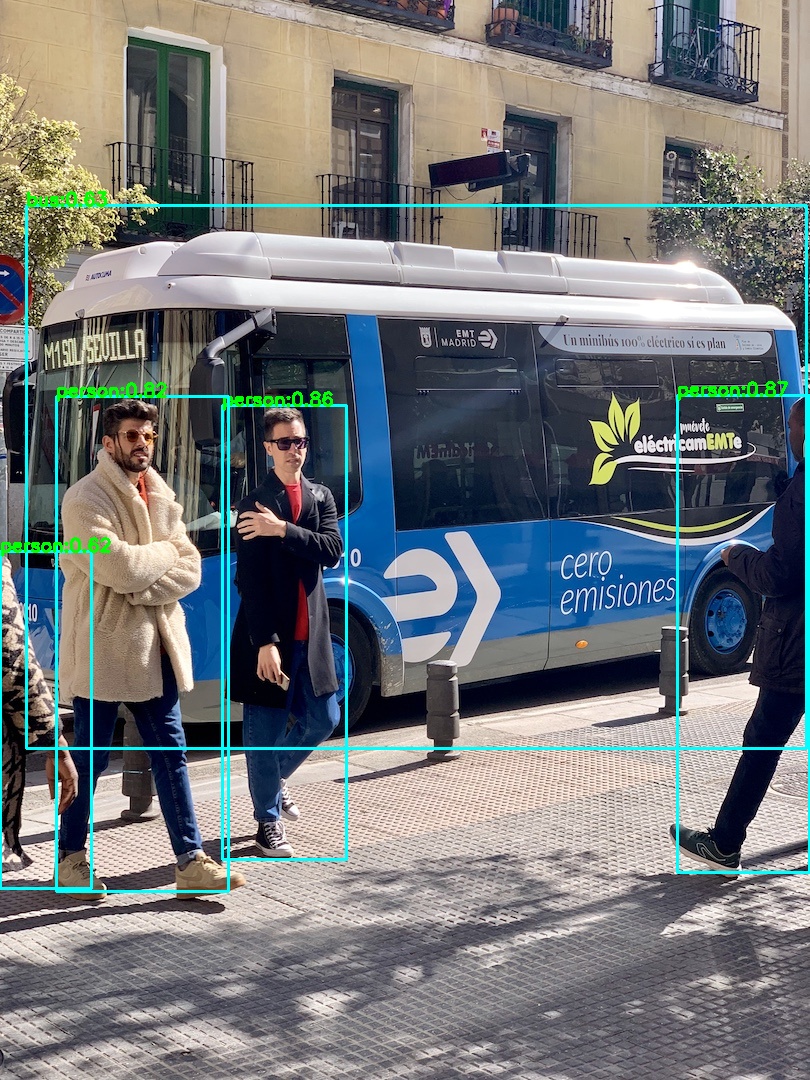

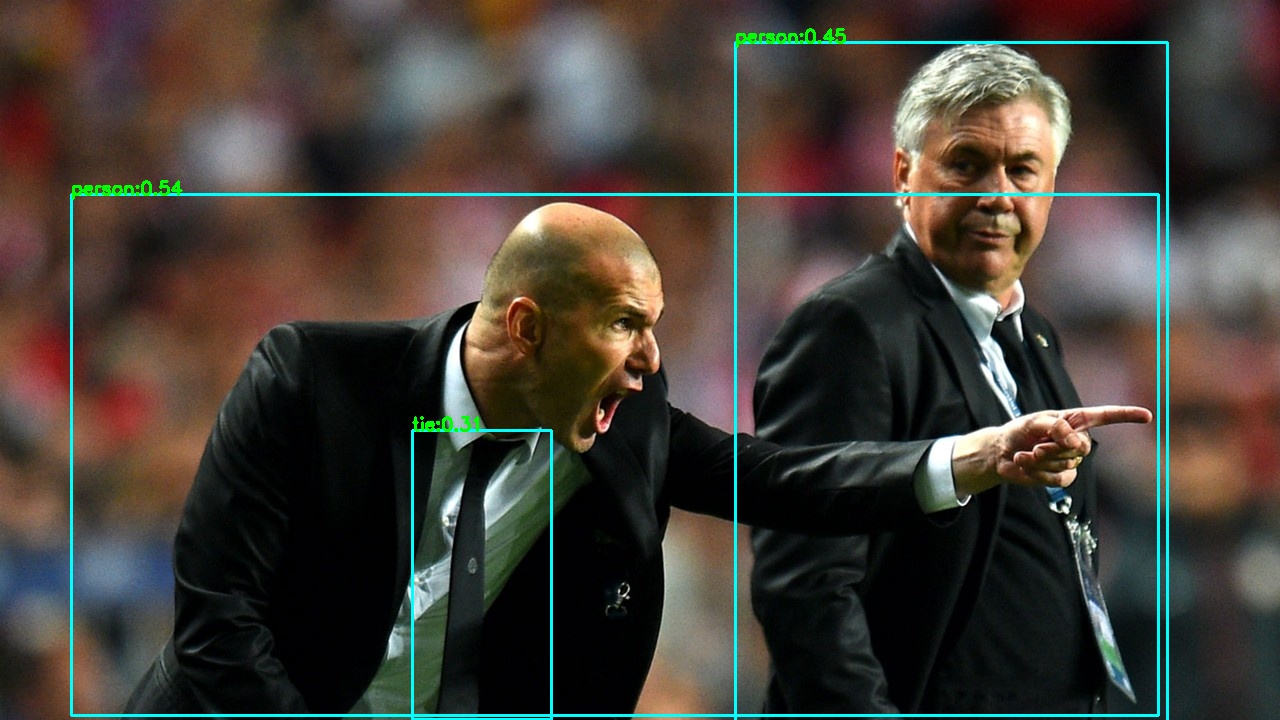

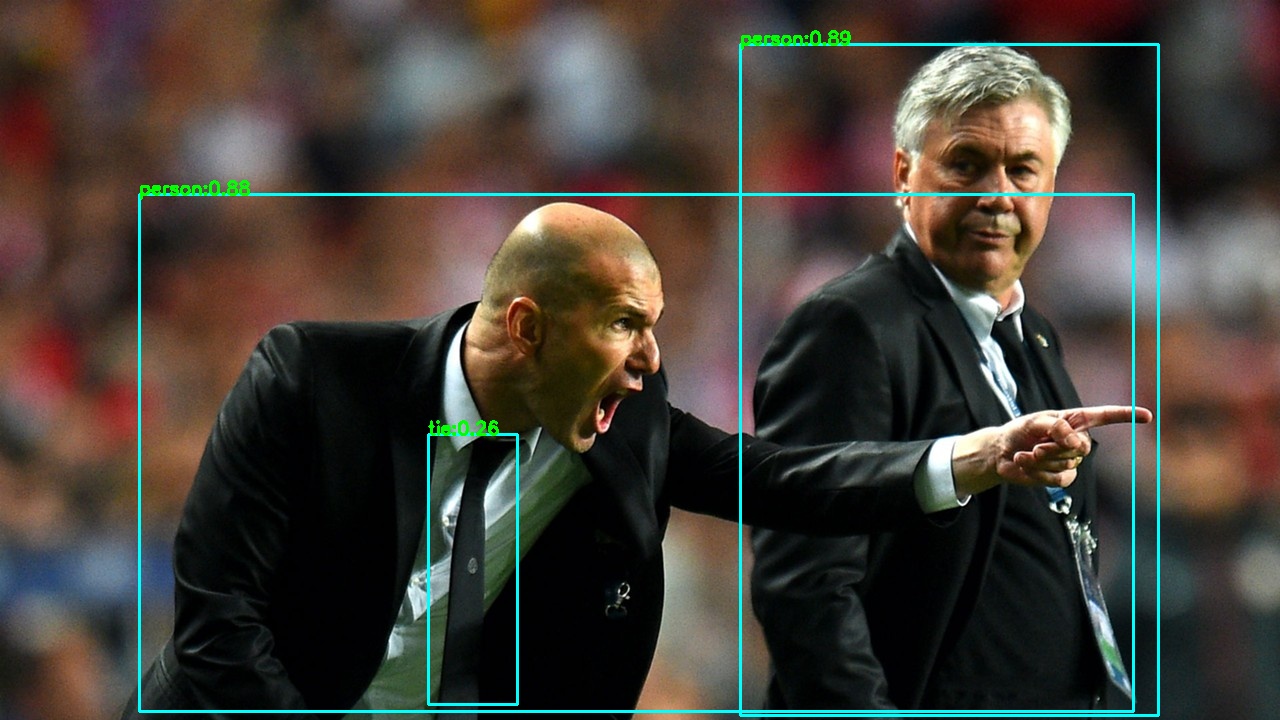

}The output is:
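The lite_yolov5 run in the build section logs `detected num_anchors: 25200`. That number can be reproduced with a little arithmetic, assuming yolov5s.onnx was exported with the upstream default 640x640 input, output strides 8, 16 and 32, and 3 anchors per grid cell (these are assumptions, not values read from the model here). `num_predictions` is a hypothetical helper for illustration, not part of Lite.AI:

```cpp
#include <cassert>
#include <vector>

// Counts the raw predictions of a YOLO-style detection head on a square input:
// each stride yields an (input_size / stride) x (input_size / stride) grid, and
// every grid cell emits `anchors_per_cell` predictions.
unsigned int num_predictions(unsigned int input_size,
                             const std::vector<unsigned int> &strides,
                             unsigned int anchors_per_cell)
{
  unsigned int total = 0;
  for (unsigned int stride : strides)
  {
    // The grid must tile the input exactly for this simple count to hold.
    assert(stride > 0 && input_size % stride == 0);
    const unsigned int cells_per_side = input_size / stride;
    total += cells_per_side * cells_per_side * anchors_per_cell;
  }
  return total;
}
```

Under these assumptions, `num_predictions(640, {8, 16, 32}, 3)` gives (80² + 40² + 20²) × 3 = 8400 × 3 = 25200. An anchor-free head that emits one prediction per cell would give 8400 at the same input size.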

Or you can use YOLOX, the newest 🔥🔥 detector in the YOLO series. It produces similar results.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/yolox_s.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_yolox_1.jpg";

std::string save_img_path = "../../../logs/test_lite_yolox_1.jpg";

auto *yolox = new lite::cv::detection::YoloX(onnx_path);

std::vector<lite::cv::types::Boxf> detected_boxes;

cv::Mat img_bgr = cv::imread(test_img_path);

yolox->detect(img_bgr, detected_boxes);

lite::cv::utils::draw_boxes_inplace(img_bgr, detected_boxes);

cv::imwrite(save_img_path, img_bgr);

delete yolox;

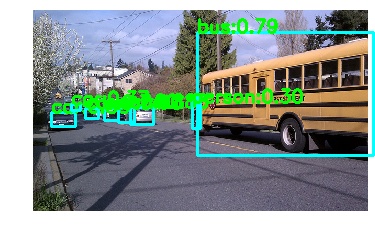

}The output is:
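YOLOX is anchor-free: each grid cell regresses a box directly. The sketch below shows how one raw regression output could be decoded, following the post-processing in the upstream YOLOX demo (center = (grid + offset) × stride, size = exp(log-size) × stride). Whether Lite.AI's YoloX class decodes exactly this way is an assumption, and `CornerBox` and `decode_yolox` are hypothetical names:

```cpp
#include <cassert>
#include <cmath>

// Hypothetical box type for this sketch (not lite::cv::types::Boxf).
struct CornerBox
{
  float x1, y1, x2, y2;
};

// Decodes one raw YOLOX regression output (dx, dy, dw, dh) predicted at grid
// cell (grid_x, grid_y) of a feature map with the given stride:
//   center = (grid + offset) * stride,  size = exp(log_size) * stride.
CornerBox decode_yolox(float dx, float dy, float dw, float dh,
                       int grid_x, int grid_y, float stride)
{
  assert(stride > 0.f);
  const float cx = (static_cast<float>(grid_x) + dx) * stride;
  const float cy = (static_cast<float>(grid_y) + dy) * stride;
  const float w = std::exp(dw) * stride;
  const float h = std::exp(dh) * stride;
  // Convert the center-size box into corner (x1, y1, x2, y2) form.
  return {cx - w / 2.f, cy - h / 2.f, cx + w / 2.f, cy + h / 2.f};
}
```

The decoded coordinates are in the model's input space; mapping them back to the original image requires undoing whatever resize was applied during preprocessing.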

More models for general object detection.

auto *detector = new lite::cv::detection::YoloX(onnx_path); // new !!!

auto *detector = new lite::cv::detection::YoloV4(onnx_path);

auto *detector = new lite::cv::detection::YoloV3(onnx_path);

auto *detector = new lite::cv::detection::TinyYoloV3(onnx_path);

auto *detector = new lite::cv::detection::SSD(onnx_path);

auto *detector = new lite::cv::detection::SSDMobileNetV1(onnx_path);

4.2 Segmentation using DeepLabV3ResNet101. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/deeplabv3_resnet101_coco.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_deeplabv3_resnet101.png";

std::string save_img_path = "../../../logs/test_lite_deeplabv3_resnet101.jpg";

auto *deeplabv3_resnet101 = new lite::cv::segmentation::DeepLabV3ResNet101(onnx_path, 16); // 16 threads

lite::cv::types::SegmentContent content;

cv::Mat img_bgr = cv::imread(test_img_path);

deeplabv3_resnet101->detect(img_bgr, content);

if (content.flag)

{

cv::Mat out_img;

cv::addWeighted(img_bgr, 0.2, content.color_mat, 0.8, 0., out_img);

cv::imwrite(save_img_path, out_img);

if (!content.names_map.empty())

{

for (auto it = content.names_map.begin(); it != content.names_map.end(); ++it)

{

std::cout << it->first << " Name: " << it->second << std::endl;

}

}

}

delete deeplabv3_resnet101;

}The output is:

More models for segmentation.

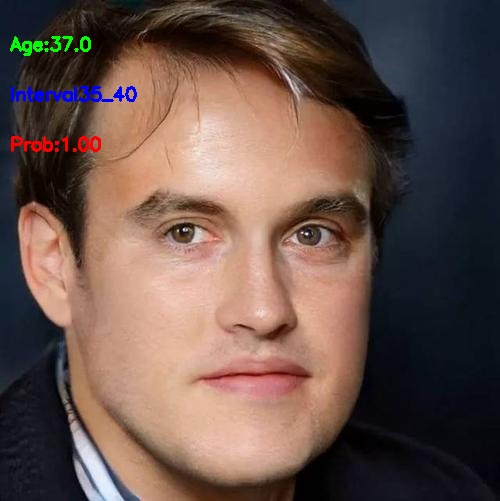

auto *segment = new lite::cv::segmentation::FCNResNet101(onnx_path);#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/ssrnet.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_ssrnet.jpg";

std::string save_img_path = "../../../logs/test_lite_ssrnet.jpg";

lite::cv::face::attr::SSRNet *ssrnet = new lite::cv::face::attr::SSRNet(onnx_path);

lite::cv::types::Age age;

cv::Mat img_bgr = cv::imread(test_img_path);

ssrnet->detect(img_bgr, age);

lite::cv::utils::draw_age_inplace(img_bgr, age);

cv::imwrite(save_img_path, img_bgr);

std::cout << "Default Version Done! Detected SSRNet Age: " << age.age << std::endl;

delete ssrnet;

}The output is:

More models for face attributes analysis.

auto *attribute = new lite::cv::face::attr::AgeGoogleNet(onnx_path);

auto *attribute = new lite::cv::face::attr::GenderGoogleNet(onnx_path);

auto *attribute = new lite::cv::face::attr::EmotionFerPlus(onnx_path);

auto *attribute = new lite::cv::face::attr::VGG16Age(onnx_path);

auto *attribute = new lite::cv::face::attr::VGG16Gender(onnx_path);#include "lite/lite.h"

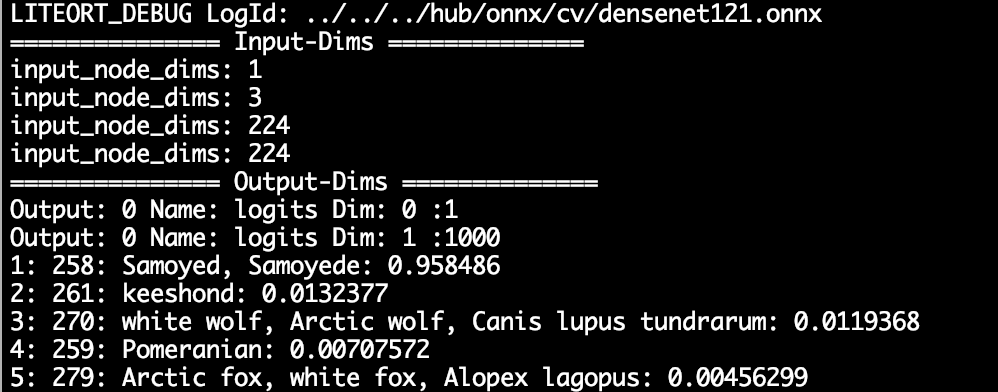

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/densenet121.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_densenet.jpg";

auto *densenet = new lite::cv::classification::DenseNet(onnx_path);

lite::cv::types::ImageNetContent content;

cv::Mat img_bgr = cv::imread(test_img_path);

densenet->detect(img_bgr, content);

if (content.flag)

{

const unsigned int top_k = content.scores.size();

if (top_k > 0)

{

for (unsigned int i = 0; i < top_k; ++i)

std::cout << i + 1

<< ": " << content.labels.at(i)

<< ": " << content.texts.at(i)

<< ": " << content.scores.at(i)

<< std::endl;

}

}

delete densenet;

}The output is:

More models for image classification.

auto *classifier = new lite::cv::classification::EfficientNetLite4(onnx_path);

auto *classifier = new lite::cv::classification::ShuffleNetV2(onnx_path);

auto *classifier = new lite::cv::classification::GhostNet(onnx_path);

auto *classifier = new lite::cv::classification::HdrDNet(onnx_path);

auto *classifier = new lite::cv::classification::IBNNet(onnx_path);

auto *classifier = new lite::cv::classification::MobileNetV2(onnx_path);

auto *classifier = new lite::cv::classification::ResNet(onnx_path);

auto *classifier = new lite::cv::classification::ResNeXt(onnx_path);#include "lite/lite.h"

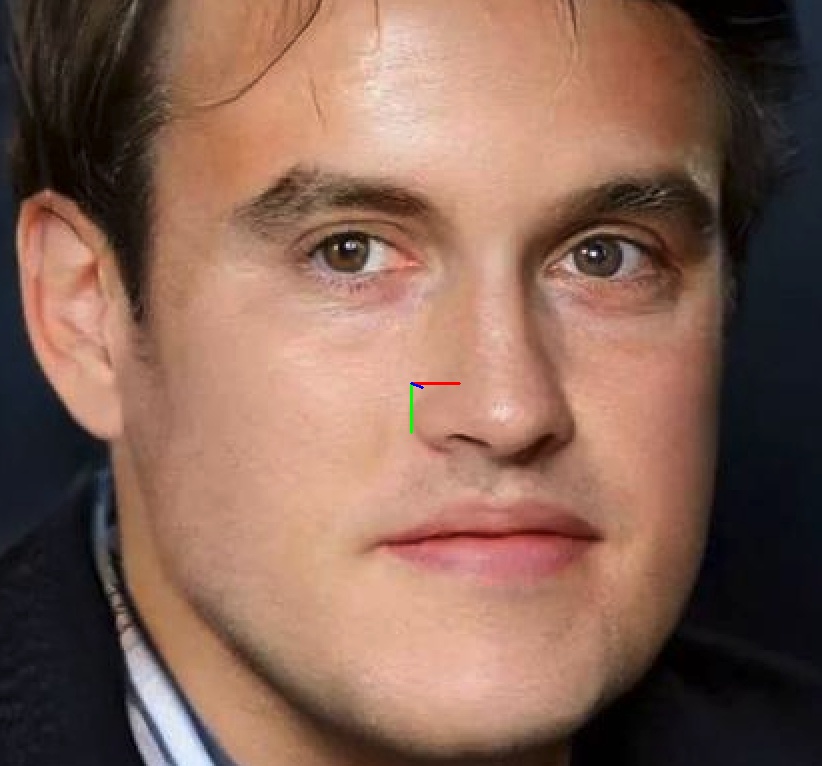

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/ms1mv3_arcface_r100.onnx";

std::string test_img_path0 = "../../../examples/lite/resources/test_lite_faceid_0.png";

std::string test_img_path1 = "../../../examples/lite/resources/test_lite_faceid_1.png";

std::string test_img_path2 = "../../../examples/lite/resources/test_lite_faceid_2.png";

auto *glint_arcface = new lite::cv::faceid::GlintArcFace(onnx_path);

lite::cv::types::FaceContent face_content0, face_content1, face_content2;

cv::Mat img_bgr0 = cv::imread(test_img_path0);

cv::Mat img_bgr1 = cv::imread(test_img_path1);

cv::Mat img_bgr2 = cv::imread(test_img_path2);

glint_arcface->detect(img_bgr0, face_content0);

glint_arcface->detect(img_bgr1, face_content1);

glint_arcface->detect(img_bgr2, face_content2);

if (face_content0.flag && face_content1.flag && face_content2.flag)

{

float sim01 = lite::cv::utils::math::cosine_similarity<float>(

face_content0.embedding, face_content1.embedding);

float sim02 = lite::cv::utils::math::cosine_similarity<float>(

face_content0.embedding, face_content2.embedding);

std::cout << "Detected Sim01: " << sim01 << " Sim02: " << sim02 << std::endl;

}

delete glint_arcface;

}

The output is:

Detected Sim01: 0.721159 Sim02: -0.0626267
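The scores above come from lite::cv::utils::math::cosine_similarity. As a standalone sketch (not Lite.AI's implementation), cosine similarity is the dot product of two embeddings divided by the product of their norms, so it ranges from -1 to 1 and ignores embedding length. Here the matching pair scores about 0.72, while the non-matching pair scores about -0.06:

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Cosine similarity between two embeddings: dot(a, b) / (|a| * |b|).
// Accumulates in double for stability; returns 0 when either vector has zero norm.
float cosine_similarity(const std::vector<float> &a, const std::vector<float> &b)
{
  assert(a.size() == b.size());
  double dot = 0.0, norm_a = 0.0, norm_b = 0.0;
  for (std::size_t i = 0; i < a.size(); ++i)
  {
    dot += static_cast<double>(a[i]) * b[i];
    norm_a += static_cast<double>(a[i]) * a[i];
    norm_b += static_cast<double>(b[i]) * b[i];
  }
  if (norm_a == 0.0 || norm_b == 0.0) return 0.f;
  return static_cast<float>(dot / (std::sqrt(norm_a) * std::sqrt(norm_b)));
}
```

Where to put the "same person" threshold depends on the model and data, so it is best calibrated per model rather than fixed.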

More models for face recognition.

auto *recognition = new lite::cv::faceid::GlintCosFace(onnx_path); // DeepGlint(insightface)

auto *recognition = new lite::cv::faceid::GlintArcFace(onnx_path); // DeepGlint(insightface)

auto *recognition = new lite::cv::faceid::GlintPartialFC(onnx_path); // DeepGlint(insightface)

auto *recognition = new lite::cv::faceid::FaceNet(onnx_path);

auto *recognition = new lite::cv::faceid::FocalArcFace(onnx_path);

auto *recognition = new lite::cv::faceid::FocalAsiaArcFace(onnx_path);

auto *recognition = new lite::cv::faceid::TencentCurricularFace(onnx_path); // Tencent(TFace)

auto *recognition = new lite::cv::faceid::TencentCifpFace(onnx_path); // Tencent(TFace)

auto *recognition = new lite::cv::faceid::CenterLossFace(onnx_path);

auto *recognition = new lite::cv::faceid::SphereFace(onnx_path);

auto *recognition = new lite::cv::faceid::PoseRobustFace(onnx_path);

auto *recognition = new lite::cv::faceid::NaivePoseRobustFace(onnx_path);

auto *recognition = new lite::cv::faceid::MobileFaceNet(onnx_path); // 3.8MB only!

auto *recognition = new lite::cv::faceid::CavaGhostArcFace(onnx_path);

auto *recognition = new lite::cv::faceid::CavaCombinedFace(onnx_path);

4.6 Examples for Face Detection.

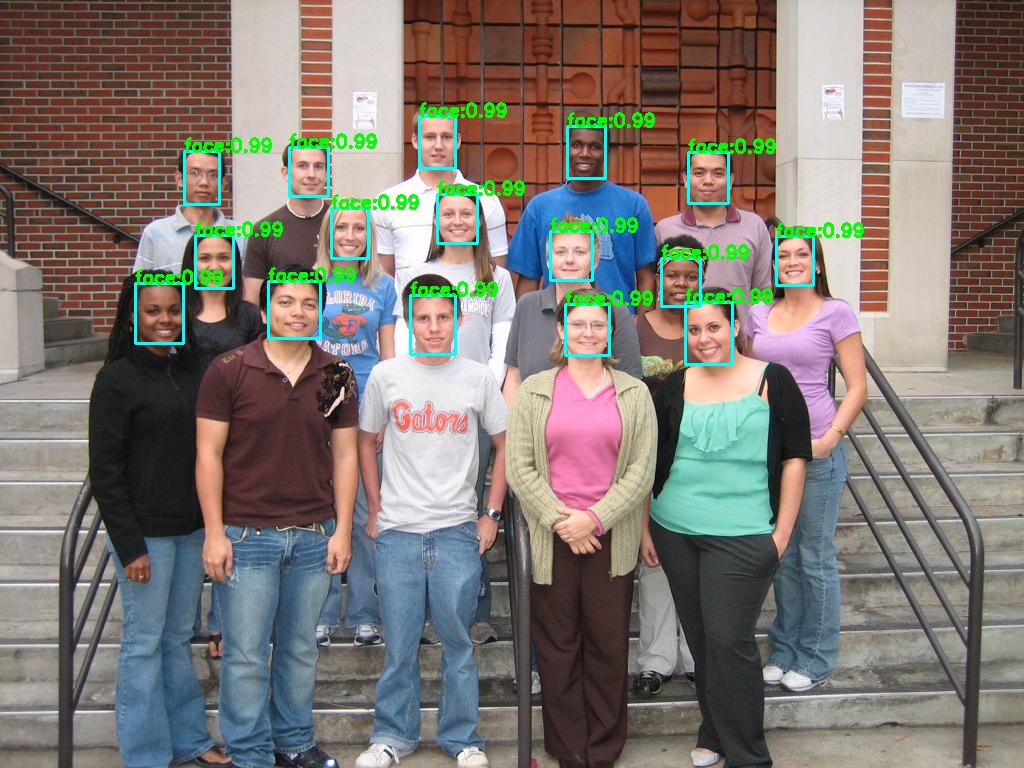

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/ultraface-rfb-640.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_ultraface.jpg";

std::string save_img_path = "../../../logs/test_lite_ultraface.jpg";

auto *ultraface = new lite::cv::face::detect::UltraFace(onnx_path);

std::vector<lite::cv::types::Boxf> detected_boxes;

cv::Mat img_bgr = cv::imread(test_img_path);

ultraface->detect(img_bgr, detected_boxes);

lite::cv::utils::draw_boxes_inplace(img_bgr, detected_boxes);

cv::imwrite(save_img_path, img_bgr);

delete ultraface;

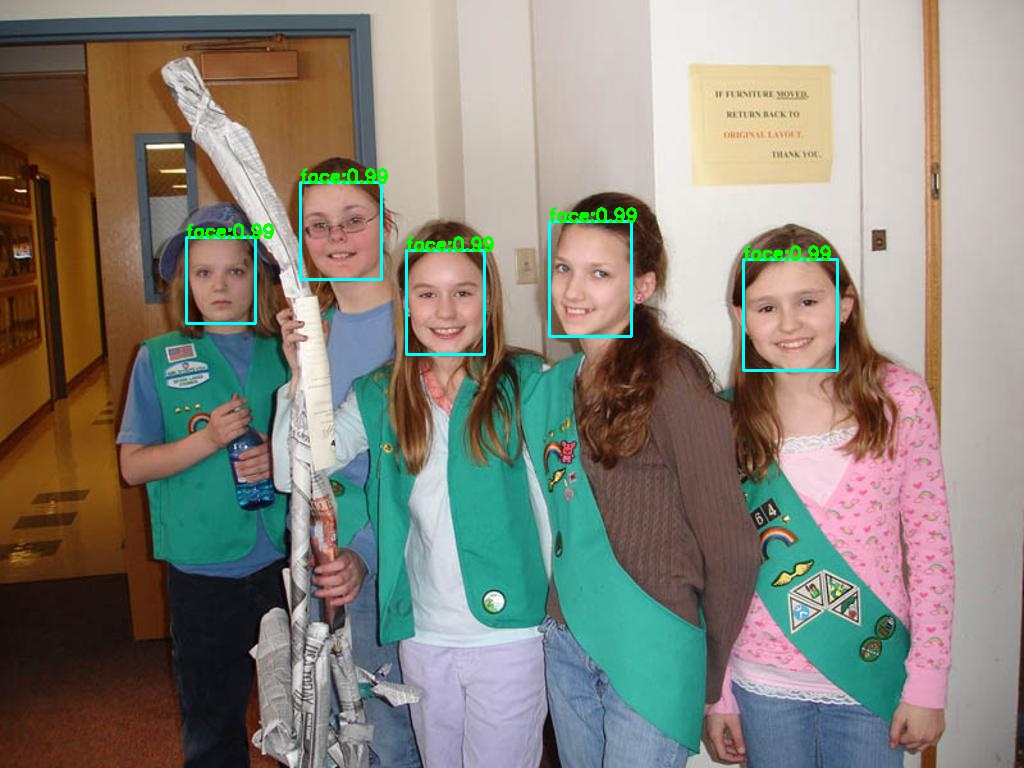

}The output is:
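Face detectors such as UltraFace typically predict boxes in coordinates normalized to [0, 1]; in the upstream Ultra-Light-Fast-Generic-Face-Detector-1MB ONNX demo they are multiplied by the original image width and height afterwards. Assuming Lite.AI's UltraFace output follows the same convention (not verified here), the rescaling step looks like this hypothetical helper (`PixelBox` and `to_pixels` are illustrative names only):

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical pixel-space box for this sketch.
struct PixelBox
{
  float x1, y1, x2, y2;
};

// Scales a corner-form box with coordinates normalized to [0, 1] back to the
// original image size, clamping each coordinate to the image bounds.
PixelBox to_pixels(float nx1, float ny1, float nx2, float ny2, int img_w, int img_h)
{
  assert(img_w > 0 && img_h > 0);
  const float w = static_cast<float>(img_w);
  const float h = static_cast<float>(img_h);
  auto clamp_to = [](float v, float hi) { return std::min(std::max(v, 0.f), hi); };
  return {clamp_to(nx1 * w, w), clamp_to(ny1 * h, h),
          clamp_to(nx2 * w, w), clamp_to(ny2 * h, h)};
}
```

Because the coordinates are normalized, this scaling does not depend on the size the image was resized to before inference.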


4.7 Colorization using colorization. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/eccv16-colorizer.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_colorizer_1.jpg";

std::string save_img_path = "../../../logs/test_lite_eccv16_colorizer_1.jpg";

auto *colorizer = new lite::cv::colorization::Colorizer(onnx_path);

cv::Mat img_bgr = cv::imread(test_img_path);

lite::cv::types::ColorizeContent colorize_content;

colorizer->detect(img_bgr, colorize_content);

if (colorize_content.flag) cv::imwrite(save_img_path, colorize_content.mat);

delete colorizer;

}

The output is:

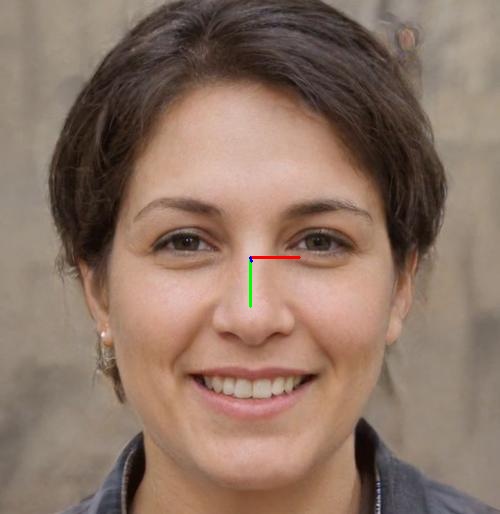
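Colorization models from the colorization project [7] work in the CIE Lab color space: the network sees only the lightness channel L and predicts the two color channels a and b, which is why a gray image is enough as input. The sketch below computes L for a single 8-bit sRGB pixel (D65 white point) to show what that input channel contains. `srgb_to_lightness` is hypothetical, and how Lite.AI's Colorizer performs the conversion internally is not shown here:

```cpp
#include <cassert>
#include <cmath>

// Converts one 8-bit sRGB pixel to CIE L* in [0, 100], assuming a D65 white point.
double srgb_to_lightness(unsigned char r8, unsigned char g8, unsigned char b8)
{
  // Undo the sRGB transfer curve to get linear-light channel values in [0, 1].
  auto linearize = [](unsigned char c8) {
    const double c = c8 / 255.0;
    return c <= 0.04045 ? c / 12.92 : std::pow((c + 0.055) / 1.055, 2.4);
  };
  // Relative luminance Y (the white point has Y = 1).
  const double y = 0.2126 * linearize(r8) + 0.7152 * linearize(g8) + 0.0722 * linearize(b8);
  // CIE L* = 116 * f(Y) - 16, with a linear segment of f near black.
  const double delta = 6.0 / 29.0;
  const double f = y > delta * delta * delta ? std::cbrt(y)
                                             : y / (3.0 * delta * delta) + 4.0 / 29.0;
  const double lightness = 116.0 * f - 16.0;
  assert(lightness > -1e-9 && lightness < 100.0 + 1e-9);
  return lightness;
}
```

A white pixel maps to L ≈ 100, a black one to L ≈ 0, and mid gray (128, 128, 128) to roughly 53.6.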

4.8 Examples for Head Pose Estimation.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/fsanet-var.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_fsanet.jpg";

std::string save_img_path = "../../../logs/test_lite_fsanet.jpg";

auto *fsanet = new lite::cv::face::pose::FSANet(onnx_path);

cv::Mat img_bgr = cv::imread(test_img_path);

lite::cv::types::EulerAngles euler_angles;

fsanet->detect(img_bgr, euler_angles);

if (euler_angles.flag)

{

lite::cv::utils::draw_axis_inplace(img_bgr, euler_angles);

cv::imwrite(save_img_path, img_bgr);

std::cout << "yaw:" << euler_angles.yaw << " pitch:" << euler_angles.pitch << " roll:" << euler_angles.roll << std::endl;

}

delete fsanet;

}

The output is:

4.9 Examples for Face Alignment.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/pfld-106-v3.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_pfld.png";

std::string save_img_path = "../../../logs/test_lite_pfld.jpg";

auto *pfld = new lite::cv::face::align::PFLD(onnx_path);

lite::cv::types::Landmarks landmarks;

cv::Mat img_bgr = cv::imread(test_img_path);

pfld->detect(img_bgr, landmarks);

lite::cv::utils::draw_landmarks_inplace(img_bgr, landmarks);

cv::imwrite(save_img_path, img_bgr);

delete pfld;

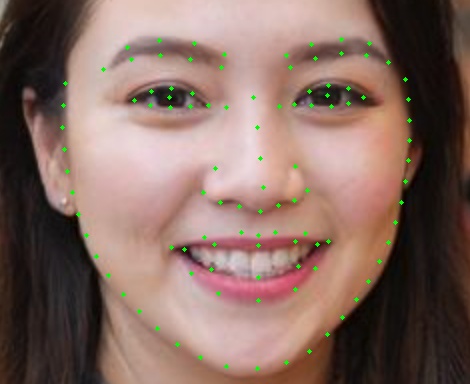

}The output is:

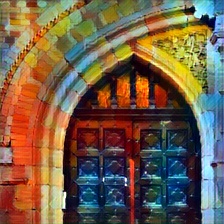
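PFLD-style landmark models usually regress each landmark as a normalized (x, y) pair relative to the face crop they receive (for a 106-landmark model, presumably 212 values). Assuming that convention (not verified against Lite.AI's PFLD class, which may already return pixel coordinates), mapping landmarks from a crop back into the full image is a scale plus an offset. `Point2f` and `denormalize_landmarks` are hypothetical names:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical 2D point type for this sketch.
struct Point2f
{
  float x, y;
};

// Maps landmarks given as interleaved normalized pairs (x0, y0, x1, y1, ...)
// in [0, 1], relative to a face crop at (crop_x, crop_y) of size
// crop_w x crop_h, back to pixel coordinates of the full image.
std::vector<Point2f> denormalize_landmarks(const std::vector<float> &normalized,
                                           float crop_x, float crop_y,
                                           float crop_w, float crop_h)
{
  assert(normalized.size() % 2 == 0);
  std::vector<Point2f> points;
  points.reserve(normalized.size() / 2);
  for (std::size_t i = 0; i + 1 < normalized.size(); i += 2)
    points.push_back({crop_x + normalized[i] * crop_w, crop_y + normalized[i + 1] * crop_h});
  return points;
}
```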


4.10 Style Transfer using FastStyleTransfer. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/style-candy-8.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_fast_style_transfer.jpg";

std::string save_img_path = "../../../logs/test_lite_fast_style_transfer_candy.jpg";

auto *fast_style_transfer = new lite::cv::style::FastStyleTransfer(onnx_path);

lite::cv::types::StyleContent style_content;

cv::Mat img_bgr = cv::imread(test_img_path);

fast_style_transfer->detect(img_bgr, style_content);

if (style_content.flag) cv::imwrite(save_img_path, style_content.mat);

delete fast_style_transfer;

}

The output is:

4.11 Examples for Image Matting.

- todo ⚠️

More details of the Default Version APIs can be found at default-version-api-docs. For example, the interface for YoloV5 is:

lite::cv::detection::YoloV5

void detect(const cv::Mat &mat, std::vector<types::Boxf> &detected_boxes,

float score_threshold = 0.25f, float iou_threshold = 0.45f,

unsigned int topk = 100, unsigned int nms_type = NMS::OFFSET);
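The score_threshold, iou_threshold and topk parameters control the post-processing of detect(). The sketch below shows plain greedy (hard) NMS driven by those three parameters: discard boxes below score_threshold, sort the rest by score, suppress any box whose IoU with an already-kept box exceeds iou_threshold, and stop after topk boxes. It is an illustration under these assumptions, not Lite.AI's code; in particular, the NMS::OFFSET variant selected by nms_type may behave differently, and `ScoredBox`, `iou` and `hard_nms` are hypothetical names:

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical box type for this sketch (not lite::cv::types::Boxf).
struct ScoredBox
{
  float x1, y1, x2, y2, score;
};

// Intersection-over-union of two corner-form boxes.
float iou(const ScoredBox &a, const ScoredBox &b)
{
  const float iw = std::max(0.f, std::min(a.x2, b.x2) - std::max(a.x1, b.x1));
  const float ih = std::max(0.f, std::min(a.y2, b.y2) - std::max(a.y1, b.y1));
  const float inter = iw * ih;
  const float area_a = (a.x2 - a.x1) * (a.y2 - a.y1);
  const float area_b = (b.x2 - b.x1) * (b.y2 - b.y1);
  const float uni = area_a + area_b - inter;
  return uni > 0.f ? inter / uni : 0.f;
}

// Greedy hard NMS: keep a box only if its IoU with every kept box is
// <= iou_threshold, visiting boxes in descending score order.
std::vector<ScoredBox> hard_nms(std::vector<ScoredBox> boxes, float score_threshold,
                                float iou_threshold, unsigned int topk)
{
  assert(iou_threshold >= 0.f && iou_threshold <= 1.f);
  // Drop low-confidence boxes first.
  boxes.erase(std::remove_if(boxes.begin(), boxes.end(),
                             [&](const ScoredBox &b) { return b.score < score_threshold; }),
              boxes.end());
  std::sort(boxes.begin(), boxes.end(),
            [](const ScoredBox &a, const ScoredBox &b) { return a.score > b.score; });
  std::vector<ScoredBox> kept;
  for (const ScoredBox &candidate : boxes)
  {
    if (kept.size() >= topk) break;
    bool suppressed = false;
    for (const ScoredBox &k : kept)
      if (iou(candidate, k) > iou_threshold) { suppressed = true; break; }
    if (!suppressed) kept.push_back(candidate);
  }
  return kept;
}
```

With the default thresholds (0.25 and 0.45), two heavily overlapping boxes collapse to the higher-scoring one, while a distant box survives.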

More details of the ONNXRuntime Version APIs can be found at onnxruntime-version-api-docs. For example, the interface for YoloV5 is:

lite::onnxruntime::cv::detection::YoloV5

void detect(const cv::Mat &mat, std::vector<types::Boxf> &detected_boxes,

float score_threshold = 0.25f, float iou_threshold = 0.45f,

unsigned int topk = 100, unsigned int nms_type = NMS::OFFSET);

MNN version APIs (todo):

lite::mnn::cv::detection::YoloV5

lite::mnn::cv::detection::YoloV4

lite::mnn::cv::detection::YoloV3

lite::mnn::cv::detection::SSD

...

NCNN version APIs (todo):

lite::ncnn::cv::detection::YoloV5

lite::ncnn::cv::detection::YoloV4

lite::ncnn::cv::detection::YoloV3

lite::ncnn::cv::detection::SSD

...

Other docs:

- Rapid implementation of your inference using BasicOrtHandler

- Some very useful onnxruntime C++ interfaces

- How to compile only the single model you need from this library

- How to convert SubPixelCNN to ONNX and implement it with onnxruntime C++

- How to convert Colorizer to ONNX and implement it with onnxruntime C++

- How to convert SSRNet to ONNX and implement it with onnxruntime C++

- How to convert YoloV3 to ONNX and implement it with onnxruntime C++

- How to convert YoloV5 to ONNX and implement it with onnxruntime C++

6.2 Docs for third_party.

Other build documents for different engines and different targets will be added later.

| Library | Target | Docs |
|---|---|---|
| OpenCV | mac-x86_64 | opencv-mac-x86_64-build.zh.md |
| OpenCV | android-arm | opencv-static-android-arm-build.zh.md |
| onnxruntime | mac-x86_64 | onnxruntime-mac-x86_64-build.zh.md |
| onnxruntime | android-arm | onnxruntime-android-arm-build.zh.md |
| NCNN | mac-x86_64 | todo |
| MNN | mac-x86_64 | todo |
| TNN | mac-x86_64 | todo |

Many thanks to the following projects. All of Lite.AI's models are sourced from these repos. Feel free to visit and star 🌟👉🏻 any of them you are interested in! Have a good travel ~ 🙃🤪🍀

- [1] headpose-fsanet-pytorch (🔥↑)

- [2] pfld_106_face_landmarks (🔥🔥↑)

- [3] Ultra-Light-Fast-Generic-Face-Detector-1MB (🔥🔥🔥↑)

- [4] onnx-models (🔥🔥🔥↑)

- [5] SSR_Net_Pytorch (🔥↑)

- [6] insightface (🔥🔥🔥↑)

- [7] colorization (🔥🔥🔥↑)

- [8] SUB_PIXEL_CNN (🔥↑)

- [9] YOLOv4-pytorch (🔥🔥🔥↑)

- [10] yolov5 (🔥🔥💥↑)

- [11] torchvision (🔥🔥🔥↑)

- [12] facenet-pytorch (🔥↑)

- [13] face.evoLVe.PyTorch (🔥🔥🔥↑)

- [14] TFace (🔥🔥↑)

- [15] center-loss.pytorch (🔥🔥↑)

- [16] sphereface_pytorch (🔥🔥↑)

- [17] DREAM (🔥🔥↑)

- [18] MobileFaceNet_Pytorch (🔥🔥↑)

- [19] cavaface.pytorch (🔥🔥↑)

- [20] CurricularFace (🔥🔥↑)

- [21] face-emotion-recognition (🔥↑)

- [22] face_recognition.pytorch (🔥🔥↑)

- [23] PFLD-pytorch (🔥🔥↑)

- [24] pytorch_face_landmark (🔥🔥↑)

- [25] FaceLandmark1000 (🔥🔥↑)

- [26] Pytorch_Retinaface (🔥🔥🔥↑)

- [27] FaceBoxes (🔥🔥↑)

- [28] YOLOX (🔥🔥new!!↑)

- [??] lite.ai ( 👈🏻 I guess you might also be interested in this repo ~ 🙃🤪🍀)