Deep Xi can be used for speech enhancement, for noise estimation, and as a front-end for robust ASR.

Deep Xi (where the Greek letter 'xi' or ξ is pronounced /zaɪ/) is a deep learning approach to a priori SNR estimation that was proposed in [1] and is implemented in TensorFlow. Some of its use cases include:

- Minimum mean-square error (MMSE) approaches to speech enhancement like the MMSE short-time spectral amplitude (MMSE-STSA) estimator, the MMSE log-spectral amplitude (MMSE-LSA) estimator, and the Wiener filter (WF) approach.

- Estimation of the ideal ratio mask (IRM) and the ideal binary mask (IBM).

- A front-end for robust ASR, as shown in Figure 1.

Figure 1: Deep Xi used as a front-end for robust ASR. The back-end (Deep Speech) is available here. The noisy speech magnitude spectrogram, as shown in (a), is a mixture of clean speech with voice babble noise at an SNR level of -5 dB, and is the input to Deep Xi. Deep Xi estimates the a priori SNR, as shown in (b). The a priori SNR estimate is used to compute an MMSE approach gain function, which is multiplied elementwise with the noisy speech magnitude spectrum to produce the clean speech magnitude spectrum estimate, as shown in (c). MFCCs are computed from the estimated clean speech magnitude spectrogram, producing the estimated clean speech cepstrogram, as shown in (d). The back-end system, Deep Speech, computes the hypothesis transcript, from the estimated clean speech cepstrogram, as shown in (e).
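The gain step described in the Figure 1 caption can be illustrated with a minimal sketch. It is not the code in this repository: the function names are hypothetical, and the formulas are the commonly used textbook definitions of the Wiener filter gain, the IRM (square-root form), and the IBM (0 dB threshold), written for a linear-scale a priori SNR estimate. The MMSE-STSA and MMSE-LSA gains also depend on the a posteriori SNR and are omitted here.

```python
import math


def wiener_gain(xi):
    """Wiener filter gain for a linear-scale a priori SNR xi (assumed textbook form)."""
    return xi / (1.0 + xi)


def irm(xi):
    """Ideal ratio mask in its common square-root form (an assumption, not the repo's code)."""
    return math.sqrt(xi / (1.0 + xi))


def ibm(xi):
    """Ideal binary mask with an assumed 0 dB threshold (xi > 1 in linear scale)."""
    return 1.0 if xi > 1.0 else 0.0


def enhance_frame(noisy_mag, xi_hat, gain_fn=wiener_gain):
    """Multiply each noisy magnitude bin by the gain computed from its a priori SNR."""
    return [gain_fn(xi) * mag for mag, xi in zip(noisy_mag, xi_hat)]


if __name__ == "__main__":
    noisy_mag = [2.0, 1.0, 0.5]  # one frame of a noisy magnitude spectrum (made-up values)
    xi_hat = [9.0, 1.0, 0.0]     # made-up a priori SNR estimates, linear scale
    print(enhance_frame(noisy_mag, xi_hat))
    print(enhance_frame(noisy_mag, xi_hat, gain_fn=ibm))
```

Bins with a high estimated a priori SNR pass almost unchanged, while bins dominated by noise are attenuated towards zero. In practice the same operation would be applied to whole spectrograms as arrays rather than Python lists.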

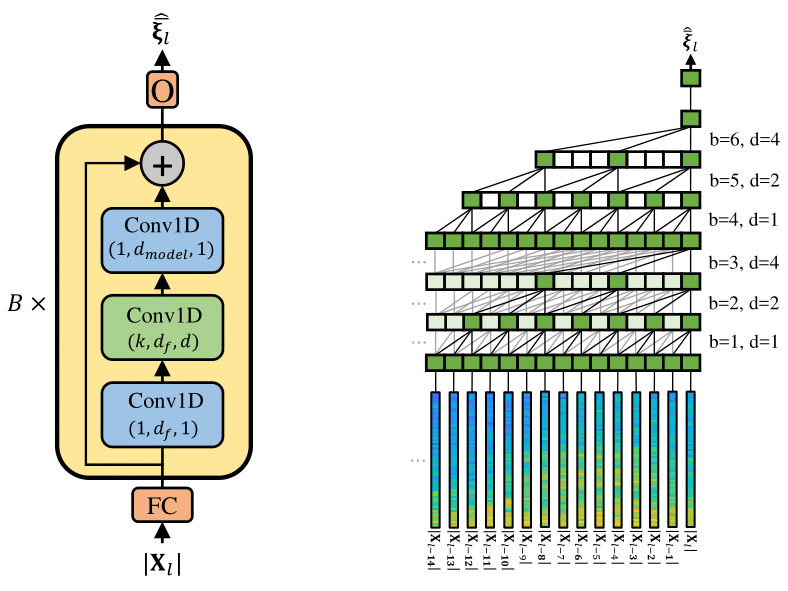

The ResLSTM and ResBLSTM networks used for Deep Xi in [1] have been replaced with a residual network (ResNet) that employs causal dilated convolutional units, a type of temporal convolutional network (TCN). Deep Xi-ResNet can be seen in Figure 2. The full model comprises 2 million parameters, utilises 40 bottleneck blocks, and has a maximum dilation rate of 16. This provides a contextual field of approximately 8 seconds.
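The contextual field figure can be sanity-checked with a short calculation. The sketch below rests on assumptions that are not stated above: each block contains one dilated convolution with a kernel size of 3, the dilation rate cycles through 1, 2, 4, 8, 16 across the 40 blocks, and consecutive frames are 16 ms apart. The function name is hypothetical.

```python
def receptive_field_frames(n_blocks, max_dilation, kernel_size):
    """Receptive field (in frames) of stacked causal dilated convolutions.

    Assumes one dilated convolution per block, with the dilation rate doubling
    each block and resetting to 1 after reaching max_dilation.
    """
    dilations = []
    d = 1
    for _ in range(n_blocks):
        dilations.append(d)
        d = 1 if d >= max_dilation else d * 2
    # Each layer extends the receptive field by (kernel_size - 1) * dilation frames.
    frames = 1 + (kernel_size - 1) * sum(dilations)
    return frames, dilations


if __name__ == "__main__":
    frame_shift_s = 0.016  # assumed 16 ms frame shift
    frames, _ = receptive_field_frames(n_blocks=40, max_dilation=16, kernel_size=3)
    print(f"{frames} frames, {frames * frame_shift_s:.2f} s")
```

Under these assumptions the 40 blocks cover 497 frames, or roughly 7.95 s, which is consistent with the stated contextual field of approximately 8 seconds.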

A trained model for version 3f can be found in the ./model directory.

The objective scores below were obtained on the test set described here. As in previous works, the objective scores are averaged over all tested conditions. CSIG, CBAK, and COVL are mean opinion score (MOS) predictors of the signal distortion, background-noise intrusiveness, and overall signal quality, respectively. PESQ is the perceptual evaluation of speech quality measure. STOI is the short-time objective intelligibility measure (in %). The highest scores attained for each measure are indicated in boldface.

| Method | Causal | CSIG | CBAK | COVL | PESQ | STOI |
|---|---|---|---|---|---|---|
| Noisy speech | -- | 3.35 | 2.44 | 2.63 | 1.97 | 92 (91.5) |
| Wiener | Yes | 3.23 | 2.68 | 2.67 | 2.22 | -- |
| SEGAN | No | 3.48 | 2.94 | 2.80 | 2.16 | 93 |
| WaveNet | No | 3.62 | 3.23 | 2.98 | -- | -- |
| MMSE-GAN | No | 3.80 | 3.12 | 3.14 | 2.53 | 93 |
| Deep Feature Loss | Yes | 3.86 | 3.33 | 3.22 | -- | -- |
| Metric-GAN | No | 3.99 | 3.18 | 3.42 | 2.86 | -- |
| Deep Xi (ResNet 3e, MMSE-LSA) | Yes | 4.12 | 3.33 | 3.48 | 2.82 | 93 (93.3) |

Prerequisites for GPU usage:

To install:

1. `git clone https://github.com/anicolson/DeepXi.git`
2. `virtualenv --system-site-packages -p python3 ~/venv/DeepXi`
3. `source ~/venv/DeepXi/bin/activate`
4. `pip install --upgrade tensorflow-gpu==1.14`
5. `cd DeepXi`
6. `pip install -r requirements.txt`

If a GPU is not to be used, step 4 should be:

`pip install --upgrade tensorflow==1.14`

Inference:

`python3 deepxi.py --infer 1 --out_type y --gain mmse-lsa --ver '3f' --epoch 175 --gpu 0`

For `--out_type`, `y` specifies enhanced speech .wav output. `mmse-lsa` specifies the gain function to use (others include `mmse-stsa`, `wf`, `irm`, `ibm`, `srwf`, and `cwf`).

Training:

`python3 deepxi.py --train 1 --ver 'ANY_NAME' --gpu 0`

Make sure to delete the data directory before training. This allows the training lists and statistics for your training set to be saved and used.

Retraining:

`python3 deepxi.py --train 1 --cont 1 --ver '3f' --epoch 175 --gpu 0`

Other options can be found in `args.py`. If a GPU is not to be used, include the following option: `--gpu ''`

- Masking may need to be performed after each instance of frame-wise layer normalisation due to the scaling and shift properties being applied to the zero padding at the end of each sequence during training. This will be looked into shortly.

The .wav files used for training are single-channel, with a sampling frequency of 16 kHz.
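Before training on your own data, it may help to confirm that every file matches this format. The following is a minimal sketch using Python's standard `wave` module, which only reads PCM-encoded .wav files; the function name `check_wav` is hypothetical and is not part of Deep Xi.

```python
import sys
import wave


def check_wav(path, expected_rate=16000, expected_channels=1):
    """Return a list of format problems for a PCM .wav file (empty if it matches)."""
    problems = []
    with wave.open(path, "rb") as wav:
        if wav.getnchannels() != expected_channels:
            problems.append(
                f"{wav.getnchannels()} channels, expected {expected_channels}"
            )
        if wav.getframerate() != expected_rate:
            problems.append(
                f"{wav.getframerate()} Hz, expected {expected_rate} Hz"
            )
    return problems


if __name__ == "__main__":
    # Usage: python3 check_wav.py file1.wav file2.wav ...
    for wav_path in sys.argv[1:]:
        issues = check_wav(wav_path)
        print(wav_path, "OK" if not issues else "; ".join(issues))
```

Files that fail the check would need to be downmixed or resampled with an audio tool of your choice before being added to the training set.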

The following speech datasets were used:

- The train-clean-100 set from Librispeech corpus, which can be found here.

- The CSTR VCTK corpus, which can be found here.

- The si and sx training sets from the TIMIT corpus, which can be found here (not open source).

The following noise datasets were used:

- The QUT-NOISE dataset, which can be found here.

- The Nonspeech dataset, which can be found here.

- The Environmental Background Noise dataset, which can be found here.

- The noise set from the MUSAN corpus, which can be found here.

- Multiple packs from the FreeSound website, which can be found here.

Please cite the following when using Deep Xi: