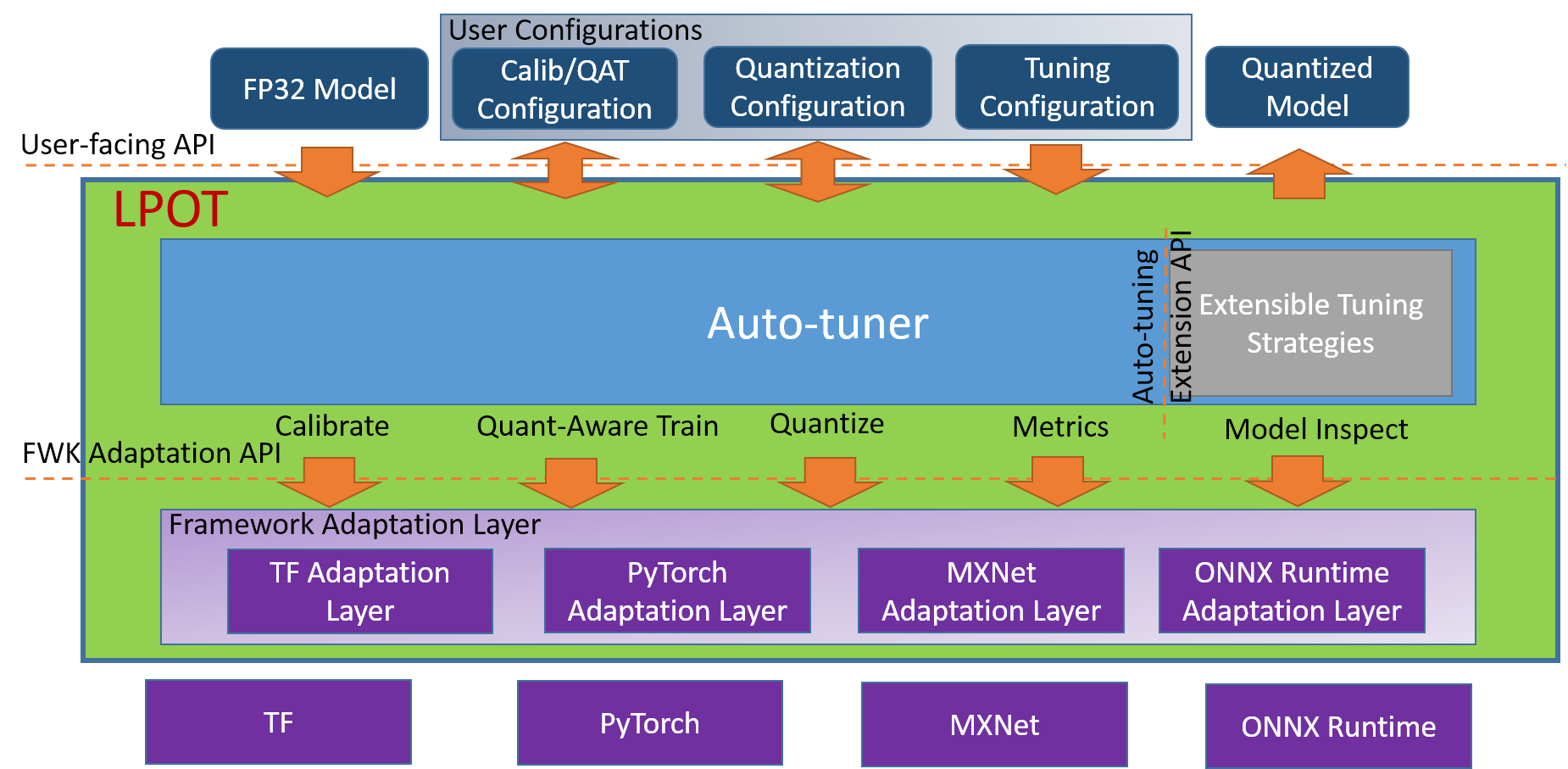

The Intel® Low Precision Optimization Tool (Intel® LPOT) is an open-source Python library that delivers a unified low-precision inference interface across multiple Intel-optimized Deep Learning (DL) frameworks on both CPUs and GPUs. It supports automatic accuracy-driven tuning strategies, along with additional objectives such as optimizing for performance, model size, and memory footprint. It can also be easily extended with new backends, tuning strategies, metrics, and objectives.

Note

GPU support is under development.

Visit the Intel® LPOT online documentation website at: https://intel.github.io/lpot.

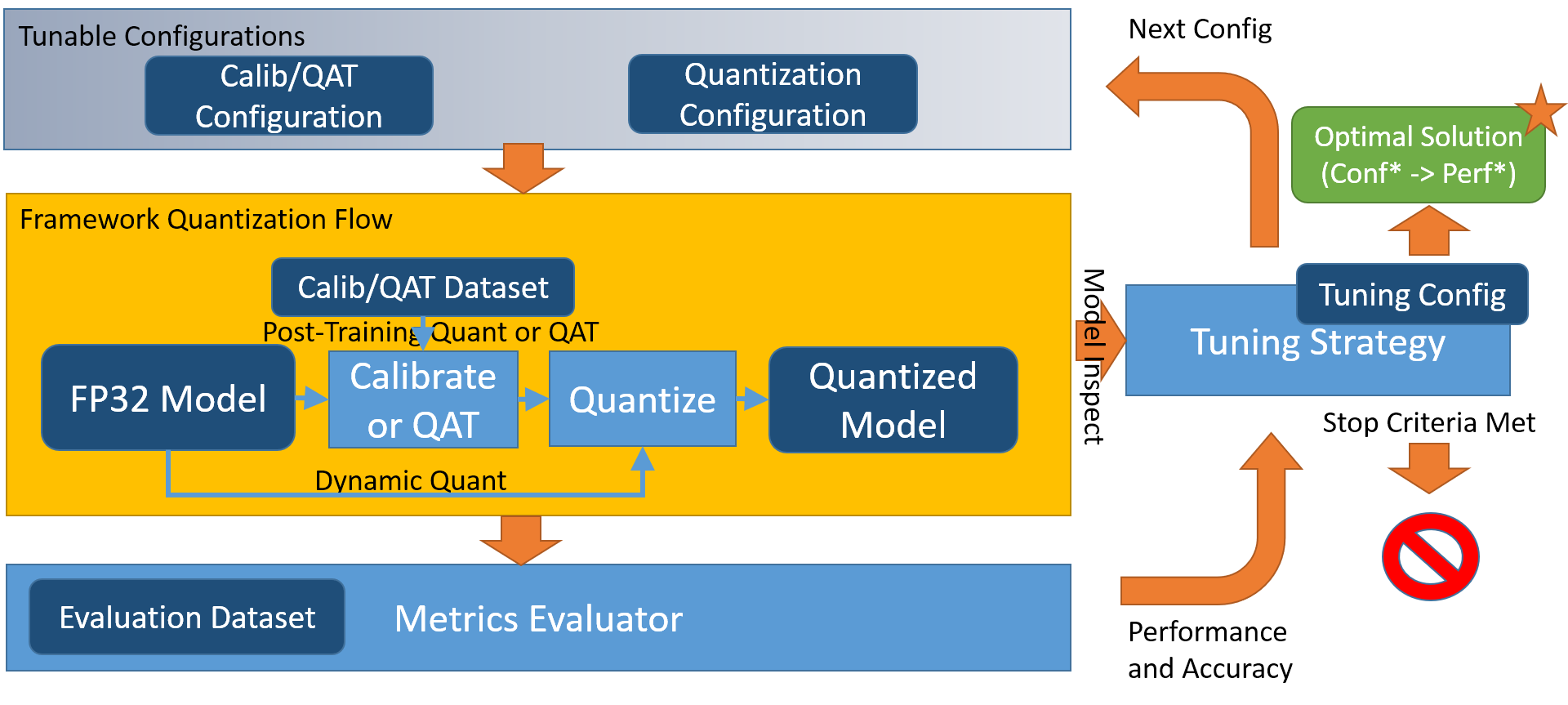

Intel® LPOT features an infrastructure and workflow that help increase performance and speed up deployment across architectures.


Supported Intel-optimized DL frameworks are:

- TensorFlow*, including 1.15.0 UP3, 1.15.0 UP2, 1.15.0 UP1, 2.1.0, 2.2.0, 2.3.0, 2.4.0, 2.5.0

  Note: Intel Optimized TensorFlow 2.5.0 requires setting the environment variable TF_ENABLE_MKL_NATIVE_FORMAT=0 before running LPOT quantization or deploying the quantized model.

- PyTorch*, including 1.5.0+cpu, 1.6.0+cpu, 1.8.0+cpu

- Apache* MXNet, including 1.6.0, 1.7.0

- ONNX* Runtime, including 1.6.0, 1.7.0, 1.8.0
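The TensorFlow 2.5.0 note above can be handled from Python as well as from the shell. The following is a minimal sketch, not part of LPOT: the hypothetical helper `prepare_environment` checks a framework version against the list above (transcribed by hand, so it may drift from later LPOT releases) and, for TensorFlow 2.5.0, sets TF_ENABLE_MKL_NATIVE_FORMAT=0 in the current process. Setting the variable before TensorFlow is imported is assumed here to be the safest point.

```python
import os

# Framework versions transcribed from the list above (an assumption that may
# drift from later LPOT releases; this is not an LPOT API).
SUPPORTED_VERSIONS = {
    "tensorflow": {"1.15.0 UP1", "1.15.0 UP2", "1.15.0 UP3",
                   "2.1.0", "2.2.0", "2.3.0", "2.4.0", "2.5.0"},
    "pytorch": {"1.5.0+cpu", "1.6.0+cpu", "1.8.0+cpu"},
    "mxnet": {"1.6.0", "1.7.0"},
    "onnxruntime": {"1.6.0", "1.7.0", "1.8.0"},
}


def prepare_environment(framework: str, version: str) -> bool:
    """Hypothetical helper: report whether (framework, version) is listed above.

    For Intel Optimized TensorFlow 2.5.0 it also sets TF_ENABLE_MKL_NATIVE_FORMAT=0
    in this process, as the note requires. Call it before importing TensorFlow.
    """
    name = framework.lower()
    if name == "tensorflow" and version == "2.5.0":
        os.environ["TF_ENABLE_MKL_NATIVE_FORMAT"] = "0"
    return version in SUPPORTED_VERSIONS.get(name, set())


if __name__ == "__main__":
    ok = prepare_environment("tensorflow", "2.5.0")
    print(ok, os.environ.get("TF_ENABLE_MKL_NATIVE_FORMAT"))
    # import tensorflow as tf  # import only after the variable is set
```

Exporting the variable in the shell before launching Python achieves the same thing; the Python form simply keeps the setting next to the code that depends on it.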

Select the installation based on your operating system.

You can install LPOT using one of three options: install just the LPOT library from binary, install it from source, or get the Intel-optimized framework together with the LPOT library by installing the Intel® oneAPI AI Analytics Toolkit.

Install from binary

```shell
# install stable version from pip
pip install lpot

# install nightly version from pip
pip install -i https://test.pypi.org/simple/ lpot

# install stable version from conda
conda install lpot -c conda-forge -c intel
```

Install from source

```shell
git clone https://github.com/intel/lpot.git
cd lpot
pip install -r requirements.txt
python setup.py install
```

Install from the AI Kit

The Intel® LPOT library is released as part of the Intel® oneAPI AI Analytics Toolkit (AI Kit). The AI Kit provides a consolidated package of Intel's latest deep learning and machine learning optimizations all in one place for ease of development. Along with LPOT, the AI Kit includes Intel-optimized versions of deep learning frameworks (such as TensorFlow and PyTorch) and high-performing Python libraries to streamline end-to-end data science and AI workflows on Intel architectures.

The AI Kit is distributed through many common channels, including Intel's website, YUM, APT, Anaconda, and more. Select and download the AI Kit distribution package that best suits you and follow the Get Started Guide for post-installation instructions.

| Download AI Kit | AI Kit Get Started Guide |
|---|---|

Prerequisites

The following prerequisites and requirements must be satisfied for a successful installation:

- Python version: 3.6, 3.7, 3.8, or 3.9

- Download and install Anaconda.

- Create a virtual environment named lpot in Anaconda:

```shell
# Here we install Python 3.7 as an example. You can also choose Python 3.6, 3.8, or 3.9.
conda create -n lpot python=3.7
conda activate lpot
```
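Because the supported interpreter versions are fixed, it can help to confirm the activated environment before installing. A small standard-library sketch; `python_is_supported` is a hypothetical helper, not something LPOT ships, and the version set is copied from the prerequisites above.

```python
import sys
from typing import Sequence

# Python versions listed in the prerequisites above.
SUPPORTED_PYTHON = {(3, 6), (3, 7), (3, 8), (3, 9)}


def python_is_supported(version_info: Sequence[int] = sys.version_info) -> bool:
    """Return True if the major.minor version is in the supported list."""
    return tuple(version_info[:2]) in SUPPORTED_PYTHON


major, minor = sys.version_info[:2]
verdict = "supported" if python_is_supported() else "not in the supported list"
print(f"Python {major}.{minor} is {verdict} for this LPOT release")
```

Run it with the interpreter from the activated lpot environment so the check reflects the Python that LPOT will be installed into.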

Get Started

- APIs explains Intel® Low Precision Optimization Tool's API.

- Transform introduces how to utilize LPOT's built-in data processing and how to develop a custom data processing method.

- Dataset introduces how to utilize LPOT's built-in dataset and how to develop a custom dataset.

- Metric introduces how to utilize LPOT's built-in metrics and how to develop a custom metric.

- Tutorial provides comprehensive instructions on how to utilize LPOT's features with examples.

- Examples are provided to demonstrate the usage of LPOT in different frameworks: TensorFlow, PyTorch, MXNet, and ONNX Runtime.

- UX is a web-based system used to simplify LPOT usage.

- Intel oneAPI AI Analytics Toolkit Get Started Guide explains the AI Kit components, installation and configuration guides, and instructions for building and running sample apps.

- AI and Analytics Samples includes code samples for Intel oneAPI libraries.

Deep Dive

- Quantization refers to processes that enable inference and training by performing computations in low-precision data types, such as fixed-point integers. LPOT supports Post-Training Quantization (PTQ) and Quantization-Aware Training (QAT). Note that Dynamic Quantization currently has limited support.

- Pruning provides a common method for introducing sparsity in weights and activations.

- Benchmarking introduces how to utilize the benchmark interface of LPOT.

- Mixed precision introduces how to enable mixed precision, including BF16, INT8, and FP32, on Intel platforms during tuning.

- Graph Optimization introduces how to enable graph optimization for FP32 and auto-mixed precision.

- Model Conversion introduces how to convert a TensorFlow QAT model to a quantized model that runs on Intel platforms.

- TensorBoard provides tensor histograms and execution graphs for debugging during tuning.
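To make the Quantization and Pruning items above concrete, here is a minimal pure-Python sketch of the underlying arithmetic. It assumes symmetric per-tensor INT8 quantization (scale = max|x| / 127) and unstructured magnitude pruning; both are common textbook schemes chosen for illustration, not a description of what LPOT selects for a given model or framework. All function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor INT8 quantization: q = clamp(round(x / scale), -127, 127)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid division by zero
    # Python's round() rounds half to even.
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map INT8 codes back to approximate FP32 values."""
    return [x * scale for x in q]


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    k = int(len(weights) * sparsity)  # number of weights to zero out
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:k])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


w = [0.5, -1.27, 0.01, 0.9, -0.2]
q, scale = quantize_int8(w)
print(q)                        # [50, -127, 1, 90, -20]
print(dequantize_int8(q, scale))
print(magnitude_prune(w, 0.4))  # [0.5, -1.27, 0.0, 0.9, 0.0]
```

Because the scale is derived from the largest magnitude, every value maps inside [-127, 127], so the round-trip error per element is at most scale / 2. Real quantization runs inside the framework through LPOT's adaptors and operates on whole tensors rather than Python lists.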

Advanced Topics

- Adaptor is the interface between LPOT and a framework. It describes how to develop an adaptor extension, using ONNX Runtime as an example.

- Strategy automatically optimizes low-precision recipes for deep learning models to achieve optimal product objectives, such as inference performance and memory usage, while meeting the expected accuracy criteria. It also describes how to develop a new strategy.
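The Strategy item describes accuracy-driven tuning. As a rough illustration of the idea only, the sketch below implements a simple greedy FP32-fallback search: quantize every op, then fall back ops to FP32 one at a time until a relative accuracy tolerance is met. The evaluator callback, the op names, the penalty numbers, and the 1% default tolerance are all assumptions for illustration; this is neither LPOT's strategy API nor a claim about how its built-in strategies order their trials.

```python
from typing import Callable, FrozenSet, List, Optional

# Hypothetical evaluator: given the ops kept in FP32 (all others INT8),
# return the model's accuracy on a validation set.
Evaluate = Callable[[FrozenSet[str]], float]


def meets_criterion(acc: float, baseline: float, tolerance: float) -> bool:
    """Relative accuracy criterion: (baseline - acc) / baseline <= tolerance."""
    return (baseline - acc) / baseline <= tolerance


def greedy_fallback_tune(ops: List[str], evaluate: Evaluate, baseline: float,
                         tolerance: float = 0.01) -> Optional[FrozenSet[str]]:
    """Return the ops to keep in FP32, or None if no fallback set meets the goal.

    Starts with every op in INT8. While the accuracy goal is missed, it tries
    falling back each remaining op to FP32 and keeps the single change that
    yields the highest accuracy.
    """
    fp32_ops: FrozenSet[str] = frozenset()
    acc = evaluate(fp32_ops)
    while not meets_criterion(acc, baseline, tolerance):
        remaining = [op for op in ops if op not in fp32_ops]
        if not remaining:
            return None  # even a full FP32 fallback misses the goal
        acc, best_op = max((evaluate(fp32_ops | {op}), op) for op in remaining)
        fp32_ops = fp32_ops | {best_op}
    return fp32_ops


# Toy demo with made-up per-op accuracy penalties (not measured data).
penalty = {"conv1": 0.2, "conv2": 0.1, "fc": 1.5}
fp32_baseline = 76.0


def toy_evaluate(fp32_ops: FrozenSet[str]) -> float:
    """Accuracy drops by each quantized op's penalty."""
    return fp32_baseline - sum(p for op, p in penalty.items() if op not in fp32_ops)


print(greedy_fallback_tune(list(penalty), toy_evaluate, fp32_baseline))
# frozenset({'fc'})
```

In the worst case this greedy search needs on the order of n² evaluations for n ops, which is one reason a practical strategy may also rank ops by sensitivity or cap the number of trials.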

Intel® Low Precision Optimization Tool supports systems based on Intel 64 architecture or compatible processors and is especially optimized for the following CPUs:

- Intel Xeon Scalable processors (formerly Skylake, Cascade Lake, Cooper Lake, and Ice Lake)

- Future Intel Xeon Scalable processors (code name Sapphire Rapids)

Intel® Low Precision Optimization Tool requires installing the pertinent Intel-optimized framework version for TensorFlow, PyTorch, MXNet, and ONNX Runtime.

| Platform | OS | Python | Framework | Version |
|---|---|---|---|---|
| Cascade Lake, Cooper Lake, Skylake, Ice Lake | CentOS 8.3, Ubuntu 18.04 | 3.6, 3.7, 3.8, 3.9 | TensorFlow | 2.5.0 |
| | | | | 2.4.0 |
| | | | | 2.3.0 |
| | | | | 2.2.0 |
| | | | | 2.1.0 |
| | | | | 1.15.0 UP1 |
| | | | | 1.15.0 UP2 |
| | | | | 1.15.0 UP3 |
| | | | | 1.15.2 |
| | | | PyTorch | 1.5.0+cpu |
| | | | | 1.6.0+cpu |
| | | | | 1.8.0+cpu |
| | | | | IPEX |
| | | | MXNet | 1.7.0 |
| | | | | 1.6.0 |
| | | | ONNX Runtime | 1.6.0 |
| | | | | 1.7.0 |
| | | | | 1.8.0 |

Intel® Low Precision Optimization Tool provides numerous examples that show small accuracy loss with significant performance gains. A full list of quantized models across frameworks is available in the Model List.

| Framework | Version | Model | INT8 Tuning Accuracy | FP32 Accuracy Baseline | Acc Ratio [(INT8-FP32)/FP32] | Realtime Latency Ratio [FP32/INT8] |
|---|---|---|---|---|---|---|
| tensorflow | 2.4.0 | resnet50v1.5 | 76.92% | 76.46% | 0.60% | 3.37x |
| tensorflow | 2.4.0 | resnet101 | 77.18% | 76.45% | 0.95% | 2.53x |
| tensorflow | 2.4.0 | inception_v1 | 70.41% | 69.74% | 0.96% | 1.89x |
| tensorflow | 2.4.0 | inception_v2 | 74.36% | 73.97% | 0.53% | 1.95x |
| tensorflow | 2.4.0 | inception_v3 | 77.28% | 76.75% | 0.69% | 2.37x |
| tensorflow | 2.4.0 | inception_v4 | 80.39% | 80.27% | 0.15% | 2.60x |
| tensorflow | 2.4.0 | inception_resnet_v2 | 80.38% | 80.40% | -0.02% | 1.98x |
| tensorflow | 2.4.0 | mobilenetv1 | 73.29% | 70.96% | 3.28% | 2.93x |
| tensorflow | 2.4.0 | ssd_resnet50_v1 | 37.98% | 38.00% | -0.05% | 2.99x |
| tensorflow | 2.4.0 | mask_rcnn_inception_v2 | 28.62% | 28.73% | -0.38% | 2.96x |
| tensorflow | 2.4.0 | vgg16 | 72.11% | 70.89% | 1.72% | 3.76x |
| tensorflow | 2.4.0 | vgg19 | 72.36% | 71.01% | 1.90% | 3.85x |
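The two ratio columns follow directly from their header formulas. A short sketch (the helper names are hypothetical) that recomputes the Acc Ratio for a few rows of the TensorFlow table above; the latency helper expects latencies you measure yourself, since the table does not list raw latencies.

```python
def acc_ratio(int8_acc: float, fp32_acc: float) -> float:
    """Relative accuracy change (INT8 - FP32) / FP32, as in the table header."""
    return (int8_acc - fp32_acc) / fp32_acc


def latency_speedup(fp32_latency: float, int8_latency: float) -> float:
    """Realtime latency ratio FP32 / INT8; values above 1.0 mean INT8 is faster."""
    return fp32_latency / int8_latency


# (INT8 accuracy, FP32 baseline) pairs copied from the TensorFlow table above.
rows = {
    "resnet50v1.5": (76.92, 76.46),
    "mobilenetv1": (73.29, 70.96),
    "inception_resnet_v2": (80.38, 80.40),
}
for model, (int8_acc, fp32_acc) in rows.items():
    print(f"{model}: {acc_ratio(int8_acc, fp32_acc):.2%}")
# resnet50v1.5: 0.60%
# mobilenetv1: 3.28%
# inception_resnet_v2: -0.02%
```

A positive Acc Ratio means the INT8 model scored higher than its FP32 baseline; the ratio is relative, so 0.60% here is not the same as 0.60 accuracy points.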

| Framework | Version | Model | INT8 Tuning Accuracy | FP32 Accuracy Baseline | Acc Ratio [(INT8-FP32)/FP32] | Realtime Latency Ratio [FP32/INT8] |
|---|---|---|---|---|---|---|
| pytorch | 1.5.0+cpu | resnet50 | 75.96% | 76.13% | -0.23% | 2.46x |
| pytorch | 1.5.0+cpu | resnext101_32x8d | 79.12% | 79.31% | -0.24% | 2.63x |
| pytorch | 1.6.0a0+24aac32 | bert_base_mrpc | 88.90% | 88.73% | 0.19% | 2.10x |
| pytorch | 1.6.0a0+24aac32 | bert_base_cola | 59.06% | 58.84% | 0.37% | 2.23x |
| pytorch | 1.6.0a0+24aac32 | bert_base_sts-b | 88.40% | 89.27% | -0.97% | 2.13x |
| pytorch | 1.6.0a0+24aac32 | bert_base_sst-2 | 91.51% | 91.86% | -0.37% | 2.32x |
| pytorch | 1.6.0a0+24aac32 | bert_base_rte | 69.31% | 69.68% | -0.52% | 2.03x |
| pytorch | 1.6.0a0+24aac32 | bert_large_mrpc | 87.45% | 88.33% | -0.99% | 2.65x |
| pytorch | 1.6.0a0+24aac32 | bert_large_squad | 92.85 | 93.05 | -0.21% | 1.92x |
| pytorch | 1.6.0a0+24aac32 | bert_large_qnli | 91.20% | 91.82% | -0.68% | 2.59x |
| pytorch | 1.6.0a0+24aac32 | bert_large_rte | 71.84% | 72.56% | -0.99% | 1.34x |
| pytorch | 1.6.0a0+24aac32 | bert_large_cola | 62.74% | 62.57% | 0.27% | 2.67x |