Paper | Documentation | Leaderboard | Dataset & Benchmark

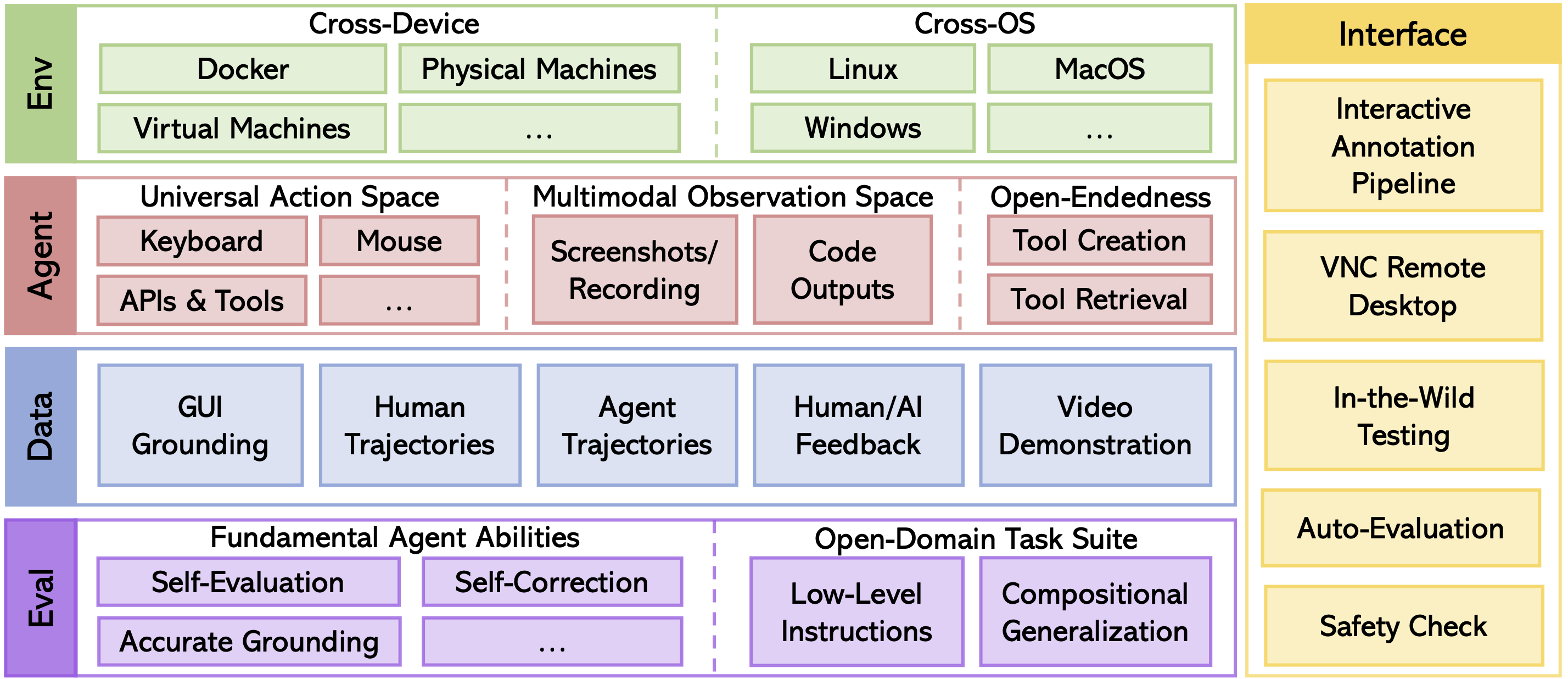

AgentStudio is an open toolkit covering the entire lifespan of building virtual agents that can interact with everything in digital worlds. Here, we open-source beta versions of the environment implementations, benchmark suite, data collection pipeline, and graphical interfaces to promote research towards generalist virtual agents of the future.

We plan to expand the collection of environments, tasks, and data over time. Contributions and feedback from everyone on how to make this into a better tool are more than welcome, no matter the scale. Please check out CONTRIBUTING.md for how to get involved.

Note that the toolkit may perform irreversible actions, such as deleting files, creating files, running commands, and deleting Google Calendar events.

Please make sure you host the toolkit in a safe environment (e.g., a virtual machine or Docker container) or have backups of your data.

Some tasks may require you to provide API keys. Before running these tasks, please make sure the associated account does not contain important data.

Test one example from the dataset:

python eval_dataset.py --start_idx 0 --end_idx 1 --data_path data/grounding/windows/powerpoint/actions.jsonl --provider gpt-4-vision-preview

Run complete experiments over the dataset:

python eval_dataset.py --data_path data/grounding/windows/powerpoint/actions.jsonl --provider gpt-4-vision-preview

Install requirements:

apt-get install gnome-screenshot xclip xdotool # If using Ubuntu 22.04

conda create --name agent-studio python=3.11 -y

conda activate agent-studio

pip install -r requirements.txt

pip install -e .

The following command downloads the task suite and agent trajectories from Hugging Face (you may need to configure Hugging Face and Git LFS first):

git submodule update --init --remote --recursive

Alternatively, you can directly clone the dataset repository:

git clone git@hf.co:datasets/Skywork/agent-studio-data data

Please refer to the doc for detailed instructions.
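Once the data is downloaded, it can help to look at a few records before launching an evaluation. The snippet below is a minimal sketch, not part of AgentStudio: `iter_jsonl_slice` is a hypothetical helper that reads a JSON Lines file and yields the records in the half-open range `[start_idx, end_idx)`. That range convention is an assumption, chosen to match the `--start_idx 0 --end_idx 1` example that tests one record. No field names are assumed.

```python
import json
from itertools import islice
from pathlib import Path
from typing import Any, Iterator, Optional


def iter_jsonl_slice(
    path: Path, start_idx: int = 0, end_idx: Optional[int] = None
) -> Iterator[Any]:
    """Yield parsed records with start_idx <= index < end_idx from a JSONL file."""
    with path.open("r", encoding="utf-8") as f:
        # Skip blank lines so they do not shift the record indices.
        records = (json.loads(line) for line in f if line.strip())
        yield from islice(records, start_idx, end_idx)


if __name__ == "__main__":
    # Path taken from the eval_dataset.py examples in this README.
    data_path = Path("data/grounding/windows/powerpoint/actions.jsonl")
    if data_path.exists():
        for record in iter_jsonl_slice(data_path, 0, 1):
            # Only list the keys; the record schema is not assumed here.
            print(sorted(record) if isinstance(record, dict) else record)
    else:
        print(f"{data_path} not found; download the dataset first.")
```

Reading lazily with `islice` avoids loading the whole file when only the first few records are needed.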

This step is optional and only needed for running GUI tasks in a Docker container.

Build Docker image:

docker build -f dockerfiles/Dockerfile.ubuntu.amd64 . -t agent-studio:latest

You may modify config.py to configure the environment.

- headless: Set to False for GUI mode or True for CLI mode.
- remote: Set to True for running experiments in Docker or on remote machines. Otherwise, experiments will run locally.
- task_config_paths: The path to the task configuration file.
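To make the interaction between the two boolean options concrete, here is a small illustrative sketch. It does not reproduce the actual layout of config.py: `RunSetup`, `LOCAL_HEADLESS`, `REMOTE_GUI`, and `describe` are hypothetical names introduced only for this example, and the real settings should be edited in config.py itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunSetup:
    """Hypothetical container for the two boolean options described above."""

    headless: bool  # False = GUI mode, True = CLI mode
    remote: bool    # True = Docker or remote machine, False = local


# The two combinations used in this README.
LOCAL_HEADLESS = RunSetup(headless=True, remote=False)
REMOTE_GUI = RunSetup(headless=False, remote=True)


def describe(setup: RunSetup) -> str:
    """Summarize a setup in plain words."""
    interface = "CLI" if setup.headless else "GUI"
    location = "Docker or remote machine" if setup.remote else "local machine"
    return f"{interface} mode on {location}"


if __name__ == "__main__":
    for setup in (LOCAL_HEADLESS, REMOTE_GUI):
        print(describe(setup))
```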

Set headless = True and remote = False. This is the simplest setup and is suitable for evaluating agents that do not require a GUI (e.g., agents that use Google APIs).

Start benchmarking:

python run.py --mode eval

Set headless = False and remote = True. This setup is suitable for evaluating agents on visual tasks. The remote machine can be either a Docker container or a remote host, connected via VNC remote desktop.

docker run -d -e RESOLUTION=1024x768 -p 5900:5900 -p 8000:8000 -e VNC_PASSWORD=123456 -v /dev/shm:/dev/shm -v ${PWD}/agent_studio/config/:/home/ubuntu/agent_studio/agent_studio/config -v ${PWD}/data:/home/ubuntu/agent_studio/data:ro agent-studio:latest

Start benchmarking:

python run.py --mode eval

Please refer to our documentation for detailed instructions on environment setup, running experiments, recording datasets, adding new tasks, and troubleshooting.
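When running against a Docker container, a quick connectivity check before benchmarking can save debugging time. The following is a minimal sketch, not part of AgentStudio: `port_is_open` is a hypothetical helper that attempts a TCP connection to each of the two ports published by the docker run command above (5900 and 8000). It assumes the container runs on localhost.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # Ports taken from the -p flags of the docker run command.
    for port in (5900, 8000):
        status = "reachable" if port_is_open("localhost", port) else "not reachable"
        print(f"localhost:{port} is {status}")
```

A successful TCP connection only shows that something is listening on the port; it does not verify the VNC password or that the environment inside the container is fully initialized.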

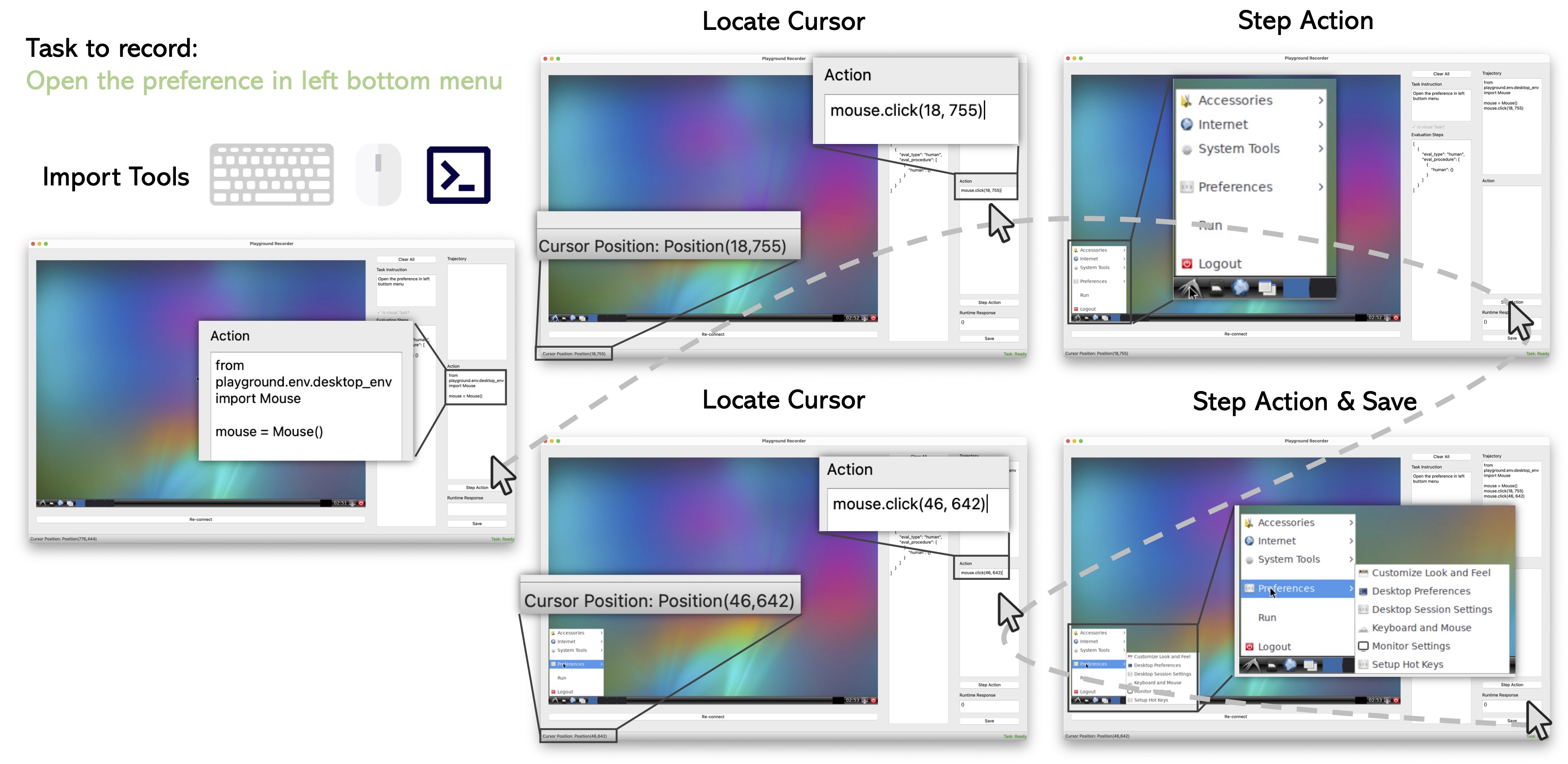

Here is an example of recording human demonstrations:

We provide a simple annotator for GUI grounding data. Please refer to the doc for detailed instructions.

We would like to thank the following projects for their inspiration and contributions to the open-source community:

If you find AgentStudio useful, please cite our paper:

@article{zheng2024agentstudio,
  title={AgentStudio: A Toolkit for Building General Virtual Agents},
  author={Longtao Zheng and Zhiyuan Huang and Zhenghai Xue and Xinrun Wang and Bo An and Shuicheng Yan},
  journal={arXiv preprint arXiv:2403.17918},
  year={2024}
}