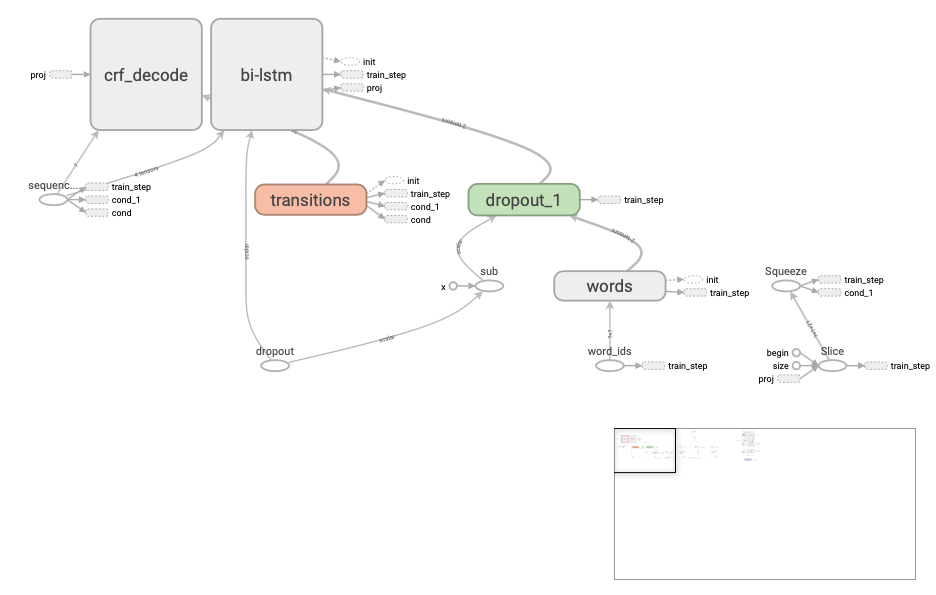

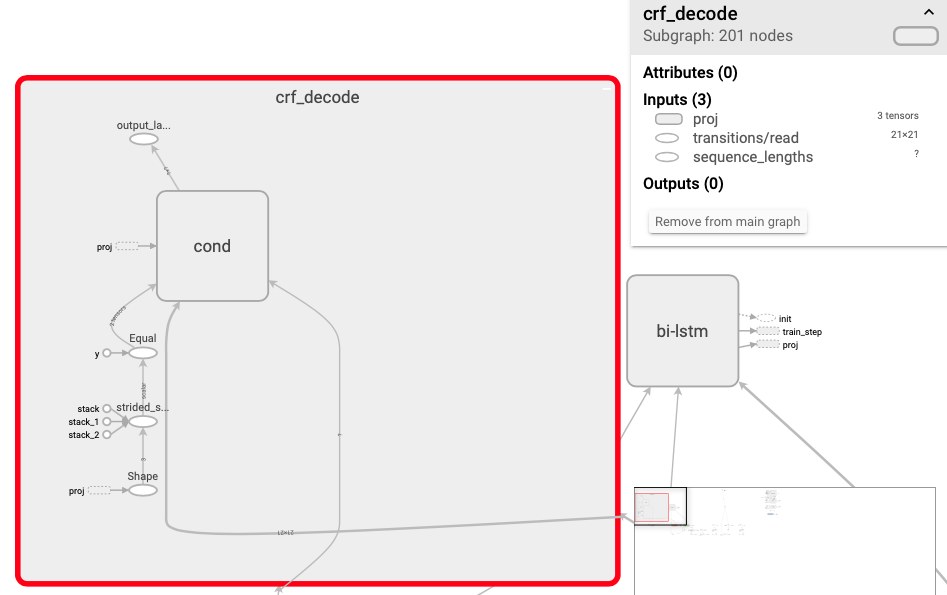

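To export a model that can be called from other languages (C++/Java), the graph's inputs and outputs must be given explicit names. Note that when defining the Graph outputs, unlike the usual approach of decoding the CRF output directly, a CRF decoding layer has to be added to the graph.

1. Fine-grained sentiment analysis
2. Fixed input and output names
3. Added CRF-Decode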

```python
import tensorflow as tf  # TensorFlow 1.x (tf.contrib is required)

# Named placeholders, so that C++/Java callers can feed tensors by name.
self.word_ids = tf.placeholder(tf.int32, shape=[None, None], name="word_ids")
self.labels = tf.placeholder(tf.int32, shape=[None, None], name="labels")
self.sequence_lengths = tf.placeholder(tf.int32, shape=[None], name="sequence_lengths")
self.dropout_pl = tf.placeholder(dtype=tf.float32, shape=[], name="dropout")
# self.lr_pl = tf.placeholder(dtype=tf.float32, shape=[], name="lr")
self.lr_pl = tf.Variable(initial_value=self.lr, name='lr', trainable=False)

with tf.variable_scope("crf_decode"):
    # crf_decode returns (decoded tag ids, best score); the first element,
    # stored here as best_score, holds the decoded tag ids.
    self.best_score, _ = tf.contrib.crf.crf_decode(
        self.logits, self.transition_params, self.sequence_lengths)
    # Expose the decoded tags under a fixed name.
    tf.identity(self.best_score, name="output_labels")

# Store the transition matrix as a non-trainable variable.
self.transition_params = tf.Variable(
    initial_value=self.transition_params, name='transition_params', trainable=False)

# Export a SavedModel with fixed input and output names.
tf.saved_model.simple_save(
    sess, self.model_path,
    inputs={"word_ids": self.word_ids,
            "dropout": self.dropout_pl,
            "sequence_lengths": self.sequence_lengths},
    outputs={"best_score": self.best_score})
```

Download: https://github.com/CLUEbenchmark/CLUE

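This dataset is built on THUCTC, the open-source text classification dataset from Tsinghua University: a subset of the data was selected and annotated with fine-grained named entities. The original data comes from Sina News RSS.

Training set: 10748; validation set: 1343

Label categories: the data is divided into 10 label categories: address, book, company, game, government, movie, name, organization, position, scene

CLUENER download link: data download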

data_path_save/1591586134/checkpoints/model/

A C++ project that calls the .pb model; it depends on the libtensorflow_cc.so shared library and the TensorFlow include header files. Xcode C++ project: TF-NER

This repository includes the code for building a very simple character-based BiLSTM-CRF sequence labeling model for the Chinese Named Entity Recognition task. Its goal is to recognize three types of Named Entity: PERSON, LOCATION and ORGANIZATION.

This code works on Python 3 and TensorFlow 1.2. The repository https://github.com/guillaumegenthial/sequence_tagging was a great help.

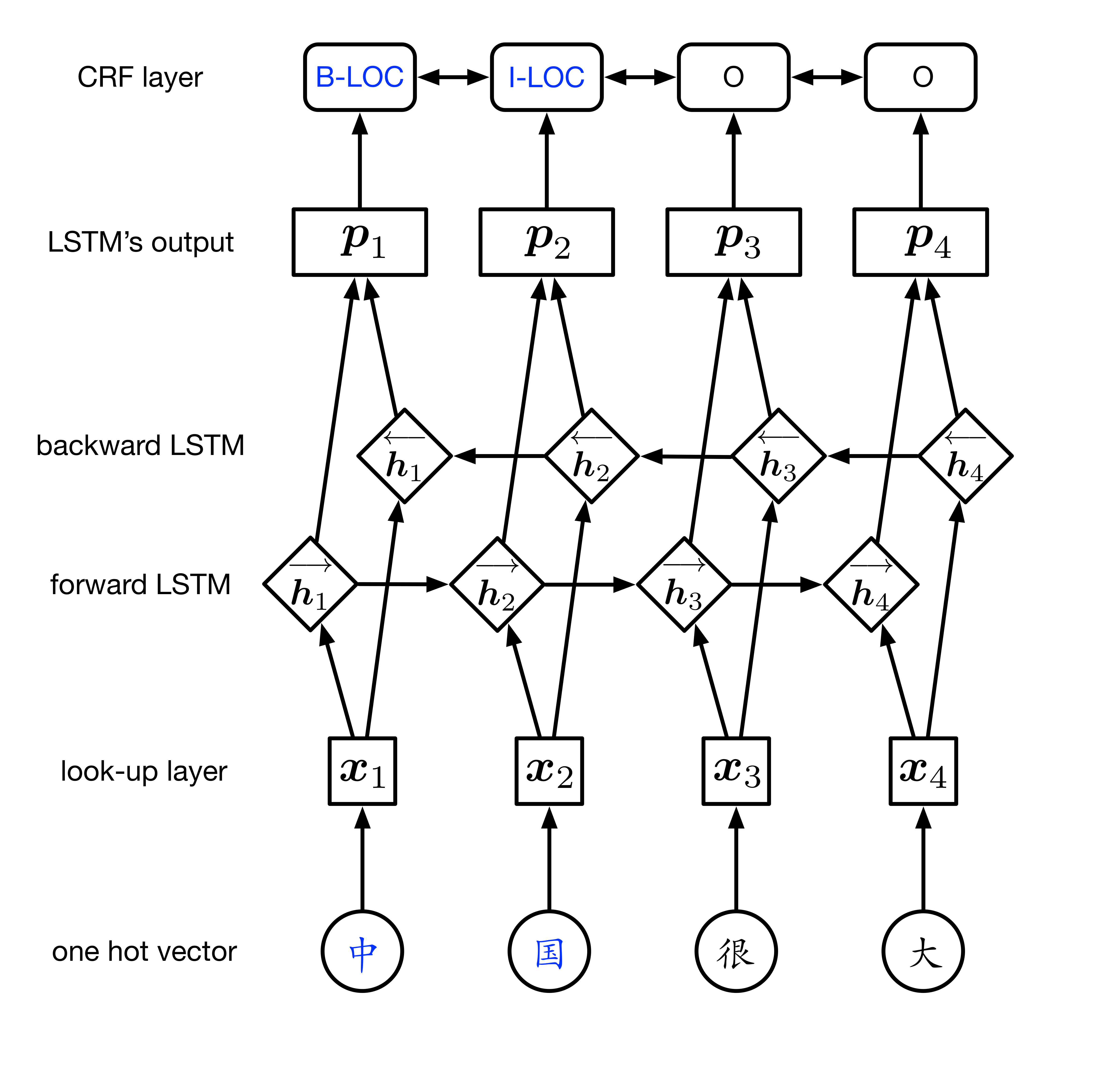

This model is similar to the models provided by paper [1] and [2]. Its structure looks just like the following illustration:

For one Chinese sentence, each character in this sentence has / will have a tag which belongs to the set {O, B-PER, I-PER, B-LOC, I-LOC, B-ORG, I-ORG}.
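To make the tag set concrete, here is a minimal sketch of how per-character BIO tags could be grouped back into entity spans. The function name `bio_to_entities` is hypothetical and not part of this repository; it assumes an entity starts at `B-X` and continues only over `I-X` tags of the same type, and it ignores stray `I-` tags that have no preceding `B-`.

```python
from typing import List, Tuple


def bio_to_entities(chars: List[str], tags: List[str]) -> List[Tuple[str, str, int, int]]:
    """Group BIO tags into (text, type, start, end) spans; end is exclusive."""
    entities = []
    start, etype = None, None
    for i, tag in enumerate(tags + ["O"]):  # the sentinel "O" flushes the last entity
        # An open entity continues only on an I- tag of the same type.
        continues = start is not None and tag == "I-" + etype
        if start is not None and not continues:
            entities.append(("".join(chars[start:i]), etype, start, i))
            start, etype = None, None
        if tag.startswith("B-"):
            start, etype = i, tag[2:]
    return entities


chars = list("中国很大")
tags = ["B-LOC", "I-LOC", "O", "O"]
print(bio_to_entities(chars, tags))  # [('中国', 'LOC', 0, 2)]
```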

The first layer, look-up layer, aims at transforming each character representation from one-hot vector into character embedding. In this code I initialize the embedding matrix randomly. We could add some linguistic knowledge later. For example, do tokenization and use pre-trained word-level embedding, then augment character embedding with the corresponding token's word embedding. In addition, we can get the character embedding by combining low-level features (please see paper[2]'s section 4.1 and paper[3]'s section 3.3 for more details).
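As an illustration of the look-up layer, the following sketch shows that multiplying a one-hot vector by the embedding matrix gives the same result as simply selecting one row of that matrix. The toy vocabulary, the embedding size and the initialisation range are assumptions chosen for the example, not values taken from this code.

```python
import random


def one_hot(index, size):
    """Return a one-hot row vector of length `size`."""
    return [1.0 if i == index else 0.0 for i in range(size)]


def matvec(vec, matrix):
    """Multiply a row vector of length V by a V x D matrix."""
    dim = len(matrix[0])
    return [sum(vec[v] * matrix[v][d] for v in range(len(vec))) for d in range(dim)]


random.seed(0)
vocab = {"<PAD>": 0, "中": 1, "国": 2, "<UNK>": 3}  # toy vocabulary (assumption)
embedding_dim = 4                                    # toy size (assumption)
# Randomly initialised embedding matrix, one row per character id.
embeddings = [[random.uniform(-0.25, 0.25) for _ in range(embedding_dim)]
              for _ in vocab]

char_id = vocab["国"]
via_one_hot = matvec(one_hot(char_id, len(vocab)), embeddings)
via_lookup = embeddings[char_id]  # what an embedding look-up computes
print(via_one_hot == via_lookup)
```

In practice the look-up form is used because it avoids building the one-hot vectors at all.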

The second layer, BiLSTM layer, can efficiently use both past and future input information and extract features automatically.

The third layer, CRF layer, labels the tag for each character in one sentence. If we use a Softmax layer for labeling, we might get ungrammatical tag sequences because the Softmax layer labels each position independently. We know that 'I-LOC' cannot follow 'B-PER' but Softmax doesn't know. Compared to Softmax, a CRF layer can use sentence-level tag information and model the transition behavior between each pair of tags.
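The difference between independent labeling and CRF-style decoding can be sketched in plain Python. This is not the TensorFlow implementation used in this code; the emission scores and the hand-set transition scores below are made-up assumptions (a trained CRF learns its transition scores from data), and only a reduced tag set is used to keep the example short.

```python
TAGS = ["O", "B-PER", "I-PER", "B-LOC", "I-LOC"]


def allowed(prev, curr):
    """BIO constraint: I-X may only follow B-X or I-X."""
    if curr.startswith("I-"):
        return prev in ("B-" + curr[2:], "I-" + curr[2:])
    return True


# Hand-set transition scores (assumption): 0 for allowed moves, a large penalty otherwise.
TRANS = {(p, c): (0.0 if allowed(p, c) else -1e4) for p in TAGS for c in TAGS}


def greedy(emissions):
    """Softmax-style labeling: pick the best tag at each position independently."""
    return [max(TAGS, key=lambda t: scores[t]) for scores in emissions]


def viterbi(emissions):
    """Find the tag sequence with the highest total emission + transition score."""
    best = dict(emissions[0])  # best score of any path ending in each tag
    back = []
    for scores in emissions[1:]:
        prev_best, best, pointers = best, {}, {}
        for curr in TAGS:
            prev = max(TAGS, key=lambda p: prev_best[p] + TRANS[(p, curr)])
            best[curr] = prev_best[prev] + TRANS[(prev, curr)] + scores[curr]
            pointers[curr] = prev
        back.append(pointers)
    tag = max(TAGS, key=lambda t: best[t])
    path = [tag]
    for pointers in reversed(back):  # follow back-pointers to recover the path
        tag = pointers[tag]
        path.append(tag)
    return path[::-1]


# Made-up emission scores for a two-character input.
emissions = [
    {"O": 0.1, "B-PER": 2.0, "I-PER": 0.0, "B-LOC": 1.5, "I-LOC": 0.0},
    {"O": 0.5, "B-PER": 0.0, "I-PER": 1.0, "B-LOC": 0.0, "I-LOC": 1.2},
]
print(greedy(emissions))   # ['B-PER', 'I-LOC'], an invalid sequence
print(viterbi(emissions))  # ['B-PER', 'I-PER']
```

The per-position choice prefers 'I-LOC' at the second character, while the sequence-level search trades a slightly lower emission score for a valid transition.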

| | #sentence | #PER | #LOC | #ORG |
| --- | --- | --- | --- | --- |
| train | 46364 | 17615 | 36517 | 20571 |
| test | 4365 | 1973 | 2877 | 1331 |

It looks like a portion of MSRA corpus. I downloaded the dataset from the link in `./data_path/original/link.txt`

The directory `./data_path` contains:

- the preprocessed data files, `train_data` and `test_data`
- a vocabulary file `word2id.pkl` that maps each character to a unique id

For generating vocabulary file, please refer to the code in `data.py`.

Each data file should be in the following format:

```
中 B-LOC
国 I-LOC
很 O
大 O

句 O
子 O
结 O
束 O
是 O
空 O
行 O
```

(The second sample sentence, 句子结束是空行, reads "a sentence ends with a blank line".)

If you want to use your own dataset, please:

- transform your corpus to the above format

- generate a new vocabulary file
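The two steps above could look roughly like the sketch below: read files in the character/tag format, then build a character-to-id mapping and pickle it. The helpers `read_corpus` and `build_vocab`, the `<PAD>`/`<UNK>` entries and the `min_count` parameter are all hypothetical; the reference implementation for this repository is the code in `data.py`, which may differ.

```python
import io
import pickle
from collections import Counter


def read_corpus(lines):
    """Yield (chars, tags) pairs; a blank line marks the end of a sentence."""
    chars, tags = [], []
    for line in lines:
        line = line.strip()
        if line:
            char, tag = line.split()
            chars.append(char)
            tags.append(tag)
        elif chars:
            yield chars, tags
            chars, tags = [], []
    if chars:  # last sentence without a trailing blank line
        yield chars, tags


def build_vocab(sentences, min_count=1):
    """Map each character to a unique id; id 0 is reserved for padding (assumption)."""
    counts = Counter(ch for chars, _ in sentences for ch in chars)
    word2id = {"<PAD>": 0}
    for ch, n in sorted(counts.items()):
        if n >= min_count:
            word2id[ch] = len(word2id)
    word2id["<UNK>"] = len(word2id)  # fallback id for unseen characters (assumption)
    return word2id


sample = "中 B-LOC\n国 I-LOC\n很 O\n大 O\n\n句 O\n子 O\n"
sentences = list(read_corpus(io.StringIO(sample)))
word2id = build_vocab(sentences)
payload = pickle.dumps(word2id)  # the bytes that would be written to a .pkl file
print(len(sentences), len(word2id))  # 2 8
```

For a real corpus, `io.StringIO(sample)` would be replaced by an open file handle, and `payload` would be written to disk with `pickle.dump`.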

`python main.py --mode=train`

`python main.py --mode=test --demo_model=1521112368`

Please set the parameter `--demo_model` to the model that you want to test. `1521112368` is the model trained by me.

An official evaluation tool for computing metrics: here (click 'Instructions')

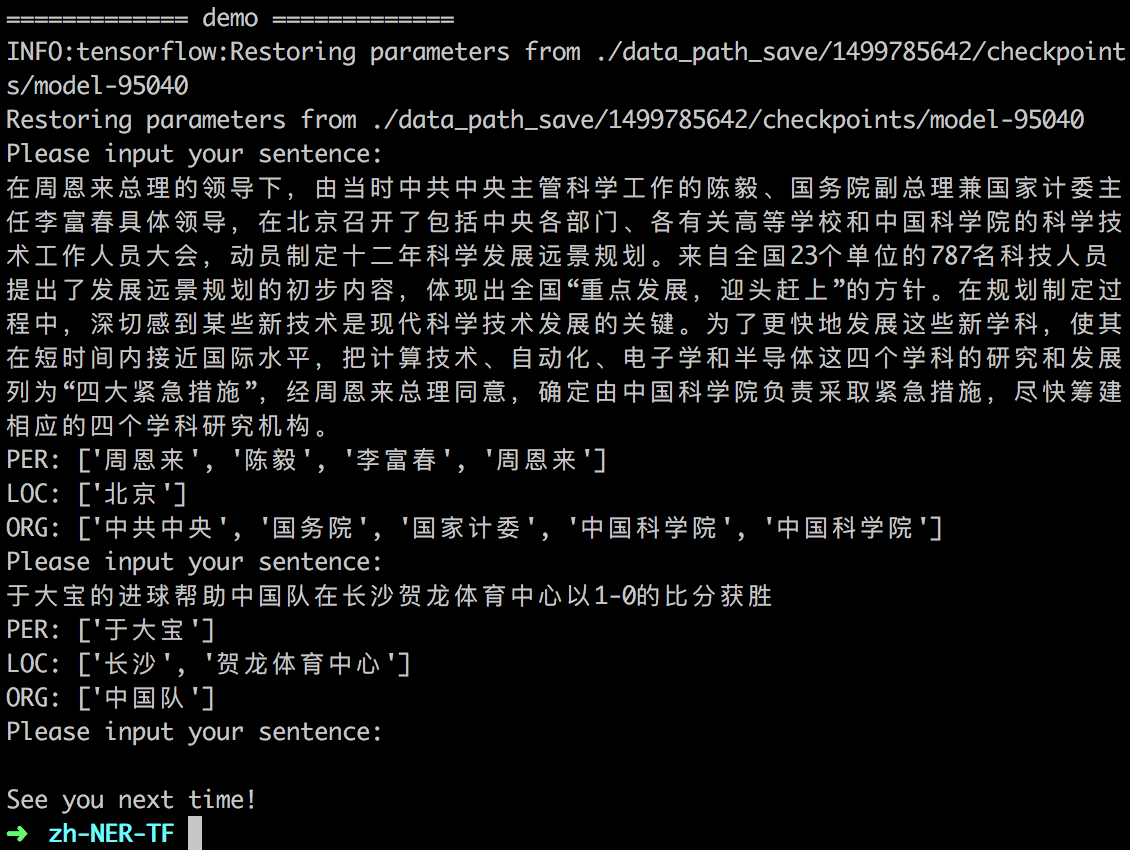

My test performance:

| P | R | F | F (PER) | F (LOC) | F (ORG) |
| --- | --- | --- | --- | --- | --- |
| 0.8945 | 0.8752 | 0.8847 | 0.8688 | 0.9118 | 0.8515 |
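As a quick sanity check on these numbers, the sketch below assumes F is the standard harmonic mean of precision and recall, and that entities are compared as exact (type, start, end) spans. This is an assumption about the metric, not a reimplementation of the official evaluation tool; the helper names and the toy spans are hypothetical.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def prf(gold, predicted):
    """Entity-level precision, recall and F1 over sets of (type, start, end) spans."""
    correct = len(gold & predicted)
    p = correct / len(predicted) if predicted else 0.0
    r = correct / len(gold) if gold else 0.0
    return p, r, f1_score(p, r)


# The reported overall P and R reproduce the reported overall F.
print(round(f1_score(0.8945, 0.8752), 4))  # 0.8847

# Toy example: one of two predicted spans matches one of two gold spans.
gold = {("LOC", 0, 2), ("PER", 5, 7)}
predicted = {("LOC", 0, 2), ("ORG", 3, 5)}
print(prf(gold, predicted))  # (0.5, 0.5, 0.5)
```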

`python main.py --mode=demo --demo_model=1521112368`

You can input one Chinese sentence and the model will return the recognition result.

[1] Bidirectional LSTM-CRF Models for Sequence Tagging

[2] Neural Architectures for Named Entity Recognition

[3] [Character-Based LSTM-CRF with Radical-Level Features for Chinese Named Entity Recognition](https://link.springer.com/chapter/10.1007/978-3-319-50496-4_20)