By Yichun Shi and Anil K. Jain

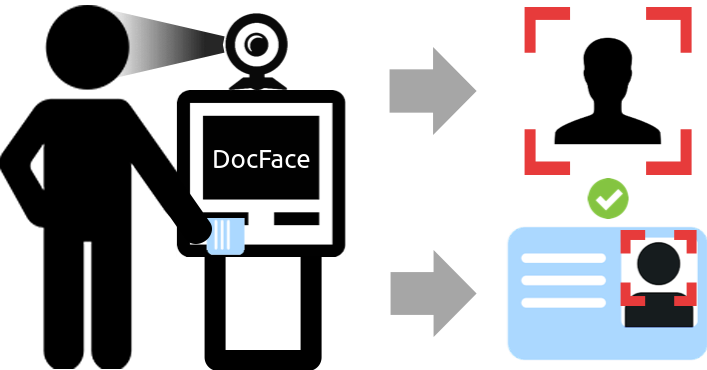

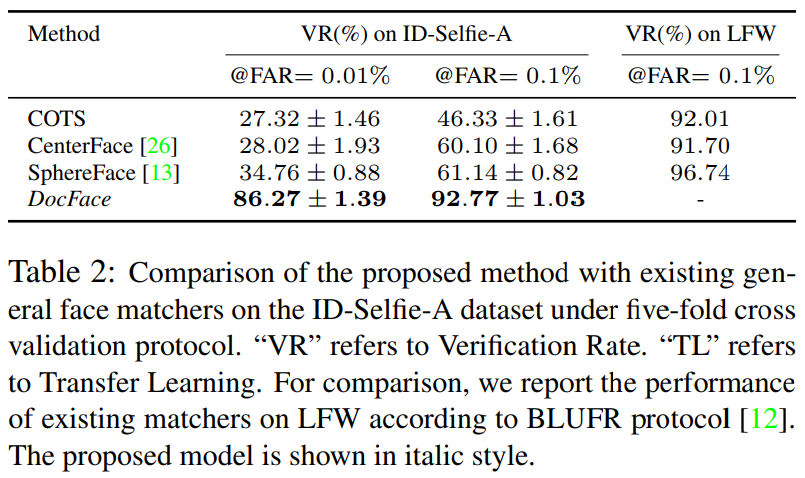

This repository includes the TensorFlow implementation of DocFace, a system proposed for matching ID photos to live face photos. DocFace is shown to significantly outperform general face matchers on the ID-Selfie matching problem. Here we provide the example training code and the pre-trained models from the paper. For preprocessing, we follow the SphereFace repository and align the face images using MTCNN; other face alignment methods can also be used. Because the dataset used in the paper is private, we cannot publish it here, but one can test the system on their own dataset.

If you find DocFace helpful to your research, please cite:

    @article{shi2018docface,
      title = {DocFace: Matching ID Document Photos to Selfies},
      author = {Shi, Yichun and Jain, Anil K.},
      booktitle = {arXiv:1805.02283},
      year = {2018}
    }

- Python 3 is required.
- TensorFlow r1.2 or a newer version is required.
- Run `pip install -r requirements.txt` to install the other dependencies.

Download the Ms-Celeb-1M and LFW datasets for training and testing the base model. Other datasets, such as CASIA-WebFace, can also be used for training. Because Ms-Celeb-1M is known to be a very noisy dataset, we use the clean list provided by Wu et al. Arrange the Ms-Celeb-1M and LFW datasets in the following structure, where each subfolder represents one subject:

    Aaron_Eckhart
        Aaron_Eckhart_0001.jpg

    Aaron_Guiel
        Aaron_Guiel_0001.jpg

    Aaron_Patterson
        Aaron_Patterson_0001.jpg

    Aaron_Peirsol
        Aaron_Peirsol_0001.jpg
        Aaron_Peirsol_0002.jpg
        Aaron_Peirsol_0003.jpg
        Aaron_Peirsol_0004.jpg

    ...
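To check that a dataset follows this one-folder-per-subject layout, a minimal sketch like the following could turn it into `(image_path, label)` pairs. The helper `list_dataset` and the accepted file extensions are hypothetical illustrations, not part of this repository's code.

```python
import os

# Assumed set of image extensions; adjust to match your data.
IMAGE_EXTS = {".jpg", ".jpeg", ".png"}


def list_dataset(root):
    """Hypothetical helper: map each subject subfolder to an integer label.

    Returns (samples, subjects), where samples is a list of
    (image_path, label) pairs and subjects[label] is the folder name.
    Files directly under root (not in a subfolder) are ignored.
    """
    subjects = sorted(
        d for d in os.listdir(root) if os.path.isdir(os.path.join(root, d))
    )
    samples = []
    for label, subject in enumerate(subjects):
        subject_dir = os.path.join(root, subject)
        for fname in sorted(os.listdir(subject_dir)):
            if os.path.splitext(fname)[1].lower() in IMAGE_EXTS:
                samples.append((os.path.join(subject_dir, fname), label))
    return samples, subjects
```

Sorting the folder names makes the label assignment deterministic across runs.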

For the ID-Selfie dataset, make sure each folder contains exactly two images and follows this structure:

    Subject1
        1.jpg
        2.jpg

    Subject2
        1.jpg
        2.jpg

    ...

Here, "1.jpg" is the ID photo and "2.jpg" is the selfie in each folder.
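Since every folder must hold exactly one ID photo and one selfie, a small check can catch malformed folders before training. The following is a minimal sketch; the function `list_id_selfie_pairs` is hypothetical and simply reports folders whose contents are not exactly `1.jpg` and `2.jpg`.

```python
import os


def list_id_selfie_pairs(root):
    """Hypothetical helper: collect (id_photo, selfie) path pairs.

    Each subject folder is expected to contain exactly "1.jpg" (ID photo)
    and "2.jpg" (selfie). Folders that break this rule are returned in
    `bad` instead of being silently skipped.
    """
    pairs, bad = [], []
    for subject in sorted(os.listdir(root)):
        subject_dir = os.path.join(root, subject)
        if not os.path.isdir(subject_dir):
            continue
        if sorted(os.listdir(subject_dir)) != ["1.jpg", "2.jpg"]:
            bad.append(subject)
            continue
        pairs.append((os.path.join(subject_dir, "1.jpg"),
                      os.path.join(subject_dir, "2.jpg")))
    return pairs, bad
```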

We align all the face images following SphereFace, and we recommend using their code for face alignment. Other face alignment methods are fine, but make sure all the images are resized to 96 x 112. An input size of 112 x 112 can also be used by changing "image_size" in the configuration files.
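For readers using their own landmark detector, the core of this kind of alignment is a similarity transform that maps five detected landmarks onto fixed reference positions in the 96 x 112 crop. The sketch below estimates that transform with the Umeyama least-squares method in NumPy. The reference coordinates are the values commonly found in SphereFace-style alignment code; treat them, and the 8-pixel horizontal shift for a 112-wide crop, as assumptions to verify against the alignment code you actually use. This is not the SphereFace implementation itself.

```python
import numpy as np

# Assumed reference (x, y) landmarks for a 96 x 112 (width x height) crop:
# left eye, right eye, nose tip, left mouth corner, right mouth corner.
REF_96x112 = np.array([
    [30.2946, 51.6963],
    [65.5318, 51.5014],
    [48.0252, 71.7366],
    [33.5493, 92.3655],
    [62.7299, 92.2041],
])


def reference_landmarks(width=96):
    """Center the reference points horizontally for a wider crop (e.g. 112)."""
    ref = REF_96x112.copy()
    ref[:, 0] += (width - 96) / 2.0
    return ref


def estimate_similarity(src, dst):
    """Least-squares similarity transform (Umeyama) mapping src -> dst.

    Returns a 2x3 matrix M such that dst ~= src @ M[:, :2].T + M[:, 2].
    """
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst
    # Cross-covariance between the centered point sets.
    cov = dst_c.T @ src_c / len(src)
    U, S, Vt = np.linalg.svd(cov)
    # Flip one axis if needed so R is a proper rotation, not a reflection.
    D = np.eye(2)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        D[1, 1] = -1.0
    R = U @ D @ Vt
    # Scale = (sum of weighted singular values) / (variance of src points).
    scale = np.trace(np.diag(S) @ D) / (src_c ** 2).sum(axis=1).mean()
    t = mu_dst - scale * R @ mu_src
    return np.hstack([scale * R, t[:, None]])
```

With `M = estimate_similarity(detected_landmarks, reference_landmarks())`, the matrix could then be passed to OpenCV as `cv2.warpAffine(image, M, (96, 112))` to produce the aligned crop.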

Note: In this part, we assume you are in the directory $DOCFACE_ROOT/

- Set up the dataset paths in `config/basemodel.py`:

      # Training dataset path
      train_dataset_path = '/path/to/msceleb1m/dataset/folder'
      # Testing dataset path
      test_dataset_path = '/path/to/lfw/dataset/folder'

- Due to the memory cost, the user may need more than one GPU to use a batch size of 256 on Ms-Celeb-1M. Specifically, we used four GTX 1080 Ti GPUs. In such cases, change the following entry in `config/basemodel.py`:

      # Number of GPUs
      num_gpus = 1

- Run the following command in the terminal:

      python src/train_base.py config/basemodel.py

  After training, a model folder will appear under `log/faceres_ms/`. We will use it for fine-tuning. If the training code is run more than once, multiple folders will appear, named by their timestamps. The user can also skip this part and use the pre-trained base model we provide.
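When several timestamped folders accumulate under `log/faceres_ms/`, the newest one is usually the one to restore for fine-tuning. The hypothetical helper below picks it by modification time instead of parsing the folder names, because we do not assume any particular timestamp format.

```python
import os


def latest_model_dir(log_dir):
    """Hypothetical helper: return the most recently modified subfolder."""
    candidates = [
        os.path.join(log_dir, name)
        for name in os.listdir(log_dir)
        if os.path.isdir(os.path.join(log_dir, name))
    ]
    if not candidates:
        raise FileNotFoundError(f"no model folders found in {log_dir}")
    return max(candidates, key=os.path.getmtime)
```

For example, `latest_model_dir('log/faceres_ms')` would give a candidate value for `restore_model` in `config/finetune.py`.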

- Set up the dataset paths and the pre-trained model path in `config/finetune.py`:

      # Training dataset path
      train_dataset_path = '/path/to/training/dataset/folder'
      # Testing dataset path
      test_dataset_path = '/path/to/testing/dataset/folder'
      ...
      # The model folder from which to restore the parameters
      restore_model = '/path/to/the/pretrained/model/folder'

- Run the following command in the terminal:

      python src/train_sibling.py config/finetune.py
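Because the configuration files are plain Python modules, a quick sanity check before launching training is to load the file and look for entries still holding the `/path/to/...` placeholders. The sketch below uses the standard `importlib.util` machinery; `load_config` and `unset_paths` are hypothetical names, and we do not claim this is how `src/train_sibling.py` itself reads its configuration.

```python
import importlib.util


def load_config(path, name="config"):
    """Execute a Python config file and return it as a module object."""
    spec = importlib.util.spec_from_file_location(name, path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)
    return module


def unset_paths(config, keys=("train_dataset_path", "test_dataset_path",
                              "restore_model")):
    """Return the keys whose values still start with the '/path/to/' placeholder.

    Keys missing from the config are not reported.
    """
    return [k for k in keys
            if str(getattr(config, k, "")).startswith("/path/to/")]
```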

Note: In this part, we assume you are in the directory $DOCFACE_ROOT/

To extract features using a pre-trained model (either the base network or the sibling network), prepare a .txt file listing the images. The images should be aligned in the same way as the training dataset. Then run the following command in the terminal:

    python src/extract_features.py \
        --model_dir /path/to/pretrained/model/dir \
        --image_list /path/to/imagelist.txt \
        --output /path/to/output.npy

Notice that when extracting features using a sibling network, we assume that the images are in the order template, selfie, template, selfie, ... One needs to change the code for other cases.
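A minimal sketch of both ends of this step follows. `write_image_list` writes the interleaved template, selfie order described above from a list of (ID photo, selfie) path pairs, and `pairwise_scores` splits the interleaved features back apart and scores every template against every selfie. Both helpers are hypothetical, and three details are assumptions rather than documented behavior: the list file holds one path per line, `output.npy` stores a `(2N, d)` array in list order, and cosine similarity is an appropriate score.

```python
import numpy as np


def write_image_list(pairs, list_path):
    """Write (id_photo, selfie) pairs as template, selfie, template, ..."""
    with open(list_path, "w") as f:
        for template, selfie in pairs:
            f.write(template + "\n")  # ID photo (template)
            f.write(selfie + "\n")    # selfie


def pairwise_scores(features):
    """Return an (N, N) cosine-similarity matrix from interleaved features.

    Row i is template i and column j is selfie j, so the diagonal holds the
    genuine (same-subject) scores and off-diagonal entries are impostors.
    """
    features = np.asarray(features, dtype=np.float64)
    templates, selfies = features[0::2], features[1::2]
    templates = templates / np.linalg.norm(templates, axis=1, keepdims=True)
    selfies = selfies / np.linalg.norm(selfies, axis=1, keepdims=True)
    return templates @ selfies.T
```

After extraction, `pairwise_scores(np.load('/path/to/output.npy'))` would then give the score matrix for the listed subjects.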

- Base model (unconstrained face matching): Google Drive | Baidu Yun
- Fine-tuned DocFace model: Google Drive | Baidu Yun

- Using our pre-trained base model, one should be able to achieve 99.67% on the standard LFW verification protocol and 99.60% on the BLUFR protocol. Similar results should be achievable by training the Face-ResNet on Ms-Celeb-1M with our code.

-

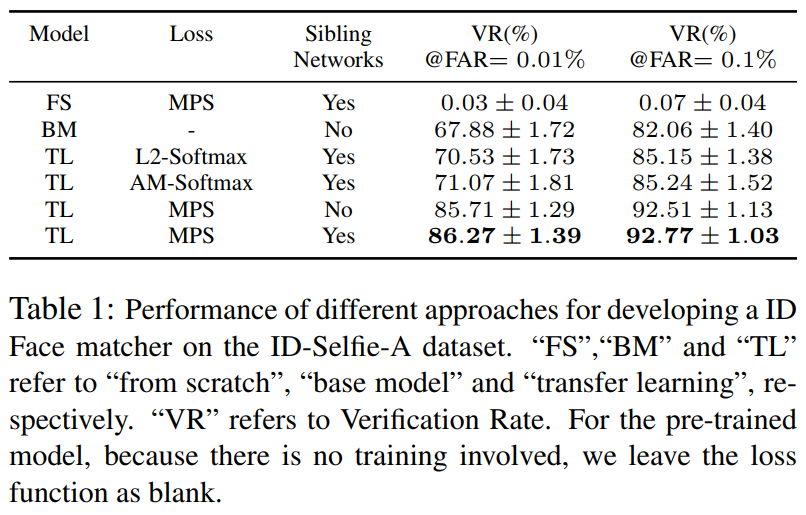

Using the proposed Max-margin Pairwise Score loss and sibling network, DocFace acheives a significant improvement compared with Base Model on our private ID-Selfie dataset after transfer learning:

Yichun Shi: shiyichu at msu dot edu