Image Super-Resolution via Iterative Refinement

Brief

This is an unofficial implementation of Image Super-Resolution via Iterative Refinement (SR3) in PyTorch.

Some implementation details are missing from the paper, so this implementation may differ from the actual SR3 structure.

- We used the ResNet block and channel concatenation style like vanilla DDPM.

- We used the attention mechanism on low-resolution features (16×16) like vanilla DDPM.

- We encode $\gamma$ as the FiLM structure did in WaveGrad, and embed it without affine transformation.
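The third point can be illustrated with a minimal pure-Python sketch. It assumes a WaveGrad-style sinusoidal encoding of the noise level and reads "without affine transformation" as adding a per-channel shift only, with no per-channel scale. The function names, the embedding size, and the direct use of the encoding as the shift (a real network would first pass it through a learned projection) are assumptions for illustration, not this repository's code.

```python
import math


def encode_noise_level(gamma, dim=8):
    """Sinusoidal encoding of a scalar noise level (hypothetical helper)."""
    count = dim // 2
    # Geometrically spaced frequencies, starting at 1 and decaying towards 1e-4.
    freqs = [math.exp(-math.log(1e4) * i / count) for i in range(count)]
    angles = [gamma * f for f in freqs]
    return [math.sin(a) for a in angles] + [math.cos(a) for a in angles]


def add_shift(features, shift):
    """Add one shift value per channel; no multiplicative scale is applied."""
    return [[v + s for v in channel] for channel, s in zip(features, shift)]


embedding = encode_noise_level(0.5, dim=4)
# Toy feature map: 4 channels with 2 values each.
features = [[0.0, 1.0], [2.0, 3.0], [4.0, 5.0], [6.0, 7.0]]
shifted = add_shift(features, embedding)
```

An affine (scale-and-shift) variant would also multiply each channel by a second value derived from the same embedding; the shift-only form keeps the conditioning purely additive.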

Status

Conditional generation (super resolution)

- 16×16 -> 128×128 on FFHQ-CelebaHQ

- 64×64 -> 512×512 on FFHQ-CelebaHQ

Unconditional generation

- 128×128 face generation on FFHQ

- 1024×1024 face generation by a cascade of 3 models

Training Step

- log / logger

- metrics evaluation

- multi-gpu support

- resume training / pretrained model

Results

The maximum reverse-step budget is currently set to 2000.

| Tasks/Metrics | SSIM(+) | PSNR(+) | FID(-) | IS(+) |
|---|---|---|---|---|
| 16×16 -> 128×128 | 0.675 | 23.26 | - | - |
| 64×64 -> 512×512 | - | - | | |
| 128×128 | - | - | | |
| 1024×1024 | - | - | | |
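For reference, the PSNR column follows the standard definition $10\log_{10}(\mathrm{MAX}^2/\mathrm{MSE})$. Below is a minimal pure-Python sketch, assuming 8-bit pixel values (peak 255) given as flat lists. The function name is hypothetical and this is not the repository's `eval.py`.

```python
import math


def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    if len(reference) != len(estimate) or not reference:
        raise ValueError("inputs must be non-empty and have the same length")
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / mse)


hr = [0, 64, 128, 255]   # toy "ground truth" pixels
sr = [0, 60, 130, 250]   # toy "super-resolved" pixels
score = psnr(hr, sr)
```

Higher values mean the output is closer to the reference, which is why the column is marked (+).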

-

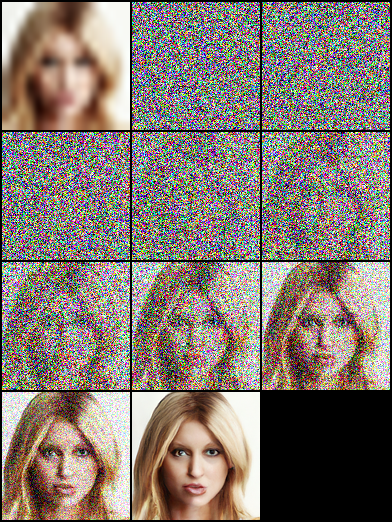

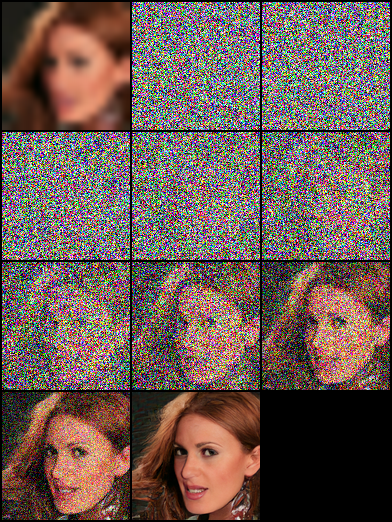

16×16 -> 128×128 on FFHQ-CelebaHQ [More Results]

|

|

|

|---|

-

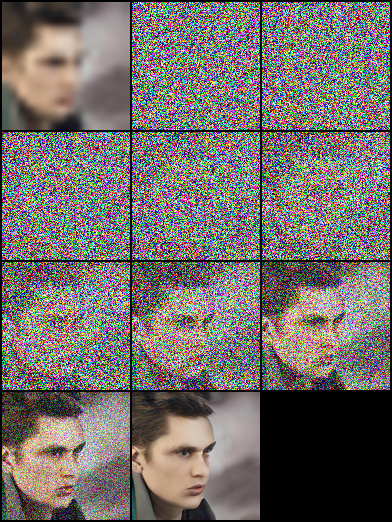

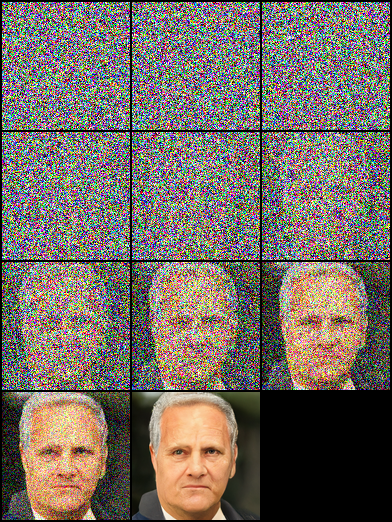

128×128 face generation on FFHQ [More Results]

|

|

|

|---|

Usage

Pretrained Model

This paper is based on "Denoising Diffusion Probabilistic Models", and we build both the DDPM and SR3 network structures, which use timesteps and gamma, respectively, as the model embedding input. In our experiments, the SR3 model achieves better visual results with the same number of reverse steps and the same learning rate. You can select the JSON files with the annotated suffix names to train the different models.
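To make the timesteps/gamma distinction concrete, here is a small sketch of how the two conditioning inputs could be drawn during training. It assumes a linear beta schedule with illustrative endpoints and follows the SR3 paper's idea of sampling a continuous noise level between two neighbouring schedule values. The function names and the beta constants are assumptions, not values taken from this repository.

```python
import random


def cumulative_alphas(num_steps, beta_start=1e-4, beta_end=2e-2):
    """gamma_t = prod_{i<=t} (1 - beta_i) for a linear beta schedule; gamma_0 = 1."""
    gammas = [1.0]
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / max(num_steps - 1, 1)
        gammas.append(gammas[-1] * (1.0 - beta))
    return gammas


def sample_conditioning(gammas, rng):
    """Return (t, gamma): a DDPM-style integer step and an SR3-style continuous level."""
    t = rng.randint(1, len(gammas) - 1)            # discrete timestep, bounds inclusive
    gamma = rng.uniform(gammas[t], gammas[t - 1])  # continuous level between neighbours
    return t, gamma


rng = random.Random(0)
gammas = cumulative_alphas(num_steps=2000)
t, gamma = sample_conditioning(gammas, rng)
```

A DDPM-style network would embed `t`, while an SR3-style network would embed `gamma` (or a function of it), so the model sees the noise level directly rather than an index into the schedule.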

| Tasks | Google Drive |
|---|---|
| 16×16 -> 128×128 on FFHQ-CelebaHQ | SR3 |
| 128×128 face generation on FFHQ | SR3 |

    # Download the pretrained model and edit "resume_state" in [sr|sample]_[ddpm|sr3]_[resolution option].json:
    "resume_state": [your pretrain model path]

We have not trained the models to convergence because of time constraints, so there is still a lot of room for optimization.

Data Preparation

New Start

If you don't have the data yet, you can prepare it with the following steps:

Download the dataset and prepare it in LMDB or PNG format using the script.

    # Resize to get 16×16 LR_IMGS and 128×128 HR_IMGS, then prepare 128×128 Fake SR_IMGS by bicubic interpolation
    python prepare.py --path [dataset root] --out [output root] --size 16,128 -l

Then you need to change the datasets config to your data path and image resolution:

    "datasets": {
        "train": {
            "dataroot": "dataset/ffhq_16_128", // [output root] in prepare.py script
            "l_resolution": 16, // low resolution to be super-resolved
            "r_resolution": 128, // high resolution
            "datatype": "lmdb", // lmdb or img, path of img files
        },
        "val": {
            "dataroot": "dataset/celebahq_16_128", // [output root] in prepare.py script
        }
    },

Own Data

You can also use your own image data with the following steps.

First, organize the image layout like this:

    # Set the paths for the high-resolution, low-resolution, and bicubic-interpolated images
    dataset/celebahq_16_128/
    ├── hr_128
    ├── lr_16
    └── sr_16_128

Then you need to change the dataset config to your data path and image resolution:

    "datasets": {
        "train|val": {
            "dataroot": "dataset/celebahq_16_128",
            "l_resolution": 16, // low resolution to be super-resolved
            "r_resolution": 128, // high resolution
            "datatype": "img", // lmdb or img, path of img files
        }
    },

Training/Resume Training

    # Use sr.py and sample.py to train the super resolution task and unconditional generation task, respectively.
    # Edit json files to adjust network structure and hyperparameters
    python sr.py -p train -c config/sr_sr3.json

Test/Evaluation

    # Edit json to add pretrain model path and run the evaluation
    python sr.py -p val -c config/sr_sr3.json

Evaluation Alone

    # Quantitative evaluation using SSIM/PSNR metrics on given dataset root
    python eval.py -p [dataset root]

Acknowledgements

Our work is based on the following theoretical works:

- Denoising Diffusion Probabilistic Models

- Image Super-Resolution via Iterative Refinement

- WaveGrad: Estimating Gradients for Waveform Generation

- Large Scale GAN Training for High Fidelity Natural Image Synthesis

We have also benefited a lot from the following projects: