A residual network (ResNet) implementation using the Keras 1.0 functional API. It works with both the Theano and TensorFlow backends and with both 'th' and 'tf' image dimension orderings.

- Deep Residual Learning for Image Recognition (the 2015 ImageNet competition winner)

- Identity Mappings in Deep Residual Networks

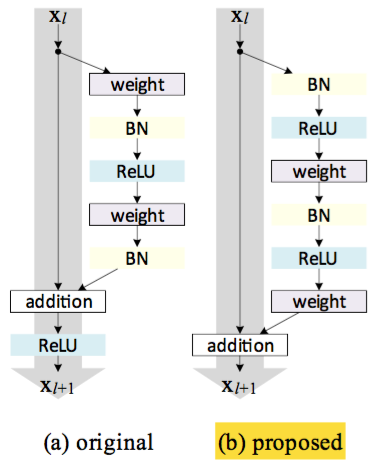

The residual blocks follow the improved pre-activation scheme proposed in Identity Mappings in Deep Residual Networks, as shown in figure (b).
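As a rough illustration of the pre-activation ordering (batch norm, then ReLU, then convolution, with an untouched identity path), here is a minimal NumPy sketch. It is not this repository's Keras code: the function names are hypothetical, 1x1 convolutions stand in for the 3x3 ones to keep the example short, batch norm has no learned scale or shift, and a channels-last ('tf') layout is assumed.

```python
import numpy as np


def batch_norm(x, eps=1e-5):
    # Normalize each channel over the batch and spatial axes
    # (assumption: no learned scale/shift, training-style statistics).
    mean = x.mean(axis=(0, 1, 2), keepdims=True)
    var = x.var(axis=(0, 1, 2), keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def relu(x):
    return np.maximum(x, 0.0)


def conv1x1(x, w):
    # x: (batch, height, width, c_in), w: (c_in, c_out).
    # A 1x1 convolution is a per-pixel matrix multiply over channels.
    return x @ w


def preact_residual_unit(x, w1, w2):
    # Pre-activation ordering: BN -> ReLU -> conv, applied twice.
    out = conv1x1(relu(batch_norm(x)), w1)
    out = conv1x1(relu(batch_norm(out)), w2)
    # The shortcut adds the raw input; nothing is applied after the sum.
    return x + out


rng = np.random.default_rng(0)
x = rng.normal(size=(2, 4, 4, 8))
w1 = rng.normal(size=(8, 8)) * 0.1
w2 = rng.normal(size=(8, 8)) * 0.1
y = preact_residual_unit(x, w1, w2)
print(y.shape)  # (2, 4, 4, 8)
```

Because no activation follows the addition, the input passes through the shortcut unchanged, which is the property the paper's figure (b) emphasizes.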

Both bottleneck and basic residual blocks are supported. To switch between them, simply provide the desired block function here

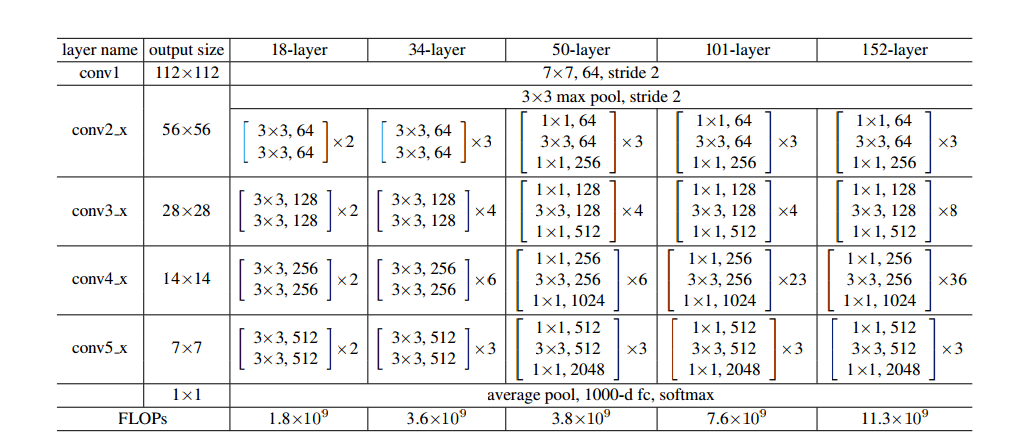

The architecture is based on the 50-layer example (snippet from the paper)

There are two key aspects to note here:

- conv2_1 has a stride of (1, 1), while the remaining conv layers have a stride of (2, 2) at the beginning of the block. This fact is expressed in the following lines.

- At the end of the first skip connection of a block, there is a mismatch in num_filters, width and height at the merge layer. This is addressed in `_shortcut` by using a 1x1 conv with an appropriate stride. For the remaining cases, the input is merged directly with the residual block as identity.
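The two points above can be sketched as a small planning function that lists, for every residual block, its stride and whether the shortcut can be an identity or needs a 1x1 conv. This is a hedged, framework-free illustration rather than the repository's `_shortcut` code: `plan_blocks`, `base_filters` and `expansion` are hypothetical names, and the stem is assumed to output `base_filters` channels.

```python
def plan_blocks(repetitions, base_filters=64, expansion=1):
    """Return (stage, block, stride, shortcut) for every residual block.

    expansion=1 models basic blocks; expansion=4 models bottleneck blocks,
    whose output has four times as many channels (assumption for this sketch).
    """
    plan = []
    in_channels = base_filters  # channels leaving the stem (assumed)
    for stage, reps in enumerate(repetitions):
        out_channels = base_filters * (2 ** stage) * expansion
        for block in range(reps):
            # Only the first block of a stage downsamples, and conv2_x never does.
            stride = 2 if (block == 0 and stage > 0) else 1
            # The shortcut needs a 1x1 conv when the spatial size or the
            # channel count differs between input and residual output.
            mismatch = stride != 1 or in_channels != out_channels
            shortcut = "conv1x1" if mismatch else "identity"
            plan.append((stage + 2, block + 1, stride, shortcut))
            in_channels = out_channels
    return plan


for stage, block, stride, shortcut in plan_blocks([2, 2, 2, 2]):
    print(f"conv{stage}_{block}: stride={stride}, shortcut={shortcut}")
```

With basic blocks, conv2_1 keeps an identity shortcut; with `expansion=4` it needs the 1x1 conv even at stride 1, because the channel count changes.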

- Use the ResNetBuilder build methods to build standard ResNet architectures with your own input shape. It automatically calculates the paddings and the final pooling layer filters for you.

- Use the generic build method to set up your own architecture.

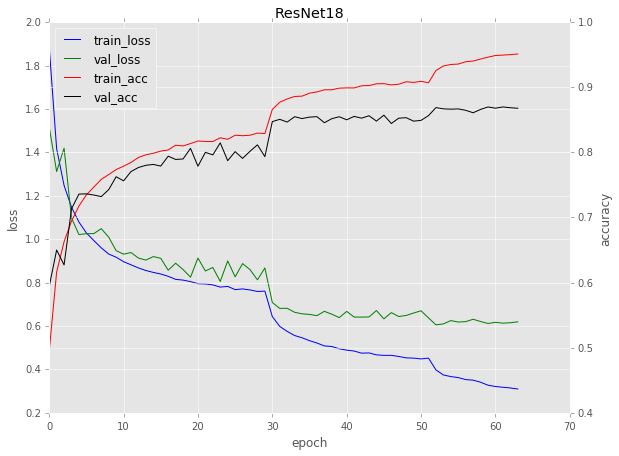

Includes a CIFAR-10 training example. It achieves ~86% accuracy using the ResNet18 model.

Note that ResNet18 as implemented doesn't really seem appropriate for CIFAR-10 as the last two residual stages end up as all 1x1 convolutions from downsampling (stride). This is worse for deeper versions. A smaller, modified ResNet-like architecture achieves ~92% accuracy (see gist).
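The downsampling arithmetic behind this note can be checked with a short sketch. It assumes the ImageNet-style layout (a stride-2 stem conv, a stride-2 max pool, then four stages with strides 1, 2, 2, 2) and 'same'-style padding, so a stride-s layer maps a side length n to ceil(n / s). The helper name `spatial_sizes` is hypothetical.

```python
import math


def spatial_sizes(input_size, strides):
    # Side length of the feature map after each strided layer,
    # assuming 'same'-style padding: n -> ceil(n / stride).
    sizes = [input_size]
    for stride in strides:
        sizes.append(math.ceil(sizes[-1] / stride))
    return sizes


layers = ["input", "stem conv", "max pool", "conv2_x", "conv3_x", "conv4_x", "conv5_x"]
strides = [2, 2, 1, 2, 2, 2]

for name, size in zip(layers, spatial_sizes(32, strides)):
    print(f"{name}: {size}x{size}")

print(spatial_sizes(32, strides))   # [32, 16, 8, 8, 4, 2, 1]
print(spatial_sizes(224, strides))  # [224, 112, 56, 56, 28, 14, 7]
```

Under these assumptions a 32x32 CIFAR-10 image is already down to 2x2 in conv4_x and 1x1 in conv5_x, whereas a 224x224 ImageNet image still has a 7x7 map in the last stage.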