Solutions for the Kaggle TensorFlow Speech Recognition Challenge: https://www.kaggle.com/c/tensorflow-speech-recognition-challenge

(sudo) pip install -e .

data_dir=~/Data/TF_Speech/speech_commands

test_dir="/home/ftli/Data/TF_Speech/test"

batch_size=48

- Sainath T N, Parada C. Convolutional neural networks for small-footprint keyword spotting[C]//Sixteenth Annual Conference of the International Speech Communication Association. 2015.

# conv 0.77

bash run.sh --mode train --model conv \

--data_dir $data_dir --test_dir $test_dir \

--hparams "" --train-opts "" \

--suffix "" --batch_size $batch_size \

--opt "{ name: Adam, params: {} }" \

--training_steps "25000,25000" --skip-infer false \

--learning_rate "0.01,0.001" --use-gpu true --feature_scaling ''
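The `--training_steps` and `--learning_rate` options above are paired comma-separated lists. A minimal sketch of how such a pair could be read as a stage-by-stage schedule, assuming the same convention as the TensorFlow speech_commands example (each learning rate applies for the matching number of steps); the helper names `parse_schedule` and `learning_rate_at` are hypothetical and not taken from this repository:

```python
def parse_schedule(training_steps, learning_rate):
    """Parse paired comma-separated lists into per-stage steps and rates."""
    steps = [int(s) for s in training_steps.split(",")]
    rates = [float(r) for r in learning_rate.split(",")]
    if len(steps) != len(rates):
        raise ValueError("training_steps and learning_rate must have the same length")
    return steps, rates


def learning_rate_at(step, steps, rates):
    """Return the learning rate for a 1-based global training step."""
    boundary = 0
    for stage_steps, rate in zip(steps, rates):
        boundary += stage_steps
        if step <= boundary:
            return rate
    raise ValueError("step is beyond the end of the schedule")


steps, rates = parse_schedule("25000,25000", "0.01,0.001")
print(learning_rate_at(1, steps, rates))
print(learning_rate_at(25001, steps, rates))
```

Under this reading, the command above trains for 50000 steps in total: the first 25000 at 0.01 and the remaining 25000 at 0.001.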

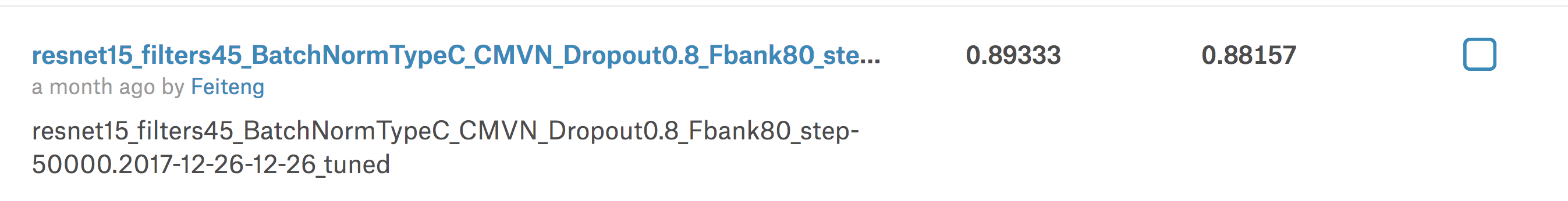

2. improved-resnet LeaderBoard 0.89 (basic 0.85)

- Tang R, Lin J. Deep Residual Learning for Small-Footprint Keyword Spotting[J]. arXiv preprint arXiv:1710.10361, 2017.

- FeatureScale (centered mean, divided by variance, e.g. cmvn) + BatchNorm + Fbank80: LB 0.89333

- others:

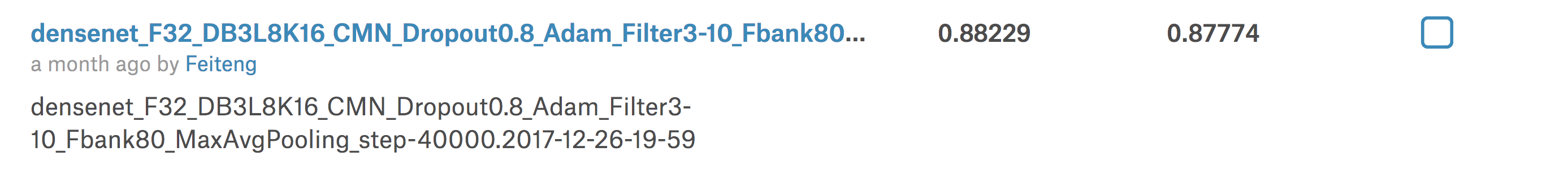

LB 0.88617 (during the competition, the LB was 0.88), commit: 922aa0d85

- Fbank 80

- dropout

- feature scale: centered mean (e.g. cmn)

- First Conv: kernel_size=(3, 10), strides=(1, 4)

- use MaxPool + AvgPool
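The feature scaling options mentioned in this section (cmn, cmvn) can be sketched with NumPy as below. This is an illustrative sketch only, assuming per-utterance normalization over the time axis of a `(num_frames, num_bins)` feature matrix; the function names are hypothetical and the repository's own implementation may differ. Note that CMVN is conventionally implemented by dividing by the standard deviation, which is what the sketch does:

```python
import numpy as np


def cmn(features):
    """Cepstral mean normalization: subtract the per-bin mean over time."""
    return features - features.mean(axis=0, keepdims=True)


def cmvn(features, eps=1e-8):
    """Mean and variance normalization: zero mean, unit variance per bin."""
    centered = features - features.mean(axis=0, keepdims=True)
    # eps guards against division by zero for constant bins.
    return centered / (features.std(axis=0, keepdims=True) + eps)


# Example: 98 frames of 80-dimensional Fbank-like features (random stand-in data).
rng = np.random.default_rng(0)
fbank = rng.normal(loc=3.0, scale=2.0, size=(98, 80))
print(cmn(fbank).mean(axis=0).round(6).max())
print(cmvn(fbank).std(axis=0).round(6).min())
```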

# LB 0.886

dropout=0.8

hparams="resnet_filters=45,resnet_type=c,add_batch_norm=True,add_first_batch_norm=False,freeze_first_batch_norm=False"

suffix="15_filters45_BatchNormTypeC_CMN_Dropout${dropout}_Fbank80"

bash run.sh --mode train --model resnet \

--data_dir $data_dir --test_dir $test_dir \

--hparams "$hparams" --train-opts "--dropout_prob $dropout --dct_coefficient_count 80 --feature_type fbank" \

--suffix "$suffix" --batch_size $batch_size \

--opt "{ name: Adam, params: {} }" \

--training_steps "25000,25000" --skip-infer false \

--learning_rate "0.01,0.001" --use-gpu true --feature_scaling 'cmn'

3. improved-densenet LeaderBoard 0.88 (basic 0.86)

- Huang G, Liu Z, Weinberger K Q, et al. Densely connected convolutional networks[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2017, 1(2): 3.

for l in 8;do

for k in 16;do

hparams="add_first_batch_norm=True,freeze_first_batch_norm=False,inital_filters=32,dense_blocks=3,num_layers=${l},growth_rate=${k},add_bottleneck_layer=False,theta=1"

suffix="_F32_DB3L${l}K${k}_CMN"

echo "========= $suffix ========="

bash run.sh --mode train --model densenet \

--data_dir $data_dir --test_dir ${test_dir} \

--hparams "$hparams" \

--suffix "$suffix" \

--opt "{ name: Adam, params: {} }" \

--training_steps "10000,10000,10000,10000" --skip-infer false \

--learning_rate "0.01,0.001,0.0005,0.0001" --use-gpu true --feature_scaling 'cmn' || exit 1

done

done

- Tried changing speed / pitch as augmentation, but it brought only a small gain.
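The notes do not say how speed or pitch was changed. One simple possibility, shown here purely as an assumption, is resampling the waveform by linear interpolation, which changes speed and pitch together, and then padding or cropping back to a fixed clip length (16000 samples is assumed here for a 1-second clip at 16 kHz). The function names are hypothetical:

```python
import numpy as np


def change_speed(samples, factor):
    """Resample by linear interpolation; factor > 1 plays faster (and higher)."""
    n_out = int(round(len(samples) / factor))
    # Positions in the original signal that each output sample is read from.
    src_positions = np.arange(n_out) * factor
    return np.interp(src_positions, np.arange(len(samples)), samples)


def fit_length(samples, target_len):
    """Crop or zero-pad at the end so the clip has exactly target_len samples."""
    if len(samples) >= target_len:
        return samples[:target_len]
    return np.pad(samples, (0, target_len - len(samples)))


# Example: a 1-second 440 Hz tone at an assumed 16 kHz sample rate.
clip = np.sin(2 * np.pi * 440 * np.arange(16000) / 16000)
faster = fit_length(change_speed(clip, 1.1), 16000)
slower = fit_length(change_speed(clip, 0.9), 16000)
print(faster.shape, slower.shape)
```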