Welcome to the Awesome-LLM-Quantization repository! This is a curated list of resources related to quantization techniques for Large Language Models (LLMs). Quantization is a crucial step in deploying LLMs on resource-constrained devices, such as mobile phones or edge devices, by reducing the model's size and computational requirements.

| Title & Author & Link | Summary |

|---|---|

| ICLR23 GPTQ: Accurate Post-Training Quantization for Generative Pre-trained Transformers Elias Frantar, Saleh Ashkboos, Torsten Hoefler, Dan Alistarh Github |

This paper is the first to apply post-training quantization to GPT models. GPTQ is a one-shot weight quantization method based on approximate second-order (Hessian) information. It reduces the bit-width to 3-4 bits per weight, and also reports extreme experiments with 2-bit and ternary quantization. #PTQ #3-bit #4-bit #2-bit |

| ICML23 SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models Guangxuan Xiao, Ji Lin, Mickael Seznec, Hao Wu, Julien Demouth, Song Han Github |

SmoothQuant is a post-training quantization framework targeting W8A8 (INT8). In general, weights are easier to quantize than activations. It proposes migrating the quantization difficulty from activations to weights through a mathematically equivalent transformation. #PTQ #W8A8 #Outlier |
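The equivalent transformation can be illustrated with a small sketch. It assumes the per-channel smoothing factor `s_j = max|X_j|^alpha / max|W_j|^(1 - alpha)` and uses plain Python lists; the helper names `matmul` and `smooth` and the toy values are made up for illustration and are not taken from the SmoothQuant code base.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def smooth(x, w, alpha=0.5):
    """Rescale input channel j by s_j so that (x / s) @ (s * w) equals x @ w.

    Assumes every channel has a nonzero activation and weight maximum.
    """
    n_in = len(w)
    act_max = [max(abs(row[j]) for row in x) for j in range(n_in)]
    w_max = [max(abs(v) for v in w[j]) for j in range(n_in)]
    s = [a ** alpha / m ** (1 - alpha) for a, m in zip(act_max, w_max)]
    x_s = [[row[j] / s[j] for j in range(n_in)] for row in x]  # activations shrink
    w_s = [[v * s[j] for v in w[j]] for j in range(n_in)]      # weights absorb s
    return x_s, w_s, s


# Toy activations: channel 1 carries the outliers.
x = [[0.1, 20.0, -0.3], [0.2, -35.0, 0.1]]
w = [[0.5, -0.2], [0.1, 0.3], [-0.4, 0.6]]

x_s, w_s, s = smooth(x, w)
y_ref = matmul(x, w)
y_smooth = matmul(x_s, w_s)  # same output up to floating-point rounding
```

The outlier channel of `x_s` has a much smaller range than in `x`, while the layer output is unchanged, which is what makes the activations easier to quantize.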

| MLSys24_BestPaper AWQ: Activation-aware Weight Quantization for LLM Compression and Acceleration Ji Lin, Jiaming Tang, Haotian Tang, Shang Yang, Wei-Ming Chen, Wei-Chen Wang, Guangxuan Xiao, Xingyu Dang, Chuang Gan, Song Han Github |

Activation-aware Weight Quantization (AWQ) is a low-bit, weight-only quantization method targeting edge devices with W4A16. It is motivated by the observation that protecting only 1% of salient weights can retain the performance. AWQ then searches for the optimal per-channel scaling. #PTQ #W4A16 #Outlier |

| AAAI24 Oral OWQ: Outlier-Aware Weight Quantization for Efficient Fine-Tuning and Inference of Large Language Models Changhun Lee, Jungyu Jin, Taesu Kim, Hyungjun Kim, Eunhyeok Park [Github](https://github.com/xvyaward/owq) |

Outlier-aware weight quantization (OWQ) aims to minimize the memory footprint through low-precision representation. It uses Hessian matrices to identify a small, structured subset of weights and keeps this subset in high precision, which makes it a mixed-precision quantization method. The final model uses 3.1 bits and achieves performance comparable to 4-bit OPTQ. Moreover, it incorporates Weak Column Tuning using PEFT to further boost quality on zero-shot tasks. #PTQ #Mixed-Precision #3-bit #PEFT |

| EMNLP23 Outlier Suppression+: Accurate quantization of large language models by equivalent and optimal shifting and scaling Xiuying Wei, Yunchen Zhang, Yuhang Li, Xiangguo Zhang, Ruihao Gong, Jinyang Guo, Xianglong Liu Github |

In PTQ of LLMs, outliers are concentrated in specific channels and are asymmetric across channels. To address this, Outlier Suppression+ (OS+), a PTQ framework, uses channel-wise shifting for asymmetry and channel-wise scaling for concentration. The authors also propose a fast and stable scheme to calculate the shifting and scaling hyperparameters. (1) Channel-wise shifting reshapes asymmetric distributions into symmetric ones. (2) Channel-wise scaling scales the outliers down to a threshold, resulting in a unified range across channels. (3) A unified migration pattern ensures equivalence to the original results. #PTQ #Outlier |

| EMNLP23 LLM-FP4: 4-Bit Floating-Point Quantized Transformers Shih-yang Liu, Zechun Liu, Xijie Huang, Pingcheng Dong, Kwang-Ting Cheng Github |

LLM-FP4 quantizes both weights and activations to FP4 in a post-training manner. Compared with INT quantization, FP quantization is more flexible and can better handle long-tail or bell-shaped distributions. FP quantization is sensitive to the exponent bits and clipping range, so LLM-FP4 searches for the optimal quantization parameters. The paper also observes that activations show high inter-channel variance and low intra-channel variance. Thus, LLM-FP4 employs per-channel activation quantization. #PTQ #FP4 |

| Arxiv23 LLM-QAT: Data-Free Quantization Aware Training for Large Language Models Zechun Liu, Barlas Oguz, Changsheng Zhao, Ernie Chang, Pierre Stock, Yashar Mehdad, Yangyang Shi, Raghuraman Krishnamoorthi, Vikas Chandra |

LLM-QAT is a data-free distillation method that leverages generations produced by the pre-trained model, which also serves as the teacher. Besides quantizing the weights and activations, LLM-QAT also quantizes the KV cache, which helps increase throughput and support long sequences. The experiments focus on 4-bit quantization and are conducted on a single 8-GPU training node. #QAT #KVQuant #KD |

| Arxiv23 BitNet: Scaling 1-bit Transformers for Large Language Models Hongyu Wang, Shuming Ma, Li Dong, Shaohan Huang, Huaijie Wang, Lingxiao Ma, Fan Yang, Ruiping Wang, Yi Wu, Furu Wei Github |

BitNet focuses on QAT for 1-bit LLMs. It employs low-precision binary weights and quantized 8-bit activations, while maintaining high precision for the optimizer states and gradients during training. BitLinear is proposed as a plug-and-play module that replaces all linear modules in the Transformer. For training, (1) it employs the straight-through estimator (STE) to approximate the gradient; (2) it maintains a latent weight in a high-precision format to accumulate the parameter updates; (3) it employs a large learning rate to counter the biased gradient. #QAT #1-bit |
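Training points (1) and (2) can be sketched in a few lines. This is a simplified illustration, not BitLinear itself: it assumes a single output neuron, uses a plain sign function without weight centering or scaling, and skips activation quantization. The names `binarize` and `ste_step` are hypothetical.

```python
def binarize(latent):
    """Map each high-precision latent weight to +1 or -1."""
    return [1.0 if w >= 0 else -1.0 for w in latent]


def ste_step(latent, x, target, lr):
    """One SGD step on L = 0.5 * (y - target)^2 with y = sum(sign(w_i) * x_i)."""
    w_bin = binarize(latent)                      # forward pass uses binary weights
    y = sum(w * xi for w, xi in zip(w_bin, x))
    err = y - target
    # STE: treat d(w_bin)/d(latent) as 1, so the gradient w.r.t. the binary
    # weights is applied directly to the latent weights.
    grad = [err * xi for xi in x]
    new_latent = [w - lr * g for w, g in zip(latent, grad)]
    return new_latent, 0.5 * err * err


latent = [0.25, -0.125]   # high-precision latent weights
x = [1.0, 1.0]
latent1, loss0 = ste_step(latent, x, target=2.0, lr=0.125)
latent2, loss1 = ste_step(latent1, x, target=2.0, lr=0.125)
```

In this toy run the second latent weight crosses zero after one update, so its binarized value flips from -1 to +1 and the loss drops from 2.0 to 0.0. The update accumulates in the latent weight even though the forward pass only ever sees binary values.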

| NeurIPS23 QLoRA: Efficient Finetuning of Quantized LLMs Tim Dettmers, Artidoro Pagnoni, Ari Holtzman, Luke Zettlemoyer Github |

QLoRA aims to reduce the memory usage of LLM fine-tuning by combining LoRA with a 4-bit quantized pretrained model. Specifically, QLoRA introduces (1) 4-bit NormalFloat (NF4), a data type that is information-theoretically optimal for normally distributed weights; (2) double quantization to reduce the memory footprint; (3) paged optimizers to manage memory spikes. #NF4 #4-bit #LoRA |

| NeurIPS23 QuIP: 2-Bit Quantization of Large Language Models with Guarantees Jerry Chee, Yaohui Cai, Volodymyr Kuleshov, Christopher De Sa Github |

This paper introduces Quantization with Incoherence Processing (QuIP), which is based on the insight that quantization benefits from incoherent weight and Hessian matrices. It consists of two steps: (1) an adaptive rounding procedure that minimizes a quadratic proxy objective; (2) pre- and post-processing that ensures weight and Hessian incoherence using random orthogonal matrices. QuIP makes two-bit LLM compression viable for the first time. #PTQ #2-bit #Rotation |

| ICLR24 Spotlight OmniQuant: Omnidirectionally Calibrated Quantization for Large Language Models Wenqi Shao, Mengzhao Chen, Zhaoyang Zhang, Peng Xu, Lirui Zhao, Zhiqian Li, Kaipeng Zhang, Peng Gao, Yu Qiao, Ping Luo Github |

OmniQuant is a framework that sits between PTQ and QAT, aiming to attain the performance of QAT while maintaining the time and data efficiency of PTQ. OmniQuant requires only 1.6h for LLaMA-7B, while LLM-QAT requires 90h and SmoothQuant requires 10min. Specifically, OmniQuant freezes the original full-precision weights and trains only a few learnable quantization parameters, including Learnable Weight Clipping (LWC) and Learnable Equivalent Transformation (LET). #PTQ #QAT #Learnable #Transformation |

| ICLR24 PB-LLM: Partially Binarized Large Language Models Yuzhang Shang, Zhihang Yuan, Qiang Wu, Zhen Dong Github |

PB-LLM is a mixed-precision quantization framework that filters out a small ratio of salient weights and keeps them at higher bit-width. It analyzes performance under both PTQ and QAT settings. Under PTQ, it reconstructs the binarized weight matrix in the manner of GPTQ. Under QAT, it freezes the salient weights during training. #PTQ #QAT #1-bit |

| ICML24 BiLLM: Pushing the Limit of Post-Training Quantization for LLMs Wei Huang, Yangdong Liu, Haotong Qin, Ying Li, Shiming Zhang, Xianglong Liu, Michele Magno, Xiaojuan Qi Github |

BiLLM is the first 1-bit post-training quantization framework for pretrained LLMs. BiLLM splits the weights into salient and non-salient ones. For the salient weights, the authors propose a binary residual approximation strategy. For the non-salient weights, they propose an optimal splitting search to group and binarize them independently. BiLLM achieves 8.41 perplexity on LLaMA2-70B with only 1.08 bits. #PTQ #1-bit |

| ICML24 AQLM: Extreme Compression of Large Language Models via Additive Quantization Github |

|

| ACL24 BitDistiller: Unleashing the Potential of Sub 4-bit LLMs via Self-Distillation Dayou Du, Yijia Zhang, Shijie Cao, Jiaqi Guo, Ting Cao, Xiaowen Chu, Ningyi Xu Github |

BitDistiller is a QAT framework that uses knowledge distillation to boost performance at sub-4-bit precision. BitDistiller (1) incorporates a tailored asymmetric quantization and clipping technique to preserve the fidelity of the quantized weights and (2) proposes a Confidence-Aware Kullback-Leibler Divergence (CAKLD) as the self-distillation loss. Experiments cover 3-bit and 2-bit configurations. #QAT #2-bit #3-bit #KD |

| ACL24 DB-LLM: Accurate Dual-Binarization for Efficient LLMs Hong Chen, Chengtao Lv, Liang Ding, Haotong Qin, Xiabin Zhou, Yifu Ding, Xuebo Liu, Min Zhang, Jinyang Guo, Xianglong Liu, Dacheng Tao |

DB-LLM introduces Flexible Dual Binarization (FDB), which splits 2-bit quantized weights into two independent sets of binaries (similar to BiLLM). It also proposes Deviation-Aware Distillation to focus differently on various samples. DB-LLM is a QAT framework targeting the W2A16 setting. #QAT #Binarization #2-bit |

| ACL24 Findings IntactKV: Improving Large Language Model Quantization by Keeping Pivot Tokens Intact Ruikang Liu, Haoli Bai, Haokun Lin, Yuening Li, Han Gao, Zhengzhuo Xu, Lu Hou, Jun Yao, Chun Yuan |

This paper unveils a previously overlooked type of outliers in LLMs. Such outliers are found to allocate most of the attention scores on initial tokens of input, termed as pivot tokens, which are crucial to the performance of quantized LLMs. Given that, this paper proposes IntactKV to generate the KV cache of pivot tokens losslessly from the full-precision model. The approach is simple and easy to combine with existing quantization solutions with no extra inference overhead. Besides, IntactKV can be calibrated as additional LLM parameters to boost the quantized LLMs further with minimal training costs. #Weight #Outliers |

| Arxiv24 SliM-LLM: Salience-Driven Mixed-Precision Quantization for Large Language Models Wei Huang, Haotong Qin, Yangdong Liu, Yawei Li, Xianglong Liu, Luca Benini, Michele Magno, Xiaojuan Qi Github |

This paper presents Salience-Driven Mixed-Precision Quantization for LLMs, called SliM-LLM, targeting 2-bit mixed-precision quantization. Specifically, SliM-LLM involves two techniques: (1) Salience-Determined Bit Allocation (SBA): the best bit assignment for each group is found by minimizing the KL divergence between the original output and the quantized output. (2) Salience-Weighted Quantizer Calibration: SliM-LLM searches for the calibration parameters while considering the element-wise salience within the group. #MixedPrecision #2-bit |

| Arxiv24 AdpQ: A Zero-shot Calibration Free Adaptive Post Training Quantization Method for LLMs |

This paper formulates the outlier weight identification problem in PTQ in terms of the concept of shrinkage in statistical ML. By applying an Adaptive LASSO regression model, AdpQ ensures that the distribution of the quantized weights stays close to that of the original weights, thus eliminating the need for calibration data. The LASSO regression employs L1 regularization and minimizes the KL divergence between the original weights and the quantized ones. The experiments mainly focus on 3-bit and 4-bit quantization. #PTQ #Regression |

| Arxiv24 Integer Scale: A Free Lunch for Faster Fine-grained Quantization for LLMs Qingyuan Li, Ran Meng, Yiduo Li, Bo Zhang, Yifan Lu, Yerui Sun, Lin Ma, Yuchen Xie |

Integer Scale is a PTQ framework that requires no extra calibration and maintains performance. It is a fine-grained quantization method and can be used plug-and-play. Specifically, it reorders the sequence of the costly I32toF32 type conversions. #PTQ #W4A16 #W4A8 |

| Arxiv24 Outliers and Calibration Sets have Diminishing Effect on Quantization of Modern LLMs Davide Paglieri, Saurabh Dash, Tim Rocktäschel, Jack Parker-Holder |

This paper evaluates the effect of the calibration set on LLM quantization performance, especially on hidden activations. A calibration set can distort the quantization range and negatively impact performance. The paper shows that different models respond differently to quantization: (1) OPT is highly susceptible to outliers under varying calibration sets. (2) Newer models such as Llama-2-7B, Llama-3-8B, and Mistral-7B are more robust. These findings suggest a shift in PTQ strategies, with more emphasis on optimizing inference speed than on outlier preservation. #Analysis #Evaluation #Finding |

| Arxiv24 Effective Interplay between Sparsity and Quantization: From Theory to Practice Simla Burcu Harma, Ayan Chakraborty, Elizaveta Kostenok, Danila Mishin, et al. Github |

This paper examines the interplay between sparsity and quantization and evaluates whether their combination impacts the final performance of LLMs. It proves theoretically that applying sparsity before quantization is the optimal order, minimizing the computation error. The experiments involve OPT, LLaMA, and ViT. Findings: (1) sparsity and quantization are not orthogonal; (2) the interaction between sparsity and quantization significantly harms performance, with quantization error playing the dominant role in the degradation. #Theory #Sparsity |

| NeurIPS24 Oral PV-Tuning: Beyond Straight-Through Estimation for Extreme LLM Compression Github |

|

| Arxiv24 I-LLM: Efficient Integer-Only Inference for Fully-Quantized Low-Bit Large Language Models Xing Hu, Yuan Chen, Dawei Yang, Zhihang Yuan, Jiangyong Yu, Chen Xu, Sifan Zhou |

I-LLM is a PTQ framework targeting integer-only quantization. The authors identify large fluctuations of activations across channels and tokens. (1) It develops Fully-Smooth Block-Reconstruction (FSBR) to aggressively smooth inter-channel variations of all activations and weights. (2) It proposes Dynamic Integer-only MatMul (DI-MatMul) to dynamically quantize the input and output with integer-only operations. (3) It proposes a series of bit-shift-based operators to execute non-linear operations, including DI-ClippedSoftmax, DI-Exp, and DI-Normalization. #PTQ #Int-Only #W4A4 |

| ICCAD24 Fast and Efficient 2-bit LLM Inference on GPU: 2/4/16-bit in a Weight Matrix with Asynchronous Dequantization Jinhao Li, Jiaming Xu, Shiyao Li, Shan Huang, Jun Liu, Yaoxiu Lian, Guohao Dai |

This paper first identifies three challenges: (1) uneven distribution within weights; (2) speed degradation caused by sparse outliers; (3) time-consuming dequantization on GPUs. To tackle these, it proposes three techniques: (1) intra-weight mixed-precision quantization; (2) exclusive 2-bit sparse outliers; (3) asynchronous dequantization. #QAT #2-bit |

| Arxiv24 Mixture of Scales: Memory-Efficient Token-Adaptive Binarization for Large Language Models Dongwon Jo, Taesu Kim, Yulhwa Kim, Jae-Joon Kim |

The paper proposes a novel binarization technique called Mixture of Scales (BinaryMoS) for large language models (LLMs). Unlike conventional binarization methods that use a single scaling factor, BinaryMoS employs multiple scaling experts that are dynamically combined based on the input token to generate token-adaptive scaling factors. This token-adaptive approach enhances the representational power of binarized LLMs while maintaining the memory efficiency of traditional binarization techniques. Experiments show that BinaryMoS outperforms previous binarization methods and even 2-bit quantization approaches in various NLP tasks, all while maintaining similar model size to static binarization techniques. #1-bit #PTQ #QAT |

| Arxiv24 QuaRot: Outlier-Free 4-Bit Inference in Rotated LLMs Saleh Ashkboos, Amirkeivan Mohtashami, Maximilian L. Croci, Bo Li, Martin Jaggi, Dan Alistarh, Torsten Hoefler, James Hensman Github |

This paper introduces a novel rotation-based quantization scheme that can quantize the weights, activations, and KV cache of LLMs to 4 bits. QuaRot rotates the LLM to remove outliers from the hidden state. It applies randomized Hadamard transformations to the weight matrices without changing the model output. Applying this transformation to the attention module also enables KV cache quantization. #PTQ #4bit #Rotation |
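Why a rotation can remove outliers without changing the output can be seen in a toy example. The sketch below assumes a plain (non-randomized) orthonormal Hadamard matrix built with the Sylvester construction, whereas QuaRot uses randomized Hadamard transforms; the helper names and values are illustrative only.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def hadamard(n):
    """Orthonormal Sylvester Hadamard matrix; n must be a power of two."""
    h = [[1.0]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-v for v in row] for row in h]
    scale = n ** -0.5
    return [[v * scale for v in row] for row in h]


h = hadamard(4)
h_t = [list(col) for col in zip(*h)]

x = [[8.0, 0.0, 0.0, 0.0]]  # one token with a single outlier channel
w = [[1.0, 2.0], [0.5, -1.0], [-2.0, 0.0], [3.0, 1.0]]

x_rot = matmul(x, h)        # rotated activations: the outlier is spread out
w_rot = matmul(h_t, w)      # inverse rotation folded into the weights
y_ref = matmul(x, w)
y_rot = matmul(x_rot, w_rot)  # equals y_ref because h @ h_t is the identity
```

The rotated activation is `[4.0, 4.0, 4.0, 4.0]`, so its largest magnitude is halved while the layer output stays the same, giving a quantizer a narrower range to cover.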

| Arxiv24 SpinQuant: LLM Quantization with Learned Rotations Zechun Liu, Changsheng Zhao, et al. |

Rotating activation or weight matrices helps remove outliers and benefits quantization (rotational invariance property). The authors first identify a collection of applicable rotation parameterizations that lead to identical outputs in the full-precision Transformer. They find that some random rotations lead to better quantization than others. SpinQuant is then proposed to optimize the rotation matrices on a validation dataset, using the Cayley SGD method to optimize each rotation matrix on the Stiefel manifold. #PTQ #Rotation #4bit |

| NeurIPS24 Oral DuQuant: Distributing Outliers via Dual Transformation Makes Stronger Quantized LLMs Haokun Lin, Haobo Xu, Yichen Wu, Jingzhi Cui, Yingtao Zhang, Linzhan Mou, Linqi Song, Zhenan Sun, Ying Wei |

This paper finds that massive outliers exist at the down_proj layer of FFN modules. It introduces DuQuant, a novel approach that uses rotation and permutation transformations to more effectively mitigate both massive and normal outliers. First, DuQuant constructs rotation matrices, using specific outlier dimensions as prior knowledge, to redistribute outliers to adjacent channels by block-wise rotation. Second, it employs a zigzag permutation to balance the distribution of outliers across blocks, thereby reducing block-wise variance. A subsequent rotation further smooths the activation landscape, enhancing model performance. DuQuant establishes new state-of-the-art baselines for 4-bit weight-activation quantization across various model types and downstream tasks. #PTQ #Rotation #4bit #WA |

| Under Review Scaling Laws for Mixed Quantization in Large Language Models Zeyu Cao, Cheng Zhang, Pedro Gimenes, Jianqiao Lu, Jianyi Cheng, Yiren Zhao |

The paper examines the scaling law of mixed-precision quantization in large language models (LLMs). The key findings are: (1) As model size increases, the maximum achievable quantization ratio (ratio of low-precision to total parameters) increases under a fixed performance target. This suggests larger models can tolerate more aggressive quantization. (2) Finer granularity of quantization (e.g. per matrix multiplication vs per layer) allows for higher quantization ratios while maintaining performance. This is due to the irregular distribution of outliers in weights and activations. The authors formulate these observations as "LLM-MPQ Scaling Laws" and discuss the implications for future AI hardware design and efficient AI algorithms. #mixed #scaling-law |

| EMNLP 2024 Findings SQFT: Low-cost Model Adaptation in Low-precision Sparse Foundation Models J. Pablo Muñoz, Jinjie Yuan, Nilesh Jain Hardware-Aware-Automated-Machine-Learning |

SQFT is an end-to-end solution for low-precision sparse parameter-efficient fine-tuning of large pre-trained models. It includes stages for sparsification, quantization, fine-tuning with neural low-rank adapter search (NLS), and sparse parameter-efficient fine-tuning (SparsePEFT) with optional quantization-awareness. SQFT addresses the challenges of merging sparse/quantized weights with dense adapters by preserving sparsity and handling different numerical precisions. #Quantization #Pruning #PEFT |

| Under review ARB-LLM: Alternating Refined Binarizations for Large Language Models Zhiteng Li, Xianglong Yan, Tianao Zhang, Haotong Qin, Dong Xie, Jiang Tian, Zhongchao Shi, Linghe Kong, Yulun Zhang, Xiaokang Yang |

ARB-LLM proposes a novel 1-bit post-training quantization (PTQ) technique for large language models (LLMs). It uses an alternating refined binarization (ARB) algorithm to progressively update the binarization parameters and incorporates calibration data and row-column-wise scaling factors to enhance performance. The paper also introduces a refined strategy to combine salient column bitmap and group bitmap (CGB) to improve bitmap utilization. #LLM #Binarization #PTQ |

| Under review PrefixQuant: Static Quantization Beats Dynamic through Prefixed Outliers in LLMs Mengzhao Chen, Yi Liu, Jiahao Wang, Yi Bin, Wenqi Shao, Ping Luo PrefixQuant |

PrefixQuant isolates high-frequency outlier tokens and prefixes them in the KV cache to enable efficient static quantization. It prevents the generation of outlier tokens during inference, eliminating the need for per-token dynamic quantization and significantly improving the model's perplexity and accuracy without retraining. Experiments demonstrate its superior performance and inference speed over existing methods like QuaRot. #Quantization #LLMs #PrefixQuant #StaticQuantization |

| Under Review Scaling Laws for Precision Tanishq Kumar, Zachary Ankner, Benjamin F. Spector, Blake Bordelon, Niklas Muennighoff, Mansheej Paul, Cengiz Pehlevan, Christopher Ré, Aditi Raghunathan |

This paper introduces "precision-aware" scaling laws for both training and inference in language models, considering the impact of low-precision training and inference on model quality and cost. Key findings include: (1) Lower precision training reduces the model's effective parameter count; (2) Post-training quantization degradation increases with training data, potentially making additional data harmful; (3) A unified scaling law predicts loss degradation from training and inference with varied precisions; (4) Training larger models in lower precision may be compute-optimal. The authors validate their findings using over 465 pretraining runs on models up to 1.7B parameters trained on up to 26B tokens. #Quantization #ScalingLaws #LowPrecisionTraining #LanguageModels |

| EMNLP2024 main VPTQ: Extreme Low-bit Vector Post-Training Quantization for Large Language Models Yifei Liu, Jicheng Wen, Yang Wang, Shengyu Ye, Li Lyna Zhang, Ting Cao, Cheng Li, Mao Yang |

VPTQ is a novel post-training quantization (PTQ) method for extremely low-bit (e.g., 2-bit) quantization of Large Language Models (LLMs). Unlike traditional scalar quantization, VPTQ leverages vector quantization (VQ) to compress vectors into indices using lookup tables, mitigating the limitations of low-bit scalar representation. It employs second-order optimization to formulate the VQ problem, guiding algorithm design and enabling channel-independent optimization for granular VQ. An efficient codebook initialization algorithm is also proposed. Furthermore, VPTQ incorporates residual and outlier quantization to improve accuracy and compression. Experimental results on LLaMA-2, Mistral-7B, and LLaMA-3 demonstrate significant perplexity reduction (0.01-0.34 on LLaMA-2, 0.38-0.68 on Mistral-7B, 4.41-7.34 on LLaMA-3) and accuracy improvements (0.79-1.5% on LLaMA-2, 1% on Mistral-7B, 11-22% on LLaMA-3 on QA tasks) compared to state-of-the-art (SOTA) 2-bit quantization methods. VPTQ achieves a 1.6-1.8× increase in inference throughput with only 10.4-18.6% of the quantization algorithm execution time. The code is available on GitHub. #PTQ #VectorQuantization #Low-bit #LLM |
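The basic idea of compressing vectors into lookup-table indices can be sketched as follows. This shows only plain nearest-neighbor vector quantization with a fixed, hand-written codebook; it does not implement VPTQ's second-order optimization, codebook initialization, or residual and outlier handling, and all names and values are hypothetical.

```python
def nearest(vec, codebook):
    """Index of the codebook entry closest to vec in squared L2 distance."""
    dists = [sum((a - b) ** 2 for a, b in zip(vec, c)) for c in codebook]
    return dists.index(min(dists))


def vq_encode(weights, codebook):
    """Split a flat weight list into vectors and store one index per vector."""
    dim = len(codebook[0])
    vectors = [weights[i:i + dim] for i in range(0, len(weights), dim)]
    return [nearest(v, codebook) for v in vectors]


def vq_decode(indices, codebook):
    """Rebuild approximate weights by looking each index up in the codebook."""
    return [v for i in indices for v in codebook[i]]


# Hypothetical codebook with 4 entries of dimension 2.
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0], [1.0, -1.0]]
weights = [0.9, 1.2, -0.1, 0.05, -1.1, 0.8, 0.7, -1.3]

indices = vq_encode(weights, codebook)
restored = vq_decode(indices, codebook)

# A 4-entry codebook needs 2 index bits, shared by 2 weights per vector
# (the storage cost of the codebook itself is not counted here).
index_bits_per_weight = (len(codebook) - 1).bit_length() / len(codebook[0])
```

Here eight weights are stored as four 2-bit indices, that is 1 index bit per weight, which illustrates how vector quantization reaches bit-widths that are awkward for scalar formats.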

| Arxiv23 RPTQ: Reorder-based Post-training Quantization for Large Language Models Zhihang Yuan, Liu Niu, Jiawei Liu, Wenyu Liu, Xinggang Wang, Yuzhang Shang, Guangyu Sun, Qiang Wu, Jiaxiang Wu, Bingzhe Wu |

This paper addresses the challenge of post-training quantization (PTQ) for Large Language Models (LLMs), focusing on the efficient quantization of activations. The authors observe that the difficulty lies not just in outliers but primarily in the varying ranges across different channels within the activations. Existing methods struggle with this channel-wise range variation, leading to significant quantization errors. To overcome this, RPTQ (Reorder-based Post-training Quantization) is proposed. RPTQ employs a reorder-based approach, clustering channels with similar value ranges before quantization. This allows for the use of different quantization parameters for different clusters, minimizing errors caused by the diverse ranges. Crucially, to avoid the computational overhead of explicit reordering, RPTQ fuses the reordering operation into the existing layer normalization operation and the weights in linear layers. The effectiveness of RPTQ is demonstrated through experiments, achieving a significant milestone by successfully using 3-bit activation quantization in LLMs for the first time. This leads to substantial memory savings; for example, quantizing OPT-175B can reduce memory consumption by up to 80%. #PTQ #Low-bit #LLM |
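The benefit of grouping channels with similar ranges can be illustrated with a small sketch. It makes two simplifying assumptions: channels are grouped by sorting on their range width and splitting evenly (a stand-in for a proper clustering step), and quantization is simple asymmetric min-max rounding. The fusion of the reordering into layer normalization and linear weights is not shown, and all function names are hypothetical.

```python
def quantize(values, lo, hi, bits):
    """Asymmetric min-max uniform quantization followed by dequantization."""
    levels = 2 ** bits - 1
    scale = (hi - lo) / levels  # assumes hi > lo
    return [round((v - lo) / scale) * scale + lo for v in values]


def channel(x, j):
    """Column j of a list-of-rows activation matrix."""
    return [row[j] for row in x]


def cluster_channels(x, n_clusters):
    """Group channels of similar range: sort by range width, then split evenly."""
    n = len(x[0])

    def width(j):
        return max(channel(x, j)) - min(channel(x, j))

    order = sorted(range(n), key=width)
    size = -(-n // n_clusters)  # ceiling division
    return [order[i:i + size] for i in range(0, n, size)]


def sq_error(x, groups, bits):
    """Total squared error when each group shares one quantization range."""
    total = 0.0
    for group in groups:
        vals = [v for j in group for v in channel(x, j)]
        deq = quantize(vals, min(vals), max(vals), bits)
        total += sum((a - b) ** 2 for a, b in zip(vals, deq))
    return total


# Toy activations (rows = tokens): channels 0 and 2 are narrow, 1 and 3 are wide.
x = [[0.1, -10.0, -0.05, 8.0], [-0.1, 10.0, 0.1, -9.0], [0.05, 4.0, -0.1, 9.0]]

groups = cluster_channels(x, 2)
err_tensor = sq_error(x, [[0, 1, 2, 3]], bits=3)  # one range for everything
err_clustered = sq_error(x, groups, bits=3)       # one range per cluster
```

With a single shared range, the narrow channels are rounded onto a grid sized for the wide ones, so `err_tensor` is far larger than `err_clustered`; the permutation in `groups` is what a reorder step would apply to channels.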

| ICLR24 AffineQuant: Affine Transformation Quantization for Large Language Models Yuexiao Ma, Huixia Li, Xiawu Zheng, Feng Ling, Xuefeng Xiao, Rui Wang, Shilei Wen, Fei Chao, Rongrong Ji |

This paper introduces AffineQuant, a novel Post-Training Quantization (PTQ) method for Large Language Models (LLMs) that utilizes affine transformations to minimize quantization errors. Unlike existing PTQ methods which primarily focus on scaling transformations, AffineQuant directly optimizes the affine transformation matrix applied to the weights before quantization. This broader optimization scope significantly reduces quantization errors, especially in low-bit scenarios (e.g., 4-bit). To ensure the invertibility of the transformation matrix during optimization and maintain the equivalence between pre- and post-quantization outputs, the authors introduce a gradual mask optimization method. This method starts by optimizing the diagonal elements of the matrix and progressively extends to off-diagonal elements, aligning with the Levy-Desplanques theorem to guarantee invertibility. Experimental results on various LLMs (including LLaMA2-7B and LLaMA-30B) and datasets demonstrate significant performance improvements compared to existing methods like OmniQuant, particularly in low-bit quantization. For instance, on LLaMA2-7B with W4A4 quantization, AffineQuant achieves a C4 perplexity of 15.76, a 2.26 point improvement over OmniQuant's 18.02. On zero-shot tasks with LLaMA-30B using 4/4-bit quantization, AffineQuant achieves 58.61% accuracy, a 1.98% improvement over OmniQuant, setting a new state-of-the-art for PTQ in LLMs. This advancement enables efficient deployment of large models on resource-constrained devices like edge devices. #PTQ #4bit #W4A4 |

| EMNLP24 DL-QAT: Weight-Decomposed Low-Rank Quantization-Aware Training for Large Language Models Wenjing Ke, Zhe Li, Dong Li, Lu Tian, Emad Barsoum |

Improving the inference efficiency of Large Language Models (LLMs) is a critical area of research. Post-training quantization (PTQ) is a popular technique, but it often struggles at low bit-widths, particularly on downstream tasks. Quantization-aware training (QAT) can alleviate this problem, but it requires significantly more computational resources. To tackle this, the paper introduces Weight-Decomposed Low-Rank Quantization-Aware Training (DL-QAT), which keeps the advantages of QAT while training less than 1% of the total parameters. Specifically, it introduces a group-specific quantization magnitude to adjust the overall scale of each quantization group and, within each group, uses LoRA matrices to update the weight size and direction in the quantization space. The method is validated on the LLaMA and LLaMA2 model families, showing significant improvements over the baseline across different quantization granularities. For instance, on 3-bit LLaMA-7B it outperforms the previous state-of-the-art method by 4.2% on MMLU. Its quantization results on pre-trained models also surpass previous QAT methods. #QAT #3bit #LoRA |

| EMNLP24 LLMC: Benchmarking Large Language Model Quantization with a Versatile Compression Toolkit Ruihao Gong, Yang Yong, Shiqiao Gu, Yushi Huang, Chengtao Lv, Yunchen Zhang, Dacheng Tao, Xianglong Liu Github |

The substantial computational and memory requirements of large language models (LLMs) limit their widespread adoption. Quantization, a key compression technique, can mitigate these demands by compressing and accelerating LLMs, albeit with potential risks to accuracy. Numerous studies have aimed to minimize this accuracy loss, but their quantization configurations differ and cannot be fairly compared. This paper presents LLMC, a plug-and-play compression toolkit for exploring the impact of quantization fairly and systematically. LLMC integrates dozens of algorithms, models, and hardware backends, and extends from integer to floating-point quantization, from LLMs to vision-language models (VLMs), from fixed-bit to mixed precision, and from quantization to sparsification. Powered by this toolkit, the benchmark covers three key aspects: calibration data, algorithms (three strategies), and data formats, providing insights and detailed analyses for further research and practical guidance for users. #Benchmark #Toolkit #Quantization |

| EMNLP24 QDyLoRA: Quantized Dynamic Low-Rank Adaptation for Efficient Large Language Model Tuning Hossein Rajabzadeh, Mojtaba Valipour, Tianshu Zhu, Marzieh S. Tahaei, Hyock Ju Kwon, Ali Ghodsi, Boxing Chen, Mehdi Rezagholizadeh |

Fine-tuning large language models requires huge GPU memory, which restricts the use of larger models. While QLoRA, the quantized version of Low-Rank Adaptation, significantly alleviates this issue, finding an efficient LoRA rank is still challenging. Moreover, QLoRA is trained on a pre-defined rank and therefore cannot be reconfigured for lower ranks without further fine-tuning steps. This paper proposes QDyLoRA (Quantized Dynamic Low-Rank Adaptation), an efficient quantization approach for dynamic low-rank adaptation. Motivated by Dynamic LoRA, QDyLoRA can efficiently fine-tune LLMs on a set of pre-defined LoRA ranks. QDyLoRA enables fine-tuning Falcon-40b for ranks 1 to 64 on a single 32 GB V100 GPU through one round of fine-tuning. Experimental results show that QDyLoRA is competitive with QLoRA and outperforms it when employing its optimal rank. #QLoRA #PEFT #DynamicRank |

| EMNLP24 Findings KV Cache Compression, But What Must We Give in Return? A Comprehensive Benchmark of Long Context Capable Approaches Jiayi Yuan, Hongyi Liu, Shaochen Zhong, Yu-Neng Chuang, Songchen Li, Guanchu Wang, Duy Le, Hongye Jin, Vipin Chaudhary, Zhaozhuo Xu, Zirui Liu, Xia Hu Github |

Long context capability is crucial for LLMs to handle tasks like book summarization and code assistance. However, transformer-based LLMs face challenges with long context due to KV cache size and attention complexity. This paper provides a comprehensive benchmark of 10+ state-of-the-art approaches across seven categories, including KV cache quantization, token dropping, prompt compression, and hybrid architectures. The study reveals previously unknown phenomena and offers insights for developing more efficient long context-capable LLMs. #Benchmark #KVCache #LongContext |

| EMNLP24 Findings RoLoRA: Fine-tuning Rotated Outlier-free LLMs for Effective Weight-Activation Quantization Xijie Huang, Zechun Liu, Shih-Yang Liu, Kwang-Ting Cheng |

This paper introduces RoLoRA, the first LoRA-based scheme that applies rotation for outlier elimination in weight-activation quantization. While LoRA methods have been successful with weight-only quantization, applying weight-activation quantization to the LoRA pipeline faces challenges due to activation outliers. RoLoRA uses rotation to eliminate outliers and proposes rotation-aware fine-tuning to preserve the outlier-free characteristics of the rotated model. The method shows significant improvements across various LLM series (LLaMA2, LLaMA3, LLaVA-1.5) and tasks, achieving up to 29.5% absolute accuracy gain with 4-bit weight-activation quantized LLaMA2-13B on commonsense reasoning tasks compared to the LoRA baseline. The approach also demonstrates effectiveness with Large Multimodal Models and compatibility with advanced LoRA variants. #PEFT #LoRA #Rotation #W4A4 |
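
Why a rotation can remove outliers without changing the layer output can be seen in a small example. The sketch below is an illustration only: it assumes a normalized 4x4 Hadamard matrix as the rotation (chosen here because it is a convenient orthogonal matrix, not because the summary specifies it), and the activation and weight values are made up.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


# Normalized 4x4 Hadamard matrix: orthogonal and symmetric, so H @ H = I.
H = [[0.5 * s for s in row] for row in (
    (1, 1, 1, 1),
    (1, -1, 1, -1),
    (1, 1, -1, -1),
    (1, -1, -1, 1),
)]

x = [[8.0, 0.0, 0.0, 0.0]]   # one activation row with a single outlier channel
W = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]

x_rot = matmul(x, H)          # the outlier is spread evenly across channels
W_rot = matmul(H, W)          # H is symmetric, so this is H^T W
y_ref = matmul(x, W)          # original layer output
y_rot = matmul(x_rot, W_rot)  # same output, because H H^T = I
```

The rotated activations have a maximum magnitude of 4 instead of 8, which leaves more of the quantization range for the remaining values, while the product with the rotated weights is unchanged.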

| EMNLP24 Findings MobileQuant: Mobile-friendly Quantization for On-device Language Models Fuwen Tan, Royson Lee, Łukasz Dudziak, Shell Xu Hu, Sourav Bhattacharya, Timothy Hospedales, Georgios Tzimiropoulos, Brais Martinez |

Large language models (LLMs) have revolutionized language processing but face significant deployment challenges on edge devices due to substantial memory, energy, and compute costs. While existing works have found partial success in quantizing LLMs to lower bitwidths, quantizing activations below 16 bits often leads to large computational overheads or considerable accuracy drops. MobileQuant introduces a simple post-training quantization method that extends previous weight-equivalent transformation work by jointly optimizing weight transformation and activation range parameters in an end-to-end manner. The approach demonstrates superior capabilities by achieving near-lossless quantization on a wide range of LLM benchmarks, reducing latency and energy consumption by 20%-50%, requiring limited compute budget, and ensuring compatibility with mobile-friendly compute units like Neural Processing Units (NPUs). Specifically, 8-bit activations are shown to be particularly attractive for on-device deployment, enabling full exploitation of mobile-friendly hardware. #OnDeviceLLM #Quantization #MobileDeploy #NPU |

| EMNLP24 Findings Optimize Weight Rounding via Signed Gradient Descent for the Quantization of LLMs Wenhua Cheng, Weiwei Zhang, Haihao Shen, Yiyang Cai, Xin He, Lv Kaokao, Yi Liu |

Large Language Models (LLMs) have demonstrated exceptional proficiency in language-related tasks, but their deployment poses significant challenges due to substantial memory and storage requirements. Weight-only quantization has emerged as a promising solution to address these challenges. Previous research suggests that fine-tuning through up and down rounding can enhance performance. This study introduces SignRound, a method that utilizes signed gradient descent (SignSGD) to optimize rounding values and weight clipping within just 200 steps. SignRound integrates the advantages of Quantization-Aware Training (QAT) and Post-Training Quantization (PTQ), achieving exceptional results across 2 to 4 bits while maintaining low tuning costs and avoiding additional inference overhead. For example, SignRound achieves absolute average accuracy improvements ranging from 6.91% to 33.22% at 2 bits, as measured by the average zero-shot accuracy across 11 tasks. It also demonstrates strong generalization to recent models, achieving near-lossless 4-bit quantization in most scenarios. #WeightQuantization #SignSGD #LowBitQuantization #QAT |
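
The core loop of rounding optimization with signed gradient descent can be sketched on a toy problem. This is a simplified illustration, not the SignRound implementation: it tunes only a per-weight rounding offset `v` in [-0.5, 0.5] for a single weight vector, omits the weight-clipping parameters, uses a straight-through estimator for the gradient, and all function names, the learning rate, and the data are assumptions.

```python
def sign(g):
    """Return -1, 0, or 1 according to the sign of g."""
    return (g > 0) - (g < 0)


def dequantized(w, v, scale, qmax):
    """Round w/scale + v to an integer level, clip, and map back to floats."""
    return [max(-qmax - 1, min(qmax, round(wi / scale + vi))) * scale
            for wi, vi in zip(w, v)]


def output_error(samples, w, wq):
    """Squared error between full-precision and quantized dot products."""
    return sum(
        sum(xi * (qi - wi) for xi, qi, wi in zip(x, wq, w)) ** 2
        for x in samples
    )


def tune_rounding(samples, w, bits=4, steps=200, lr=0.005):
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(wi) for wi in w) / qmax
    v = [0.0] * len(w)                      # v = 0 is plain round-to-nearest
    rtn_loss = output_error(samples, w, dequantized(w, v, scale, qmax))
    best_v, best_loss = list(v), rtn_loss
    for _ in range(steps):
        wq = dequantized(w, v, scale, qmax)
        grad = [0.0] * len(w)
        for x in samples:
            diff = sum(xi * (qi - wi) for xi, qi, wi in zip(x, wq, w))
            for i, xi in enumerate(x):
                # Straight-through estimator: treat d(wq_i)/d(v_i) as `scale`.
                grad[i] += 2.0 * diff * xi * scale
        # Signed step, then keep each offset inside [-0.5, 0.5].
        v = [max(-0.5, min(0.5, vi - lr * sign(gi))) for vi, gi in zip(v, grad)]
        loss = output_error(samples, w, dequantized(w, v, scale, qmax))
        if loss < best_loss:                # remember the best offsets seen
            best_v, best_loss = list(v), loss
    return best_v, rtn_loss, best_loss


# Toy calibration inputs and weights (illustrative values only).
samples = [[1.0, 2.0, -1.0], [0.5, -1.0, 2.0]]
weights = [0.3, -0.7, 0.45]
best_v, rtn_loss, best_loss = tune_rounding(samples, weights, bits=2)
```

Because only the sign of the gradient is used, every offset moves by the same fixed amount per step, so the number of steps needed to cross a rounding boundary is bounded by the step size.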

| EMNLP24 Findings Beyond Perplexity: Multi-dimensional Safety Evaluation of LLM Compression Zhichao Xu, Ashim Gupta, Tao Li, Oliver Bentham, Vivek Srikumar |

As model compression techniques enable large language models (LLMs) to be deployed in real-world applications, the impact on safety requires systematic assessment. This study investigates the effects of LLM compression along four dimensions: degeneration harm, representational harm, dialect bias, and language modeling performance. Examining various compression techniques, including unstructured pruning, semi-structured pruning, and quantization, the analysis reveals unexpected consequences. While compression may unintentionally alleviate degeneration harm, it can exacerbate representational harm. Increasing compression produces divergent impacts on different protected groups, and different compression methods have drastically different safety impacts. The findings underscore the importance of integrating safety assessments into the development of compressed LLMs to ensure reliability across real-world applications. #LLMCompression #AIEthics #ModelSafety #BiasMitigation |

| EMNLP24 Findings How Does Quantization Affect Multilingual LLMs? Kelly Marchisio, Saurabh Dash, Hongyu Chen, Dennis Aumiller, Ahmet Üstün, Sara Hooker, Sebastian Ruder |

Quantization techniques are widely used to improve inference speed and deployment of large language models. While a wide body of work examines the impact of quantization on LLMs in English, none have evaluated across languages. This study conducts a thorough analysis of quantized multilingual LLMs, focusing on performance across languages and at varying scales. Using automatic benchmarks, LLM-as-a-Judge, and human evaluation, the research finds that (1) harmful effects of quantization are apparent in human evaluation, which automatic metrics severely underestimate: a 1.7% average drop in Japanese across automatic tasks corresponds to a 16.0% drop reported by human evaluators on realistic prompts; (2) languages are disparately affected by quantization, with non-Latin script languages impacted worst; and (3) challenging tasks like mathematical reasoning degrade fastest. As the ability to serve low-compute models is critical for wide global adoption of NLP technologies, the results urge consideration of multilingual performance as a key evaluation criterion for efficient models. #MultilingualLLM #Quantization #LanguageBias #ModelEvaluation |

| EMNLP24 Findings When Compression Meets Model Compression: Memory-Efficient Double Compression for Large Language Models Weilan Wang, Yu Mao, Tang Dongdong, Du Hongchao, Nan Guan, Chun Jason Xue |

Large language models (LLMs) exhibit excellent performance in various tasks. However, the memory requirements of LLMs present a great challenge when deploying on memory-limited devices, even for quantized LLMs. This paper introduces a framework that further compresses LLMs after quantization, achieving a compression ratio of about 2.2x. A compression-aware quantization is first proposed to enhance model weight compressibility by re-scaling the model parameters before quantization, followed by a pruning method that improves compressibility further. Because decompression can become a bottleneck in practical scenarios, the paper then analyzes the trade-off between memory usage and latency introduced by the method and proposes a speed-adaptive method to overcome it. Experimental results show that inference with the compressed model achieves a 40% reduction in memory size with negligible loss in accuracy and inference speed. #LLMCompression #MemoryEfficiency #QuantizationTechniques #ModelInference |

| EMNLP24 Findings ATQ: Activation Transformation for Weight-Activation Quantization of Large Language Models Yundong Gai, Ping Li |

Many quantization methods have emerged to address the heavy computational and storage costs that hinder the deployment of large language models (LLMs). However, their accuracy still cannot satisfy the needs of academia and industry. In this work, we propose ATQ, an INT8 weight-activation quantization method for LLMs that achieves almost lossless accuracy. We employ a mathematically equivalent transformation and a triangle inequality to bound the weight-activation quantization error by the sum of a weight quantization error and an activation quantization error. For the weight part, transformed weights are quantized along the in-feature dimension and the quantization error is compensated by optimizing the following in-features. For the activation part, transformed activations are in the normal range and can be quantized easily. Comparison experiments demonstrate that ATQ achieves almost lossless accuracy on the OPT and LLaMA families in W8A8 quantization settings. The increase in perplexity is within 1 and the accuracy degradation is within 0.5 percent even in the worst case. #LLMQuantization #ActivationTransformation #WeightQuantization #LosslessAccuracy |
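
The triangle-inequality step can be written out explicitly. As a sketch, let $X$ and $W$ denote the transformed activations and weights and $\hat{X}$, $\hat{W}$ their quantized versions; this notation is assumed here for illustration and is not taken from the paper.

$$
\|XW - \hat{X}\hat{W}\| = \|X(W - \hat{W}) + (X - \hat{X})\hat{W}\| \le \|X(W - \hat{W})\| + \|(X - \hat{X})\hat{W}\|
$$

The first term on the right depends only on the weight quantization error and the second only on the activation quantization error, so the two can be reduced separately.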

| EMNLP24 Main Prefixing Attention Sinks can Mitigate Activation Outliers for Large Language Model Quantization Seungwoo Son, Wonpyo Park, Woohyun Han, Kyuyeun Kim, Jaeho Lee |

Despite recent advances in LLM quantization, activation quantization remains challenging due to activation outliers. Conventional remedies, e.g., mixing precisions for different channels, introduce extra overhead and reduce the speedup. In this work, we develop a simple yet effective strategy to facilitate per-tensor activation quantization by preventing the generation of problematic tokens. Precisely, we propose a method to find a key-value cache, coined CushionCache, which mitigates outliers in subsequent tokens when inserted as a prefix. CushionCache works in two steps: First, we greedily search for a prompt token sequence that minimizes the maximum activation values in subsequent tokens. Then, we further tune the token cache to regularize the activations of subsequent tokens to be more quantization-friendly. The proposed method successfully addresses activation outliers of LLMs, providing a substantial performance boost for per-tensor activation quantization methods. We thoroughly evaluate our method over a wide range of models and benchmarks and find that it significantly surpasses the established baseline of per-tensor W8A8 quantization and can be seamlessly integrated with the recent activation quantization method. #ActivationOutliers #CushionCache #PerTensorQuantization |

| EMNLP24 Main QUIK: Towards End-to-end 4-Bit Inference on Generative Large Language Models Saleh Ashkboos, Ilia Markov, Elias Frantar, Tingxuan Zhong, Xincheng Wang, Jie Ren, Torsten Hoefler, Dan Alistarh |

Large Language Models (LLMs) from the GPT family have become extremely popular, leading to a race towards reducing their inference costs to allow for efficient local computation. However, the vast majority of existing work focuses on weight-only quantization, which can reduce runtime costs in the memory-bound one-token-at-a-time generative setting, but does not address costs in compute-bound scenarios, such as batched inference or prompt processing. In this paper, we address the general quantization problem, where both weights and activations should be quantized, which leads to computational improvements in general. We show that the majority of inference computations for large generative models can be performed with both weights and activations being cast to 4 bits, while at the same time maintaining good accuracy. We achieve this via a hybrid quantization strategy called QUIK that compresses most of the weights and activations to 4-bit, while keeping a small fraction of "outlier" weights and activations in higher precision. QUIK is designed with computational efficiency in mind: we provide GPU kernels matching the QUIK format with highly efficient layer-wise runtimes, which lead to practical end-to-end throughput improvements of up to 3.4x relative to FP16 execution. We provide detailed studies for models from the OPT, LLaMA-2 and Falcon families, as well as a first instance of accurate inference using quantization plus 2:4 sparsity. Anonymized code is available. #mixed-precision #4Bit |
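
The benefit of keeping a few outliers in higher precision can be shown with a toy vector. The sketch below is a loose illustration of the hybrid idea rather than the QUIK format: it picks outliers per vector by magnitude instead of selecting outlier feature columns, simulates quantization in floating point, and the helper names and values are hypothetical.

```python
def fake_quant(values, bits=4):
    """Symmetric round-to-nearest quantization followed by dequantization."""
    if not values:
        return []
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(v) for v in values) / qmax
    if scale == 0:
        return list(values)
    return [max(-qmax - 1, min(qmax, round(v / scale))) * scale for v in values]


def hybrid_quant(values, num_outliers, bits=4):
    """Keep the largest-magnitude entries unquantized, quantize the rest."""
    order = sorted(range(len(values)), key=lambda i: abs(values[i]), reverse=True)
    outliers = set(order[:num_outliers])
    base_idx = [i for i in range(len(values)) if i not in outliers]
    base_q = fake_quant([values[i] for i in base_idx], bits)
    out = list(values)                 # outlier positions keep their values
    for i, q in zip(base_idx, base_q):
        out[i] = q
    return out


def abs_error(a, b):
    """Sum of absolute element-wise differences."""
    return sum(abs(x - y) for x, y in zip(a, b))


acts = [0.1, -0.2, 0.3, 50.0]          # one large outlier among small values
plain = fake_quant(acts)               # outlier sets the scale for everything
hybrid = hybrid_quant(acts, num_outliers=1)
```

With a single shared 4-bit scale the outlier forces the small values to round to zero, whereas removing it from the low-precision group lets the remaining values use the full 4-bit range.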

| EMNLP24 Main ApiQ: Finetuning of 2-Bit Quantized Large Language Model Baohao Liao, Christian Herold, Shahram Khadivi, Christof Monz |

Memory-efficient finetuning of large language models (LLMs) has recently attracted huge attention with the increasing size of LLMs, primarily due to the constraints posed by GPU memory limitations and the effectiveness of these methods compared to full finetuning. Despite the advancements, current strategies for memory-efficient finetuning, such as QLoRA, exhibit inconsistent performance across diverse bit-width quantizations and multifaceted tasks. This inconsistency largely stems from the detrimental impact of the quantization process on preserved knowledge, leading to catastrophic forgetting and undermining the utilization of pretrained models for finetuning purposes. In this work, we introduce a novel quantization framework named ApiQ, designed to restore the lost information from quantization by concurrently initializing the LoRA components and quantizing the weights of LLMs. This approach ensures the maintenance of the original LLM's activation precision while mitigating the error propagation from shallower into deeper layers. Through comprehensive evaluations conducted on a spectrum of language tasks with various LLMs, ApiQ demonstrably minimizes activation error during quantization. Consequently, it consistently achieves superior finetuning results across various bit-widths. Notably, one can even finetune a 2-bit Llama-2-70b with ApiQ on a single NVIDIA A100-80GB GPU without any memory-saving techniques, and achieve promising results. #2Bit #Finetuning |

| QTIP: Quantization with Trellises and Incoherence Processing Albert Tseng, Qingyao Sun, David Hou, Christopher De Sa |

Post-training quantization (PTQ) reduces the memory footprint of LLMs by quantizing weights to low-precision datatypes. Since LLM inference is usually memory-bound, PTQ methods can improve inference throughput. Recent state-of-the-art PTQ approaches use vector quantization (VQ) to quantize multiple weights at once, which improves information utilization through better shaping. However, VQ requires a codebook with size exponential in the dimension. This limits current VQ-based PTQ works to low VQ dimensions (≤ 8) that in turn limit quantization quality. Here, we introduce QTIP, which instead uses trellis coded quantization (TCQ) to achieve ultra-high-dimensional quantization. TCQ uses a stateful decoder that separates the codebook size from the bitrate and effective dimension. QTIP introduces a spectrum of lookup-only to computed lookup-free trellis codes designed for a hardware-efficient "bitshift" trellis structure; these codes achieve state-of-the-art results in both quantization quality and inference speed. #PTQ #VectorQuantization |
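
The exponential growth of a VQ codebook is easy to check numerically. The sketch below assumes an unstructured codebook with one entry per possible code and FP16 entries; the helper names are hypothetical and the numbers are illustrative, not taken from the paper.

```python
def vq_codebook_entries(bits_per_weight, dim):
    """Number of codewords needed to spend `bits_per_weight` on each of `dim` weights."""
    return 2 ** (bits_per_weight * dim)


def vq_codebook_bytes(bits_per_weight, dim, bytes_per_value=2):
    """Storage for the codebook, with each codeword holding `dim` values."""
    return vq_codebook_entries(bits_per_weight, dim) * dim * bytes_per_value


small = vq_codebook_bytes(2, 8)    # 2 bits per weight, 8-dimensional vectors
large = vq_codebook_bytes(2, 16)   # same bitrate, 16-dimensional vectors

print(small / 2**20, "MiB for dimension 8")
print(large / 2**30, "GiB for dimension 16")
```

Doubling the dimension at a fixed bitrate squares the number of codewords, which is why a decoder whose size does not depend on the dimension is attractive.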

| FlashAttention-3: Fast and Accurate Attention with Asynchrony and Low-precision Jay Shah, Ganesh Bikshandi, Ying Zhang, Vijay Thakkar, Pradeep Ramani, Tri Dao |

Attention, as a core layer of the ubiquitous Transformer architecture, is the bottleneck for large language models and long-context applications. FlashAttention elaborated an approach to speed up attention on GPUs through minimizing memory reads/writes. However, it has yet to take advantage of new capabilities present in recent hardware, with FlashAttention-2 achieving only 35% utilization on the H100 GPU. We develop three main techniques to speed up attention on Hopper GPUs: exploiting asynchrony of the Tensor Cores and TMA to (1) overlap overall computation and data movement via warp-specialization and (2) interleave block-wise matmul and softmax operations, and (3) block quantization and incoherent processing that leverages hardware support for FP8 low-precision. We demonstrate that our method, FlashAttention-3, achieves speedup on H100 GPUs by 1.5-2.0× with BF16 reaching up to 840 TFLOPs/s (85% utilization), and with FP8 reaching 1.3 PFLOPs/s. We validate that FP8 FlashAttention-3 achieves 2.6× lower numerical error than a baseline FP8 attention. #FlashAttention #LowPrecision |

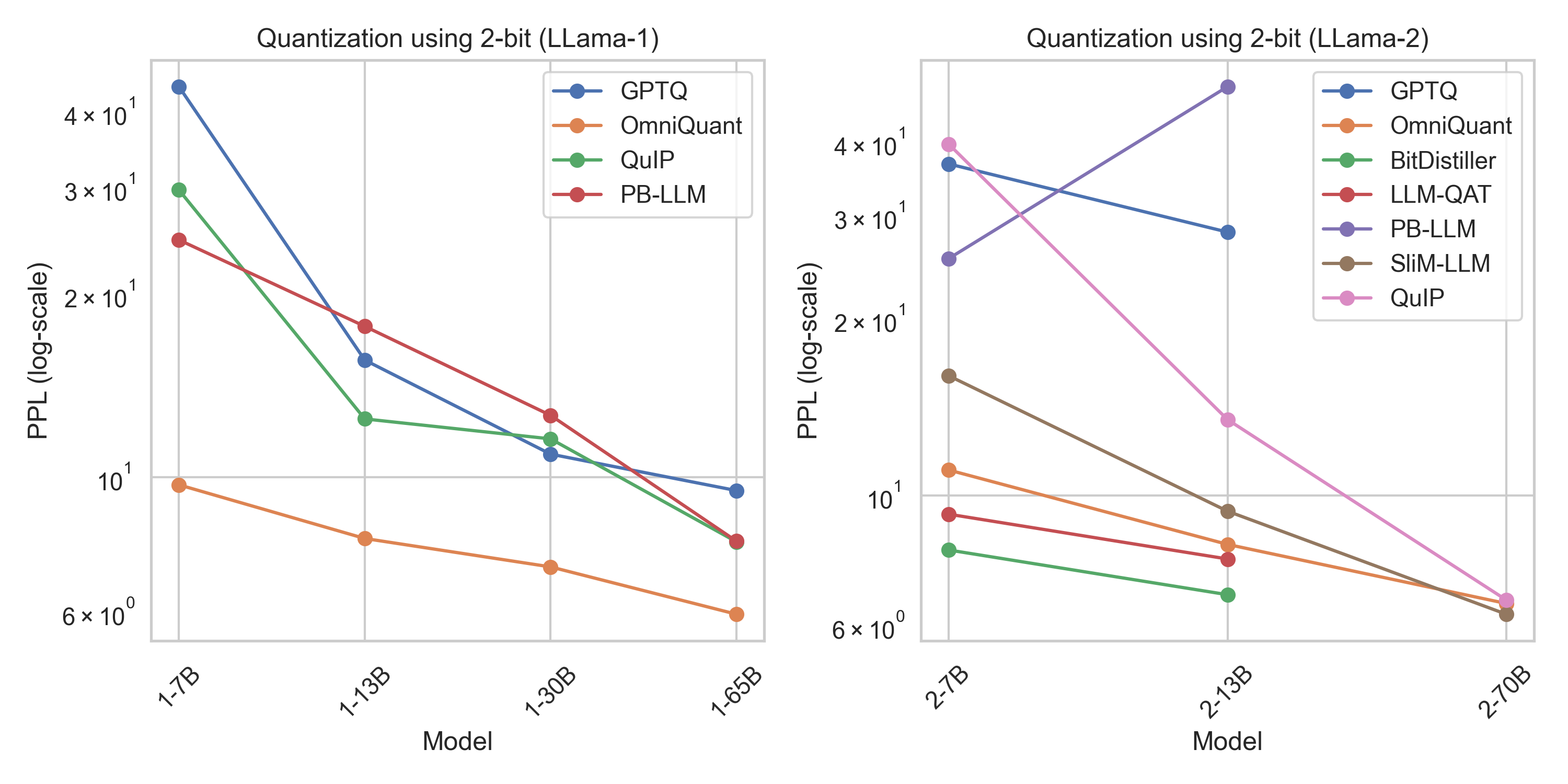

2-bit evaluation

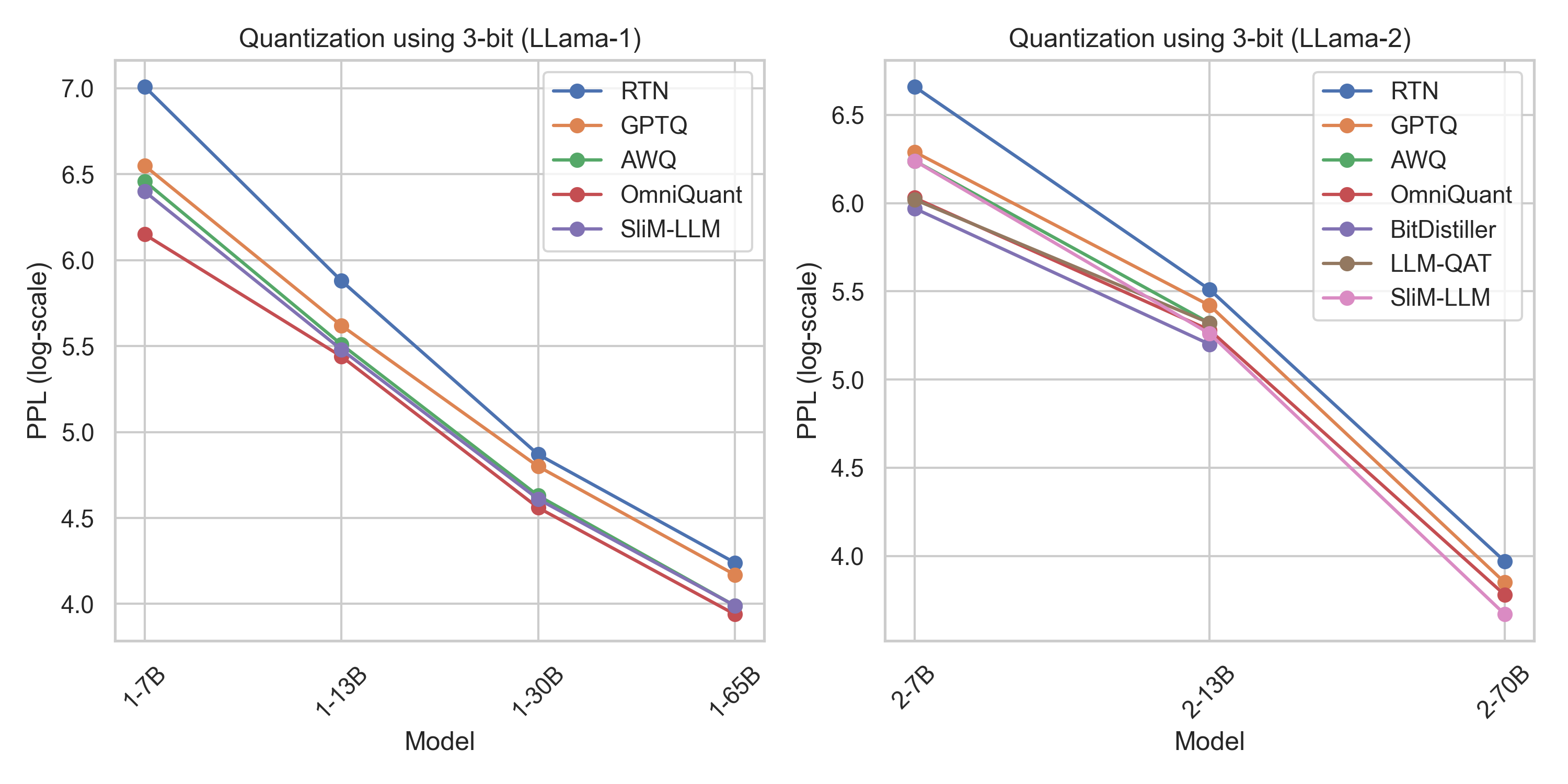

3-bit evaluation

Contributions to this repository are welcome! If you have any suggestions for new resources, or if you find any broken links or outdated information, please open an issue or submit a pull request.

This repository is licensed under the MIT License.