TensorFlow and Keras implementation of state-of-the-art research in dialog system NLU. Tested on TensorFlow 2.x. The project now also uses the Hugging Face Transformers library for broader model coverage and support for other languages.

You can still access the old version (TF 1.15.0) on the `TF_1` branch.

- Data format as in the paper Slot-Gated Modeling for Joint Slot Filling and Intent Prediction (Goo et al):
  - Consists of 3 files:
    - `seq.in` file contains text samples (utterances)
    - `seq.out` file contains tags corresponding to samples from `seq.in`
    - `label` file contains intent labels corresponding to samples from `seq.in`
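As an illustration of the three-file layout, here is a minimal sketch of a reader. It assumes that tokens and tags are whitespace-separated and that line *n* of each file describes the same sample. The function name `read_goo_format` is hypothetical and is not part of this repository.

```python
from pathlib import Path
from typing import List, Tuple


def _read_lines(path: Path) -> List[str]:
    """Return the lines of a UTF-8 text file without trailing newlines."""
    return path.read_text(encoding="utf-8").splitlines()


def read_goo_format(folder: str) -> Tuple[List[List[str]], List[List[str]], List[str]]:
    """Read seq.in, seq.out and label from `folder` (hypothetical helper).

    Assumes one sample per line and whitespace-separated tokens and tags.
    """
    base = Path(folder)
    utterances = [line.split() for line in _read_lines(base / "seq.in")]
    tags = [line.split() for line in _read_lines(base / "seq.out")]
    intents = [line.strip() for line in _read_lines(base / "label")]

    # The three files must be aligned line by line.
    if not (len(utterances) == len(tags) == len(intents)):
        raise ValueError("seq.in, seq.out and label must have the same number of lines")

    # Every token needs exactly one slot tag.
    for i, (words, word_tags) in enumerate(zip(utterances, tags)):
        if len(words) != len(word_tags):
            raise ValueError(f"line {i + 1}: {len(words)} tokens but {len(word_tags)} tags")

    return utterances, tags, intents
```

For example, `read_goo_format("data/snips/train")` would return the tokenized utterances, their slot tags, and the intent labels as three parallel lists.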

- Snips Dataset (Snips voice platform: an embedded spoken language understanding system for private-by-design voice interfaces) (Coucke et al., 2018), which is collected from the Snips personal voice assistant.
  - The training, development and test sets contain 13,084, 700 and 700 utterances, respectively.
  - There are 72 slot labels and 7 intent types for the training set.

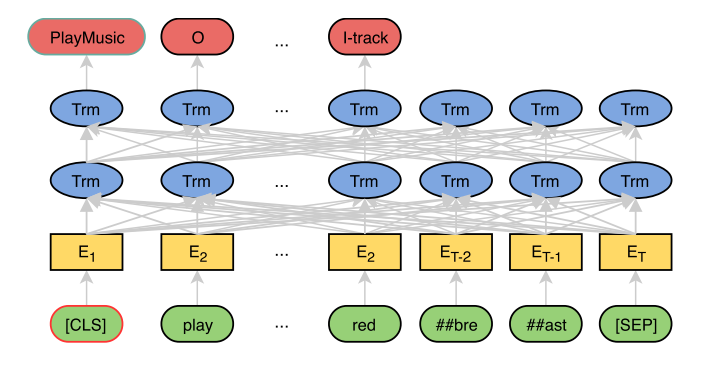

Four models are implemented: `joint_bert` and `joint_bert_crf`, each of which supports `bert` and `albert`.

- `--train` or `-t`: Path to training data in Goo et al format.
- `--val` or `-v`: Path to validation data in Goo et al format.
- `--save` or `-s`: Folder path to save the trained model.
- `--epochs` or `-e`: Number of epochs.
- `--batch` or `-bs`: Batch size.
- `--type` or `-tp`: Choose between `bert` and `albert`. Default is `bert`.
- `--model` or `-m`: Path to joint BERT / ALBERT NLU model for incremental training.

python train_joint_bert.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_bert_model --epochs=5 --batch=64 --type=bert

python train_joint_bert.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_albert_model --epochs=5 --batch=64 --type=albert

python train_joint_bert_crf.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_bert_crf_model --epochs=5 --batch=32 --type=bert

python train_joint_bert_crf.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_albert_crf_model --epochs=5 --batch=32 --type=albert

Example of incremental training:

python train_joint_bert.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_albert_model2 --epochs=5 --batch=64 --type=albert --model=saved_models/joint_albert_model

- `--model` or `-m`: Path to joint BERT / ALBERT NLU model.
- `--data` or `-d`: Path to data in Goo et al format.
- `--batch` or `-bs`: Batch size.
- `--type` or `-tp`: Choose between `bert` and `albert`. Default is `bert`.

python eval_joint_bert.py --model=saved_models/joint_bert_model --data=data/snips/test --batch=128 --type=bert

python eval_joint_bert.py --model=saved_models/joint_albert_model --data=data/snips/test --batch=128 --type=albert

python eval_joint_bert_crf.py --model=saved_models/joint_bert_crf_model --data=data/snips/test --batch=128 --type=bert

python eval_joint_bert_crf.py --model=saved_models/joint_albert_crf_model --data=data/snips/test --batch=128 --type=albert

Hugging Face Transformers provides many transformer-based models. Integrating it allows this project to support more architectures as well as more languages.

Supported model architectures:

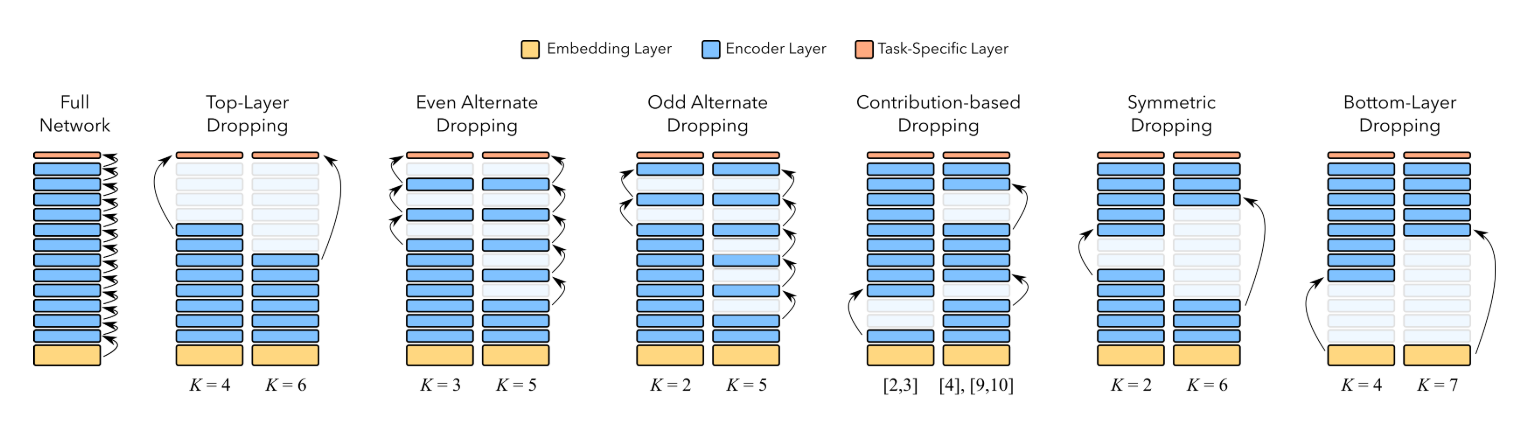

| Model | Pretrained Model Example | Layer Pruning Support |
|---|---|---|
| TFBertModel | bert-base-uncased | Yes |
| TFDistilBertModel | distilbert-base-uncased | Yes |
| TFAlbertModel | albert-base-v1 or albert-base-v2 | Not yet |
| TFRobertaModel | roberta-base or distilroberta-base | Yes |

More model integrations are planned.

| Argument | Description | Is Required | Default |
|---|---|---|---|
| `--train` or `-t` | Path to training data in Goo et al format. | Yes | |
| `--val` or `-v` | Path to validation data in Goo et al format. | Yes | |
| `--save` or `-s` | Folder path to save the trained model. | Yes | |
| `--epochs` or `-e` | Number of epochs. | No | 5 |
| `--batch` or `-bs` | Batch size. | No | 64 |
| `--model` or `-m` | Path to a joint Transformer NLU model for incremental training. | No | |
| `--trans` or `-tr` | Pretrained transformer model name or path. Either `--model` or `--trans` should be provided. | No | |
| `--from_pt` or `-pt` | Whether the `--trans` model (if provided) is a PyTorch checkpoint. | No | False |
| `--cache_dir` or `-c` | The `cache_dir` for the transformers library. | No | |
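The argument rules above can be sketched with `argparse`. This is an illustrative reconstruction, not the actual code of `train_joint_trans.py`. It assumes that the short flag for `--trans` is `-tr`, and that "either `--model` or `--trans`" means at least one of the two must be given. The `str2bool` helper is hypothetical; something like it is needed because the example commands pass `--from_pt=false` as a string.

```python
import argparse


def str2bool(value: str) -> bool:
    """Parse 'true'/'false' style strings; bool('false') would be True."""
    lowered = value.lower()
    if lowered in ("true", "1", "yes"):
        return True
    if lowered in ("false", "0", "no"):
        return False
    raise argparse.ArgumentTypeError(f"expected a boolean, got {value!r}")


def build_parser() -> argparse.ArgumentParser:
    """Mirror the argument table; defaults are taken from its last column."""
    parser = argparse.ArgumentParser(description="Sketch of the training arguments")
    parser.add_argument("--train", "-t", required=True, help="Training data folder")
    parser.add_argument("--val", "-v", required=True, help="Validation data folder")
    parser.add_argument("--save", "-s", required=True, help="Output model folder")
    parser.add_argument("--epochs", "-e", type=int, default=5)
    parser.add_argument("--batch", "-bs", type=int, default=64)
    parser.add_argument("--model", "-m", default=None)
    parser.add_argument("--trans", "-tr", default=None)  # short flag is an assumption
    parser.add_argument("--from_pt", "-pt", type=str2bool, default=False)
    parser.add_argument("--cache_dir", "-c", default=None)
    return parser


def parse_args(argv=None) -> argparse.Namespace:
    parser = build_parser()
    args = parser.parse_args(argv)
    # Assumed reading of the table: at least one model source is needed.
    if args.model is None and args.trans is None:
        parser.error("provide --model (saved model) or --trans (pretrained name or path)")
    return args
```

With this sketch, omitting both `--model` and `--trans` exits with a usage error, while omitting `--epochs` or `--batch` falls back to 5 and 64.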

Using Transformers Bert example:

python train_joint_trans.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_trans_model --epochs=3 --batch=64 --cache_dir=transformers_cache_dir --trans=bert-base-uncased --from_pt=false

Using Transformers DistilBert example:

python train_joint_trans.py --train=data/snips/train --val=data/snips/valid --save=saved_models/joint_distilbert_model --epochs=3 --batch=64 --cache_dir=transformers_cache_dir --trans=distilbert-base-uncased --from_pt=false

We use the seqeval library to compute the F1 score at the tag level rather than the token level.
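To show how tag-level scoring differs from token-level scoring, here is a simplified pure-Python sketch. It is not seqeval's implementation (seqeval exposes `f1_score` in `seqeval.metrics`). It assumes BIO-style tags such as `B-state` and `I-state`, and the function names are hypothetical. A predicted slot counts as correct only if its type and both boundaries match the reference.

```python
from typing import List, Set, Tuple

Span = Tuple[int, str, int, int]  # (sentence index, slot type, start, end)


def bio_spans(tags: List[str], sent_idx: int = 0) -> Set[Span]:
    """Collect (sentence, type, start, end) spans from one BIO tag sequence."""
    spans: Set[Span] = set()
    start, slot = None, None
    for i, tag in enumerate(tags + ["O"]):  # sentinel "O" closes the last span
        continues = tag.startswith("I-") and slot == tag[2:]
        if start is not None and not continues:
            spans.add((sent_idx, slot, start, i - 1))
            start, slot = None, None
        # A stray "I-x" with no open span is treated as the start of a span.
        if tag.startswith("B-") or (tag.startswith("I-") and start is None):
            start, slot = i, tag[2:]
    return spans


def span_f1(y_true: List[List[str]], y_pred: List[List[str]]) -> float:
    """Micro-averaged F1 over exact span matches."""
    true_spans: Set[Span] = set()
    pred_spans: Set[Span] = set()
    for idx, (t, p) in enumerate(zip(y_true, y_pred)):
        true_spans |= bio_spans(t, idx)
        pred_spans |= bio_spans(p, idx)
    correct = len(true_spans & pred_spans)
    precision = correct / len(pred_spans) if pred_spans else 0.0
    recall = correct / len(true_spans) if true_spans else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)
```

For instance, if the reference is `O O B-state I-state` and the prediction is `O O B-state O`, three of four tokens are right, but the predicted span does not match the reference span, so the span-level F1 is 0.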

| Argument | Description | Is Required | Default |
|---|---|---|---|
| `--model` or `-m` | Path to joint Transformer NLU model. | Yes | |
| `--data` or `-d` | Path to data in Goo et al format. | Yes | |
| `--batch` or `-bs` | Batch size. | No | 128 |

Using Transformers Bert example:

python eval_joint_trans.py --model=saved_models/joint_trans_model --data=data/snips/test --batch=128

Using Transformers DistilBert example:

python eval_joint_trans.py --model=saved_models/joint_distilbert_model --data=data/snips/test --batch=128

- `--model` or `-m`: Path to joint BERT / ALBERT NLU model.
- `--type` or `-tp`: Choose between `bert` and `albert`. Default is `bert`.

python bert_nlu_basic_api.py --model=saved_models/joint_albert_model --type=albert

- POST
- Payload:

      {
          "utterance": "make me a reservation in south carolina"
      }

- Response:

      {
          "intent": {
              "confidence": "0.9888",
              "name": "BookRestaurant"
          },
          "slots": [
              {
                  "slot": "state",
                  "value": "south carolina",
                  "start": 5,
                  "end": 6
              }
          ]
      }
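A client for this API could look like the sketch below, which uses only the standard library. The helper names are hypothetical, and the endpoint URL is left as a parameter because the route and port are not stated here. It also assumes that `start` and `end` are inclusive indices into the whitespace-split utterance, which is consistent with the example response ("south carolina" covers tokens 5 to 6).

```python
import json
import urllib.request
from typing import Dict


def build_predict_request(utterance: str, url: str) -> urllib.request.Request:
    """Build a JSON POST request carrying the utterance payload."""
    payload = json.dumps({"utterance": utterance}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def slot_text(utterance: str, slot: Dict) -> str:
    """Recover a slot's text from its token indices.

    Assumes `start` and `end` are inclusive whitespace-token positions.
    """
    tokens = utterance.split()
    return " ".join(tokens[slot["start"]: slot["end"] + 1])
```

To send the request against a running server, pass the result to `urllib.request.urlopen` and decode the body with `json.load`. The URL you pass in must match wherever `bert_nlu_basic_api.py` is actually listening.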