A PyTorch implementation of DenseNets, optimized to save GPU memory.

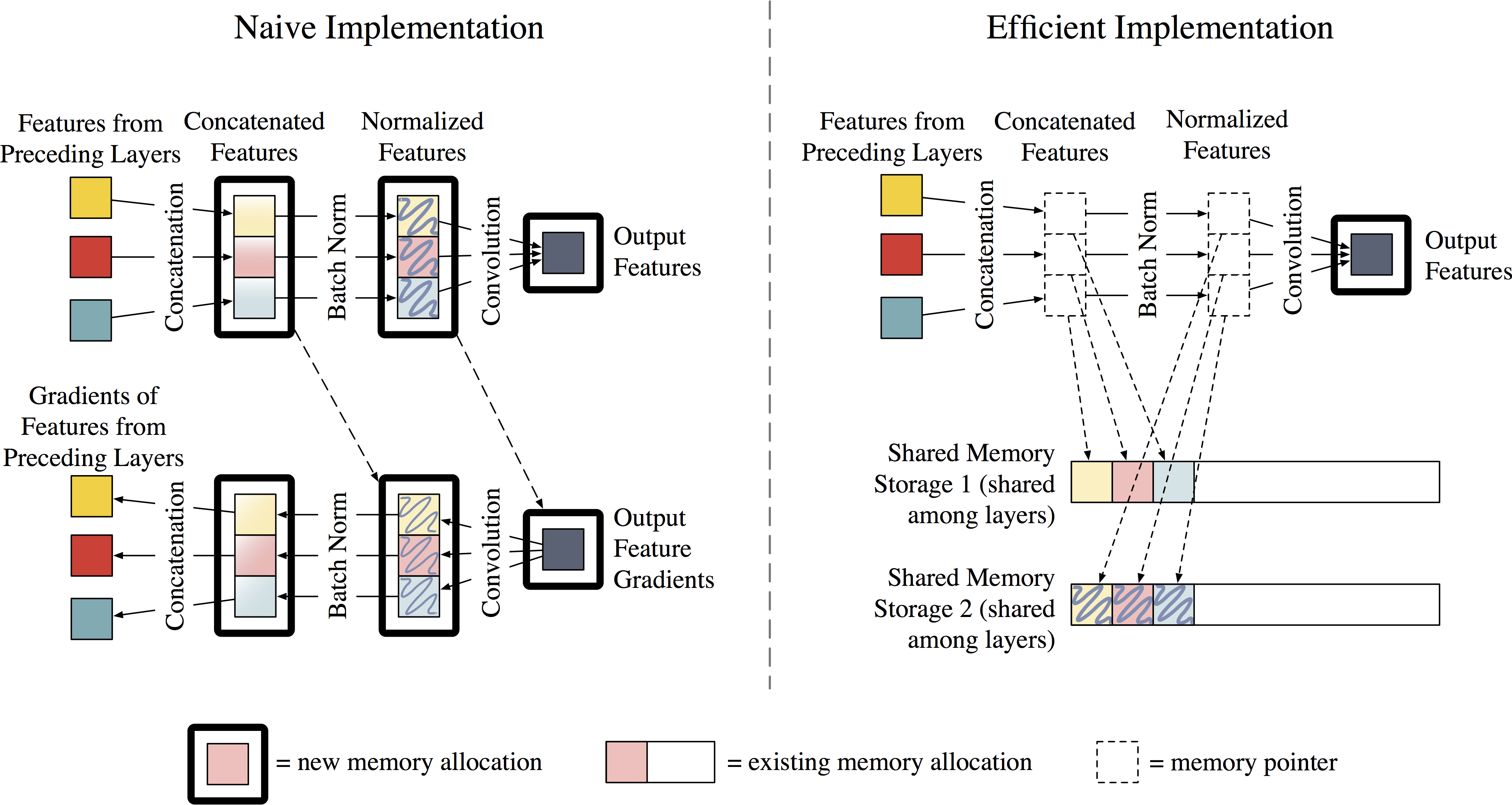

While DenseNets are fairly easy to implement in deep learning frameworks, most implmementations (such as the original) tend to be memory-hungry. In particular, the number of intermediate feature maps generated by batch normalization and concatenation operations grows quadratically with network depth. It is worth emphasizing that this is not a property inherent to DenseNets, but rather to the implementation.

This implementation uses a new strategy to reduce the memory consumption of DenseNets. We assign all intermediate feature maps to two shared memory allocations, which are utilized by every Batch Norm and concatenation operation. Because the data in these allocations are temporary, we re-populate the outputs during back-propagation. This adds 15-20% of time overhead for training, but reduces feature map consumption from quadratic to linear.

For more details, please see the technical report (soon to be on ArXiV).

In your existing project:

There are two files in the models folder.

models/densenet.pyis a "naive" implementation, based off the torchvision and project killer implementations.models/densenet_efficient.pyis the new efficient implementation. (Code is still a little ugly. We're working on cleaning it up!) Copy either one of those files into your project! They work as stand-alone files.

Running the demo:

python demo.py --efficient True --data <path_to_data_dir> --save <path_to_save_dir>Options:

--depth(int) - depth of the network (number of convolution layers) (default 40)--growth_rate(int) - number of features added per DenseNet layer (default 12)--n_epochs(int) - number of epochs for training (default 300)--batch_size(int) - size of minibatch (default 256)--seed(int) - manually set the random seed (default None)

A comparison of the two implementations (each is a DenseNet-BC with 100 layers, batch size 64, tested on a NVIDIA Pascal Titan-X):

| Implementation | Memory cosumption (GB) | Speed (sec/mini batch) |

|---|---|---|

| Naive | 2.863 | 0.165 |

| Efficient | 1.605 | 0.207 |