PyTorch code for the paper "Anatomy-aware 3D Human Pose Estimation in Videos". It is built on top of VideoPose3D.

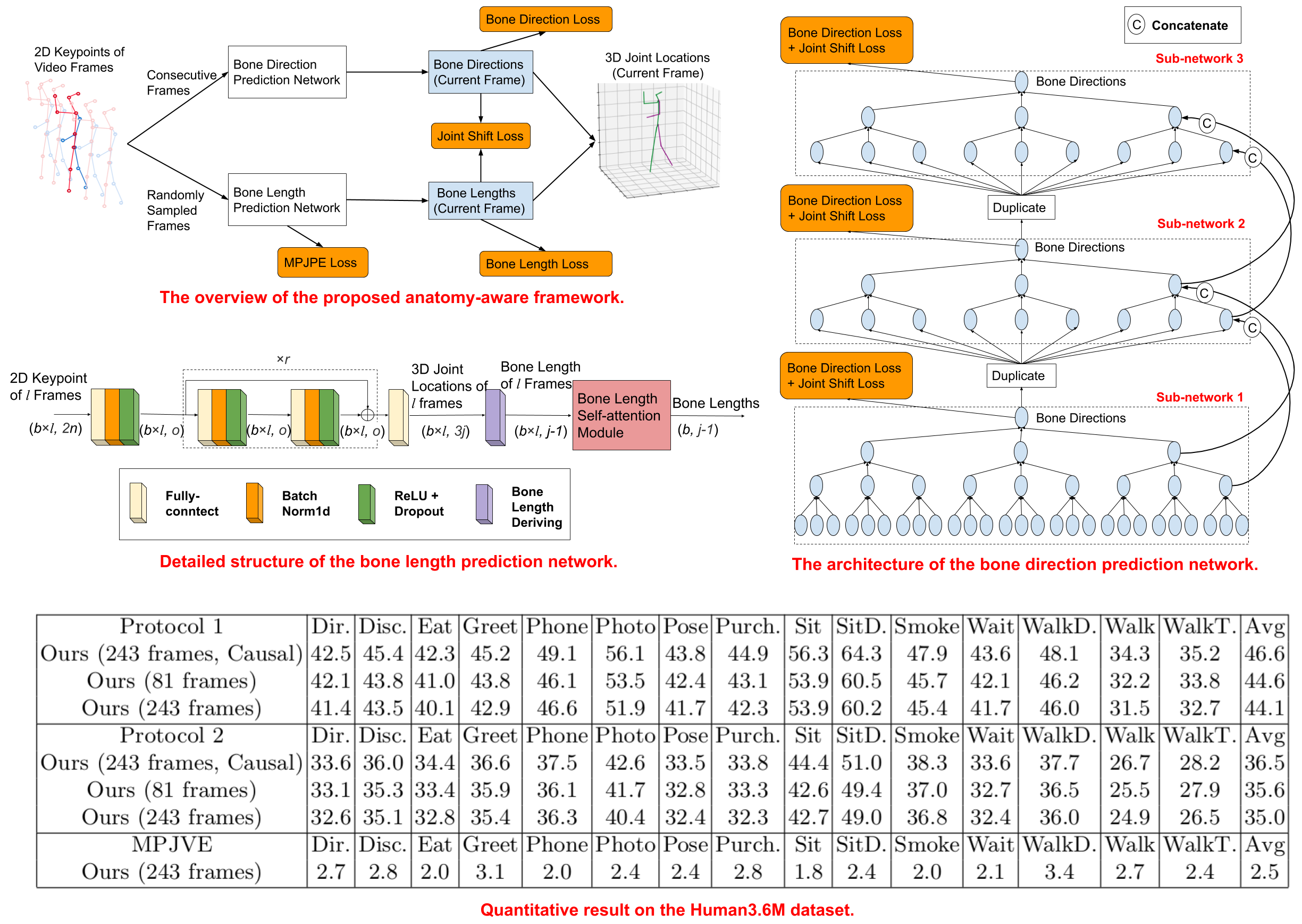

Tianlang Chen, Chen Fang, Xiaohui Shen, Yiheng Zhu, Zhili Chen, Jiebo Luo. "Anatomy-aware 3D Human Pose Estimation in Videos", arXiv, 2020.

The code was developed and tested in the following environment:

- Python 3.6.10

- PyTorch 1.0.1

- CUDA 9.0

The source code is for training and evaluating on the Human3.6M dataset. Our code is compatible with the dataset setup introduced by Martinez et al. and Pavllo et al. Please refer to VideoPose3D to set up the Human3.6M dataset (`./data` directory).

To experiment with another dataset, you need to set it up in the same way as the Human3.6M dataset. In addition, you need to modify `--boneindex` (`./common/arguments.py`) and `randomaug()` (`./common/generators.py`) to update the index mapping between joints and bones.
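The joint-to-bone mapping matters because the model works with bone directions and bone lengths rather than raw joint positions. The sketch below is a minimal NumPy illustration of that decomposition, assuming a skeleton described by a parent index per joint. `PARENTS`, `bone_pairs`, `joints_to_bones` and `bones_to_joints` are hypothetical names, and the 17-joint parent list is an illustrative assumption, not necessarily the format `--boneindex` expects.

```python
import numpy as np

# Hypothetical parent index for each of 17 joints (-1 marks the root).
# Illustrative assumption only; not necessarily the format --boneindex uses.
PARENTS = [-1, 0, 1, 2, 0, 4, 5, 0, 7, 8, 9, 8, 11, 12, 8, 14, 15]


def bone_pairs(parents):
    """Return one (child, parent) joint-index pair per bone."""
    return [(c, p) for c, p in enumerate(parents) if p >= 0]


def joints_to_bones(joints, parents):
    """Split joints (..., J, 3) into bone lengths (..., B, 1) and unit directions (..., B, 3)."""
    pairs = bone_pairs(parents)
    child = [c for c, _ in pairs]
    parent = [p for _, p in pairs]
    vectors = joints[..., child, :] - joints[..., parent, :]
    lengths = np.linalg.norm(vectors, axis=-1, keepdims=True)
    directions = vectors / np.maximum(lengths, 1e-8)  # guard against zero-length bones
    return lengths, directions


def bones_to_joints(lengths, directions, parents, root_position):
    """Rebuild joints from bones, assuming every parent index precedes its children."""
    joints = np.zeros(directions.shape[:-2] + (len(parents), 3))
    joints[..., parents.index(-1), :] = root_position
    for b, (c, p) in enumerate(bone_pairs(parents)):
        # Each joint = its parent's position + bone length * bone direction.
        joints[..., c, :] = joints[..., p, :] + lengths[..., b, :] * directions[..., b, :]
    return joints


# Usage: decompose a random pose and rebuild it from the root.
pose = np.random.default_rng(0).normal(size=(17, 3))
lengths, directions = joints_to_bones(pose, PARENTS)
print(np.allclose(bones_to_joints(lengths, directions, PARENTS, pose[0]), pose))  # True
```

Changing datasets amounts to changing the parent list (and hence the bone pairs); the decomposition itself stays the same.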

As described in our paper, we provide the 2D keypoint visibility scores of the Human3.6M dataset predicted by AlphaPose. You need to download the score file from here and put it into the ./data directory.

To train a model from scratch, run:

    python run.py -e xxx -k cpn_ft_h36m_dbb -arc xxx --randnum xxx

As in VideoPose3D, `-arc` controls the backbone architecture of our bone direction prediction network (and also the corresponding number of layers in our bone length prediction network). `--randnum` sets the number of randomly sampled input frames for the bone length prediction network.
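To see how `-arc` relates to the frame counts used in this README (e.g. `-arc 3,3,3,3,3` for the 243-frame model), here is a minimal sketch assuming VideoPose3D-style dilated temporal convolutions, where each layer's dilation is the product of the preceding filter widths. The `sample_frames` helper is a hypothetical illustration of drawing `--randnum` distinct frames; it is not the repository's sampling code.

```python
import random


def receptive_field(arc):
    """Temporal receptive field of a stack of dilated 1D convolutions.

    `arc` is the comma-separated string given to -arc, e.g. "3,3,3,3,3".
    Assumes layer i has filter width w_i and a dilation equal to the
    product of the widths of the layers before it.
    """
    widths = [int(w) for w in arc.split(",")]
    field, dilation = 1, 1
    for w in widths:
        field += (w - 1) * dilation  # each layer widens the window by (w - 1) * dilation frames
        dilation *= w
    return field


def sample_frames(num_frames, randnum, seed=None):
    """Hypothetical: pick `randnum` distinct frame indices from a sequence."""
    rng = random.Random(seed)
    return sorted(rng.sample(range(num_frames), min(randnum, num_frames)))


print(receptive_field("3,3,3,3,3"))  # 243
print(receptive_field("3,3,3,3"))    # 81
print(len(sample_frames(1000, 50, seed=0)))  # 50
```

With odd filter widths the receptive field equals the product of the widths, which is why five layers of width 3 give 243 frames.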

For example, to train the 243-frame standard and causal models from our paper, run:

    python run.py -e 60 -k cpn_ft_h36m_dbb -arc 3,3,3,3,3 --randnum 50

and

    python run.py -e 60 -k cpn_ft_h36m_dbb -arc 3,3,3,3,3 --randnum 50 --causal

Training with `-arc 3,3,3,3,3` should require about 80 hours on 3 GeForce GTX 1080 Ti GPUs.

Training with `-arc 3,3,3,3` (an 81-frame receptive field) should require about 60 hours on 2 GeForce GTX 1080 Ti GPUs.
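The standard and causal models differ in which input frames they see. Assuming the causal model follows VideoPose3D's convention (past and current frames only, while the standard model is centered on the target frame), this sketch computes the inclusive input window for a given receptive field. `input_window` is a hypothetical helper, and sequence-boundary padding is ignored.

```python
def input_window(t, receptive_field, causal=False):
    """Inclusive (first, last) input frames used to predict the pose at frame t."""
    if causal:
        # Causal: only the current frame and the receptive_field - 1 frames before it.
        return t - (receptive_field - 1), t
    # Standard: a symmetric window centered on frame t.
    half = (receptive_field - 1) // 2
    return t - half, t + half


print(input_window(500, 243))               # (379, 621)
print(input_window(500, 243, causal=True))  # (258, 500)
```

The causal window never looks ahead, which is what makes that variant usable for online prediction.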

We provide the pre-trained 243-frame model here. To evaluate it, put it into the ./checkpoint directory and run:

    python run.py -k cpn_ft_h36m_dbb -arc 3,3,3,3,3 --evaluate pretrained_model.bin

We keep our code consistent with VideoPose3D. Please refer to their project page for further information.
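VideoPose3D-style evaluation reports several error protocols, including MPJPE (mean per-joint position error): the Euclidean distance between predicted and ground-truth joints, averaged over joints and frames. A minimal NumPy sketch of that metric, assuming both arrays have shape (frames, joints, 3) in the same units, is shown here; `mpjpe` is an illustrative helper, not the repository's implementation.

```python
import numpy as np


def mpjpe(predicted, target):
    """Mean Euclidean distance between corresponding joints over all frames."""
    if predicted.shape != target.shape:
        raise ValueError("predicted and target must have the same shape")
    return float(np.mean(np.linalg.norm(predicted - target, axis=-1)))


# Usage: every joint is off by (3, 4, 0), so the error is 5 in the input's units.
pred = np.zeros((2, 17, 3))
gt = np.zeros((2, 17, 3))
gt[..., 0], gt[..., 1] = 3.0, 4.0
print(mpjpe(pred, gt))  # 5.0
```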

If you find this code useful, please cite the following paper:

    @article{chen2020anatomy,
      title={Anatomy-aware 3D Human Pose Estimation in Videos},
      author={Chen, Tianlang and Fang, Chen and Shen, Xiaohui and Zhu, Yiheng and Chen, Zhili and Luo, Jiebo},
      journal={arXiv preprint arXiv:2002.10322},
      year={2020}
    }

Part of our code is borrowed from VideoPose3D. We thank the authors for releasing their code.