This repository is the official implementation of the paper:

Dynamic LiDAR Re-simulation using Compositional Neural Fields

Hanfeng Wu1,2, Xingxing Zuo2, Stefan Leutenegger2, Or Litany3, Konrad Schindler1, Shengyu Huang1

1 ETH Zurich | 2 Technical University of Munich | 3 Technion | 4 NVIDIA

This code consists of two parts. The folder DyNFL contains our nerfstudio-based DyNFL implementation. The folder WaymoPreprocessing contains the preprocessing scripts that generate the dataset used for training and evaluation.

This code has been tested on

- Python 3.10.10, PyTorch 1.13.1, CUDA 11.6, Nerfstudio 0.3.4, GeForce RTX 3090/GeForce GTX 1080Ti
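To compare an existing environment against the tested versions listed above, a small check along these lines may help. This is a minimal sketch, not part of the repository: the helper names `installed_version` and `version_report` are hypothetical, and it assumes the packages are installed under the distribution names `torch` and `nerfstudio`.

```python
from importlib import metadata

# Versions listed as tested in this README.
TESTED = {"torch": "1.13.1", "nerfstudio": "0.3.4"}


def installed_version(package):
    """Return the installed version string of a package, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def version_report(tested=TESTED, lookup=installed_version):
    """Map each package to (tested version, installed version, matches?)."""
    report = {}
    for package, wanted in tested.items():
        found = lookup(package)
        # Wheels may carry a local suffix such as "+cu116"; ignore it when comparing.
        matches = found is not None and found.split("+")[0] == wanted
        report[package] = (wanted, found, matches)
    return report


if __name__ == "__main__":
    for package, (wanted, found, ok) in version_report().items():
        status = "ok" if ok else "differs from tested version"
        print(f"{package}: tested {wanted}, installed {found} ({status})")
```

A mismatch does not necessarily mean the code will fail; it only indicates that the setup differs from the one the code was tested on.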

To create a conda environment and install the required dependencies, please run:

conda create -n "dynfl" python=3.10.10

conda activate dynfl

Then install nerfstudio in your conda environment by following the official guidelines.

After installing nerfstudio, install DyNFL and the required packages as follows:

git clone git@github.com:prs-eth/DyNFL-Dynamic-LiDAR-Re-simulation-using-Compositional-Neural-Fields.git

cd DyNFL

pip install -e .

pip install NFLStudio/ChamferDistancePytorch/chamfer3D/

pip install NFLStudio/raymarching/

To prepare the dataset, please refer to WaymoPreprocessing.

After generating the datasets, please run

ns-train NFLStudio --pipeline.datamanager.dataparser-config.context_name <context_name> --pipeline.datamanager.dataparser-config.root_dir <path_to_your_preprocessed_data_dynamic> --experiment_name <your_experiment_name>

You can set the batch size in NFLDataManagerConfig or pass it as an argument to the ns-train command.
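When training several scenes, it can be convenient to assemble the ns-train call in Python. The sketch below only rebuilds the command shown above from its three placeholders; the function name `build_train_command` is hypothetical, and the path and experiment name in the example are placeholders, not values from the repository.

```python
import shlex
from typing import List


def build_train_command(context_name: str, root_dir: str, experiment_name: str) -> List[str]:
    """Assemble the ns-train call from this README as an argument list."""
    prefix = "--pipeline.datamanager.dataparser-config"
    return [
        "ns-train", "NFLStudio",
        f"{prefix}.context_name", context_name,
        f"{prefix}.root_dir", root_dir,
        "--experiment_name", experiment_name,
    ]


if __name__ == "__main__":
    cmd = build_train_command(
        "1083056852838271990_4080_000_4100_000",  # scene ID used as an example in this README
        "path/to/preprocessed_data_dynamic",      # placeholder path
        "my_experiment",                          # placeholder experiment name
    )
    # Print the shell-quoted command for inspection.
    print(shlex.join(cmd))
    # To actually launch training: subprocess.run(cmd, check=True)
```

Passing the command as a list avoids shell-quoting issues if the data path contains spaces.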

After training, a folder containing weights will be saved in your working folder as outputs/<your_experiment_name>/NFLStudio/<time_stamp>/nerfstudio_models
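The output layout described above can be used to locate the most recent weights folder for an experiment. This is a minimal sketch under two assumptions: the helper name `latest_model_dir` is hypothetical, and the `<time_stamp>` folder names are assumed to sort chronologically when compared as strings.

```python
from pathlib import Path
from typing import Optional


def latest_model_dir(experiment_name: str, outputs_root: str = "outputs") -> Optional[Path]:
    """Return the newest <time_stamp>/nerfstudio_models folder, or None if there is none."""
    run_root = Path(outputs_root) / experiment_name / "NFLStudio"
    if not run_root.is_dir():
        return None
    # Keep only runs that actually contain a nerfstudio_models folder.
    candidates = [
        run / "nerfstudio_models"
        for run in run_root.iterdir()
        if (run / "nerfstudio_models").is_dir()
    ]
    # Assumption: timestamp folder names sort chronologically as strings.
    return max(candidates, key=lambda p: p.parent.name, default=None)


if __name__ == "__main__":
    # "my_experiment" is a placeholder experiment name.
    print(latest_model_dir("my_experiment"))
```

The returned path can then be passed as the model directory when running the evaluation script.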

To get quantitative results, uncomment the following line in tester.py

# pipeline.get_numbers() # get numbers of the LiDARs

and run

cd NFLStudio

python tester.py --context_name <context_name> --model_dir <model_dir>

for example

python tester.py --context_name "1083056852838271990_4080_000_4100_000" --model_dir "outputs/<your_experiment_name>/NFLStudio/<time_stamp>/nerfstudio_models"

We provide pretrained model weights for 4 Waymo Dynamic scenes so that you can reproduce our results. The weights are available here. Unpack the pretrained weights under DyNFL/NFLStudio/

and run

cd NFLStudio

bash test.sh

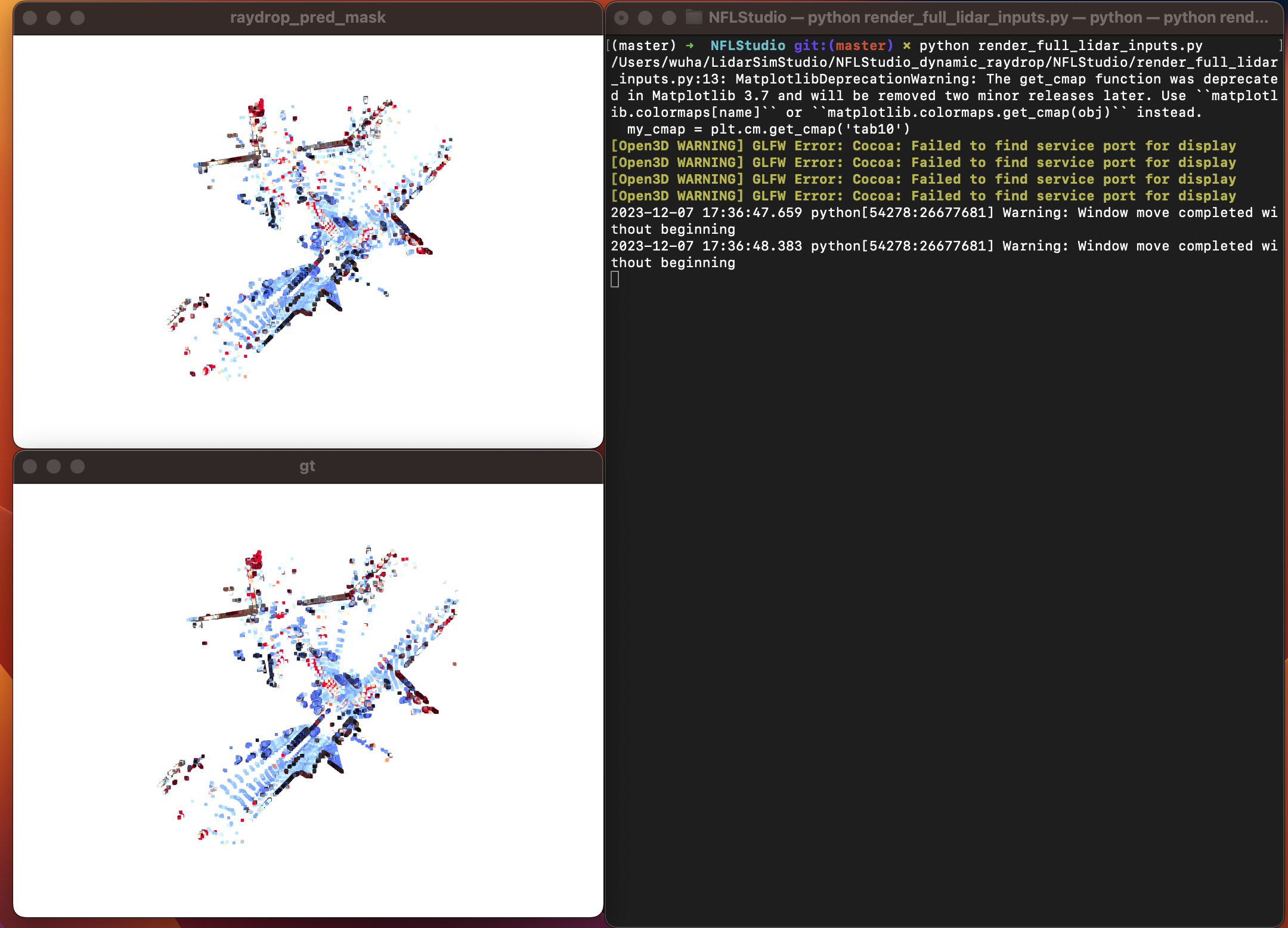

To visualize the results, uncomment the following line in tester.py

# pipeline.get_pcd(context_name) # get pcd to display

and run

cd NFLStudio

python tester.py --context_name <context_name> --model_dir <model_dir>

After that, you will have a folder in NFLStudio called pcd_out.

Replace dir and context_name in NFLStudio/render_full_lidar_inputs.py with path/to/pcd_out and <context_name>

and run

cd NFLStudio

python render_full_lidar_inputs.py

This opens two visualization windows.

@inproceedings{Wu2023dynfl,
  title = {Dynamic LiDAR Re-simulation using Compositional Neural Fields},
  author = {Wu, Hanfeng and Zuo, Xingxing and Leutenegger, Stefan and Litany, Or and Schindler, Konrad and Huang, Shengyu},
  booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2024},
}

We use the nerfstudio framework in this project and thank its contributors for open-sourcing and maintaining it.