Taskflow

Taskflow helps you quickly write parallel task programs in modern C++.

Why Taskflow?

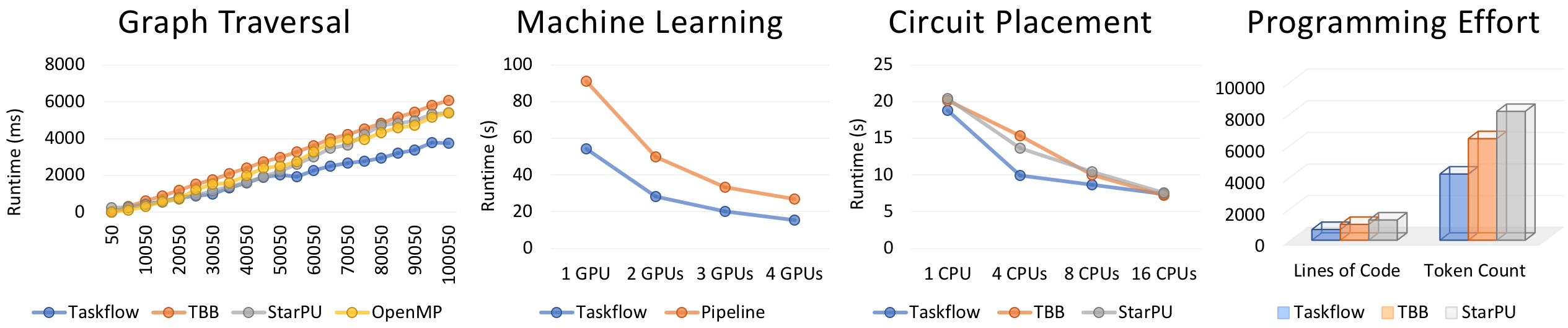

Taskflow is faster, more expressive, and easier to integrate as a drop-in than many existing task programming frameworks when handling complex parallel workloads.

Taskflow lets you quickly implement task decomposition strategies that incorporate both regular and irregular compute patterns, together with an efficient work-stealing scheduler to optimize your multithreaded performance.

| Static Tasking | Dynamic Tasking |
|---|---|

Taskflow supports conditional tasking, which lets you make rapid control-flow decisions across dependent tasks and implement cycles and conditions that are otherwise difficult to express with existing tools.

| Conditional Tasking |
|---|

Taskflow is composable. You can create large parallel graphs through composition of modular and reusable blocks that are easier to optimize at an individual scope.

| Taskflow Composition |
|---|

Taskflow supports heterogeneous tasking, which lets you accelerate a wide range of scientific computing applications by harnessing the power of CPU-GPU collaborative computing.

| Concurrent CPU-GPU Tasking |
|---|

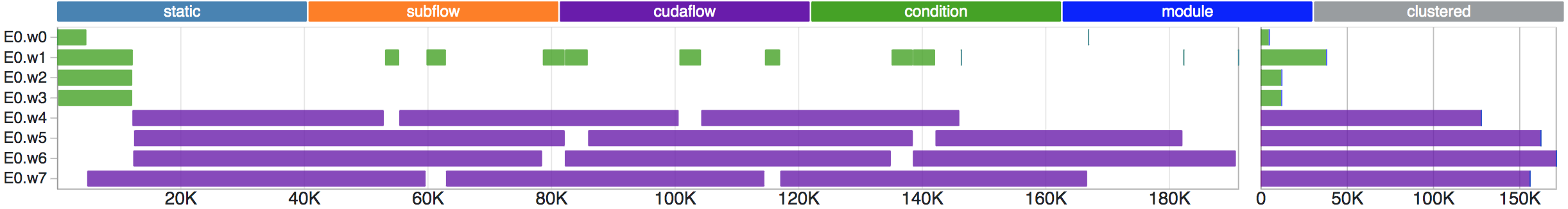

Taskflow provides visualization and tooling needed for profiling Taskflow programs.

| Taskflow Profiler |
|---|

We are committed to supporting trustworthy development for both academic and industrial research projects in parallel computing. Check out Who is Using Taskflow and what our users say:

- "Taskflow is the cleanest Task API I've ever seen." Damien Hocking @Corelium Inc

- "Taskflow has a very simple and elegant tasking interface. The performance also scales very well." Glen Fraser

- "Taskflow lets me handle parallel processing in a smart way." Hayabusa @Learning

- "Taskflow improves the throughput of our graph engine in just a few hours of coding." Jean-Michaël @KDAB

- "Best poster award for open-source parallel programming library." Cpp Conference 2018

- "Second Prize of Open-source Software Competition." ACM Multimedia Conference 2019

See a quick presentation and visit the documentation to learn more about Taskflow. For technical details, refer to our arXiv paper.

Table of Contents

- Get Started with Taskflow

- Create a Taskflow Application

- Dynamic Tasking

- Conditional Tasking

- Composable Tasking

- Concurrent CPU-GPU Tasking

- Visualize a Taskflow Graph

- API Reference

- System Requirements

- Compile Unit Tests, Examples, and Benchmarks

- Who is Using Taskflow?

Get Started with Taskflow

The following example simple.cpp shows the basic Taskflow API you need in most applications.

#include <taskflow/taskflow.hpp>  // Taskflow is header-only
#include <iostream>               // std::cout

int main(){

  tf::Executor executor;
  tf::Taskflow taskflow;

  auto [A, B, C, D] = taskflow.emplace(
    [] () { std::cout << "TaskA\n"; },  //  task dependency graph
    [] () { std::cout << "TaskB\n"; },  //
    [] () { std::cout << "TaskC\n"; },  //          +---+
    [] () { std::cout << "TaskD\n"; }   //    +---->| B |-----+
  );                                    //    |     +---+     |
                                        //  +---+           +-v-+
  A.precede(B);  // A runs before B     //  | A |           | D |
  A.precede(C);  // A runs before C     //  +---+           +-^-+
  B.precede(D);  // B runs before D     //    |     +---+     |
  C.precede(D);  // C runs before D     //    +---->| C |-----+
                                        //          +---+
  executor.run(taskflow).wait();

  return 0;
}

Compile and run the code with the following commands:

~$ g++ simple.cpp -I path/to/include/taskflow/ -std=c++17 -O2 -lpthread -o simple
~$ ./simple
TaskA
TaskC <-- concurrent with TaskB
TaskB <-- concurrent with TaskC
TaskD

Create a Taskflow Application

Taskflow defines a very expressive API to create task dependency graphs. Most applications are developed through the following three steps:

Step 1: Create a Taskflow

Create a taskflow object to build a task dependency graph:

tf::Taskflow taskflow;

A task is a callable object for which std::invoke is applicable.

Use the method emplace to create a task:

tf::Task A = taskflow.emplace([](){ std::cout << "Task A\n"; });

Step 2: Define Task Dependencies

You can add dependency links between tasks to force one task to run before or after another.

A.precede(B);  // A runs before B.

Step 3: Execute a Taskflow

To execute a taskflow, you need to create an executor. An executor manages a set of worker threads to execute a taskflow through an efficient work-stealing algorithm.

tf::Executor executor;

The executor provides a rich set of methods to run a taskflow. You can run a taskflow once, multiple times, or until a stopping criterion is met. These methods are non-blocking and return a std::future that lets you query the execution status. The executor is thread-safe.

executor.run(taskflow);        // runs the taskflow once
executor.run_n(taskflow, 4);   // runs the taskflow four times

// keeps running the taskflow until the predicate becomes true;
// the lambda is mutable so that it can decrement its captured counter
executor.run_until(taskflow, [counter=4]() mutable { return --counter == 0; });

You can call wait_for_all to block until all taskflows submitted to the executor complete.

executor.wait_for_all();  // blocks until all submitted taskflows finish

Notice that the executor does not own any taskflow. It is your responsibility to keep a taskflow alive during its execution; otherwise, the behavior is undefined. In most applications, you need only one executor to run multiple taskflows, each representing a specific part of your parallel decomposition.
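To make the roles of precede and the executor concrete, here is a minimal sketch in plain C++17 (no Taskflow). It is a toy model, not Taskflow's implementation: it assumes an acyclic graph, uses a shared ready list guarded by a mutex instead of work stealing, and the names ToyGraph, emplace, precede, and run are hypothetical. Each node counts its unfinished predecessors and becomes ready when that count reaches zero, which is the ordering guarantee that A.precede(B) expresses.

```cpp
#include <condition_variable>
#include <cstddef>
#include <functional>
#include <mutex>
#include <thread>
#include <utility>
#include <vector>

// Toy dependency graph (hypothetical; not Taskflow's data structures).
struct ToyNode {
  std::function<void()> work;
  std::vector<int> successors;
  int num_predecessors = 0;
};

struct ToyGraph {
  std::vector<ToyNode> nodes;

  int emplace(std::function<void()> work) {
    nodes.push_back(ToyNode{std::move(work), {}, 0});
    return static_cast<int>(nodes.size()) - 1;
  }

  // Same meaning as A.precede(B): `to` may start only after `from` finishes.
  void precede(int from, int to) {
    nodes[from].successors.push_back(to);
    ++nodes[to].num_predecessors;
  }

  // Runs every node exactly once on a small pool of threads.
  // Assumes the graph is acyclic; a cycle would make the workers wait forever.
  void run(unsigned num_workers = 4) {
    std::vector<int> pending(nodes.size());
    std::vector<int> ready;
    for (std::size_t i = 0; i < nodes.size(); ++i) {
      pending[i] = nodes[i].num_predecessors;
      if (pending[i] == 0) ready.push_back(static_cast<int>(i));
    }
    std::mutex m;
    std::condition_variable cv;
    std::size_t finished = 0;

    auto worker = [&] {
      std::unique_lock<std::mutex> lock(m);
      while (true) {
        cv.wait(lock, [&] { return !ready.empty() || finished == nodes.size(); });
        if (ready.empty()) return;  // every node has finished
        int id = ready.back();
        ready.pop_back();
        lock.unlock();
        nodes[id].work();  // run user code outside the lock
        lock.lock();
        ++finished;
        for (int s : nodes[id].successors) {
          if (--pending[s] == 0) ready.push_back(s);  // last dependency done
        }
        cv.notify_all();
      }
    };

    std::vector<std::thread> threads;
    for (unsigned i = 0; i < num_workers; ++i) threads.emplace_back(worker);
    for (auto& t : threads) t.join();
  }
};
```

Building the diamond from simple.cpp with g.precede(A, B), g.precede(A, C), g.precede(B, D), g.precede(C, D) and calling g.run() gives the same guarantees: A first, D last, B and C in either order.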

Dynamic Tasking

Another powerful feature of Taskflow is dynamic tasking. Dynamic tasks are tasks created during the execution of a taskflow. They are spawned by a parent task and grouped together into a subflow graph. To create a subflow for dynamic tasking, emplace a callable that takes one argument of type tf::Subflow.

// create three regular tasks
tf::Task A = taskflow.emplace([](){}).name("A");
tf::Task C = taskflow.emplace([](){}).name("C");
tf::Task D = taskflow.emplace([](){}).name("D");

// create a subflow graph (dynamic tasking)
tf::Task B = taskflow.emplace([] (tf::Subflow& subflow) {
  tf::Task B1 = subflow.emplace([](){}).name("B1");
  tf::Task B2 = subflow.emplace([](){}).name("B2");
  tf::Task B3 = subflow.emplace([](){}).name("B3");
  B1.precede(B3);
  B2.precede(B3);
}).name("B");

A.precede(B);  // B runs after A
A.precede(C);  // C runs after A
B.precede(D);  // D runs after B
C.precede(D);  // D runs after C

By default, a subflow graph joins its parent task. This ensures the subflow graph finishes before the successors of its parent task run. You can disable this behavior by calling subflow.detach(). For example, the following code detaches the above subflow so that it forms a parallel execution line:

// create a "detached" subflow graph (dynamic tasking)
tf::Task B = taskflow.emplace([] (tf::Subflow& subflow) {
  tf::Task B1 = subflow.emplace([](){}).name("B1");
  tf::Task B2 = subflow.emplace([](){}).name("B2");
  tf::Task B3 = subflow.emplace([](){}).name("B3");
  B1.precede(B3);
  B2.precede(B3);
  // detach the subflow to form a parallel execution line
  subflow.detach();
}).name("B");

A subflow can be nested or recursive: you can create another subflow from within the execution of a subflow, and so on.
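The difference between a joined and a detached subflow can be sketched with plain std::async (C++17, no Taskflow). This is only an analogy under stated assumptions: the parent task B launches B1 and B2, then B3 after both; in joined mode B waits for its children before its successor D may run, while in detached mode the child work is handed to an outer owner that waits for it only at the end, the way all work finishes before a run completes. The names ConcurrentLog, spawn_subflow, and run_example are hypothetical, and task C is omitted.

```cpp
#include <future>
#include <mutex>
#include <string>
#include <utility>
#include <vector>

// Thread-safe event log (hypothetical helper).
class ConcurrentLog {
 public:
  void add(const std::string& e) {
    std::lock_guard<std::mutex> lock(m_);
    entries_.push_back(e);
  }
  std::vector<std::string> entries() {
    std::lock_guard<std::mutex> lock(m_);
    return entries_;
  }

 private:
  std::mutex m_;
  std::vector<std::string> entries_;
};

// The subflow of B: B1 and B2 may run concurrently, B3 runs after both.
std::future<void> spawn_subflow(ConcurrentLog& log) {
  return std::async(std::launch::async, [&log] {
    auto b1 = std::async(std::launch::async, [&log] { log.add("B1"); });
    auto b2 = std::async(std::launch::async, [&log] { log.add("B2"); });
    b1.get();
    b2.get();
    log.add("B3");
  });
}

// Runs A -> B -> D, where B spawns the subflow above.
std::vector<std::string> run_example(bool detach) {
  ConcurrentLog log;
  std::future<void> detached;
  log.add("A");
  log.add("B");
  std::future<void> sub = spawn_subflow(log);
  if (detach) {
    detached = std::move(sub);  // B completes without waiting for its children
  } else {
    sub.get();                  // joined: the subflow finishes before D starts
  }
  log.add("D");
  if (detached.valid()) detached.get();  // all work completes before returning
  return log.entries();
}
```

With detach set to true, D may be logged before B1, B2, or B3, which mirrors the parallel execution line that subflow.detach() creates; with detach set to false, B3 always precedes D.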

Conditional Tasking

Taskflow supports conditional tasking for users to implement general control flow with cycles and conditionals. A condition task evaluates a set of instructions and returns an integer index of the next immediate successor to execute. The index refers to the order in which the successors were added to the condition task; for example, after cond.precede(cond, stop), index 0 selects cond and index 1 selects stop.
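The index rule can be illustrated with a small sequential interpreter in plain C++17. This is a hypothetical model, not Taskflow's scheduler: a regular node schedules all of its successors, a condition node schedules only successors[index], and the interpreter ignores join counters, so it is only meant for chain-and-loop graphs like the one below. A deterministic counter stands in for the coin flip so the result is reproducible.

```cpp
#include <functional>
#include <vector>

// Hypothetical node model: successors are stored in the order they were added.
struct FlowNode {
  std::function<int()> work;  // return value is used only by condition nodes
  bool is_condition = false;
  std::vector<int> successors;
};

// Follows control flow from `start` and returns the ids of executed nodes.
std::vector<int> run_flow(const std::vector<FlowNode>& graph, int start) {
  std::vector<int> executed;
  std::vector<int> to_run{start};
  while (!to_run.empty()) {
    int id = to_run.back();
    to_run.pop_back();
    executed.push_back(id);
    const FlowNode& node = graph[id];
    int result = node.work();
    if (node.is_condition) {
      // jump only to the successor selected by the returned index
      if (result >= 0 && result < static_cast<int>(node.successors.size())) {
        to_run.push_back(node.successors[result]);
      }
    } else {
      for (int s : node.successors) to_run.push_back(s);
    }
  }
  return executed;
}
```

If the condition node returns 0 twice and then 1, the executed sequence is init, cond, cond, cond, stop: returning 0 loops back to the condition node itself and returning 1 moves on to stop.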

tf::Task init = taskflow.emplace([](){ }).name("init");
tf::Task stop = taskflow.emplace([](){ }).name("stop");

// creates a condition task that returns 0 or 1
tf::Task cond = taskflow.emplace([](){
  std::cout << "flipping a coin\n";
  return rand() % 2;
}).name("cond");

// creates a feedback loop
init.precede(cond);
cond.precede(cond, stop);  // cond--0-->cond, cond--1-->stop

executor.run(taskflow).wait();

Composable Tasking

A powerful feature of tf::Taskflow is composability. You can create multiple task graphs from different parts of your workload and use them to compose a large graph through the composed_of method.

tf::Taskflow f1, f2;

auto [f1A, f1B] = f1.emplace(
  []() { std::cout << "Task f1A\n"; },
  []() { std::cout << "Task f1B\n"; }
);

auto [f2A, f2B, f2C] = f2.emplace(
  []() { std::cout << "Task f2A\n"; },
  []() { std::cout << "Task f2B\n"; },
  []() { std::cout << "Task f2C\n"; }
);

auto f1_module_task = f2.composed_of(f1);

f1_module_task.succeed(f2A, f2B)
              .precede(f2C);

The method composed_of returns a task handle, so you can use precede and succeed to create dependencies. You can compose a taskflow from multiple taskflows and use the result to compose a larger taskflow, and so on.
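One way to picture a module task is as an ordinary node whose work runs another graph to completion. The sketch below models that with a sequential, single-threaded graph in plain C++17; SeqGraph and its methods are hypothetical and only mirror the shape of emplace, precede, and composed_of. The composed graph is held by reference, so it must outlive the graph that uses it.

```cpp
#include <cstddef>
#include <functional>
#include <queue>
#include <utility>
#include <vector>

// Hypothetical sequential task graph used only to illustrate composition.
class SeqGraph {
 public:
  int emplace(std::function<void()> work) {
    work_.push_back(std::move(work));
    successors_.emplace_back();
    return static_cast<int>(work_.size()) - 1;
  }

  void precede(int from, int to) { successors_[from].push_back(to); }

  // A module node: running it runs `other` to completion.
  // `other` is captured by reference and must stay alive.
  int composed_of(SeqGraph& other) {
    return emplace([&other] { other.run(); });
  }

  // Runs all nodes in a dependency-respecting order (Kahn's algorithm).
  void run() {
    std::vector<int> in_degree(work_.size(), 0);
    for (const auto& succ : successors_)
      for (int v : succ) ++in_degree[v];
    std::queue<int> ready;
    for (std::size_t i = 0; i < work_.size(); ++i)
      if (in_degree[i] == 0) ready.push(static_cast<int>(i));
    while (!ready.empty()) {
      int u = ready.front();
      ready.pop();
      work_[u]();
      for (int v : successors_[u])
        if (--in_degree[v] == 0) ready.push(v);
    }
  }

 private:
  std::vector<std::function<void()>> work_;
  std::vector<std::vector<int>> successors_;
};
```

Wiring f2A and f2B before the module node and the module node before f2C runs f2A, f2B, then all of f1, then f2C, which is the ordering that f1_module_task.succeed(f2A, f2B).precede(f2C) requests.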

Concurrent CPU-GPU Tasking

Taskflow enables concurrent CPU-GPU tasking by leveraging Nvidia CUDA Toolkit. You can harness the power of CPU-GPU collaborative computing to implement heterogeneous decomposition algorithms.

Step 1: Create a cudaFlow

A tf::cudaFlow is a graph object created at runtime, similar to dynamic tasking. It manages a task node in a taskflow and associates it with a CUDA Graph. To create a cudaFlow, emplace a callable that takes an argument of type tf::cudaFlow.

tf::Taskflow taskflow;
tf::Executor executor;

const unsigned N = 1<<20;                     // size of the vector
std::vector<float> hx(N, 1.0f), hy(N, 2.0f);  // x and y vectors at host
float *dx{nullptr}, *dy{nullptr};             // x and y vectors at device

tf::Task allocate_x = taskflow.emplace([&](){ cudaMalloc(&dx, N*sizeof(float)); });
tf::Task allocate_y = taskflow.emplace([&](){ cudaMalloc(&dy, N*sizeof(float)); });

tf::Task cudaflow = taskflow.emplace([&](tf::cudaFlow& cf) {
  tf::cudaTask h2d_x = cf.copy(dx, hx.data(), N);  // host-to-device x data transfer
  tf::cudaTask h2d_y = cf.copy(dy, hy.data(), N);  // host-to-device y data transfer
  tf::cudaTask d2h_x = cf.copy(hx.data(), dx, N);  // device-to-host x data transfer
  tf::cudaTask d2h_y = cf.copy(hy.data(), dy, N);  // device-to-host y data transfer
  // launch saxpy<<<(N+255)/256, 256, 0>>>(N, 2.0f, dx, dy)
  tf::cudaTask kernel = cf.kernel((N+255)/256, 256, 0, saxpy, N, 2.0f, dx, dy);
  kernel.succeed(h2d_x, h2d_y)
        .precede(d2h_x, d2h_y);
});

// release device memory after the cudaFlow finishes
tf::Task deallocate = taskflow.emplace([&](){ cudaFree(dx); cudaFree(dy); });

cudaflow.succeed(allocate_x, allocate_y)  // overlap data allocations
        .precede(deallocate);

executor.run(taskflow).wait();

Assume our kernel implements the canonical saxpy operation (single-precision A·X Plus Y) using the CUDA syntax. In a single source file, the kernel must be declared before the cudaFlow that launches it.

// saxpy (single-precision A·X Plus Y) kernel
__global__ void saxpy(int n, float a, float *x, float *y) {
  // get the thread index
  int i = blockIdx.x*blockDim.x + threadIdx.x;
  if (i < n) {
    y[i] = a*x[i] + y[i];
  }
}

Step 2: Compile and Execute a cudaFlow

Name your source file with the extension .cu, say saxpy.cu, and compile it with nvcc:

~$ nvcc saxpy.cu -I path/to/include/taskflow -O2 -o saxpy
~$ ./saxpy

Our source automatically enables cudaFlow for compilers that support CUDA.
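A host-side reference is a convenient way to check the cudaFlow result. The sketch below is plain C++ with no CUDA; saxpy_cpu, grid_size, and all_equal are hypothetical helper names. With hx filled with 1.0f, hy with 2.0f, and a = 2.0f as in the example, every element of hy should equal 2.0f * 1.0f + 2.0f = 4.0f after the device-to-host copy, and for N = 2^20 the launch uses (N+255)/256 = 4096 blocks of 256 threads.

```cpp
#include <cstddef>
#include <vector>

// Host reference for saxpy: y[i] = a * x[i] + y[i].
void saxpy_cpu(std::size_t n, float a, const float* x, float* y) {
  for (std::size_t i = 0; i < n; ++i) y[i] = a * x[i] + y[i];
}

// Blocks needed to cover n elements with block_size threads each,
// i.e. ceil(n / block_size), the same formula as (N+255)/256.
unsigned grid_size(unsigned n, unsigned block_size) {
  return (n + block_size - 1) / block_size;
}

// True if every element of `y` equals `expected`; exact comparison is fine
// here because 2.0f * 1.0f + 2.0f is exactly representable.
bool all_equal(const std::vector<float>& y, float expected) {
  for (float v : y)
    if (v != expected) return false;
  return true;
}
```

Comparing hy against this reference after executor.run(taskflow).wait() is one way to confirm that the copies and the kernel ran in the intended order.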

Visualize a Taskflow Graph

You can dump a taskflow to a std::ostream in GraphViz format using the method dump. There are a number of free GraphViz tools online that you can use to visualize your Taskflow graph.
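GraphViz reads graphs written in the DOT language. As a rough illustration of that format, the plain C++17 helper below (to_dot is a hypothetical name) turns an edge list into a minimal DOT digraph. Taskflow's dump produces its own DOT text, whose exact content is not reproduced here.

```cpp
#include <sstream>
#include <string>
#include <utility>
#include <vector>

using Edge = std::pair<std::string, std::string>;

// Writes a directed graph in minimal DOT syntax: one "from -> to;" line per edge.
std::string to_dot(const std::string& name, const std::vector<Edge>& edges) {
  std::ostringstream os;
  os << "digraph " << name << " {\n";
  for (const auto& [from, to] : edges) {
    os << "  " << from << " -> " << to << ";\n";
  }
  os << "}\n";
  return os.str();
}
```

For the five-task graph built next, the edge list is A->B, A->C, A->E, C->D, B->D, and B->E; pasting the resulting text into a GraphViz viewer renders it.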

tf::Taskflow taskflow;

tf::Task A = taskflow.emplace([] () {}).name("A");
tf::Task B = taskflow.emplace([] () {}).name("B");
tf::Task C = taskflow.emplace([] () {}).name("C");
tf::Task D = taskflow.emplace([] () {}).name("D");
tf::Task E = taskflow.emplace([] () {}).name("E");

A.precede(B, C, E);
C.precede(D);
B.precede(D, E);

taskflow.dump(std::cout);  // dump the graph in DOT to std::cout

When you have tasks that are created at runtime (e.g., subflow, cudaFlow), you need to execute the graph first to spawn these tasks and dump the entire graph.

tf::Executor executor;
tf::Taskflow taskflow;

tf::Task A = taskflow.emplace([](){}).name("A");

// create a subflow of two tasks B1->B2
tf::Task B = taskflow.emplace([] (tf::Subflow& subflow) {
  tf::Task B1 = subflow.emplace([](){}).name("B1");
  tf::Task B2 = subflow.emplace([](){}).name("B2");
  B1.precede(B2);
}).name("B");

A.precede(B);

executor.run(taskflow).wait();  // run the taskflow to spawn subflows
taskflow.dump(std::cout);       // dump the graph including dynamic tasks

API Reference

The official documentation gives the complete list of Taskflow APIs. Here, we highlight commonly used methods.

Taskflow API

The class tf::Taskflow is the main place to create a task dependency graph.

emplace/placeholder

You can use emplace to create a task from a target callable.

tf::Task task = taskflow.emplace([] () { std::cout << "my task\n"; });

When the work of a task cannot be determined beforehand, you can create a placeholder task and assign the callable later.

tf::Task A = taskflow.emplace([](){});
tf::Task B = taskflow.placeholder();
A.precede(B);
B.work([](){ /* do something */ });

for_each/for_each_index

The method for_each creates a subflow to perform parallel iterations over a range of elements specified by [beg, end).
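The half-open range contract of for_each can be illustrated with a static-partitioning sketch in plain C++17. parallel_for_each is a hypothetical name, and splitting [beg, end) into one contiguous chunk per thread is a simplifying assumption, not a description of how Taskflow distributes iterations. The callable may run concurrently on different elements, so it must be safe to call from multiple threads.

```cpp
#include <algorithm>
#include <iterator>
#include <thread>
#include <vector>

// Applies f to every element in [beg, end) using up to num_threads threads.
// Requires random-access iterators so each thread can jump to its chunk.
template <typename RandomIt, typename F>
void parallel_for_each(RandomIt beg, RandomIt end, F f, unsigned num_threads = 4) {
  using Diff = typename std::iterator_traits<RandomIt>::difference_type;
  const Diff n = std::distance(beg, end);
  if (n <= 0) return;
  const Diff workers = std::min<Diff>(std::max(1u, num_threads), n);
  const Diff chunk = (n + workers - 1) / workers;  // ceil(n / workers)

  std::vector<std::thread> threads;
  for (Diff first = 0; first < n; first += chunk) {
    const Diff last = std::min(n, first + chunk);
    threads.emplace_back([beg, first, last, &f] {
      for (auto it = beg + first; it != beg + last; ++it) f(*it);
    });
  }
  for (auto& t : threads) t.join();
}
```

Because the chunks are disjoint and together cover [beg, end), every element is visited exactly once, and the function returns only after all threads have joined.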

auto v = {'A', 'B', 'C', 'D'};
auto t = taskflow.for_each(v.begin(), v.end(),
  [] (char i) {
    std::cout << "parallel iteration on character " << i << '\n';
  }
);

You can also specify an index-based range with the given step size.
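For an index range, the visited indices follow directly from beg, end, and step. The helpers below (hypothetical names, plain C++17) enumerate them and compute the iteration count, assuming a positive step and an exclusive end as in the comment of the example; a parallel runtime can use such a count to divide iterations among workers.

```cpp
#include <vector>

// Number of indices in [beg, end) with a positive step: ceil((end - beg) / step).
int num_iterations(int beg, int end, int step) {
  return (step > 0 && end > beg) ? (end - beg + step - 1) / step : 0;
}

// The k-th visited index is beg + k * step.
std::vector<int> visited_indices(int beg, int end, int step) {
  std::vector<int> out;
  const int n = num_iterations(beg, end, step);
  out.reserve(n);
  for (int k = 0; k < n; ++k) out.push_back(beg + k * step);
  return out;
}
```

For [0, 11) with a step of 2 this yields (11 - 0 + 1) / 2 = 6 iterations: 0, 2, 4, 6, 8, 10.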

// [0, 11) with a step size of 2, i.e., 0, 2, 4, 6, 8, 10
auto t = taskflow.for_each_index(0, 11, 2,
  [] (int i) {
    std::cout << "parallel iteration on index " << i << std::endl;
  }
);

Task API

Each time you create a task, the taskflow object adds a node to the present task dependency graph and returns a task handle to you. You can access or modify the attributes of the associated task node.

name

The method name lets you assign a human-readable string to a task.

A.name("my name is A");

work

The method work lets you assign a callable to a task.

A.work([] () { std::cout << "hello world!"; });

precede/succeed

The methods precede and succeed let you add a preceding or succeeding link between tasks.

// A runs before B, C, D, and E

A.precede(B, C, D, E);

The method succeed is similar to precede but operates in the opposite direction.
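Since succeed only reverses the direction, B.succeed(A) and A.precede(B) add the same edge. The toy handle below (plain C++17; EdgeSet and Handle are hypothetical, not Taskflow types) records edges as (from, to) pairs and uses fold expressions so that, like precede in the examples above, both methods accept any number of tasks and return the handle for chaining.

```cpp
#include <set>
#include <utility>

// Edges of a toy graph, stored as (from, to) name pairs.
struct EdgeSet {
  std::set<std::pair<char, char>> edges;
};

// Hypothetical task handle that only records dependencies.
class Handle {
 public:
  Handle(EdgeSet& graph, char name) : graph_(&graph), name_(name) {}

  // adds an edge this -> t for each t in ts
  template <typename... Ts>
  Handle& precede(const Ts&... ts) {
    (graph_->edges.emplace(name_, ts.name_), ...);
    return *this;
  }

  // adds an edge t -> this for each t in ts
  template <typename... Ts>
  Handle& succeed(const Ts&... ts) {
    (graph_->edges.emplace(ts.name_, name_), ...);
    return *this;
  }

 private:
  EdgeSet* graph_;
  char name_;
};
```

Calling A.precede(B, C) on one graph and B.succeed(A); C.succeed(A) on another produces identical edge sets.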

empty/has_work

A task is empty if it is not associated with any graph node.

tf::Task task;  // assert(task.empty());

A placeholder task is associated with a graph node but has no work assigned yet.

tf::Task task = taskflow.placeholder(); // assert(!task.has_work());
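The difference between an empty handle, a placeholder, and a task with work can be modeled with a handle that points to a node holding a std::function. ToyFlow, ToyTask, and ToyNode below are hypothetical plain C++17 types, not Taskflow's classes: empty() means the handle is not bound to any node, and has_work() means the bound node has a callable assigned.

```cpp
#include <functional>
#include <memory>
#include <utility>
#include <vector>

struct ToyNode {
  std::function<void()> work;  // an empty std::function means "no work yet"
};

class ToyTask {
 public:
  ToyTask() = default;  // not associated with any node
  explicit ToyTask(ToyNode* node) : node_(node) {}

  bool empty() const { return node_ == nullptr; }
  bool has_work() const { return node_ != nullptr && static_cast<bool>(node_->work); }

  // Assigns (or replaces) the callable of the associated node.
  ToyTask& work(std::function<void()> f) {
    node_->work = std::move(f);
    return *this;
  }

 private:
  ToyNode* node_ = nullptr;
};

class ToyFlow {
 public:
  ToyTask placeholder() {
    nodes_.push_back(std::make_unique<ToyNode>());
    return ToyTask(nodes_.back().get());
  }

  ToyTask emplace(std::function<void()> f) {
    ToyTask task = placeholder();
    task.work(std::move(f));
    return task;
  }

 private:
  // unique_ptr keeps node addresses stable while the vector grows
  std::vector<std::unique_ptr<ToyNode>> nodes_;
};
```

In this model emplace is simply placeholder followed by work, which matches how the placeholder example above assigns the callable later.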

Executor API

The class tf::Executor is used for executing one or multiple taskflow objects.

run/run_n/run_until

The run series are thread-safe, non-blocking calls to execute a taskflow. Multiple runs issued on the same taskflow are automatically serialized into a sequential chain of executions.

executor.run(taskflow);                 // runs a graph once
executor.run_n(taskflow, 5);            // runs a graph five times
executor.run_until(taskflow, my_pred);  // keeps running until my_pred returns true
executor.wait_for_all();                // blocks until all tasks finish

The first run finishes before the second run, and the second run finishes before the third run.
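The serialization of runs on the same taskflow can be sketched with futures in plain C++17. SerialRunner is a hypothetical class, not the Executor's implementation: each submit returns immediately, but the new run first waits on the future of the previous run, so the runs form a sequential chain.

```cpp
#include <future>
#include <mutex>
#include <utility>

class SerialRunner {
 public:
  // Non-blocking: schedules `graph` to run after every previously submitted run.
  template <typename F>
  std::shared_future<void> submit(F graph) {
    std::lock_guard<std::mutex> lock(m_);
    std::shared_future<void> previous = last_;
    last_ = std::async(std::launch::async,
                       [previous, graph = std::move(graph)]() mutable {
                         if (previous.valid()) previous.wait();  // keep the chain
                         graph();
                       })
                .share();
    return last_;
  }

  // Blocks until the most recent run, and therefore every earlier run, finishes.
  void wait_for_all() {
    std::shared_future<void> last;
    {
      std::lock_guard<std::mutex> lock(m_);
      last = last_;
    }
    if (last.valid()) last.wait();
  }

 private:
  std::mutex m_;
  std::shared_future<void> last_;
};
```

Even if an earlier run takes longer than a later one, the later run cannot start its work until the earlier one has finished, so the completion order equals the submission order.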

System Requirements

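To use the latest Taskflow, you only need a C++14 compiler. The snippets in this README that use structured bindings, such as auto [A, B, C, D], need C++17, which is why simple.cpp is compiled with -std=c++17.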

- GNU C++ Compiler at least v5.0 with -std=c++14

- Clang C++ Compiler at least v4.0 with -std=c++14

- Microsoft Visual Studio at least v15.7 (MSVC++ 19.14); see vcpkg guide

- AppleClang Xcode Version at least v8

- Nvidia CUDA Toolkit and Compiler (nvcc) at least v10.0 with -std=c++14

Taskflow works on Linux, Windows, and Mac OS X. See the C++ compiler support status.

Compile Unit Tests, Examples, and Benchmarks

Taskflow uses CMake to build examples and unit tests. We recommend using an out-of-source build.

~$ cmake --version  # must be 3.9 or higher
~$ mkdir build
~$ cd build
~$ cmake ../
~$ make && make test  # build, then run all unit tests

Examples

The folder examples/ contains several examples and is a great place to learn to use Taskflow.

| Example | Description |
|---|---|
| simple.cpp | uses basic task building blocks to create a trivial taskflow graph |
| visualization.cpp | inspects a taskflow through the dump method |
| parallel_for.cpp | parallelizes a for loop with an unbalanced workload |
| subflow.cpp | demonstrates how to create a subflow graph that spawns three dynamic tasks |
| run_variants.cpp | shows multiple ways to run a taskflow graph |
| composition.cpp | demonstrates the composable interface of taskflow |
| observer.cpp | demonstrates how to monitor the thread activities in scheduling and running tasks |
| condition.cpp | creates a conditional tasking graph with a feedback loop control flow |
| cuda/saxpy.cu | uses cudaFlow to create a saxpy (single-precision A·X Plus Y) task graph |
| cuda/matmul.cu | uses cudaFlow to create a matrix multiplication workload and compares it with a CPU baseline |

Benchmarks

Please visit benchmarks to learn how to compile the benchmarks.

Who is Using Taskflow?

Taskflow is being used in both industry and academic projects to scale up existing workloads that incorporate complex task dependencies.

- OpenTimer: A High-performance Timing Analysis Tool for Very Large Scale Integration (VLSI) Systems

- NovusCore: An emulating project for World of Warcraft (Wrath of the Lich King 3.3.5a 12340 client build)

- SA-PCB: Annealing-based Printed Circuit Board (PCB) Placement Tool

- LPMP: A C++ framework for developing scalable Lagrangian decomposition solvers for discrete optimization problems

- OpenPhySyn: A plugin-based physical synthesis optimization kit as part of the OpenRoad flow

- OSSIA: Open-source Software System for Interactive Applications

- deal.II: A C++ software library to support the creation of finite element code

- PyRepScan: A Git Repository Leaks Scanner Python Library written in C++

- MyDataModels: An online platform for self-service machine learning for small data

- revealtech.ai: Mobile application that provides focused, intelligent analytics on the edge

Contributors

Taskflow is actively developed by these contributors. We also appreciate the support of many organizations for our development.


License

Taskflow is licensed under the MIT License.