This is the training code for our paper "A Survey on Time-Series Pre-Trained Models", which has been accepted for publication in the IEEE Transactions on Knowledge and Data Engineering (TKDE-24).

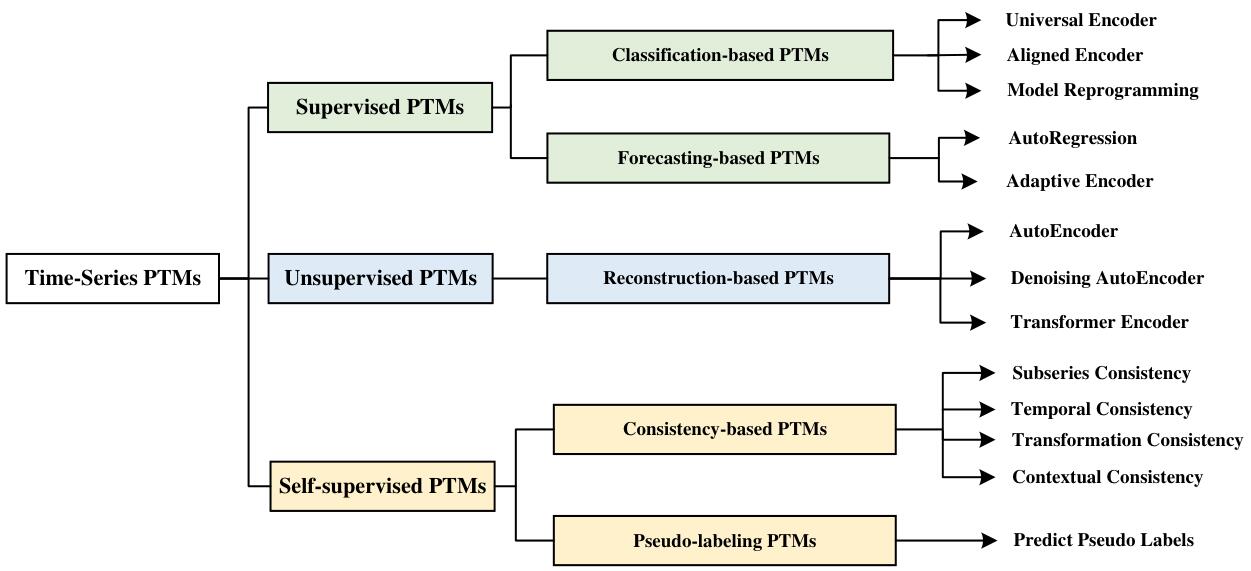

Time-Series Mining (TSM) is an important research area since it shows great potential in practical applications. Deep learning models that rely on massive labeled data have been utilized for TSM successfully. However, constructing a large-scale well-labeled dataset is difficult due to data annotation costs. Recently, pre-trained models have gradually attracted attention in the time series domain due to their remarkable performance in computer vision and natural language processing. In this survey, we provide a comprehensive review of Time-Series Pre-Trained Models (TS-PTMs), aiming to guide the understanding, application, and study of TS-PTMs. Specifically, we first briefly introduce the typical deep learning models employed in TSM. Then, we give an overview of TS-PTMs according to the pre-training techniques. The main categories we explore include supervised, unsupervised, and self-supervised TS-PTMs. Further, extensive experiments involving 27 methods, 434 datasets, and 679 transfer learning scenarios are conducted to analyze the advantages and disadvantages of transfer learning strategies, Transformer-based models, and representative TS-PTMs. Finally, we point out some potential directions of TS-PTMs for future work.

The datasets used in this project are as follows:

- 128 UCR datasets

- 30 UEA datasets

- SleepEEG dataset

- Epilepsy dataset

- FD-A and FD-B datasets

- HAR dataset

- Gesture dataset

- ECG dataset

- EMG dataset

- Yahoo dataset

- KPI dataset

- 250 UCR anomaly detection datasets

- MSL dataset

- SMAP dataset

- PSM dataset

- SMD dataset

- SWaT dataset

- NIPS-TS-SWAN dataset

- NIPS-TS-GECCO dataset

The time series classification methods in this project are as follows:

- FCN

- FCN Encoder+CNN Decoder

- FCN Encoder+RNN Decoder

- TCN

- Transformer

- TST

- T-Loss

- SelfTime

- TS-TCC

- TS2Vec

- TimesNet

- PatchTST

- GPT4TS

For details on the classification methods, please refer to ts_classification_methods/README.

For details on the forecasting methods, please refer to ts_forecating_methods/README.

The time series anomaly detection methods in this project are as follows:

- SPOT

- DSPOT

- LSTM-VAE

- DONUT

- Spectral Residual (SR)

- Anomaly Transformer (AT)

- TS2Vec

- TimesNet

- GPT4TS

- DCdetector

For details on the anomaly detection methods, please refer to ts_anomaly_detection_methods/README.

We thank the anonymous reviewers for their helpful feedback. We thank Professor Eamonn Keogh from UCR and all the people who have contributed to the UCR&UEA time series archives and other time series datasets. The authors would like to thank Professor Garrison W. Cottrell from UCSD, and Chuxin Chen, Xidi Cai, Yu Chen, and Peitian Ma from SCUT for the helpful suggestions.

If you use this code for your research, please cite our paper:

@article{ma2024survey,
  title={A survey on time-series pre-trained models},
  author={Ma, Qianli and Liu, Zhen and Zheng, Zhenjing and Huang, Ziyang and Zhu, Siying and Yu, Zhongzhong and Kwok, James T},
  journal={IEEE Transactions on Knowledge and Data Engineering},
  year={2024}
}