A professionally curated list of awesome resources (papers, code, data, etc.) on Transformers in Time Series, which is, to the best of our knowledge, the first work to comprehensively and systematically summarize the recent advances of Transformers for modeling time series data.

We will continue to update this list with the newest resources. If you find any missing resources (papers/code) or errors, please feel free to open an issue or make a pull request.

For general AI for Time Series (AI4TS) papers, tutorials, and surveys at the top AI conferences and journals, please check this repo.

For recent AI advances in general, including tutorials and surveys in various areas (DL, ML, DM, CV, NLP, Speech, etc.) at the top AI conferences and journals, please check this repo.

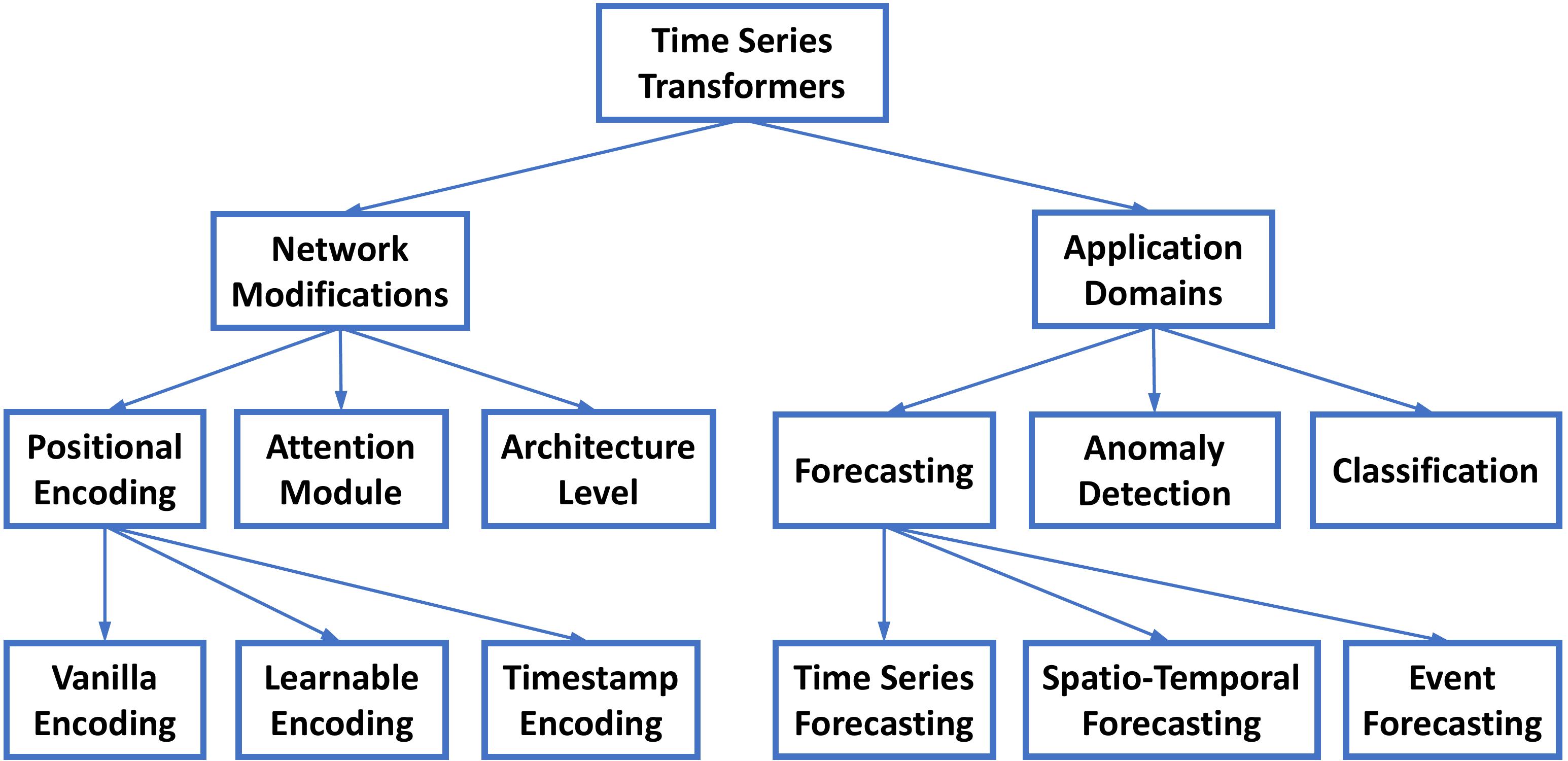

Transformers in Time Series: A Survey (IJCAI'23 Survey Track)

Qingsong Wen, Tian Zhou, Chaoli Zhang, Weiqi Chen, Ziqing Ma, Junchi Yan and Liang Sun.

@inproceedings{wen2023transformers,
  title={Transformers in time series: A survey},
  author={Wen, Qingsong and Zhou, Tian and Zhang, Chaoli and Chen, Weiqi and Ma, Ziqing and Yan, Junchi and Sun, Liang},
  booktitle={International Joint Conference on Artificial Intelligence (IJCAI)},
  year={2023}
}

- CARD: Channel Aligned Robust Blend Transformer for Time Series Forecasting, in ICLR 2024. [paper] [official code]

- Pathformer: Multi-scale Transformers with Adaptive Pathways for Time Series Forecasting, in ICLR 2024. [paper] [official code]

- GAFormer: Enhancing Timeseries Transformers Through Group-Aware Embeddings, in ICLR 2024. [paper]

- Transformer-Modulated Diffusion Models for Probabilistic Multivariate Time Series Forecasting, in ICLR 2024. [paper]

- iTransformer: Inverted Transformers Are Effective for Time Series Forecasting, in ICLR 2024. [paper]

- Considering Nonstationary within Multivariate Time Series with Variational Hierarchical Transformer for Forecasting, in AAAI 2024. [paper]

- Latent Diffusion Transformer for Probabilistic Time Series Forecasting, in AAAI 2024. [paper]

- BasisFormer: Attention-based Time Series Forecasting with Learnable and Interpretable Basis, in NeurIPS 2023. [paper]

- ContiFormer: Continuous-Time Transformer for Irregular Time Series Modeling, in NeurIPS 2023. [paper]

- A Time Series is Worth 64 Words: Long-term Forecasting with Transformers, in ICLR 2023. [paper] [code]

- Crossformer: Transformer Utilizing Cross-Dimension Dependency for Multivariate Time Series Forecasting, in ICLR 2023. [paper]

- Scaleformer: Iterative Multi-scale Refining Transformers for Time Series Forecasting, in ICLR 2023. [paper]

- Non-stationary Transformers: Rethinking the Stationarity in Time Series Forecasting, in NeurIPS 2022. [paper]

- Learning to Rotate: Quaternion Transformer for Complicated Periodical Time Series Forecasting, in KDD 2022. [paper]

- FEDformer: Frequency Enhanced Decomposed Transformer for Long-term Series Forecasting, in ICML 2022. [paper] [official code]

- TACTiS: Transformer-Attentional Copulas for Time Series, in ICML 2022. [paper]

- Pyraformer: Low-Complexity Pyramidal Attention for Long-Range Time Series Modeling and Forecasting, in ICLR 2022. [paper] [official code]

- Autoformer: Decomposition transformers with auto-correlation for long-term series forecasting, in NeurIPS 2021. [paper] [official code]

- Informer: Beyond efficient transformer for long sequence time-series forecasting, in AAAI 2021. [paper] [official code] [dataset]

- Temporal fusion transformers for interpretable multi-horizon time series forecasting, in International Journal of Forecasting 2021. [paper] [code]

- Probabilistic Transformer For Time Series Analysis, in NeurIPS 2021. [paper]

- Deep Transformer Models for Time Series Forecasting: The Influenza Prevalence Case, in arXiv 2020. [paper]

- Adversarial sparse transformer for time series forecasting, in NeurIPS 2020. [paper] [code]

- Enhancing the locality and breaking the memory bottleneck of transformer on time series forecasting, in NeurIPS 2019. [paper] [code]

- SSDNet: State Space Decomposition Neural Network for Time Series Forecasting, in ICDM 2021. [paper]

- From Known to Unknown: Knowledge-guided Transformer for Time-Series Sales Forecasting in Alibaba, in arXiv 2021. [paper]

- TCCT: Tightly-coupled convolutional transformer on time series forecasting, in Neurocomputing 2022. [paper]

- Triformer: Triangular, Variable-Specific Attentions for Long Sequence Multivariate Time Series Forecasting, in IJCAI 2022. [paper]

- AirFormer: Predicting Nationwide Air Quality in China with Transformers, in AAAI 2023. [paper] [official code]

- Earthformer: Exploring Space-Time Transformers for Earth System Forecasting, in NeurIPS 2022. [paper] [official code]

- Bidirectional Spatial-Temporal Adaptive Transformer for Urban Traffic Flow Forecasting, in TNNLS 2022. [paper]

- Spatio-temporal graph transformer networks for pedestrian trajectory prediction, in ECCV 2020. [paper] [official code]

- Spatial-temporal transformer networks for traffic flow forecasting, in arXiv 2020. [paper] [official code]

- Traffic transformer: Capturing the continuity and periodicity of time series for traffic forecasting, in Transactions in GIS 2022. [paper]

- Time Series as Images: Vision Transformer for Irregularly Sampled Time Series, in NeurIPS 2023. [paper]

- ContiFormer: Continuous-Time Transformer for Irregular Time Series Modeling, in NeurIPS 2023. [paper]

- HYPRO: A Hybridly Normalized Probabilistic Model for Long-Horizon Prediction of Event Sequences, in NeurIPS 2022. [paper] [official code]

- Transformer Embeddings of Irregularly Spaced Events and Their Participants, in ICLR 2022. [paper] [official code]

- Self-attentive Hawkes process, in ICML 2020. [paper] [official code]

- Transformer Hawkes process, in ICML 2020. [paper] [official code]

- MEMTO: Memory-guided Transformer for Multivariate Time Series Anomaly Detection, in NeurIPS 2023. [paper]

- CAT: Beyond Efficient Transformer for Content-Aware Anomaly Detection in Event Sequences, in KDD 2022. [paper] [official code]

- DCT-GAN: Dilated Convolutional Transformer-based GAN for Time Series Anomaly Detection, in TKDE 2022. [paper]

- Concept Drift Adaptation for Time Series Anomaly Detection via Transformer, in Neural Processing Letters 2022. [paper]

- Anomaly Transformer: Time Series Anomaly Detection with Association Discrepancy, in ICLR 2022. [paper] [official code]

- TranAD: Deep Transformer Networks for Anomaly Detection in Multivariate Time Series Data, in VLDB 2022. [paper] [official code]

- Learning graph structures with transformer for multivariate time series anomaly detection in IoT, in IEEE Internet of Things Journal 2021. [paper] [official code]

- Spacecraft Anomaly Detection via Transformer Reconstruction Error, in ICASSE 2019. [paper]

- Unsupervised Anomaly Detection in Multivariate Time Series through Transformer-based Variational Autoencoder, in CCDC 2021. [paper]

- Variational Transformer-based anomaly detection approach for multivariate time series, in Measurement 2022. [paper]

- Time Series as Images: Vision Transformer for Irregularly Sampled Time Series, in NeurIPS 2023. [paper]

- TrajFormer: Efficient Trajectory Classification with Transformers, in CIKM 2022. [paper]

- TARNet: Task-Aware Reconstruction for Time-Series Transformer, in KDD 2022. [paper] [official code]

- A transformer-based framework for multivariate time series representation learning, in KDD 2021. [paper] [official code]

- Voice2series: Reprogramming acoustic models for time series classification, in ICML 2021. [paper] [official code]

- Gated Transformer Networks for Multivariate Time Series Classification, in arXiv 2021. [paper] [official code]

- Self-attention for raw optical satellite time series classification, in ISPRS Journal of Photogrammetry and Remote Sensing 2020. [paper] [official code]

- Self-supervised pretraining of transformers for satellite image time series classification, in IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing 2020. [paper]

- Self-Supervised Transformer for Sparse and Irregularly Sampled Multivariate Clinical Time-Series, in ACM TKDD 2022. [paper] [official code]

- What Can Large Language Models Tell Us about Time Series Analysis, in arXiv 2024. [paper]

- Large Models for Time Series and Spatio-Temporal Data: A Survey and Outlook, in arXiv 2023. [paper] [Website]

- Deep Learning for Multivariate Time Series Imputation: A Survey, in arXiv 2024. [paper] [Website]

- Self-Supervised Learning for Time Series Analysis: Taxonomy, Progress, and Prospects, in arXiv 2023. [paper] [Website]

- A Survey on Graph Neural Networks for Time Series: Forecasting, Classification, Imputation, and Anomaly Detection, in arXiv 2023. [paper] [Website]

- Time series data augmentation for deep learning: a survey, in IJCAI 2021. [paper]

- Neural temporal point processes: a review, in IJCAI 2021. [paper]

- Time-series forecasting with deep learning: a survey, in Philosophical Transactions of the Royal Society A 2021. [paper]

- Deep learning for time series forecasting: a survey, in Big Data 2021. [paper]

- Neural forecasting: Introduction and literature overview, in arXiv 2020. [paper]

- Deep learning for anomaly detection in time-series data: review, analysis, and guidelines, in IEEE Access 2021. [paper]

- A review on outlier/anomaly detection in time series data, in ACM Computing Surveys 2021. [paper]

- A unifying review of deep and shallow anomaly detection, in Proceedings of the IEEE 2021. [paper]

- Deep learning for time series classification: a review, in Data Mining and Knowledge Discovery 2019. [paper]

- More related time series surveys, tutorials, and papers can be found at this repo.

- Everything You Need to Know about Transformers: Architectures, Optimization, Applications, and Interpretation, in AAAI Tutorial 2023. [link]

- Transformer Architectures for Multimodal Signal Processing and Decision Making, in ICASSP Tutorial 2022. [link]

- Efficient transformers: A survey, in ACM Computing Surveys 2022. [paper] [paper]

- A survey on visual transformer, in IEEE TPAMI 2022. [paper]

- A General Survey on Attention Mechanisms in Deep Learning, in IEEE TKDE 2022. [paper]

- Attention, please! A survey of neural attention models in deep learning, in Artificial Intelligence Review 2022. [paper]

- Attention mechanisms in computer vision: A survey, in Computational Visual Media 2022. [paper]

- Survey: Transformer based video-language pre-training, in AI Open 2022. [paper]

- Transformers in vision: A survey, in ACM Computing Surveys 2021. [paper]

- Pre-trained models: Past, present and future, in AI Open 2021. [paper]

- An attentive survey of attention models, in ACM TIST 2021. [paper]

- Attention in natural language processing, in IEEE TNNLS 2020. [paper]

- Pre-trained models for natural language processing: A survey, in Science China Technological Sciences 2020. [paper]

- A review on the attention mechanism of deep learning, in Neurocomputing 2021. [paper]

- A Survey of Transformers, in arXiv 2021. [paper]

- A Survey of Vision-Language Pre-Trained Models, in arXiv 2022. [paper]

- Video Transformers: A Survey, in arXiv 2022. [paper]

- Transformer for Graphs: An Overview from Architecture Perspective, in arXiv 2022. [paper]

- Transformers in Medical Imaging: A Survey, in arXiv 2022. [paper]

- A Survey of Controllable Text Generation using Transformer-based Pre-trained Language Models, in arXiv 2022. [paper]