Qiucheng Wu¹, Yujian Liu¹, Handong Zhao², Ajinkya Kale², Trung Bui², Tong Yu², Zhe Lin², Yang Zhang³, Shiyu Chang¹

¹UC, Santa Barbara, ²Adobe Research, ³MIT-IBM Watson AI Lab

This is the official implementation of the paper "Uncovering the Disentanglement Capability in Text-to-Image Diffusion Models".

Generative models have been widely studied in computer vision. Recently, diffusion models have drawn substantial attention due to the high quality of their generated images. A key desired property of image generative models is the ability to disentangle different attributes, which should enable modification towards a style without changing the semantic content, and the modification parameters should generalize to different images. Previous studies have found that generative adversarial networks (GANs) are inherently endowed with such disentanglement capability, so they can perform disentangled image editing without re-training or fine-tuning the network. In this work, we explore whether diffusion models are also inherently equipped with such a capability. Our finding is that for stable diffusion models, by partially changing the input text embedding from a neutral description (e.g., "a photo of person") to one with style (e.g., "a photo of person with smile") while fixing all the Gaussian random noises introduced during the denoising process, the generated images can be modified towards the target style without changing the semantic content. Based on this finding, we further propose a simple, light-weight image editing algorithm where the mixing weights of the two text embeddings are optimized for style matching and content preservation. This entire process only involves optimizing over around 50 parameters and does not fine-tune the diffusion model itself. Experiments show that the proposed method can modify a wide range of attributes, with the performance outperforming diffusion-model-based image-editing algorithms that require fine-tuning. The optimized weights generalize well to different images.
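The core idea above, blending a neutral and a styled text embedding with one weight per denoising step while reusing the same Gaussian noise, can be illustrated with a minimal sketch. This is not the repository's implementation: the function names, the toy 4-dimensional vectors, and the convex-combination form of the blend are assumptions made for illustration, and the optimization of the weights for style matching and content preservation is not shown.

```python
import random

NUM_STEPS = 50  # assumption: one mixing weight per denoising step ("around 50 parameters")


def mix_embeddings(c_neutral, c_target, lam):
    """Blend two embeddings as (1 - lam) * neutral + lam * target."""
    if len(c_neutral) != len(c_target):
        raise ValueError("embeddings must have the same length")
    return [(1.0 - lam) * a + lam * b for a, b in zip(c_neutral, c_target)]


def embedding_schedule(c_neutral, c_target, lambdas):
    """Return one blended embedding per denoising step."""
    return [mix_embeddings(c_neutral, c_target, lam) for lam in lambdas]


def fixed_noise(seed, num_steps, dim):
    """Draw the per-step Gaussian noise once so that every run can reuse it."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_steps)]


# Toy stand-ins for the text embeddings of the two prompts (hypothetical values).
c_neutral = [0.0, 1.0, 2.0, 3.0]  # e.g. "a photo of person"
c_target = [4.0, 1.0, 0.0, 3.0]   # e.g. "a photo of person with smile"

# In the method these weights are what gets optimized; here they are fixed by hand.
lambdas = [0.5] * NUM_STEPS
schedule = embedding_schedule(c_neutral, c_target, lambdas)

# The same seed yields the same noise, so only the embedding differs between runs.
noise_a = fixed_noise(42, NUM_STEPS, len(c_neutral))
noise_b = fixed_noise(42, NUM_STEPS, len(c_neutral))
```

Setting every weight to 0 recovers the neutral embedding and setting every weight to 1 recovers the target embedding. Intermediate values are what make the change partial.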

Here, we demonstrate an example of disentangling the target attribute "children drawing".

Our code is based on stable-diffusion. This project requires one GPU with 48GB memory. Please first clone the repository and build the environment:

git clone https://github.com/wuqiuche/DiffusionDisentanglement

cd DiffusionDisentanglement

conda env create -f environment.yaml

conda activate ldm

You will also need to download the pretrained stable-diffusion model:

mkdir models/ldm/stable-diffusion-v1

wget -O models/ldm/stable-diffusion-v1/model.ckpt https://huggingface.co/CompVis/stable-diffusion-v-1-4-original/resolve/main/sd-v1-4.ckpt

To disentangle a target attribute, run:

python scripts/disentangle.py --c1 <neutral_prompt> --c2 <target_prompt> --seed 42 --outdir <output_dir>

We provide a bash file with a disentangling example:

chmod +x scripts/disentangle.sh

./scripts/disentangle.sh

You should obtain the following results (right one) in outputs/disentangle/image/:

To edit an input image, run:

python scripts/edit.py --c1 <neutral_prompt> --c2 <target_prompt> --seed 42 --input <input_image> --outdir <output_dir>

We provide a bash file with an image editing example:

chmod +x scripts/edit.sh

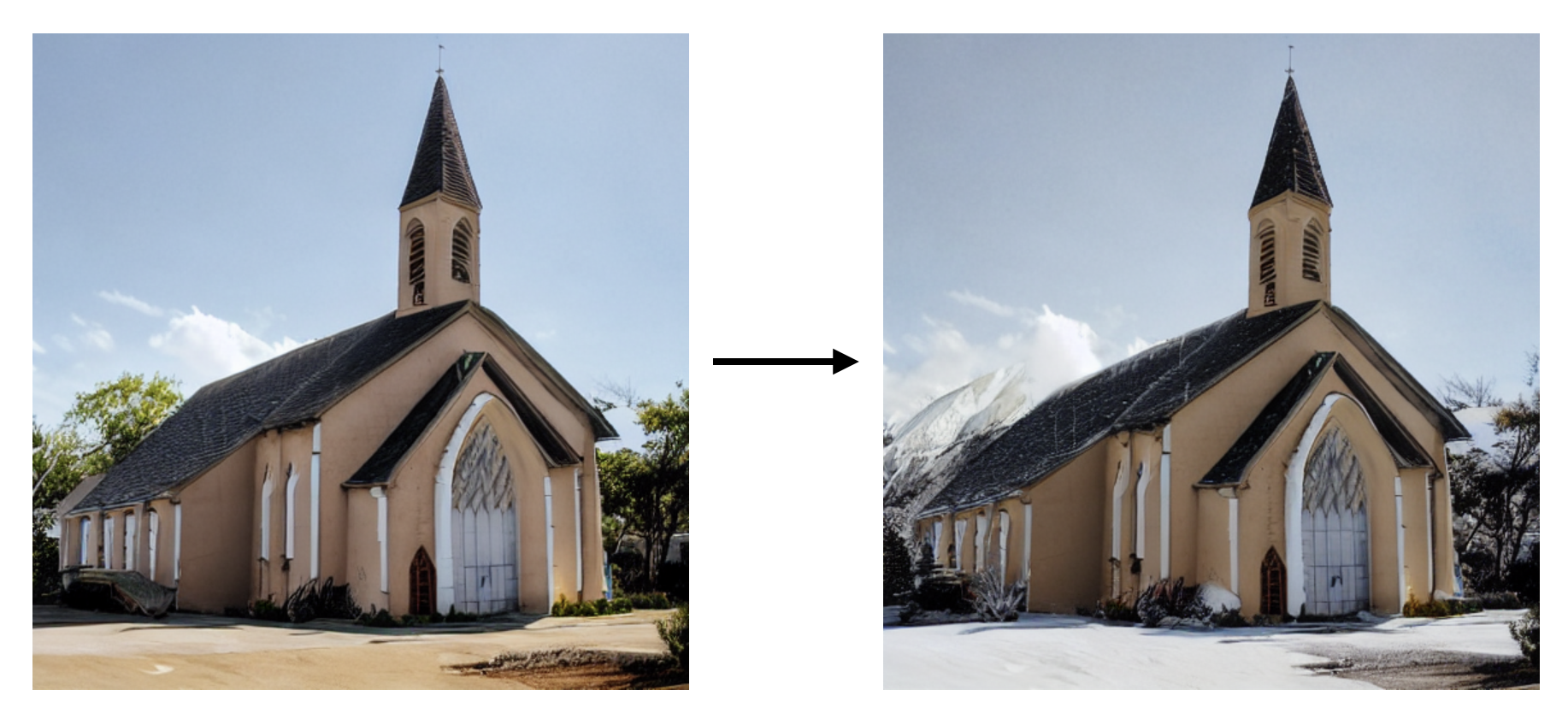

./scripts/edit.sh

You should obtain the following results (right one) in outputs/edit/image/:

To replicate the results in our paper, we provide a bash file with the commands used. You can run them all at once, or choose only the target attributes you are interested in.

chmod +x scripts/result.sh

./scripts/result.sh

Our method is able to disentangle a series of global and local attributes. We demonstrate examples below. The high-resolution images can be found in the examples directory.
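If you want to try several prompt pairs of your own rather than the provided bash files, the command lines shown earlier can be assembled programmatically. The sketch below only uses the flags that appear in this README (--c1, --c2, --seed, --input, --outdir). The helper name build_command, the example prompt pair, and the output directory names are hypothetical.

```python
import shlex


def build_command(script, neutral_prompt, target_prompt, outdir, seed=42, input_image=None):
    """Assemble the argument list for scripts/disentangle.py or scripts/edit.py."""
    cmd = ["python", script, "--c1", neutral_prompt, "--c2", target_prompt, "--seed", str(seed)]
    if input_image is not None:
        # Only scripts/edit.py is shown taking an input image in this README.
        cmd += ["--input", input_image]
    cmd += ["--outdir", outdir]
    return cmd


# Hypothetical prompt pairs; replace them with the attributes you are interested in.
pairs = [
    ("a photo of person", "a photo of person with smile"),
]

for i, (neutral, target) in enumerate(pairs):
    cmd = build_command("scripts/disentangle.py", neutral, target, f"outputs/run_{i}")
    # Print a shell-safe command line; prompts containing spaces are quoted.
    print(shlex.join(cmd))
```

To actually launch a run instead of printing it, pass the list to subprocess.run(cmd, check=True) from the repository root with the ldm environment active.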

This code is adapted from https://github.com/CompVis/stable-diffusion and https://github.com/orpatashnik/StyleCLIP.