This is the original TensorFlow implementation of the ACL 2019 paper Simple and Effective Text Matching with Richer Alignment Features. A PyTorch implementation is available at https://github.com/alibaba-edu/simple-effective-text-matching-pytorch.

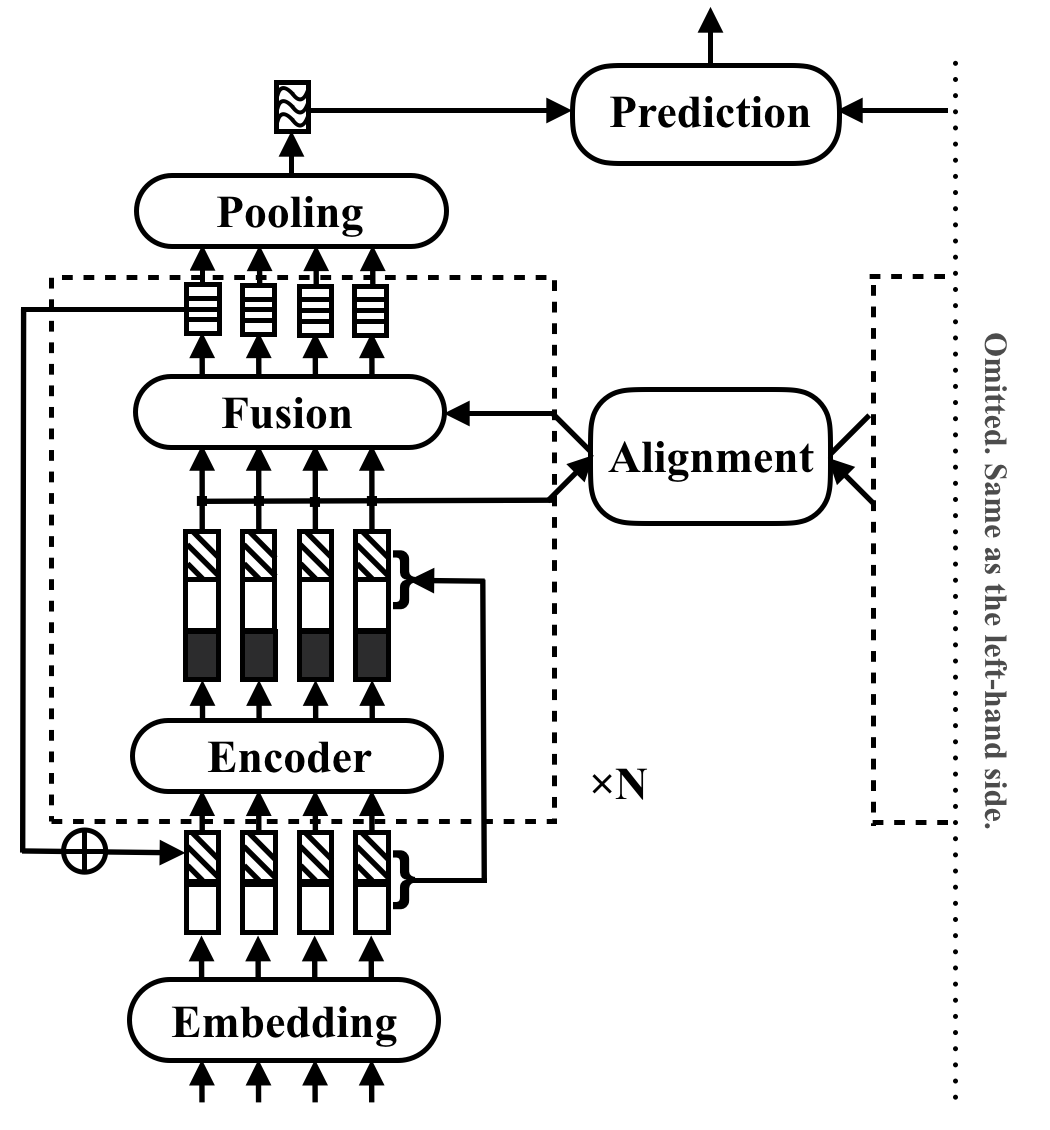

RE2 is a fast and strong neural architecture for general-purpose text matching applications. In a text matching task, a model takes two text sequences as input and predicts their relationship. This method aims to explore what is sufficient for strong performance in these tasks. It simplifies or omits many slow components that were previously considered core building blocks in text matching. It achieves its performance with a simple idea, namely keeping three key features directly available for inter-sequence alignment and fusion: previous aligned features (Residual vectors), original point-wise features (Embedding vectors), and contextual features (Encoder output).
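To make the idea concrete, the following is a minimal, illustrative sketch of alignment over concatenated features, written in plain Python. It is not the repository's TensorFlow code: the function names (`concat_features`, `align`), the toy values and dimensions, and the use of a plain dot-product attention without learned projections are all assumptions made for illustration.

```python
import math

def concat_features(embedding, residual, encoded):
    """Join the three per-token feature vectors into one vector per token."""
    return [e + r + c for e, r, c in zip(embedding, residual, encoded)]

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [x / total for x in exps]

def align(a, b):
    """For each token vector in `a`, return an attention-weighted average of `b`.

    Assumption: similarity is an unparameterized dot product.
    """
    dim = len(b[0])
    aligned = []
    for a_i in a:
        weights = softmax([sum(x * y for x, y in zip(a_i, b_j)) for b_j in b])
        aligned.append([sum(w * b_j[k] for w, b_j in zip(weights, b)) for k in range(dim)])
    return aligned

# Toy inputs (hypothetical values): 2 tokens in sequence a, 3 tokens in sequence b.
a = concat_features(
    embedding=[[1.0, 0.0], [0.0, 1.0]],
    residual=[[0.5], [0.2]],
    encoded=[[0.1, 0.3], [0.4, 0.0]],
)
b = concat_features(
    embedding=[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    residual=[[0.0], [0.3], [0.1]],
    encoded=[[0.2, 0.2], [0.0, 0.5], [0.3, 0.1]],
)
a_aligned = align(a, b)  # one aligned vector per token of a
```

Because all three feature types sit in the same vector, the alignment step in this sketch sees each of them directly. In the full model, the aligned vectors would then be passed on to a fusion step.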

RE2 achieves performance on par with the state of the art on four benchmark datasets (SNLI, SciTail, Quora and WikiQA) across the tasks of natural language inference, paraphrase identification and answer selection, with no or few task-specific adaptations. Its inference is at least 6 times faster than that of similarly performing models.

The following table lists the main experimental results. The paper reports the average and standard deviation of 10 runs, and the results can be easily reproduced. Inference time (in seconds) is measured by processing a batch of 8 pairs of length 20 on Intel i7 CPUs. The computation time of the POS features used by CSRAN and DIIN is not included.

| Model | SNLI | SciTail | Quora | WikiQA | Inference Time (s) |
|---|---|---|---|---|---|
| BiMPM | 86.9 | - | 88.2 | 0.731 | 0.05 |
| ESIM | 88.0 | 70.6 | - | - | - |
| DIIN | 88.0 | - | 89.1 | - | 1.79 |
| CSRAN | 88.7 | 86.7 | 89.2 | - | 0.28 |
| RE2 | 88.9±0.1 | 86.0±0.6 | 89.2±0.2 | 0.7618±0.0040 | 0.03~0.05 |

Refer to the paper for more details of the components and experiment results.
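The RE2 row reports each score as mean ± standard deviation over repeated runs. A minimal sketch of that aggregation is shown below. The helper name `summarize` and the input scores are hypothetical, and the use of the sample standard deviation is an assumption, since this README does not say which estimator the paper uses.

```python
import statistics

def summarize(scores, digits=1):
    """Format per-run scores as 'mean±std'.

    Assumption: sample standard deviation (n - 1 in the denominator).
    """
    mean = statistics.mean(scores)
    std = statistics.stdev(scores)
    return f"{mean:.{digits}f}±{std:.{digits}f}"

# Hypothetical scores from three runs, not real results.
example_runs = [0.70, 0.72, 0.74]
print(summarize(example_runs, digits=2))
```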

- Install Python >= 3.6 and pip
- `pip install -r requirements.txt`
- Install TensorFlow 1.4 or above (the wheel file for the TensorFlow 1.4 GPU version under Python 3.6 can be found here)
- Download GloVe word vectors (glove.840B.300d) to `resources/`
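GloVe vectors are distributed as a text file in which each line holds a token followed by its vector components, separated by spaces. The sketch below parses one such line. The function name `parse_glove_line` is hypothetical and the code is not taken from this repository. It splits from the right on the assumption that a token may itself contain spaces, which would break a naive left-to-right split.

```python
def parse_glove_line(line, dim=300):
    """Split one line of a GloVe text file into (token, vector)."""
    # Split from the right so that only the last `dim` fields are treated as numbers.
    parts = line.rstrip("\n").rsplit(" ", dim)
    if len(parts) != dim + 1:
        raise ValueError(f"expected a token followed by {dim} values")
    token, values = parts[0], parts[1:]
    return token, [float(v) for v in values]
```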

Data used in the paper are prepared as follows:

- Download and unzip SNLI (pre-processed by Tay et al.) to `data/orig`.
  - Unzip all zip files in the "data/orig/SNLI" folder. (`cd data/orig/SNLI && gunzip *.gz`)
  - `cd data && python prepare_snli.py`
- Download and unzip SciTail dataset to `data/orig`.
  - `cd data && python prepare_scitail.py`
- Download and unzip Quora dataset (pre-processed by Wang et al.) to `data/orig`.
  - `cd data && python prepare_quora.py`
- Download and unzip WikiQA to `data/orig`.
  - `cd data && python prepare_wikiqa.py`
  - Download and unzip evaluation scripts. Use the `make` command to compile the source files in `qg-emnlp07-data/eval/trec_eval-8.0`. Move the binary file "trec_eval" to `resources/`.
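Before running the preparation scripts, it can help to confirm that the expected folders and files are in place. The sketch below is a hypothetical helper, not part of this repository. The paths it checks are the ones named in the setup and data preparation steps, and the function name `missing_paths` is an assumption.

```python
import os

# Paths named in the setup and data preparation steps.
EXPECTED_PATHS = [
    "resources",
    "data/orig",
    "data/orig/SNLI",
    "resources/trec_eval",
]

def missing_paths(root, expected=EXPECTED_PATHS):
    """Return the expected paths that do not exist under `root`."""
    return [p for p in expected if not os.path.exists(os.path.join(root, p))]
```

Calling `missing_paths(".")` from the repository root would return an empty list once every listed path exists.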

To train a new text matching model, run the following command:

    python train.py $config_file.json5

Example configuration files are provided in `configs/`:

- `configs/main.json5`: replicate the main experiment result in the paper.
- `configs/robustness.json5`: robustness checks
- `configs/ablation.json5`: ablation study
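For running several of these configuration files in a row, a small wrapper around the training command may be convenient. The following is a sketch only: `build_command` and `run_all` are hypothetical helpers, not part of this repository, and they assume the script is started from the repository root. The `--dry` flag is the configuration check option of `train.py` mentioned in this README.

```python
import subprocess
import sys

EXAMPLE_CONFIGS = [
    "configs/main.json5",
    "configs/robustness.json5",
    "configs/ablation.json5",
]

def build_command(config_path, dry=False):
    """Build the argv list for one training run."""
    command = [sys.executable, "train.py", config_path]
    if dry:
        command.append("--dry")  # only check the configuration
    return command

def run_all(config_paths, dry=False):
    """Run the configurations one after another, stopping at the first failure."""
    for config_path in config_paths:
        subprocess.run(build_command(config_path, dry=dry), check=True)
```

Calling `run_all(EXAMPLE_CONFIGS, dry=True)` would check all three example configurations without training.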

The instructions to write your own configuration files:

    [
        {
            name: 'exp1', // name of your experiment, can be the same across different data
            __parents__: [
                'default', // always put the default on top
                'data/quora', // data specific configurations in `configs/data`
                // 'debug', // use "debug" to quickly debug your code
            ],
            __repeat__: 5, // how many repetitions you want
            blocks: 3, // other configurations for this experiment
        },
        // multiple configurations are executed sequentially
        {
            name: 'exp2', // results under the same name will be overwritten
            __parents__: [
                'default',
                'data/quora',
            ],
            __repeat__: 5,
            blocks: 4,
        }
    ]

To check the configurations only, use

    python train.py $config_file.json5 --dry

Please cite the ACL paper if you use RE2 in your work:

    @inproceedings{yang2019simple,
      title={Simple and Effective Text Matching with Richer Alignment Features},
      author={Yang, Runqi and Zhang, Jianhai and Gao, Xing and Ji, Feng and Chen, Haiqing},
      booktitle={Association for Computational Linguistics (ACL)},
      year={2019}
    }

RE2 is under Apache License 2.0.