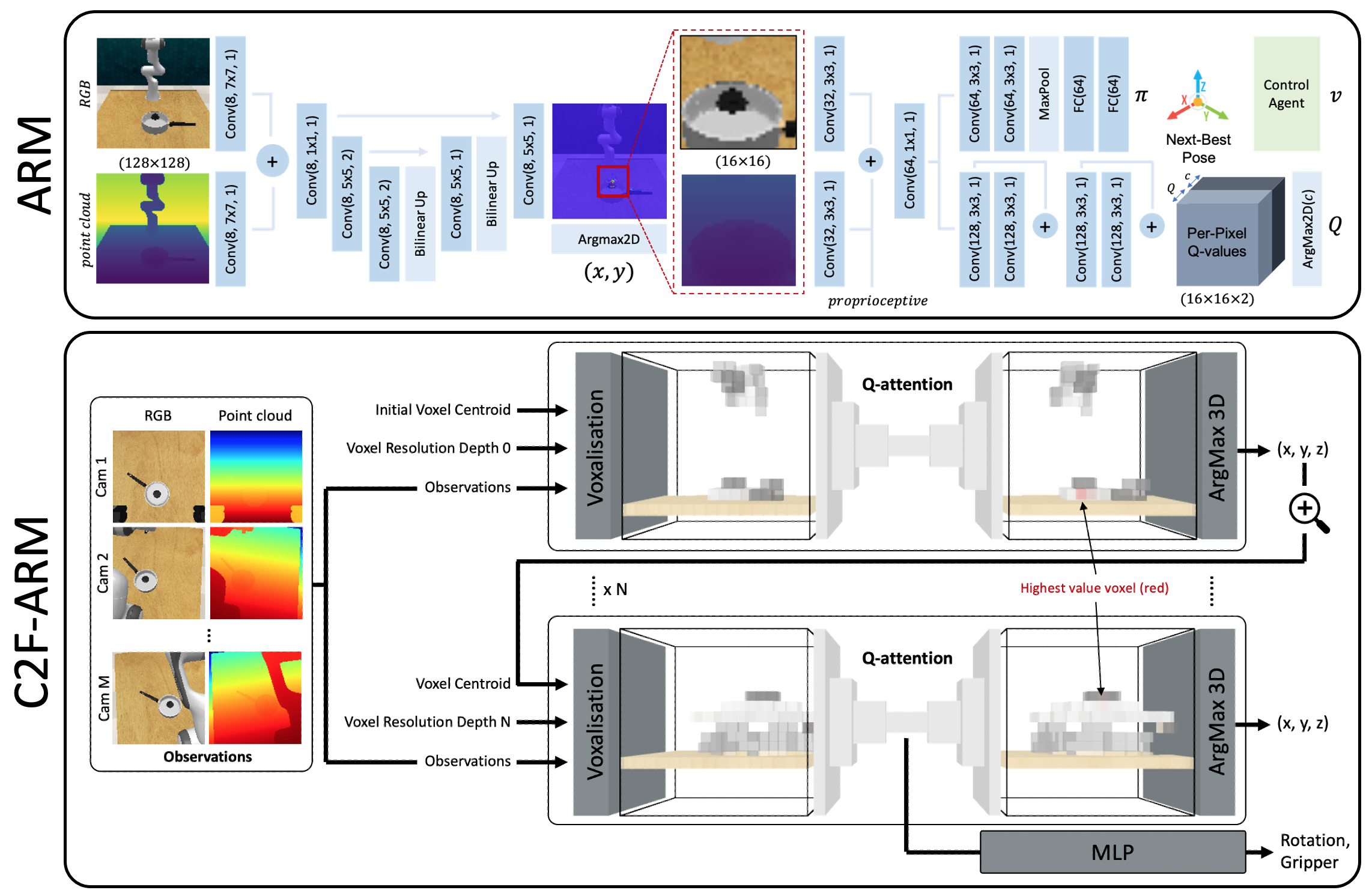

Codebase for Q-attention (within the ARM system) and coarse-to-fine Q-attention (within the C2F-ARM system) from the following papers:

- Q-attention: Enabling Efficient Learning for Vision-based Robotic Manipulation (ARM system)

- Coarse-to-Fine Q-attention: Efficient Learning for Visual Robotic Manipulation via Discretisation (C2F-ARM system)

Note that C2F-ARM is the better-performing system.

ARM is trained using the YARR framework. Head to the YARR GitHub page and follow the installation instructions.

ARM is evaluated on RLBench 1.1.0. Head to the RLBench GitHub page and follow the installation instructions.

Now install project requirements:

pip install -r requirements.txt

Be sure to have RLBench demos saved on your machine before proceeding. To generate demos for a task, go to the tools directory in RLBench (rlbench/tools), and run:

python dataset_generator.py --save_path=/mnt/my/save/dir --tasks=take_lid_off_saucepan --image_size=128,128 \
--renderer=opengl --episodes_per_task=100 --variations=1 --processes=1

Experiments are launched via Hydra. To start training C2F-ARM on the take_lid_off_saucepan task with the default parameters on GPU 0:

python launch.py method=C2FARM rlbench.task=take_lid_off_saucepan rlbench.demo_path=/mnt/my/save/dir framework.gpu=0
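
If you work with more than one task, it can help to script the two steps. The following is a minimal sketch, not part of this repository: the helper names (`generator_cmd`, `launch_cmd`, `has_demos`) are hypothetical, the flags and Hydra overrides are only the ones shown in the commands in this README, and the check assumes the generator writes each task into a sub-directory of the save path named after the task. It prints the commands rather than running them.

```python
import shlex
from pathlib import Path

DEMO_ROOT = Path("/mnt/my/save/dir")  # same placeholder path as in the commands above
TASKS = ["take_lid_off_saucepan"]     # extend with other RLBench task names


def generator_cmd(task, save_path, episodes=100):
    """Build the dataset_generator.py command (run it from rlbench/tools)."""
    return [
        "python", "dataset_generator.py",
        f"--save_path={save_path}",
        f"--tasks={task}",
        "--image_size=128,128",
        "--renderer=opengl",
        f"--episodes_per_task={episodes}",
        "--variations=1",
        "--processes=1",
    ]


def launch_cmd(task, demo_path, gpu=0, method="C2FARM"):
    """Build the launch.py command with the Hydra overrides shown above."""
    return [
        "python", "launch.py",
        f"method={method}",
        f"rlbench.task={task}",
        f"rlbench.demo_path={demo_path}",
        f"framework.gpu={gpu}",
    ]


def has_demos(task, demo_path):
    # Assumption: demos for a task live in <demo_path>/<task>/ and the
    # directory is non-empty once generation has finished.
    task_dir = Path(demo_path) / task
    return task_dir.is_dir() and any(task_dir.iterdir())


if __name__ == "__main__":
    for task in TASKS:
        if not has_demos(task, DEMO_ROOT):
            print("# demos missing, generate them first (from rlbench/tools):")
            print(shlex.join(generator_cmd(task, DEMO_ROOT)))
        print(shlex.join(launch_cmd(task, DEMO_ROOT)))
```

Printing the commands keeps the sketch side-effect free; to execute them, pass the lists to `subprocess.run(cmd, check=True)` from the appropriate working directory.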