This is a PyTorch implementation of InfoGAN.

This repository has the following features that other implementations lack:

- Highly customizable
  - You can use it with your own dataset and settings.
  - Most parameters, including the latent variable design, can be customized by editing the YAML config file.
- Reasonably clean, structured code
  - This is purely my personal point of view. 😉
- TensorBoard is available by default.

Results on MNIST:

- Latent variable design
  - z ~ N(0, 1), 64 dimensions
  - c1 ~ Cat(K=10, p=0.1)
  - c2, c3, c4 ~ N(0, 1)
- Batch size: 300, epochs: 500
- Config file: `mnist.yaml`
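In InfoGAN the generator input is the noise vector concatenated with the latent codes. The following is a framework-free sketch of drawing one batch under the MNIST settings above, assuming the layout `[z | one-hot(c1) | c2, c3, c4]` (the repository's actual layout may differ). In the training code these would be PyTorch tensors rather than Python lists; the function names are hypothetical.

```python
import random
from typing import List

# Sizes from the MNIST settings in this README.
Z_DIM = 64         # noise z ~ N(0, 1)
N_CATEGORIES = 10  # c1 ~ Cat(K=10, p=0.1)
N_CONTINUOUS = 3   # c2, c3, c4 ~ N(0, 1)


def sample_latent(rng: random.Random) -> List[float]:
    """Draw one generator input laid out as [z | one-hot(c1) | c2, c3, c4].

    The layout is an assumption for illustration.
    """
    z = [rng.gauss(0.0, 1.0) for _ in range(Z_DIM)]
    # Uniform categorical: each of the K=10 classes has probability 0.1.
    category = rng.randrange(N_CATEGORIES)
    c1 = [1.0 if k == category else 0.0 for k in range(N_CATEGORIES)]
    c_cont = [rng.gauss(0.0, 1.0) for _ in range(N_CONTINUOUS)]
    return z + c1 + c_cont


def sample_batch(batch_size: int, seed: int = 0) -> List[List[float]]:
    """Draw `batch_size` latent vectors reproducibly from `seed`."""
    rng = random.Random(seed)
    return [sample_latent(rng) for _ in range(batch_size)]


batch = sample_batch(300)  # batch size used for MNIST
print(len(batch), len(batch[0]))  # 300 77
```

Each vector has 64 + 10 + 3 = 77 entries. Only c1 is discrete, so it is the only code encoded as one-hot.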

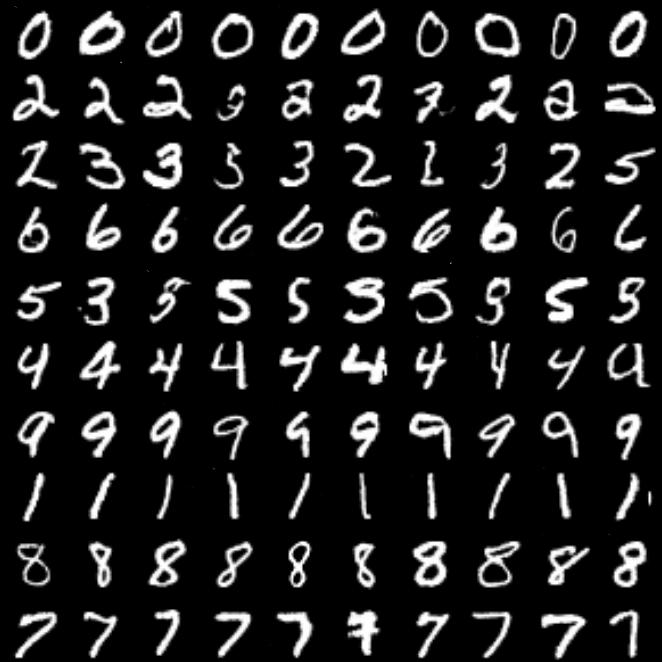

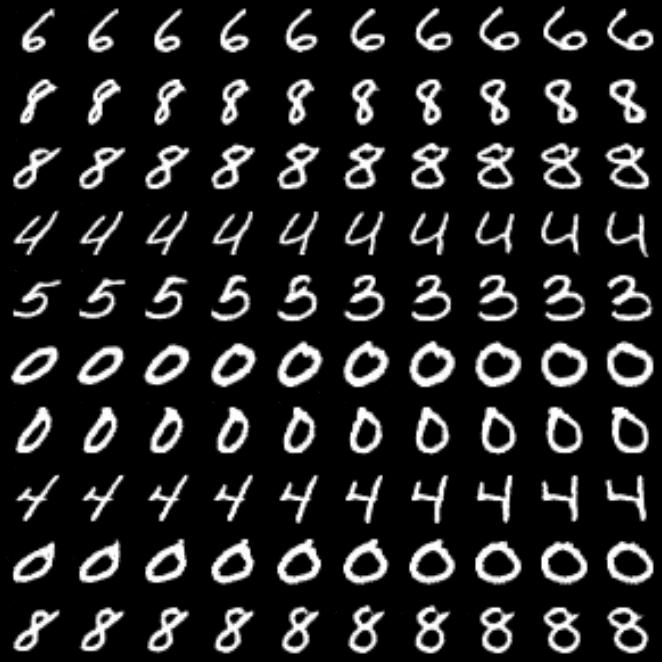

| c1 (digit type) | c2 (rotation) |

|---|---|

|

|

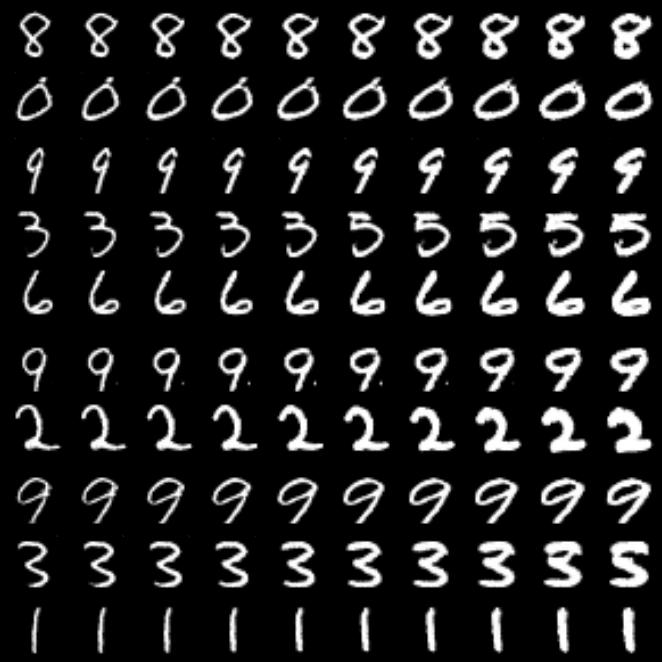

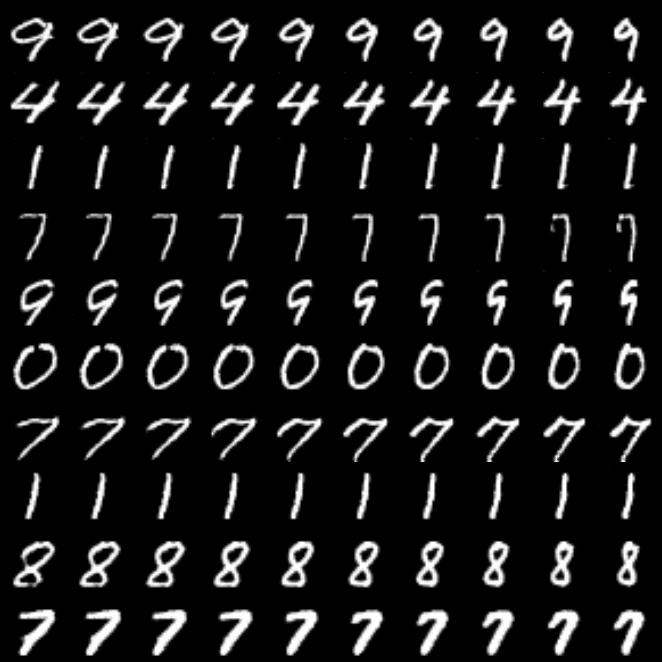

| c3 (line thickness) | c4 (digit width) |

|---|---|

|

|
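Each figure varies one latent code while the others stay fixed. A common way to build such InfoGAN traversals is to fix z, set c1 per row, and sweep one continuous code across the columns. The sketch uses only the standard library and assumes the `[z | one-hot(c1) | c2, c3, c4]` layout, a sweep range of [-2, 2], and the non-swept continuous codes held at 0. None of these settings are taken from this repository, and `traversal_grid` is a hypothetical helper.

```python
import random
from typing import List

Z_DIM, N_CATEGORIES, N_CONTINUOUS = 64, 10, 3


def linspace(start: float, stop: float, num: int) -> List[float]:
    """`num` evenly spaced values from start to stop, both ends included."""
    return [start + (stop - start) * i / (num - 1) for i in range(num)]


def traversal_grid(code_index: int, num_cols: int = 10,
                   low: float = -2.0, high: float = 2.0,
                   seed: int = 0) -> List[List[List[float]]]:
    """Return an N_CATEGORIES x num_cols grid of generator inputs.

    Row r fixes c1 to category r. Column j sets one continuous code
    (code_index 0 -> c2, 1 -> c3, 2 -> c4) to the j-th value in [low, high].
    z is shared by every cell and the other continuous codes stay at 0,
    so only the swept code changes along a row.
    """
    rng = random.Random(seed)
    z = [rng.gauss(0.0, 1.0) for _ in range(Z_DIM)]
    grid = []
    for category in range(N_CATEGORIES):
        c1 = [1.0 if k == category else 0.0 for k in range(N_CATEGORIES)]
        row = []
        for value in linspace(low, high, num_cols):
            c_cont = [0.0] * N_CONTINUOUS
            c_cont[code_index] = value
            row.append(z + c1 + c_cont)
        grid.append(row)
    return grid


grid = traversal_grid(code_index=0)  # sweep c2 (rotation in the MNIST figure)
print(len(grid), len(grid[0]))  # 10 10
```

Feeding every cell through the trained generator and tiling the outputs row by row gives an image grid like the ones shown.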

Requirements:

- Python (~3.6)

Run `make setup` to set up the environment.

- Start training:

  `python src/train.py --config <config.yaml>`

All training settings must be specified in a YAML file. Example files are placed under `configs/`. If you just want to try training, my debugging configuration is available:

`make debug`
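A minimal sketch of how a `--config` flag and a YAML settings file can be wired together. This is not the repository's `src/train.py`. The required key names are hypothetical, and loading assumes PyYAML's `yaml.safe_load`.

```python
import argparse
from typing import Any, Dict, List, Optional

# Hypothetical key names for illustration; the real schema is whatever the
# example files under configs/ define.
REQUIRED_KEYS = ("batchsize", "epochs")


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse the single --config option used by the training command."""
    parser = argparse.ArgumentParser(description="Train InfoGAN")
    parser.add_argument("--config", required=True,
                        help="path to a YAML file holding all training settings")
    return parser.parse_args(argv)


def validate_config(cfg: Dict[str, Any]) -> Dict[str, Any]:
    """Fail early if a required setting is missing from the loaded config."""
    missing = [key for key in REQUIRED_KEYS if key not in cfg]
    if missing:
        raise ValueError("missing config keys: " + ", ".join(missing))
    return cfg


def load_config(path: str) -> Dict[str, Any]:
    """Read a YAML file with PyYAML and validate it."""
    import yaml  # PyYAML, imported lazily so the helpers above run without it

    with open(path, encoding="utf-8") as f:
        # safe_load returns None for an empty file; treat that as no settings.
        return validate_config(yaml.safe_load(f) or {})


args = parse_args(["--config", "my_config.yaml"])  # hypothetical file name
print(args.config)  # my_config.yaml
```

In a real entry point you would call `load_config(parse_args().config)`. Validating up front means a typo in the YAML fails immediately rather than partway through training.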

- Open TensorBoard:

  `make tb`

Training metrics (e.g., loss) are printed to the console and logged to TensorBoard. By default, TensorBoard watches the `./results` directory. To change the path, run `tensorboard --logdir <path>` or edit the `Makefile`.
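The following sketches the logging pattern described above, assuming PyTorch's `torch.utils.tensorboard.SummaryWriter` (the repository's own logging code is not shown here). The run-directory naming and the metric tags are hypothetical. TensorBoard scans its logdir recursively, so writing each run into its own subdirectory of `./results` lets the default `make tb` show it as a separate run.

```python
import os
import time
from typing import Any, Dict, Optional

RESULTS_ROOT = "./results"  # the directory TensorBoard watches by default


def make_run_dir(root: str = RESULTS_ROOT, name: Optional[str] = None) -> str:
    """Create and return a per-run subdirectory under `root`.

    The timestamp naming scheme is a hypothetical choice.
    """
    name = name or time.strftime("%Y%m%d-%H%M%S")
    path = os.path.join(root, name)
    os.makedirs(path, exist_ok=True)
    return path


def log_metrics(writer: Any, metrics: Dict[str, float], step: int) -> None:
    """Write each scalar to TensorBoard and echo it to the console."""
    for tag, value in metrics.items():
        writer.add_scalar(tag, value, step)
    print(f"step {step}: " + ", ".join(f"{k}={v:.4f}" for k, v in metrics.items()))


# Usage inside a training loop (requires PyTorch with TensorBoard support):
# from torch.utils.tensorboard import SummaryWriter
# writer = SummaryWriter(log_dir=make_run_dir())
# log_metrics(writer, {"loss/D": d_loss, "loss/G": g_loss}, step)
# writer.close()
```

`log_metrics` accepts any object with an `add_scalar(tag, value, step)` method, so it can be exercised without TensorBoard installed.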

- Upload results for the MNIST dataset.
- Upload results for the Fashion-MNIST dataset.
- Add automatic hyperparameter tuning with Optuna.