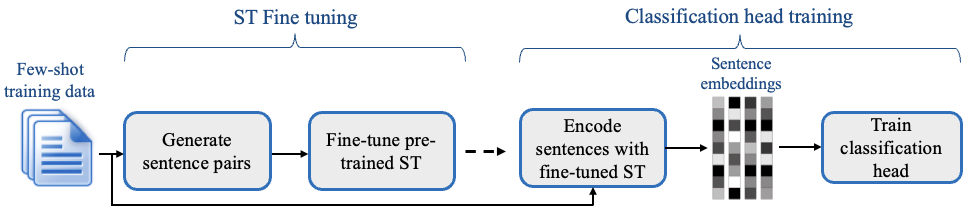

We introduce SetFit, an efficient and prompt-free framework for few-shot fine-tuning of Sentence Transformers. Compared to other few-shot learning methods, SetFit has several unique features:

- 📈 High accuracy with little labeled data: SetFit achieves results comparable to (or better than) current state-of-the-art methods for text classification. For example, with only 8 labeled examples per class on the CR sentiment dataset, SetFit is competitive with fine-tuning RoBERTa-large on the full training set of 3k examples.

- 🗣 No prompts or verbalisers: Current techniques for few-shot fine-tuning require handcrafted prompts or verbalisers to convert examples into a format that's suitable for the underlying language model. SetFit dispenses with prompts altogether by generating rich embeddings directly from text examples.

- 🏎 Fast to train: SetFit doesn't require large-scale models like T0 or GPT-3 to achieve high accuracy. As a result, it is typically an order of magnitude (or more) faster to train and run inference with.

Download and install `setfit` by running:

```bash
cd setfit
python -m pip install .
```

`setfit` is integrated with the Hugging Face Hub and provides two main classes:

- `SetFitModel`: a wrapper that combines a pretrained body from `sentence_transformers` and a classification head from `scikit-learn`

- `SetFitTrainer`: a helper class that wraps the fine-tuning process of SetFit.
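The fine-tuning that `SetFitTrainer` wraps is contrastive: the Sentence Transformer body is trained on pairs of sentences, where same-class pairs get a high target similarity and different-class pairs get a low one. The sketch below illustrates how such pairs could be built from a handful of labeled examples. It is a simplified, standard-library-only illustration and not the library's own sampling code. The function name `generate_pairs`, the toy sentences, and the rule of one positive and one negative pair per example per iteration are assumptions made for this example.

```python
import random


def generate_pairs(texts, labels, num_iterations, seed=0):
    """Build (text_a, text_b, target) triples for contrastive fine-tuning.

    target is 1.0 when both texts share a label and 0.0 otherwise.
    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(num_iterations):
        for i, (text, label) in enumerate(zip(texts, labels)):
            # Candidates with the same label, excluding the example itself
            same = [t for j, (t, l) in enumerate(zip(texts, labels)) if l == label and j != i]
            # Candidates with any other label
            different = [t for t, l in zip(texts, labels) if l != label]
            if same:
                pairs.append((text, rng.choice(same), 1.0))
            if different:
                pairs.append((text, rng.choice(different), 0.0))
    return pairs


# Toy data (assumed): two positive and two negative reviews
texts = ["great phone", "love this camera", "battery died fast", "screen broke in a week"]
labels = [1, 1, 0, 0]

pairs = generate_pairs(texts, labels, num_iterations=2)
for text_a, text_b, target in pairs[:4]:
    print(f"{target:.1f}  {text_a!r} <-> {text_b!r}")
```

Triples of this shape are what a loss such as `CosineSimilarityLoss` consumes: two texts plus a target similarity. In this sketch, 4 examples and 2 iterations yield 16 pairs, so even a very small labeled set produces a much larger contrastive training set.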

Here is an end-to-end example:

```python
from datasets import load_dataset
from sentence_transformers.losses import CosineSimilarityLoss

from setfit import SetFitModel, SetFitTrainer

# Load a dataset from the Hugging Face Hub
dataset = load_dataset("emotion")

# Simulate the few-shot regime by sampling 8 * num_classes examples
# (a random draw, so each class is not guaranteed exactly 8 examples)
num_classes = 6
train_ds = dataset["train"].shuffle(seed=42).select(range(8 * num_classes))
test_ds = dataset["test"]

# Load SetFit model from Hub
model = SetFitModel.from_pretrained("sentence-transformers/paraphrase-mpnet-base-v2")

# Create trainer
trainer = SetFitTrainer(
    model=model,
    train_dataset=train_ds,
    eval_dataset=test_ds,
    loss_class=CosineSimilarityLoss,
    batch_size=16,
    num_iterations=20,  # The number of text pairs to generate
)

# Train and evaluate
trainer.train()
metrics = trainer.evaluate()

# Push model to the Hub
trainer.push_to_hub("my-awesome-setfit-model")
```

For more examples, check out the `notebooks/` folder.
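The example above draws its few-shot training set by shuffling and taking the first 48 rows, which does not guarantee the same number of examples for every class. If an exactly balanced sample is wanted, a per-class draw could look like the sketch below. It uses only the standard library and plain dictionaries with `text` and `label` fields as a stand-in for dataset rows. The helper name `sample_per_class` and the toy data are assumptions for illustration, not part of `setfit` or `datasets`.

```python
import random
from collections import defaultdict


def sample_per_class(examples, num_samples, seed=42):
    """Pick up to `num_samples` random examples for each label.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for example in examples:
        by_label[example["label"]].append(example)

    sampled = []
    for label in sorted(by_label):
        group = by_label[label]
        # A class with fewer than num_samples rows contributes all of them
        sampled.extend(rng.sample(group, min(num_samples, len(group))))
    return sampled


# Toy imbalanced data (assumed): 20 rows with label 0 and 5 rows with label 1
examples = [{"text": f"example {i}", "label": 0 if i < 20 else 1} for i in range(25)]

few_shot = sample_per_class(examples, num_samples=8)

counts = defaultdict(int)
for example in few_shot:
    counts[example["label"]] += 1
print(dict(counts))  # {0: 8, 1: 5}
```

Capping at the class size keeps the helper usable on rare classes, at the cost of a slightly unbalanced result when a class has fewer rows than requested.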

We provide scripts to reproduce the results for SetFit and various baselines presented in Table 2 of our paper. Check out the setup and training instructions in the `scripts/` directory.

To run the code in this project, first create a Python virtual environment using e.g. Conda:

```bash
conda create -n setfit python=3.9 && conda activate setfit
```

Then install the base requirements with:

```bash
python -m pip install -e '.[dev]'
```

This will install `datasets` and packages like `black` and `isort` that we use to ensure consistent code formatting. Next, go to one of the dedicated baseline directories and install the extra dependencies, e.g.

```bash
cd scripts/setfit
python -m pip install -r requirements.txt
```

We use `black` and `isort` to ensure consistent code formatting. After following the installation steps, you can check your code locally by running:

```bash
make style && make quality
```

```
├── LICENSE
├── Makefile        <- Makefile with commands like `make style` or `make tests`
├── README.md       <- The top-level README for developers using this project.
├── notebooks       <- Jupyter notebooks.
├── final_results   <- Model predictions from the paper
├── scripts         <- Scripts for training and inference
├── setup.cfg       <- Configuration file to define package metadata
├── setup.py        <- Make this project pip installable with `pip install -e`
├── src             <- Source code for SetFit
└── tests           <- Unit tests
```
