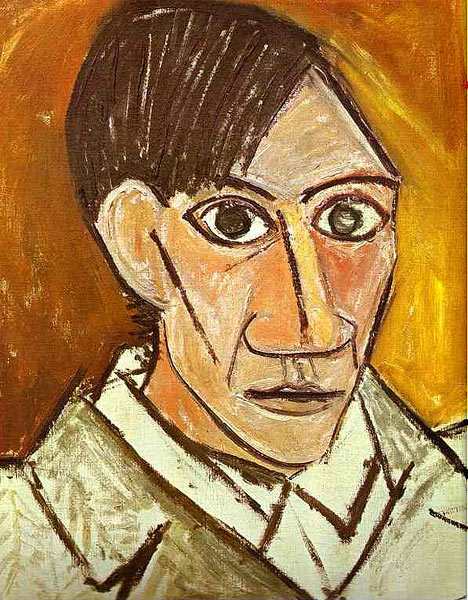

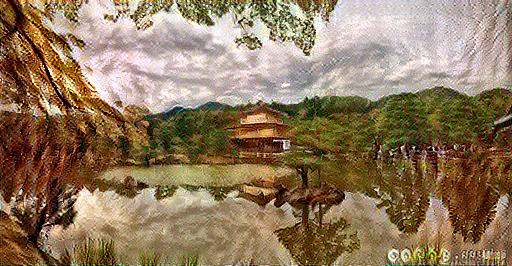

Neural Style Transfer

Requirements:

- TensorFlow (code compatible with the 2.0 alpha and 1.1x versions)

- Scikit-learn

- Pandas

- NumPy

- Matplotlib

- Pillow

- functools (Python standard library)

Key points:

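1. A VGG19 model pretrained on ImageNet is used as the feature extractor, which avoids the time needed to learn image features from scratch.

2. Style and content layers are selected from the VGG19 model. Their activations are used to compute the style loss and the content loss, respectively.

3. The content loss is the squared Euclidean distance (mean squared error) between the content-layer feature maps of the generated image and those of the content image.

4. The style loss compares the Gram matrices of the style-layer feature maps of the generated image and the style image, using the mean squared difference between them.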
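The content and style losses described in points 3 and 4 can be sketched as follows. This is a minimal NumPy illustration, not the repository's TensorFlow code. It assumes each feature map holds one image's activations with shape (height, width, channels), normalises the Gram matrix by the number of spatial positions, and averages each loss over its layers (one simple way to combine several content layers). The function names and the `content_weight` / `style_weight` defaults are hypothetical placeholders.

```python
import numpy as np


def gram_matrix(features):
    """Gram matrix of a (height, width, channels) feature map.

    Flattens the spatial dimensions and takes channel-by-channel inner
    products, normalised by the number of spatial positions.
    """
    channels = features.shape[-1]
    flat = features.reshape(-1, channels)  # (H*W, C)
    return flat.T @ flat / flat.shape[0]   # (C, C)


def content_loss(generated, content):
    # Mean squared (Euclidean) distance between two content-layer feature maps.
    return np.mean(np.square(generated - content))


def style_loss(generated, style):
    # Mean squared difference between the Gram matrices of two style-layer feature maps.
    return np.mean(np.square(gram_matrix(generated) - gram_matrix(style)))


def total_loss(gen_content_feats, content_feats, gen_style_feats, style_feats,
               content_weight=1e3, style_weight=1e-2):
    # Hypothetical weighting: average each loss over its layers, then combine.
    c = np.mean([content_loss(g, t) for g, t in zip(gen_content_feats, content_feats)])
    s = np.mean([style_loss(g, t) for g, t in zip(gen_style_feats, style_feats)])
    return content_weight * c + style_weight * s
```

In the actual pipeline the feature maps come from the selected VGG19 layers, and the gradient of the total loss with respect to the generated image drives the optimisation; that step needs TensorFlow and is not shown here.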

The major changes from the original source (see the links below) include:

- Additional visualizations every 10 epochs

- Compatibility with TensorFlow 2.0

- Additional content layers

- Changes to helper functions

- https://medium.com/tensorflow/neural-style-transfer-creating-art-with-deep-learning-using-tf-keras-and-eager-execution-7d541ac31398

- https://github.com/vinayak19th/Neural-Style-Transfer