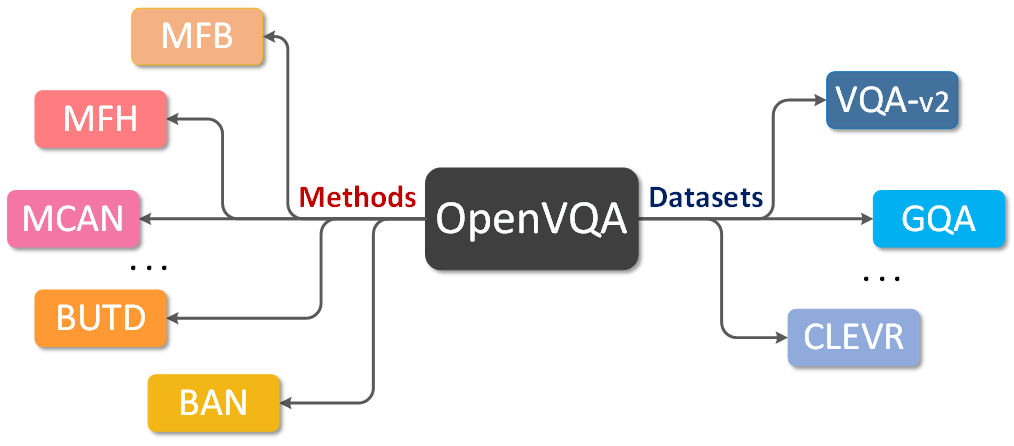

OpenVQA is a general platform for visual question answering (VQA) research. It implements state-of-the-art approaches (e.g., BUTD, MFH, BAN, MCAN and MMNasNet) on benchmark datasets such as VQA-v2, GQA and CLEVR. Support for more methods and datasets will be added continuously.

Get started and learn more about OpenVQA here.

Supported methods and benchmark datasets are shown in the table below. Results and models are available in the MODEL ZOO.

| Method | VQA-v2 | GQA | CLEVR |
|---|---|---|---|
| BUTD | ✓ | ✓ | |
| MFB | ✓ | | |
| MFH | ✓ | | |
| BAN | ✓ | ✓ | |
| MCAN | ✓ | ✓ | ✓ |
| MMNasNet | ✓ | | |

- Add support and pre-trained models for the approaches on CLEVR.
- Add support and pre-trained models for the approaches on GQA.
- Add a document that tells developers how to add a new model to OpenVQA.
- Refactor the documentation and use Sphinx to build it.
- Implement the basic framework for OpenVQA.
- Add support and pre-trained models for BUTD, MFB, MFH, BAN and MCAN on VQA-v2.

This project is released under the Apache 2.0 license.

This repo is currently maintained by Zhou Yu (@yuzcccc) and Yuhao Cui (@cuiyuhao1996).

If this repository is helpful for your research, or you want to refer to the results provided in the model zoo, you can cite the work using the following BibTeX entry:

    @misc{yu2019openvqa,
      author = {Yu, Zhou and Cui, Yuhao and Shao, Zhenwei and Gao, Pengbing and Yu, Jun},
      title = {OpenVQA},
      howpublished = {\url{https://github.com/MILVLG/openvqa}},
      year = {2019}
    }